Differentiation reveals much more than the slope of the tangent line.

We usually think of it that way, but from another angle, differentiation is the same as approximating a function with a linear one. This view lets us generalize the concept greatly.

Let's see why! ↓

By definition, the derivative of a function at the point 𝑎 is the limit of the difference quotient, which represents the rate of change.

In geometric terms, the difference quotient is the slope of the line through two points of the function's graph (a secant line).
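
A minimal numerical sketch of this picture, assuming the illustrative function 𝑥² at 𝑎 = 1 (not taken from the thread): the secant slopes equal 2 + ℎ, so they approach the derivative 2 as ℎ shrinks. The names `difference_quotient` and `square` are hypothetical helpers.

```python
def difference_quotient(f, a, h):
    """Slope of the secant line through (a, f(a)) and (a + h, f(a + h))."""
    return (f(a + h) - f(a)) / h


def square(x):
    # Illustrative example function; its derivative at a = 1 is 2.
    return x * x


# The secant slopes are 2 + h (up to rounding), approaching 2 as h shrinks.
for h in [1.0, 0.1, 0.01, 0.001]:
    print(h, difference_quotient(square, 1.0, h))
```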

However, differentiation can be formulated in another way.

We can write the difference quotient as the derivative plus an error term (if the derivative exists).

With a bit of algebra, we find that around 𝑎, we can replace our function with a linear function. The derivative gives the coefficient of the 𝑥 term.
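
Written out in symbols, a sketch of that algebra: write the difference quotient as the derivative plus an error term 𝜀(𝑥) that tends to 0, then multiply both sides by (𝑥 - 𝑎).

```latex
\frac{f(x) - f(a)}{x - a} = f'(a) + \varepsilon(x), \qquad \varepsilon(x) \to 0 \text{ as } x \to a

f(x) = f(a) + f'(a)(x - a) + \varepsilon(x)(x - a) = f(a) + f'(a)(x - a) + o(|x - a|)
```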

(The term 𝑜(|𝑥-𝑎|) means that it goes to 0 faster than |𝑥-𝑎| as 𝑥 → 𝑎, that is, its ratio with |𝑥-𝑎| tends to 0. This is called little-o notation.)
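
A quick numerical check of the little-o claim, assuming exp around 𝑎 = 0 as an illustrative example (its derivative there is 1, so the linear approximation is 1 + 𝑥). The error divided by |𝑥| should tend to 0; here it is roughly 𝑥/2 for small positive 𝑥. The helper name is hypothetical.

```python
import math


def linear_approx_error_ratio(x):
    """Error of the linear approximation 1 + x to exp near a = 0, divided by |x - 0|."""
    error = math.exp(x) - (1.0 + x)
    return abs(error) / abs(x)


# The ratio shrinks toward 0 as x approaches 0: the error is o(|x|).
for x in [0.1, 0.01, 0.001]:
    print(x, linear_approx_error_ratio(x))
```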

So, the derivative is the first-order coefficient of the best linear approximation. Why is this good for us? There are two main reasons:

1) this gives a template to explain higher-order derivatives,

2) and one can easily extend the formula for multivariable functions.

Let's talk about higher-order derivatives first.

Going further with the idea, we might ask: which second-order polynomial best approximates our function around a given point?

It turns out that we can continue our formula with the help of the second derivative.
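
In symbols, the second-order version reads as follows (note the factor 1/2 = 1/2! in front of the second derivative, the factorial mentioned in the correction at the end of the thread):

```latex
f(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2!}(x - a)^2 + o\big(|x - a|^2\big)
```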

In general, if the function has enough derivatives, we can continue this expansion to any order. Near 𝑎, the more terms you use, the faster the error vanishes.
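
The general form, assuming 𝑓 is 𝑛 times differentiable at 𝑎 (this is Taylor's theorem with the remainder written in little-o form):

```latex
f(x) = \sum_{k=0}^{n} \frac{f^{(k)}(a)}{k!}(x - a)^k + o\big(|x - a|^n\big)
```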

This is called the Taylor polynomial, one of the most powerful tools in mathematics.

I'll show you an example to see why.

Have you ever wondered what happens when you compute the sine of a number on a pocket calculator?

Since sin is a transcendental function, it cannot be computed exactly with finitely many arithmetic operations, so it is replaced with an approximation, such as its Taylor expansion that you can see below.
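
A minimal sketch of the idea, summing the Taylor series of sin around 0, 𝑥 - 𝑥³/3! + 𝑥⁵/5! - …, term by term. Real calculators may use other methods (for example range reduction followed by CORDIC or tuned polynomials), so this only illustrates the Taylor approach; `sin_taylor` is a hypothetical name. At 𝑥 = 1 the error drops with every added term.

```python
import math


def sin_taylor(x, n_terms):
    """Sum the first n_terms nonzero terms of the Taylor series of sin around 0."""
    total = 0.0
    for k in range(n_terms):
        power = 2 * k + 1
        # Terms alternate in sign: +x, -x**3/3!, +x**5/5!, ...
        total += (-1) ** k * x ** power / math.factorial(power)
    return total


x = 1.0
for n in range(1, 7):
    approx = sin_taylor(x, n)
    print(n, approx, abs(approx - math.sin(x)))
```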

Now let's talk about the generalization of differentiation to multiple dimensions.

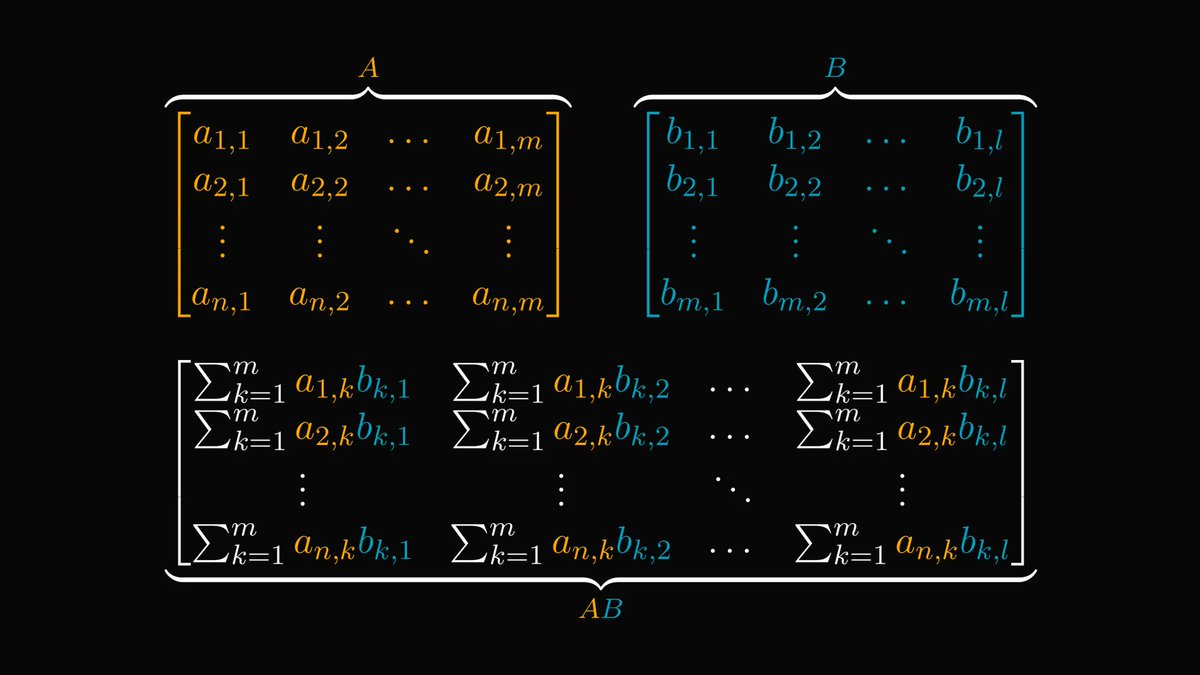

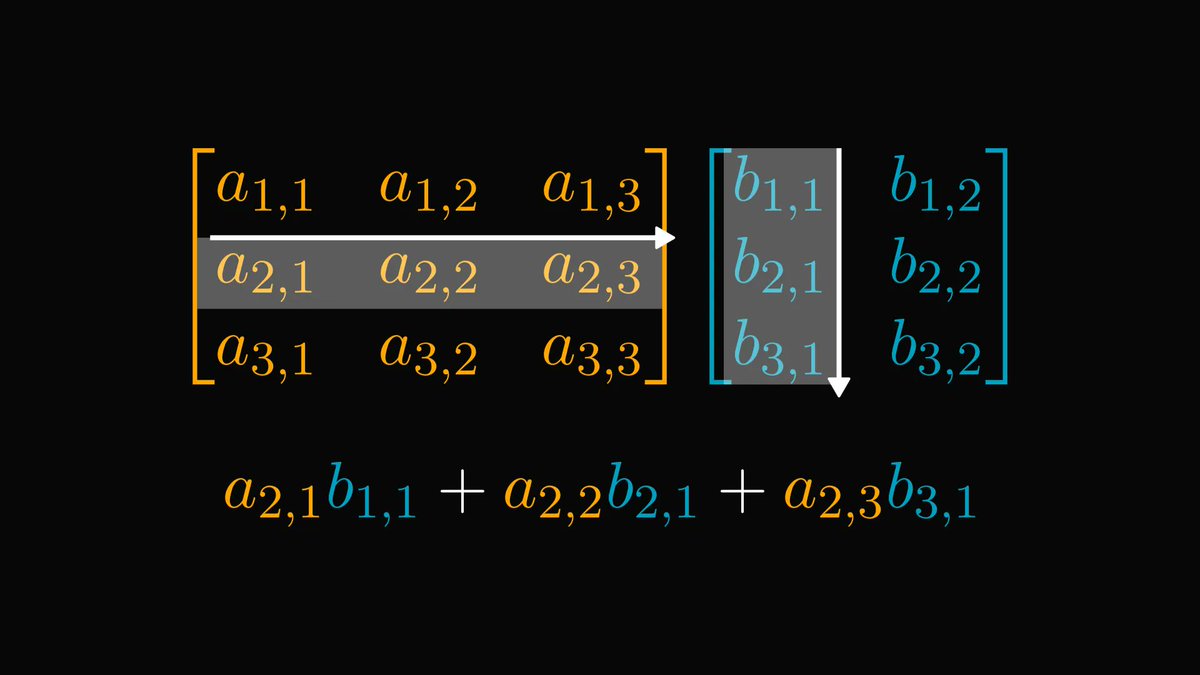

How would you define the derivative of a multivariable function? The most straightforward way would be as below, but there is a problem: division is not defined for vectors.

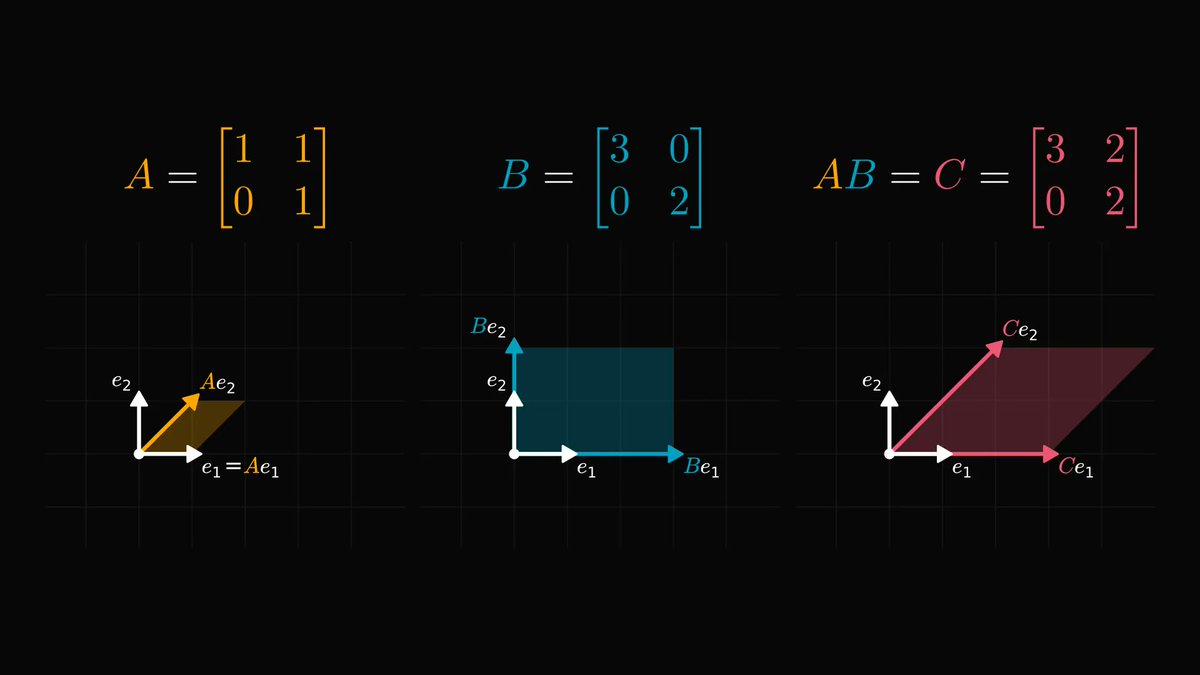

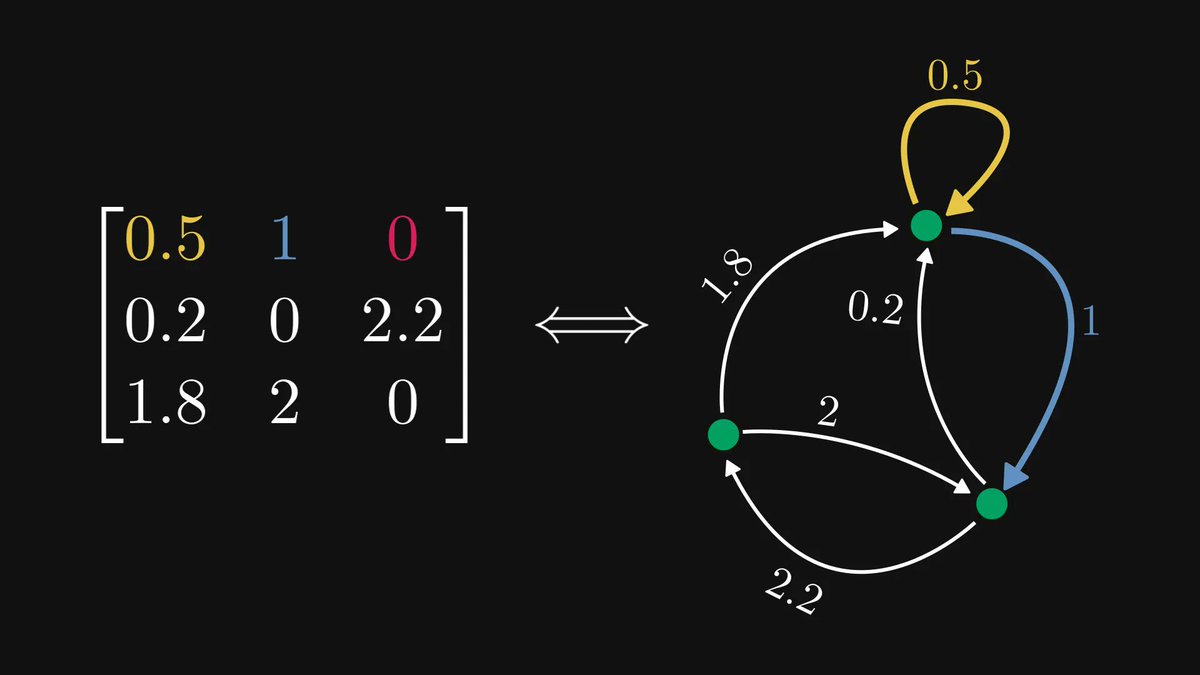

However, the definition offered by the best approximating linear function can be easily generalized!
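
In symbols, for 𝑓: ℝⁿ → ℝ the same template avoids dividing by a vector: we ask for a vector ∇𝑓(𝑎) such that the dot product with (𝑥 - 𝑎) gives the best linear approximation, with an error that vanishes faster than the distance ‖𝑥 - 𝑎‖.

```latex
f(x) = f(a) + \nabla f(a) \cdot (x - a) + o\big(\lVert x - a \rVert\big)
```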

The gradient (the multivariate "derivative") is the vector that gives the best linear approximation around a given point.
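
A minimal numerical sketch, assuming the illustrative function 𝑥² + 3𝑥𝑦 (gradient (2𝑥 + 3𝑦, 3𝑥), so (8, 3) at (1, 2)). It estimates the gradient with central finite differences, a numerical technique the thread does not discuss, and compares 𝑓 with its linear approximation at a nearby point. The helper names and the step `h` are hypothetical choices.

```python
def numerical_gradient(f, a, h=1e-6):
    """Approximate the gradient of f: R^n -> R at point a with central differences."""
    grad = []
    for i in range(len(a)):
        forward = list(a)
        backward = list(a)
        forward[i] += h
        backward[i] -= h
        grad.append((f(forward) - f(backward)) / (2 * h))
    return grad


def linear_approximation(f, a, x):
    """Evaluate f(a) + grad f(a) . (x - a) using the numerical gradient."""
    grad = numerical_gradient(f, a)
    return f(a) + sum(g * (xi - ai) for g, xi, ai in zip(grad, x, a))


def example(p):
    # Illustrative function x**2 + 3*x*y; its gradient is (2x + 3y, 3x).
    x, y = p
    return x ** 2 + 3 * x * y


a = [1.0, 2.0]
print(numerical_gradient(example, a))  # approximately [8.0, 3.0]
x = [1.01, 2.02]
print(example(x), linear_approximation(example, a, x))
```

The two printed values differ only by second-order terms in the step (𝑥 - 𝑎), which is exactly what the little-o error term allows.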

Having a deep understanding of math will make you a better engineer. I want to help you with this, so I am writing a comprehensive book about the subject.

If you are interested in the details and beauty of mathematics, check out the early access!

tivadardanka.com/book

Correction! When discussing higher-order derivatives and the Taylor expansion, I sadly forgot to include one crucial part of the formulas: the factorials.

Below are the correct formulas.
