This video and accompanying article draw conclusions about the effects of gun control based almost entirely on research I co-led, yet they reached a very different conclusion than we did. Here I highlight problems that help explain these differences.

The article draws 4 conclusions that are not supported by our report. We did NOT conclude that a) all gun research is poor quality, b) the pattern of findings across studies would be expected by chance, c) the field is ideologically biased, or d) gun laws have no effect.

I believe these conclusions are incorrect and rest on logical, statistical, and factual errors.

The article suggests that there are thousands of studies of the effects of gun policy, and RAND concluded that only 0.4% of them provided credible evidence of gun policy effects. Both claims reflect misunderstandings of our report: rand.org/pubs/research_…

In fact, of the 21,686 papers we identified in our search, only 357 were RELEVANT to evaluating gun policy, and 123 of those met our inclusion rules. There is not a massive trove of bad research. There is little research on gun policy. More is needed to answer basic questions.

Our report identified methodological weakness in some studies, but the article uses these critiques to make unsupportable generalizations to the whole field. Why would the presence of flaws in a subset of studies lead us to discount evidence from the stronger ones?

Our report found that the literature does not yet support strong conclusions about the effects of many gun policies, typically because no research had been conducted on that policy, or because the data available did not result in statistically significant findings.

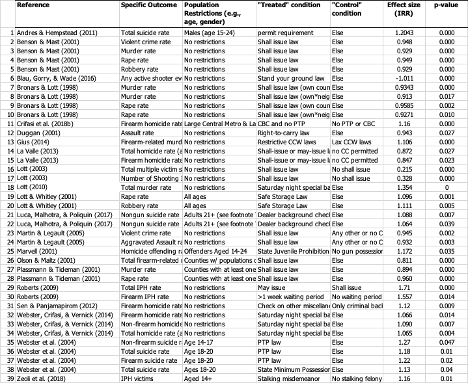

The article makes confusing claims about the statistical effects we extracted from the studies. It suggests that since we reported 722 statistical tests from this literature, our finding of evidence for just 18 gun policies is likely the result of chance alone.

As the article seems to concede, this is a misleading claim. Of the 651 unique statistical tests we identified, 163 were significant: many, many more than would be expected by chance if the laws had no effects.
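To make the "expected by chance" comparison concrete, here is a rough back-of-the-envelope sketch. It assumes a conventional 0.05 significance threshold and treats the 651 tests as independent; neither assumption comes from our report, and tests drawn from the same studies and data are certainly not fully independent, so read the result as an order-of-magnitude illustration only. The function and variable names are made up for this example.

```python
import math

def binom_log_pmf(k, n, p):
    """Log of the binomial probability of exactly k successes in n trials."""
    log_comb = math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
    return log_comb + k * math.log(p) + (n - k) * math.log(1 - p)

def binom_upper_tail(k_min, n, p):
    """Probability of at least k_min successes in n independent trials."""
    return sum(math.exp(binom_log_pmf(k, n, p)) for k in range(k_min, n + 1))

N_TESTS = 651        # unique statistical tests (from the thread)
N_SIGNIFICANT = 163  # tests reported as significant (from the thread)
ALPHA = 0.05         # ASSUMPTION: conventional threshold, not stated in the thread

# Number of "significant" results expected if no law had any effect.
expected_by_chance = N_TESTS * ALPHA

# Chance of seeing 163 or more significant results under that null,
# ASSUMING the tests are independent (a simplification).
tail = binom_upper_tail(N_SIGNIFICANT, N_TESTS, ALPHA)

print(f"Expected by chance: about {expected_by_chance:.2f} of {N_TESTS}")
print(f"Observed: {N_SIGNIFICANT}")
print(f"P(at least {N_SIGNIFICANT} by chance): {tail:.3g}")
```

Under these assumptions, chance alone would account for roughly 33 significant results, about a fifth of the 163 we observed. Correlation among tests would widen the spread around that figure but would not change the expected count.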

The article argues that our finding that only 1 of many effects favored permissive gun laws shows the field is systematically biased in favor of restrictive gun laws. But it isn't correct that we identified only 1 such effect. There are at least 39 among the 651 we extracted.

So we think the article draws a lot of incorrect conclusions. There are few studies of gun law effects, so we need more. Some are flawed, so we should give greater weight to the better ones and seek to strengthen future research.

rand.org/pubs/research_…

rand.org/pubs/research_…

When multiple studies with good methods from different research groups agree on the likely effects of a gun law, dismissing them as chance and bias seems like a biased conclusion.
