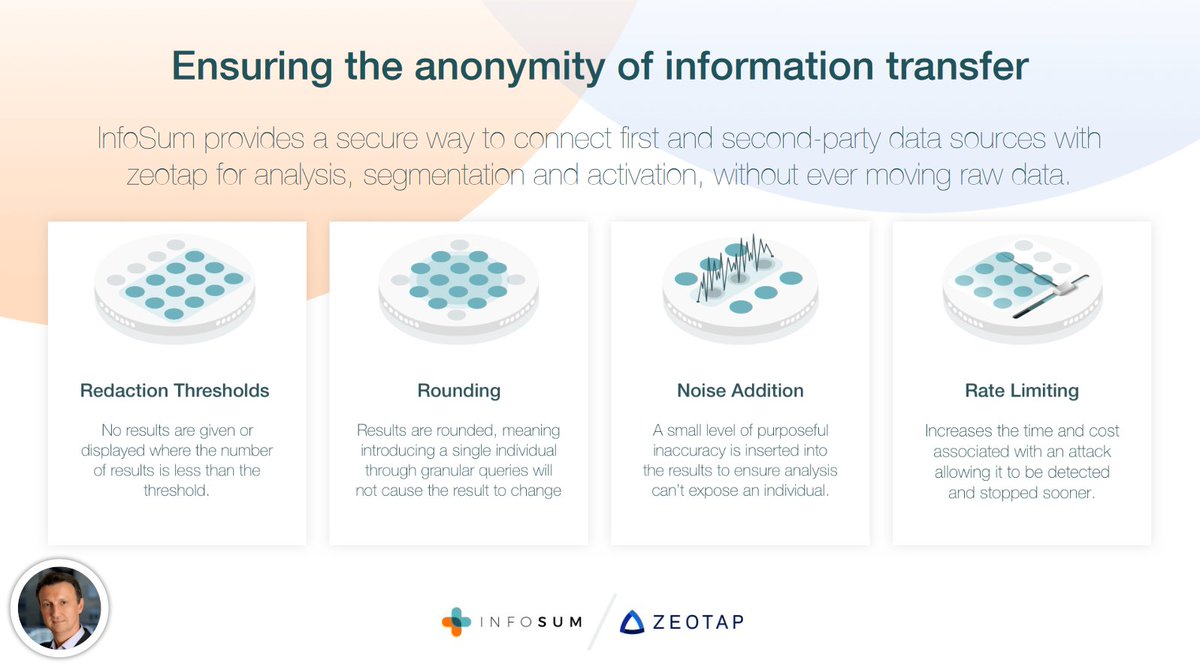

"You don't give us any data. We don't give anyone your data. We connect data without sharing it" ...magic!

hello.infosum.com/hubfs/Webinar/…

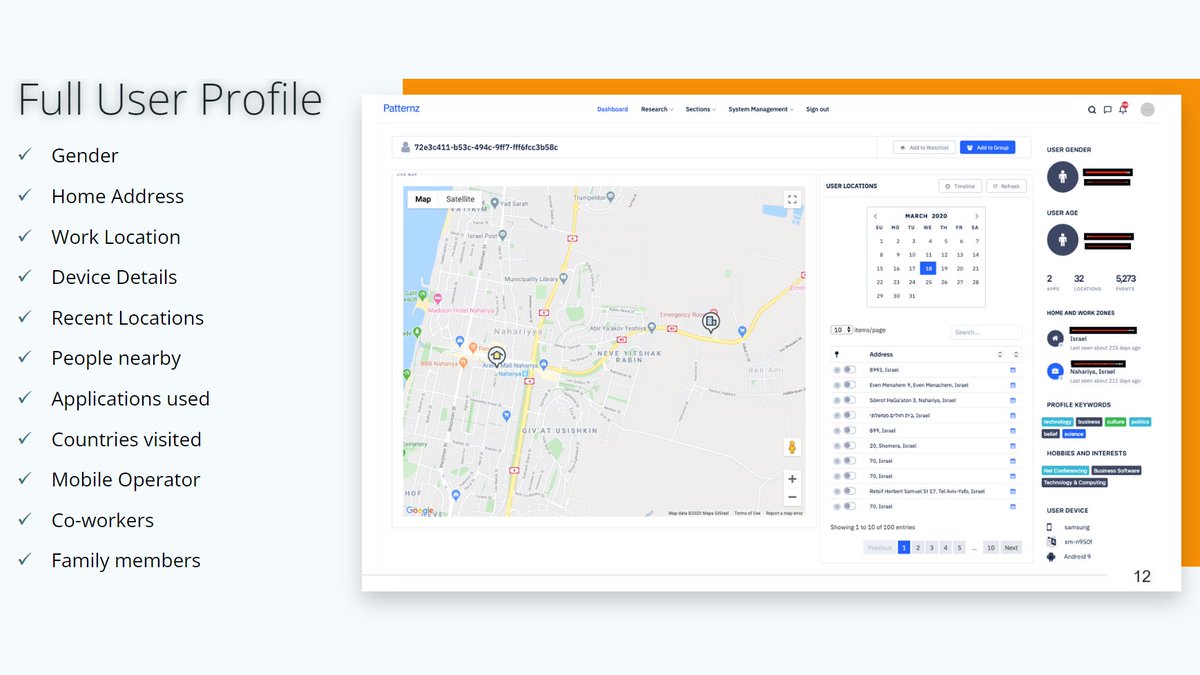

The claimed thresholds and limits don't do much; noise could help in theory. But first, I don't buy it. Second, what would it even achieve? Reducing already poor match rates by another 1%? And third, senders and recipients still process extensive personal data that is co-controlled by the 'clean room'.

'Virtual' household matching via a 'virtual' household mapping file. Perhaps the IDs, IPs and timestamps are very 'virtual', too? #personaldata #laundering