Public-interest researcher | Tech and society. Surveillance, consumer data, platform power, algo decisions, data at work | wchr@ bsky. social & mastodon. social

https://twitter.com/WolfieChristl/status/1831709488690188759

Another 2011 doc indicates that GCHQ operated a kind of probabilistic ID graph that aimed to link cookie/browser IDs, device IDs, email addresses and other 'target detection identifiers' (TDIs) based on communication, timing and geolocation behavior:

https://x.com/WolfieChristl/status/1831993431381475767

They identified people who had visited rehab clinics, swinger clubs or brothels, but also staff of ministries, the Bundeswehr, the BND and the police.

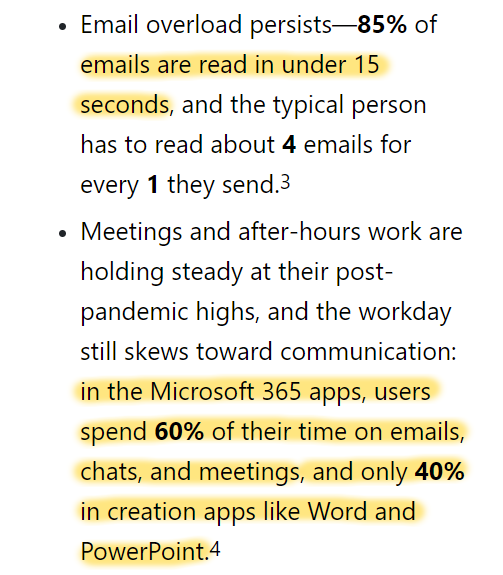

Microsoft states that the analysis of the seconds until emails were read excludes EU data. Activity data from Outlook, Teams, Word etc., however, seems to include EU data.

'Patternz' in the report by @johnnyryan and me published today:

"It calculates risk scores for each risk domain for each person", according to the promotional video, and offers "clarity and granularity for the entire US".

I extracted information about mobile location data they claim to sell per country from their website:

Near's general counsel and chief privacy officer:

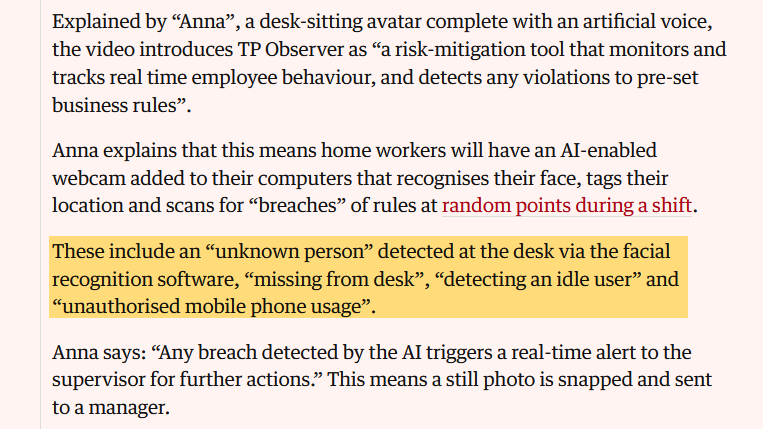

I guess hardly anyone has ever examined this kind of software at such a level of detail, from a worker perspective.

We let Google turn the web, mobile and other digital services into spaces with ubiquitous tracking and profiling. We let them delay the long overdue end of 3rd party cookies and advertising IDs forever.

It's good they took action against a web publisher, which European regulators rarely do.

This case study is part of a larger project led by Cracked Labs, which examines and maps how companies use personal data on (and against) workers in Europe, together with @algorithmwatch, @JeremiasPrassl, @UNI_Europa and others, funded by @Arbeiterkammer:

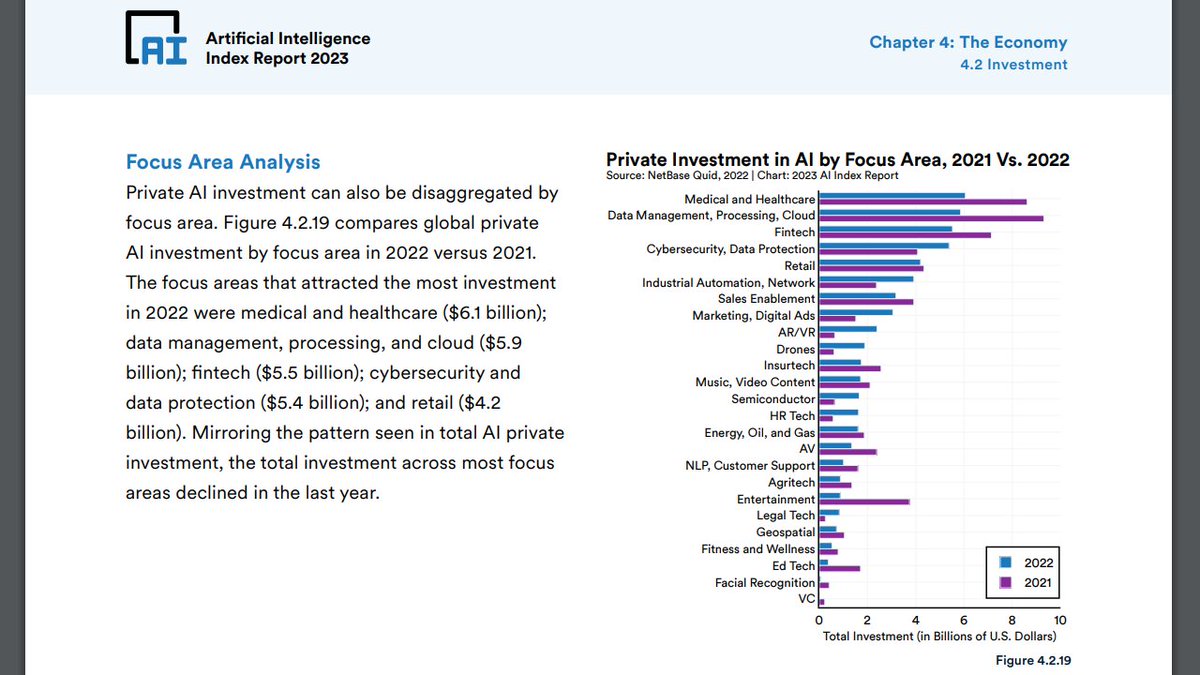

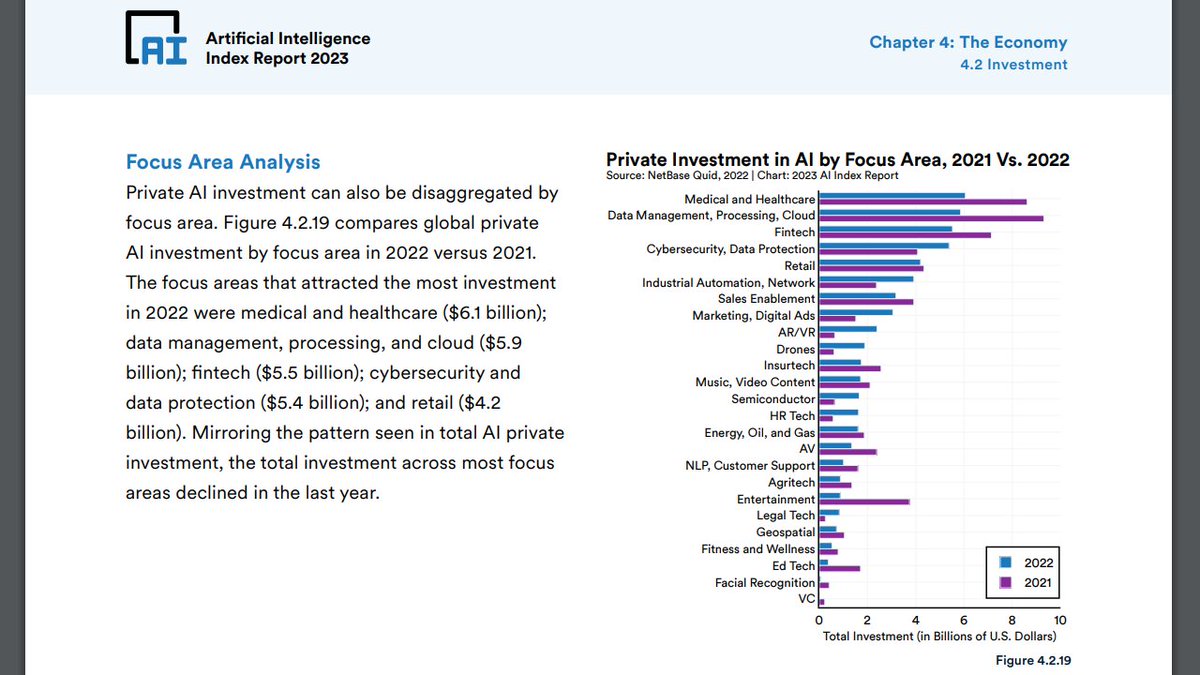

Of course there's also marketing and multimedia content, and I guess this crazy LLM hype will make money flow hard. Nevertheless, media debates on 'AI' seem to miss a lot.

T-Mobile US also claims to have "35+ industry leading, vetted data partners" (see screenshot above), which most likely means that T-Mobile US is re-selling personal information from dozens of other data brokers.
