The no. 1 question I get about #serverless is around testing - how should I test these cloud-hosted functions? Should I use local simulators? How do I run these in my CI/CD pipeline?

Here are my thoughts on this 🧵

Here are my thoughts on this 🧵

There's value in testing YOUR code locally, but don't bother with simulating AWS locally, too much effort to set up and too brittle to maintain. Seen many teams spend weeks trying to get localstack running and then waste even more time whenever it breaks in mysterious ways 😠

Much better to use temporary environments (e.g. for each feature, or even each commit). Remember, with serverless components you only pay for what you use, so these environments are essentially free 🤘

When you start a new feature, create a temp environment, e.g. "sls deploy -s my-feature" with @goserverless and then write tests that execute your function code against the real AWS services, DynamoDB tables and whatnot.

@goserverless These "integration tests" (or "sociable tests" as Martin Fowler calls them) test your code against real AWS services and catch integration problems as well as biz logic errors quickly and give you fast feedback for code changes.

@goserverless Sure, you have to deploy any infrastructure changes, like adding new DynamoDB tables, etc. before you can run these tests. But you don't have to redeploy the whole stack every time you make a code change.

@goserverless I generally think "unit tests" (what Martin Fowler calls "solitary tests") don't have a great ROI and I only write these if I have genuinely complex biz logic. Most of my functions are IO heavy and do minimal data transformation and can be covered by integration tests.

@goserverless When I am dealing with complex biz logic, I encapsulate them into modules and write unit tests for them and make sure these tests don't deal with any external dependencies. They work exclusively with domain objects.

@goserverless Once I have good confidence that my code works, I write e2e tests to check the whole system works (without the frontend) by testing the system from its external-facing interface, which can be a REST API, or an EventBridge bus, or a Kinesis data stream, or whatever.

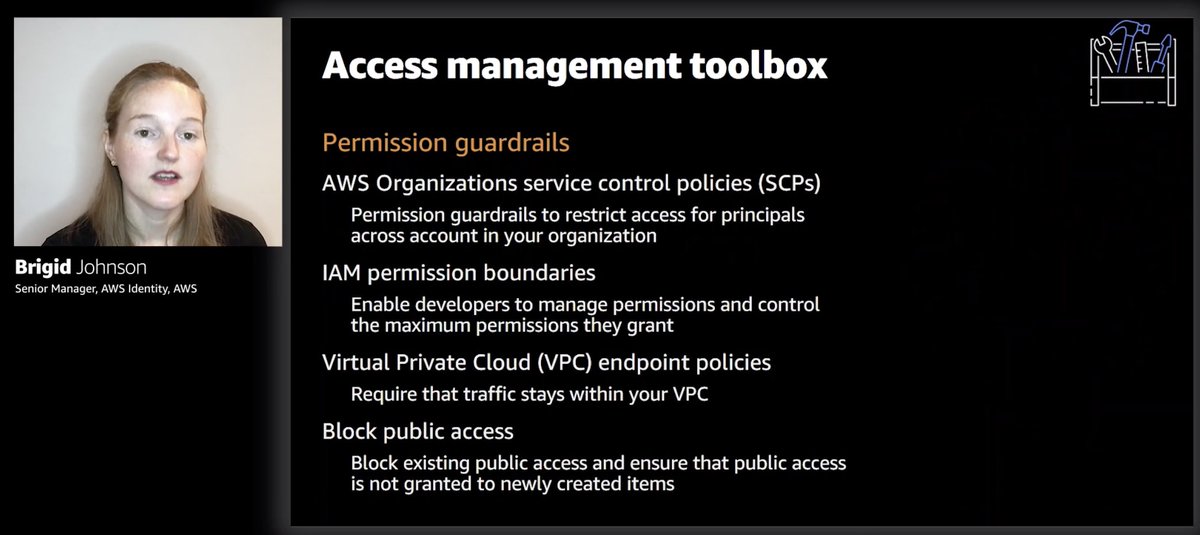

@goserverless These e2e tests would catch problems outside of my code - configurations, IAM permissions, etc. And a lot of the time, I write tests in such a way that I can reuse the same test case for both integration and e2e tests so they're not as labour intensive.

@goserverless If I'm building APIs then these e2e tests would call the deployed API and check the response. For data pipelines, they'd push events into an EventBridge bus and wait for the expected side-effect (e.g. data written to a DynamoDB table).

@goserverless Again, using temporary environments really helps here. You don't have to worry about pushing events to shared event buses that trigger lots of other stuff that you don't intend to.

theburningmonk.com/2019/09/why-yo…

theburningmonk.com/2019/09/why-yo…

@goserverless If the side-effect you're looking for is "an event is published to Kinesis/EventBridge/SNS" then it can be tricky to detect these. Check out this old post of mine on a few ways to do this.

theburningmonk.com/2019/09/how-to…

theburningmonk.com/2019/09/how-to…

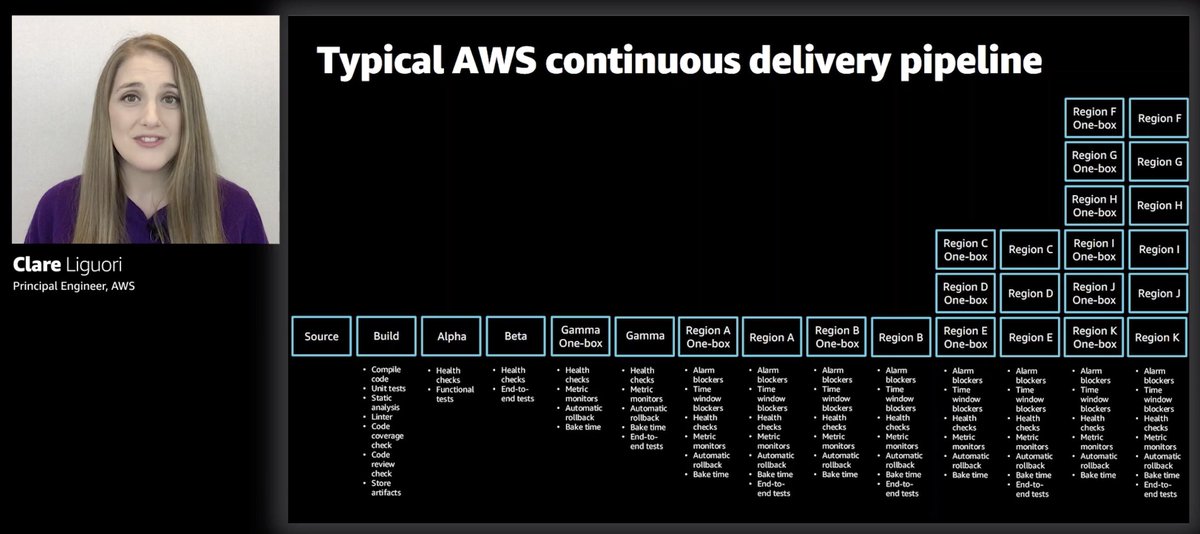

@goserverless As part of the CI/CD pipeline, create a temporary environment and run your integration and e2e tests against it. Then delete the environment after the tests. No need to clean up test data from shared environments. If the tests passed, then you deploy to the real environment.

@goserverless This approach is broadly in line with and inspired by the testing honeycomb, and I have been very happy with it. It gives me the feedback speed for small code changes and the confidence I need to operate complex applications with lots of moving parts (and therefore configures!)

@goserverless Of course, testing doesn't stop there, there's the whole "testing in production" which includes observability, canary testing, smoke testing, load testing, chaos experiments and much more. You don't need to do all of them, but having good observability is a must.

@goserverless My go-to solution is @Lumigo it takes a few mins to set up, no need for manual instrumentation and gives me everything I need to troubleshoot issues I haven't seen before. And I love the built-in dashboard, it's designed by serverless users for serverless users.

@goserverless @Lumigo If you want to see these in action and learn how to apply them in practice then check out my upcoming workshop. We're gonna cover a lot more than testing and have something for both beginners and experienced serverless devs.

& get 15% off with "yanprs15"

homeschool.dev/class/producti…

& get 15% off with "yanprs15"

homeschool.dev/class/producti…

@goserverless @Lumigo That's a wrap!

If you enjoyed this thread:

1. Follow me @theburningmonk for more of these

2. RT the tweet below to share this thread with your audience

If you enjoyed this thread:

1. Follow me @theburningmonk for more of these

2. RT the tweet below to share this thread with your audience

https://twitter.com/245821992/status/1521474752963096583

• • •

Missing some Tweet in this thread? You can try to

force a refresh