Why is HypeHype building their own engine instead of using Unreal/Unity?

We got this question at the Supercell TIMEOUT event yesterday. This is a super relevant question. And there are a lot of wrong answers to it. Let's discuss in a thread...

HypeHype is a game-making platform for touchscreen mobile devices and web (HTML5 + WebGL 2.0). There's no PC client. Games are created inside the app, and the app also allows users to upload their own assets to our cloud servers. Games load instantly (<1 sec loading time).

Let's start with data management. Traditional engines are built with the assumption that the developer uses a PC to build their game. The PC hosts the source assets and the PC cooks the standalone builds. All data converters run on the PC. This code is part of the PC editor.

In HypeHype the data management runs on a cloud server, and assets are shared between multiple games created by multiple authors. Everybody can use assets created by other people in their games. They don't need to download or install these assets. The game streams them.

The data versioning and cooking run on the cloud server. The games have low resolution mips/LODs baked in to ensure <1 sec loading times. The app itself cooks these game bundles and sends them to the server. The server can refresh them and run patchers on them.
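A rough sketch of the "low resolution mips baked in" idea, in Python for brevity. The byte budget, the uncompressed RGBA8 format and the function names are assumptions made for illustration; the thread doesn't say how HypeHype picks which mips go into a bundle. The point is only that the small tail of the mip chain is cheap enough to ship inside the game bundle, while the big top levels get streamed later.

```python
def mip_sizes(width, height, bytes_per_pixel=4):
    """Return [(level, w, h, size_in_bytes)] from full resolution down to 1x1.

    Assumes uncompressed RGBA8 (4 bytes per pixel); real bundles would
    most likely use a compressed GPU format.
    """
    levels = []
    level = 0
    while True:
        levels.append((level, width, height, width * height * bytes_per_pixel))
        if width == 1 and height == 1:
            break
        width, height = max(1, width // 2), max(1, height // 2)
        level += 1
    return levels


def split_for_bundle(width, height, budget_bytes):
    """Bake the smallest mips that fit the budget; stream the rest.

    Returns (baked_levels, streamed_levels), both sorted by mip level.
    """
    chain = mip_sizes(width, height)
    baked, used = [], 0
    # Walk from the smallest mip upwards until the budget is exhausted.
    for level, _w, _h, size in reversed(chain):
        if used + size > budget_bytes:
            break
        baked.append(level)
        used += size
    baked.reverse()
    streamed = [level for level, *_ in chain if level not in baked]
    return baked, streamed


# A 1024x1024 texture with a hypothetical 64 KiB per-texture budget.
baked, streamed = split_for_bundle(1024, 1024, 64 * 1024)
```

With these assumed numbers the bundle carries the 64x64 mip and everything below it, and the four largest levels are streamed after the game has started.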

The app can also run data patchers itself, as we want to avoid patching all games on the server every time something changes in the data model. There are already 250,000 games and the platform is only in early access in the Philippines and Finland. Distributing the cost works better.
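A minimal sketch of what a patcher chain like this could look like, again in Python. The `version` field, the two patchers and the field names are hypothetical; the thread doesn't describe the actual data model. Each patcher upgrades a game document by exactly one version, so the same code can run in the app or on the server, and an old game only pays the cost when somebody opens it.

```python
from typing import Callable, Dict

Doc = dict
PATCHERS: Dict[int, Callable[[Doc], Doc]] = {}
LATEST_VERSION = 3  # hypothetical current data model version


def patcher(from_version):
    """Register a function that upgrades from_version to from_version + 1."""
    def register(fn):
        PATCHERS[from_version] = fn
        return fn
    return register


@patcher(1)
def rename_name_to_title(doc):
    # Assumed change in v2: the "name" field was renamed to "title".
    out = dict(doc)
    out["title"] = out.pop("name")
    return out


@patcher(2)
def add_default_tags(doc):
    # Assumed change in v3: every game gets a "tags" list.
    out = dict(doc)
    out.setdefault("tags", [])
    return out


def upgrade(doc):
    """Apply patchers one version at a time until the document is current."""
    doc = dict(doc)  # never mutate the caller's copy
    while doc["version"] < LATEST_VERSION:
        version = doc["version"]
        doc = PATCHERS[version](doc)
        doc["version"] = version + 1
    return doc


old_game = {"version": 1, "name": "My Game"}
new_game = upgrade(old_game)
```

Because every step is small and ordered, a game saved years ago can still be brought forward by replaying the chain, which is the kind of long-term compatibility the next tweet is about.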

Since we are planning to maintain this system for 10+ years, we care a lot about our data integrity. Public game engines are not designed to be 100% data compatible over a 10+ year period. We can't afford data compatibility issues when updating an engine over the years.

The other big topic is the tools. People license a third-party engine because it gives them great tools for building the levels. This gives them a big productivity boost and allows their artists to start building the levels early.

All content in HypeHype is built in the touchscreen app. Our team is not building the levels. The users are. Traditional engines don't offer any level creation tools that would compile for touchscreen Android/iOS devices. Their tools are designed for PC.

The Ubisoft/RedLynx Trials series and the Frogmind Badland series are both physics-based games with in-game level creation tools. These user-facing tools are great, since the team used them to build their levels too. Dogfooding is the best way to build quality tools.

The level designers working on these game projects loved the level creation tools. The iteration time was super good. You could instantly switch between game<->edit mode on the device. All Badland levels were built on iPad and Trials levels on Xbox consoles.

HypeHype has collaborative online editing on the device. Multiple people can build together in real time. People can spectate creators building the game and chat with the creators. The server ensures data validity and prevents multiple people from modifying the same objects.
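One simple way a server can stop two people from modifying the same object is per-object ownership, sketched below in Python. This is an illustration under assumptions, not HypeHype's actual protocol: `EditSession`, the user and object ids and the dict-based scene are all made up for the example.

```python
class EditSession:
    """Server-side table of which user currently holds which object."""

    def __init__(self):
        self._owner = {}  # object_id -> user_id

    def try_lock(self, user_id, object_id):
        # The first user to ask becomes the owner; everyone else is refused.
        holder = self._owner.setdefault(object_id, user_id)
        return holder == user_id

    def release(self, user_id, object_id):
        # Only the current owner can give the object back.
        if self._owner.get(object_id) == user_id:
            del self._owner[object_id]

    def apply_edit(self, user_id, object_id, scene, changes):
        # The server rejects edits from anyone who doesn't hold the object.
        if self._owner.get(object_id) != user_id:
            return False
        scene.setdefault(object_id, {}).update(changes)
        return True


session = EditSession()
scene = {}
alice_has_crate = session.try_lock("alice", "crate")  # alice grabs the crate
bob_has_crate = session.try_lock("bob", "crate")      # bob is refused
session.apply_edit("alice", "crate", scene, {"x": 1})
```

Since the server is the only place where ownership is decided, a client that is out of sync simply gets its edit rejected instead of corrupting the shared scene.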

Serialization speed: Have you ever seen a game shipped with a public game engine load in <1 second? This is a hard requirement for us. RedLynx Trials games had <3s loading times, and <0.3s restart and game<->edit mode transitions. This is possible with a custom engine.

Super fast serialization makes iteration time super good. Which makes the team more productive. Same goes for offline data cooking, lighting bake and level deploying to device. We don't have any of these costs. All our sample levels are also created on the device.

We want to allow our users to extract their games as standalone HTML 5 + WebGL 2.0 (+WebGPU) packages that they can deploy to their own web server, or even sell in web game marketplaces. They could wrap them in an HTML 5 player for App Store or Google Play.

The licensing terms of public game engines don't allow us to do this. Our users would need to license these 3rd party engines and pay licensing fees to them. We don't want this. We want our users to freely distribute their work and sell it too if they want.

This is why we have to be extra careful when licensing 3rd party technology in HypeHype. We don't want technical choices to limit our business choices. All tech we use must be freely usable by our customers in their HTML5 games. Without users having to sign contracts or pay fees.

Let's talk about technical factors next. People often say that performance is the reason they build their own engine. Or they NEED a custom renderer. These are often bad reasons to build your own engine. You can work around many perf issues and renderers are customizable.

At Ubisoft it was common to keep using the old engine while throwing away the renderer. People did this many times between console generations. A renderer alone is not a good enough reason to build a whole new engine. Just build a renderer. Or modify an existing one.

Claybook used UE4. We had our own SDF volume based scene, our own SDF ray-tracer (running in async compute) and our own GPGPU physics engine (fluids and clay). We modified the UE4 renderer heavily and optimized the renderer backends. Everything else in UE4 was fine for us.

If you write your own engine, you have to write all the bits that you don't really care about. Usually a game has some unique features that you really want to showcase. Better to spend your focus on those features instead of rewriting everything. Stock solutions work for most cases.

Traditionally big public engines were not great for massive simulations. Recently UE5 Mass and Unity DOTS have been introduced. The downside of these technologies is that they are not yet production ready. It's a risk to commit to them. But building your own isn't trivial either.

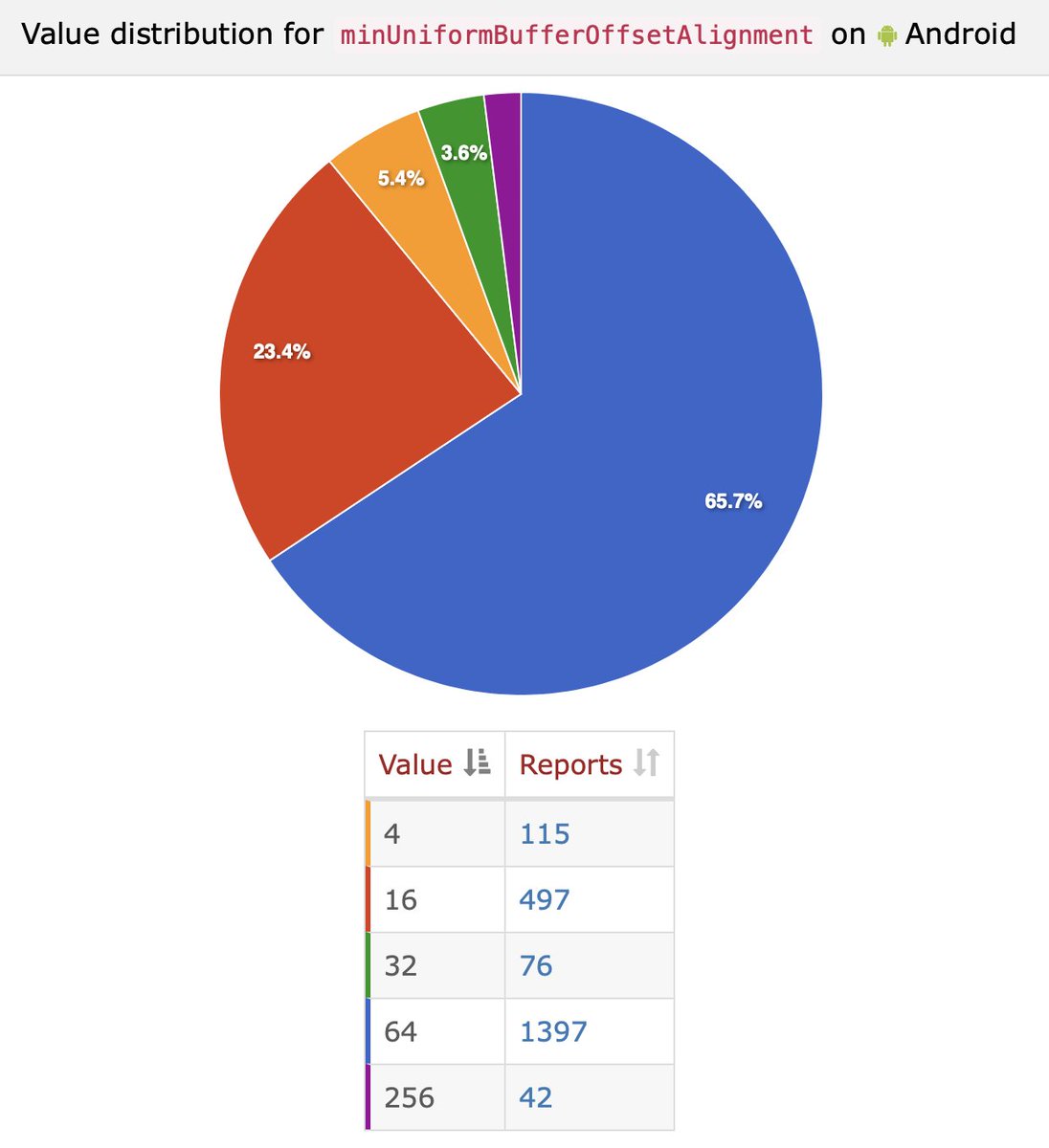

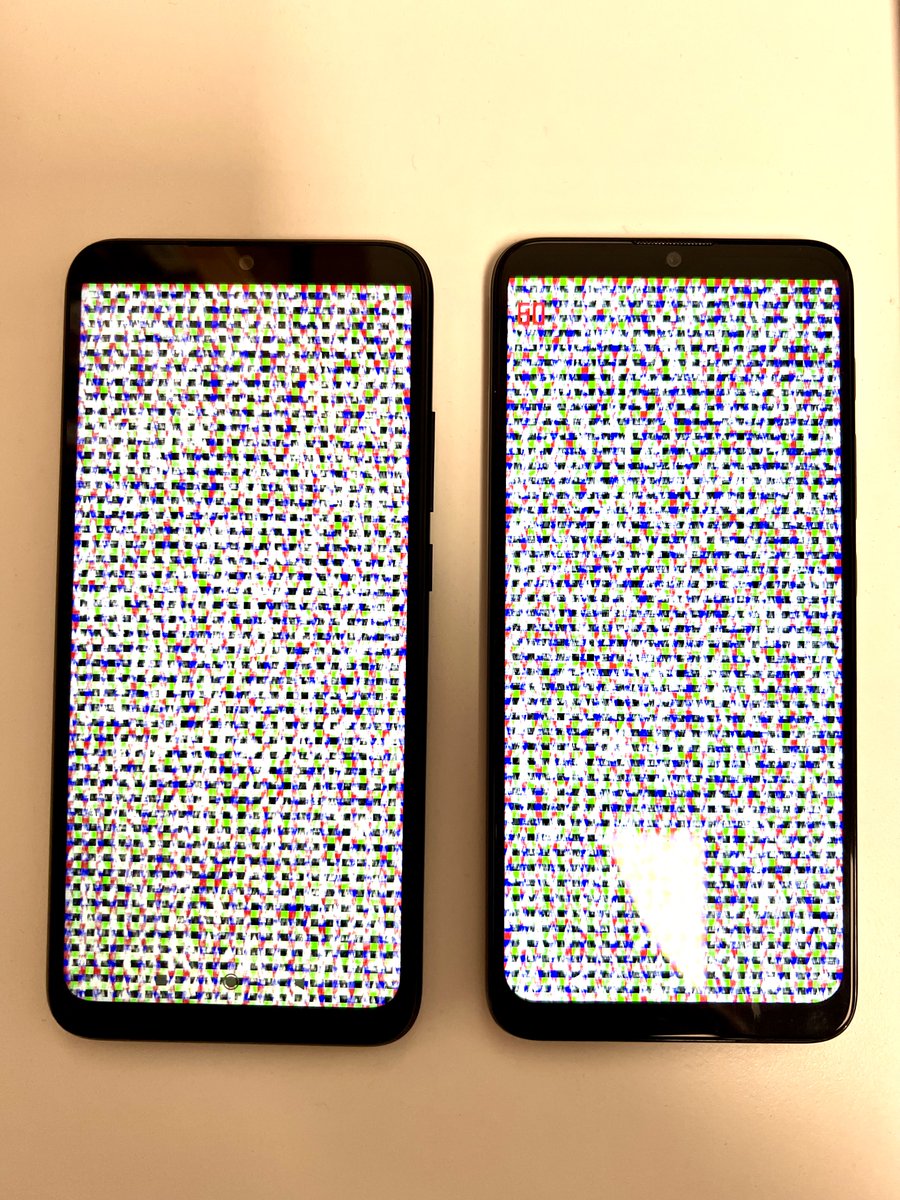

Nanite is also solving the massively kit bashed unoptimized user generated content case pretty well. But it doesn't scale down to mobiles. And I doubt it ever will scale to 43 GFLOP/s low end Android devices.
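To put the 43 GFLOP/s figure in perspective, here is a back-of-the-envelope calculation in Python. The 1280x720 resolution and the 60 fps target are assumptions picked for the example, and the number treats 43 GFLOP/s as theoretical peak throughput, which real workloads don't reach.

```python
def flops_per_pixel(gflops, width, height, fps):
    """Peak floating point operations available per pixel per frame."""
    return gflops * 1e9 / (width * height * fps)


# 43 GFLOP/s from the thread; resolution and frame rate are assumed.
budget = flops_per_pixel(43, 1280, 720, 60)
```

Under these assumptions the whole frame, including geometry, shading and post-processing, has fewer than 800 operations per pixel to work with, even at peak throughput.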

Professional game projects have technical artists designing the workflows. You can merge meshes together, put textures in the same atlases, create special content for far away geometry, hand place occluders / portals / probes, etc. User generated content is a different ball game.

At RedLynx/Ubisoft we created GPU-driven rendering to ensure that we can render poorly optimized kit bashed user generated content efficiently. We had 100% virtual texturing and streaming for everything to ensure the memory never runs out. Special needs require special tech.

Recap: If you are building a traditional game using a traditional PC-based workflow, you should consider licensing a public engine. Don't rewrite everything. Focus on the tech that makes your game unique. Do you really want to rewrite a file system, asset management and an audio system?

A custom engine is a good call if your business needs can't be covered by the engine licensing terms, all content is created inside your app, a cloud server deploys/cooks/converts/versions all data, and you need instant loading, web distribution, and 10+ years of data persistence...

Generic solutions always use more memory and run slower than a dedicated solution. The difference isn't massive, but it's certainly relevant for low end mobile devices. Especially if you can't ensure that your content is optimally made. User generated content requires special care.
