In today's #vatnik soup I'll explain why Elon Musk's (@elonmusk) "balancing act" of purging just one side will increase the spread of fake news and disinformation.

First of all, I'd like to say that the biased, systematic blacklisting of content that Twitter conducted was WRONG.

1/14

Censorship is almost always bad, and there are much better ways to fight dis- and misinformation (labels, semi-objective external fact-checkers, etc.).

But based on recent events, it seems that Musk is just swinging this same system in the opposite direction.

2/14

In his Nov 18, 2022 tweet he said that "Negative/hate tweets will be max deboosted & demonetized", yet there are no definitions or clear rules for what is considered hate speech.

Elon also promised to reinstate accounts that were previously suspended.

3/14

For example, on 23rd Nov 2022, Twitter declared that it no longer enforces the COVID-19 misleading info policy: transparency.twitter.com/en/reports/cov…

At the beginning of the pandemic, troll farms spread disinformation that was actually dangerous and potentially lethal.

4/14

Lately several American neo-nazi accounts have been reinstated, including Patrick Casey, former leader of the anti-semitic group Identity Evropa:

https://twitter.com/leahmcelrath/status/1598763724315119617

Another neo-nazi, Daily Stormer's editor Andrew Anglin, also regained access to his Twitter profile.

5/14

The leaks from the Twitter Files have been advertised as exposing a conspiracy among Twitter's ex-executives, in which they secretly decided what content gets seen, but Twitter has had a FAQ about these issues since '18: blog.twitter.com/official/en_us…

6/14

Elon has also participated in the discussion about the Twitter Files. He has attacked the NYT (@nytimes), calling the outlet "an unregistered lobbying firm for far left politicians". He also said that Alex Stamos (@alexstamos) operates a "propaganda platform".

7/14

In 2018, Science published a paper by Vosoughi, Roy, & Aral, titled "The spread of true and false news online". The paper concluded that "false news stories are 70 percent more likely to be retweeted than true stories are" and that ...

8/14

... "It also takes true stories about six times as long to reach 1,500 people as it does for false stories to reach the same number of people." Allowing fake news to spread freely will slowly drown the platform, and more and more factual news will stay hidden. doi.org/10.1126/scienc…

9/14

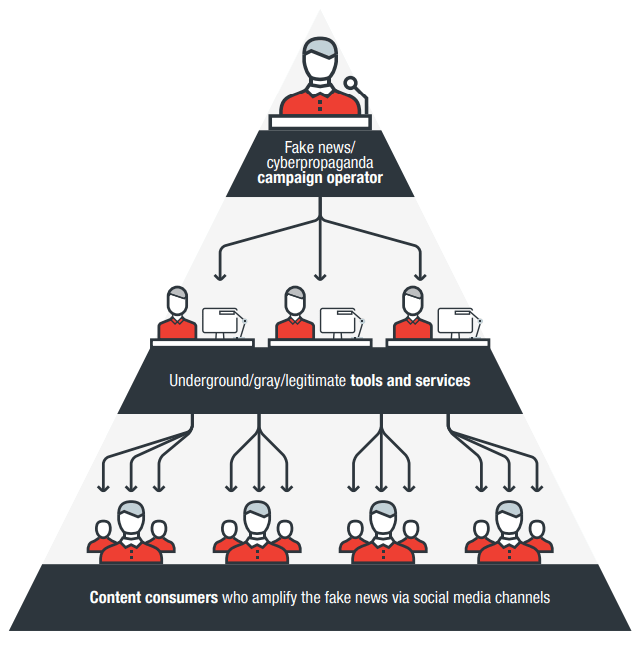

I also consider Twitter's latest design choices part of the "dark pattern design" group. These design choices "trick" users into specific actions on the platform or "nudge" their thinking in a specific direction.

10/14

Manipulation of the information flow, meaning which tweets Twitter shows us, can manipulate our thinking and slowly "nudge" our worldview in a specific direction.

How does Twitter shape our worldview if most of the content is actually disinformation?

11/14

I have discussed this type of dark pattern design in my 2022 publication, "Facebook’s Dark Pattern Design, Public Relations and Internal Work Culture": doi.org/10.34624/jdmi.…

12/14

Musk has also criticized the "woke culture" taking place in Western society. We have to remember that this culture war has been fueled by Russian disinformation and propaganda over the years, on topics ranging from LGBT+ rights to movements like BLM.

13/14

To conclude: With the recent changes, we can pretty much expect Twitter to be the same as before, except that the pendulum has swung from the left to the right.

And it will also contain MUCH more disinformation than before.

14/14

In addition, disinformation spreads most effectively through "super spreaders": thebulletin.org/2022/06/how-cl…

Many of these accounts have now been reinstated.
