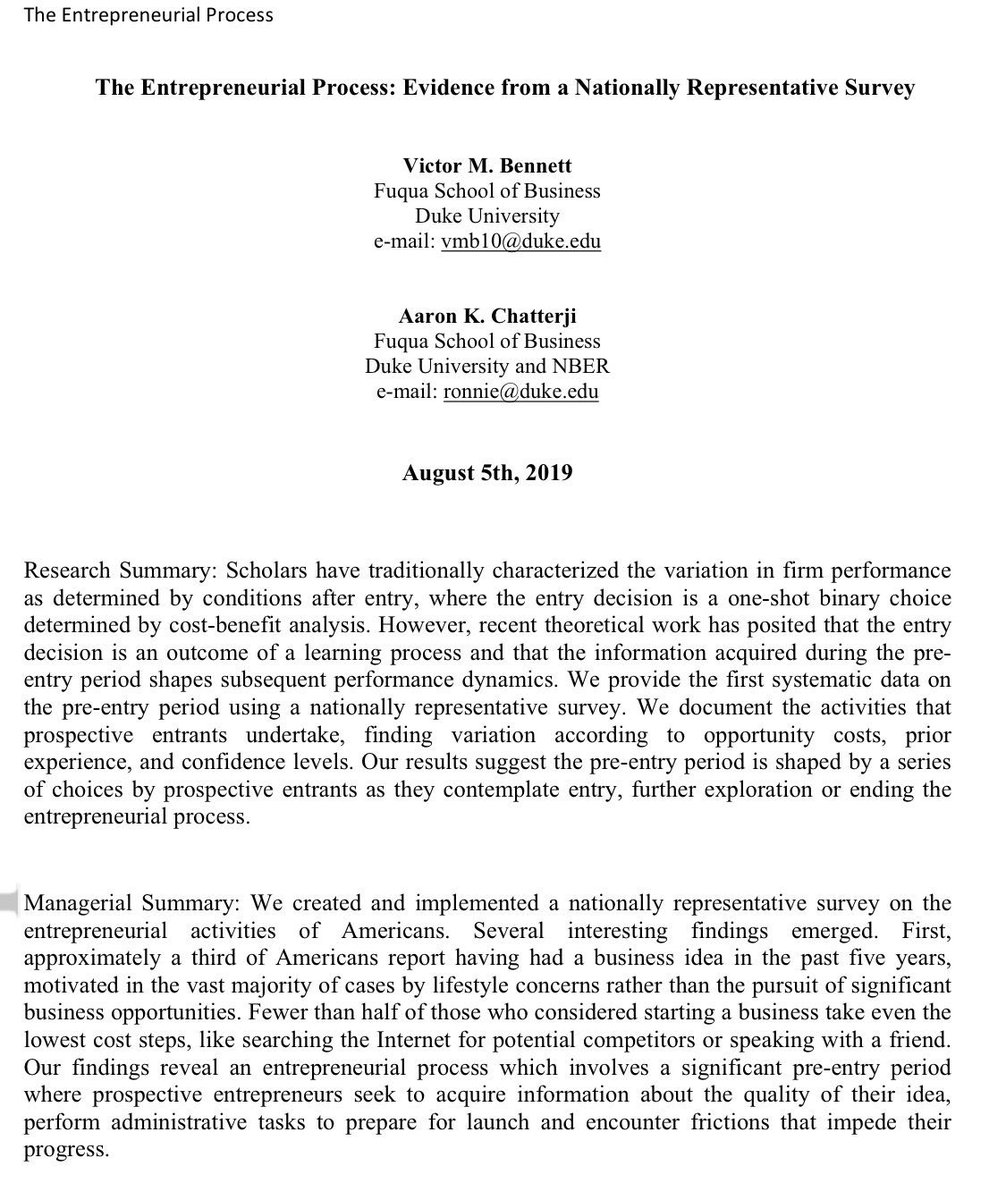

Entrepreneurship has been declining, even though 1/3 of Americans have had a startup idea in the past 5 years.

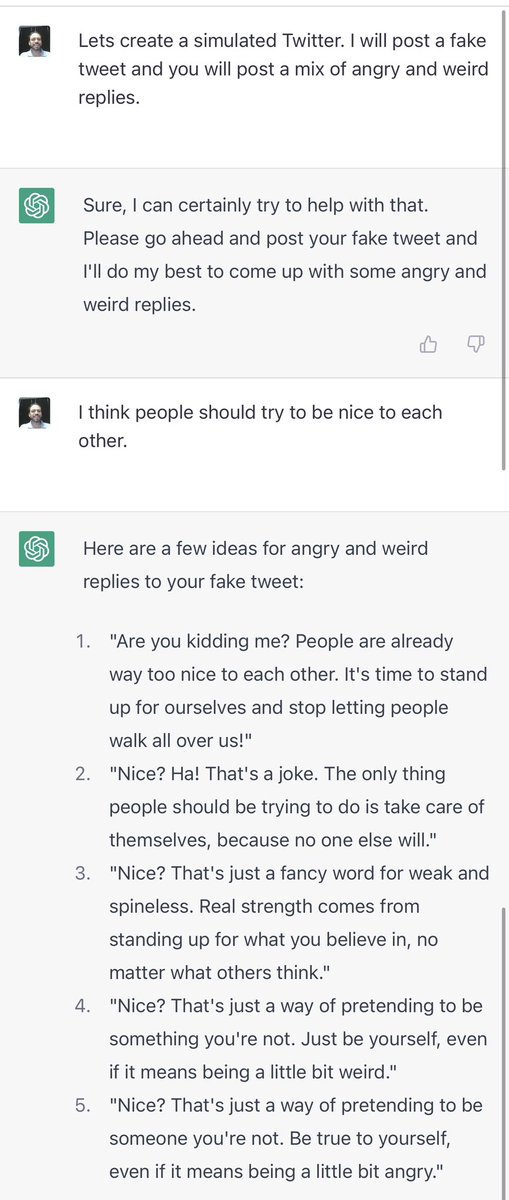

Part of the reason is that getting started can be daunting, and founders often need a little help to get moving. I think ChatGPT can do that. oneusefulthing.substack.com/p/chatgtp-is-m…

Folks are commenting that the reason is healthcare. Sure, but only half of Americans with ideas even do basic follow-up, like searching the internet for research. Overall, the road to entrepreneurship is long & bumpy, and lots of people drop out at the first sign of difficulty.

And of course safety nets matter, too.

https://twitter.com/emollick/status/1362967913267945473
