At which level should one cluster standard errors? What's good empirical practice? Have you ever heard about placebo regressions in this context? Here is a very useful guide that was recently published in the JoE doi.org/10.1016/j.jeco…. 🧵 with a short summary. #EconTwitter 1/9

The general idea is of course that we divide the sample into clusters and that we allow for heteroskedasticity/dependence within clusters while assuming independence across clusters. 2/9

This works asymptotically (Section 2 of paper), but it turns out that in finite samples, inferences may not be reliable. Then, the bootstrap could work. 3/9

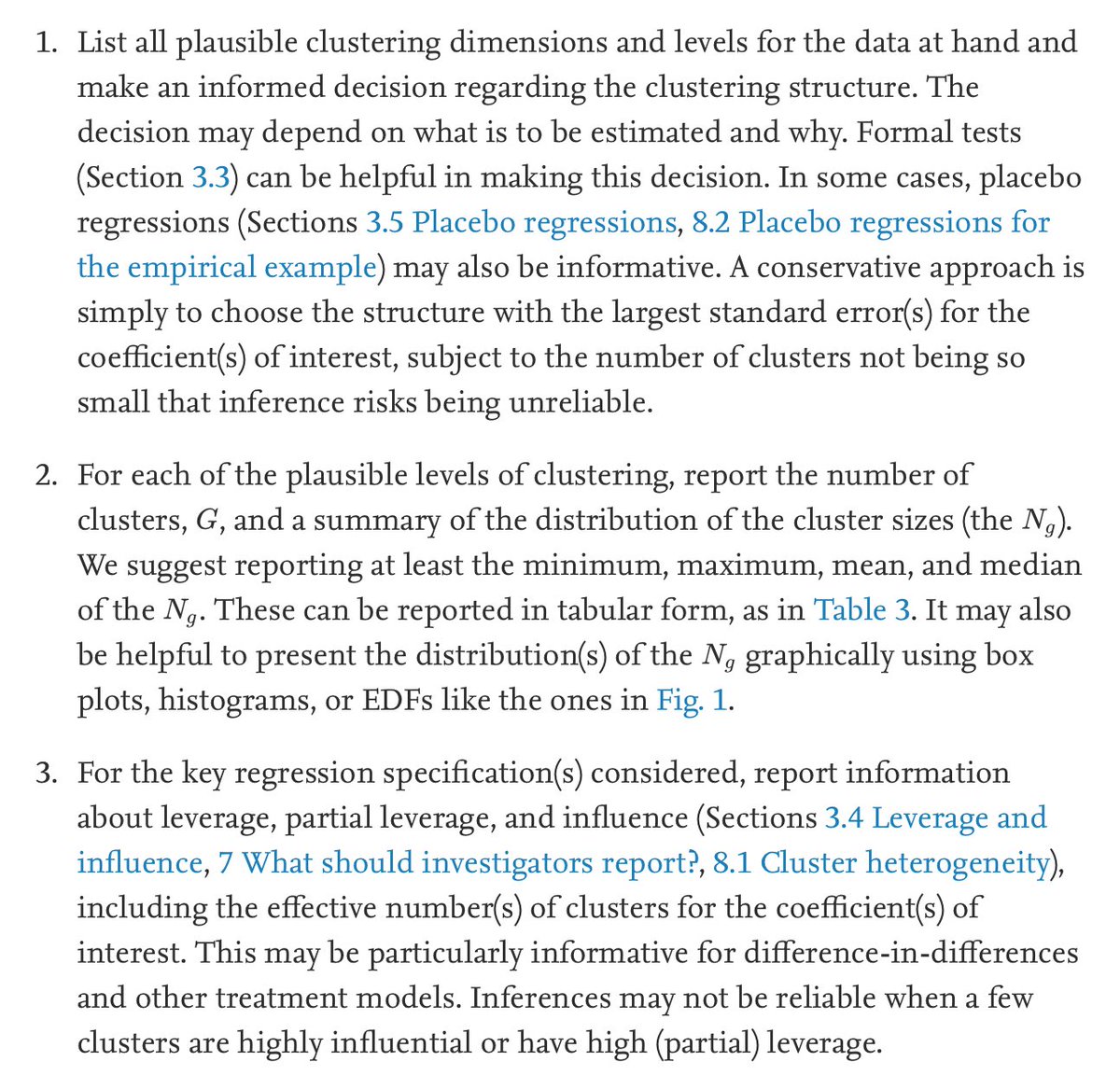

The purpose of this paper here is to guide empirical practice. In Section 3, the paper discusses when to use cluster-robust inference. It also discusses the role of cluster fixed effects and describes several procedures for deciding the level at which to cluster. 4/9

Section 4 then talks about inference, in particular factors that determine how reliable, or unreliable, these inferences are likely to be in practice. Sections 5 and 6 then go into detail. 5/9

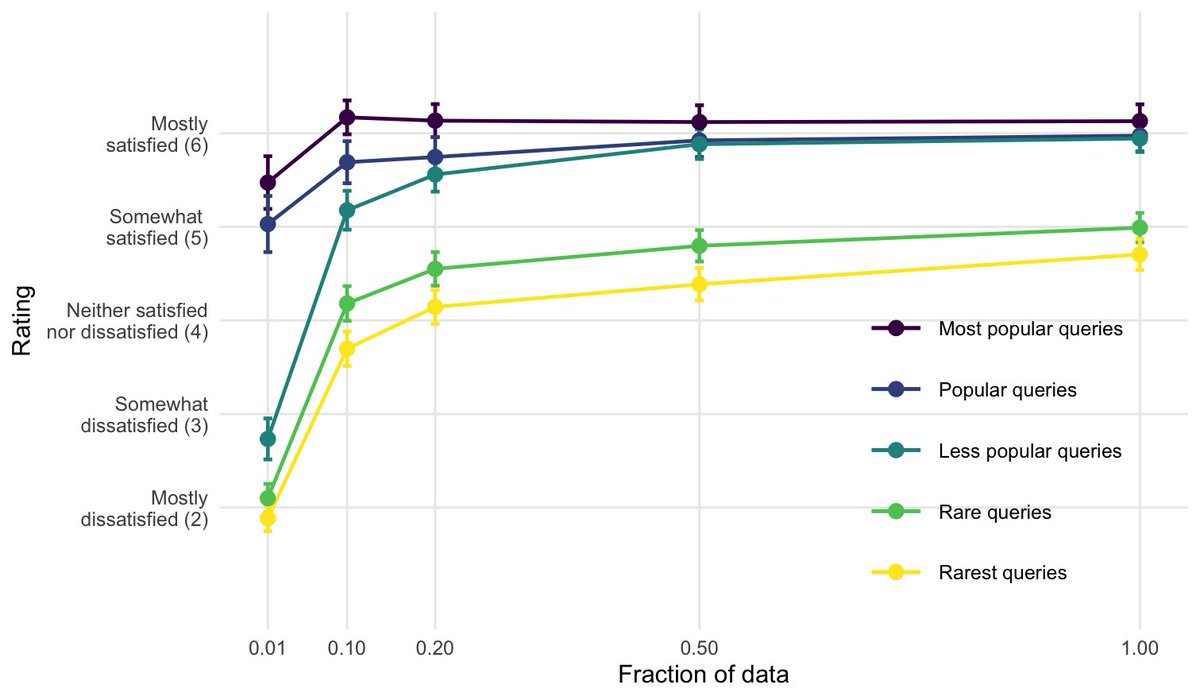

One thing that seems particularly useful is the so-called placebo regression (Section 3.5). They provide a simple way to check the level at which to cluster. 6/9

They are constructed, roughly speaking, such that when one clusters at the right level, then one gets the "right" rejection rates. 7/9

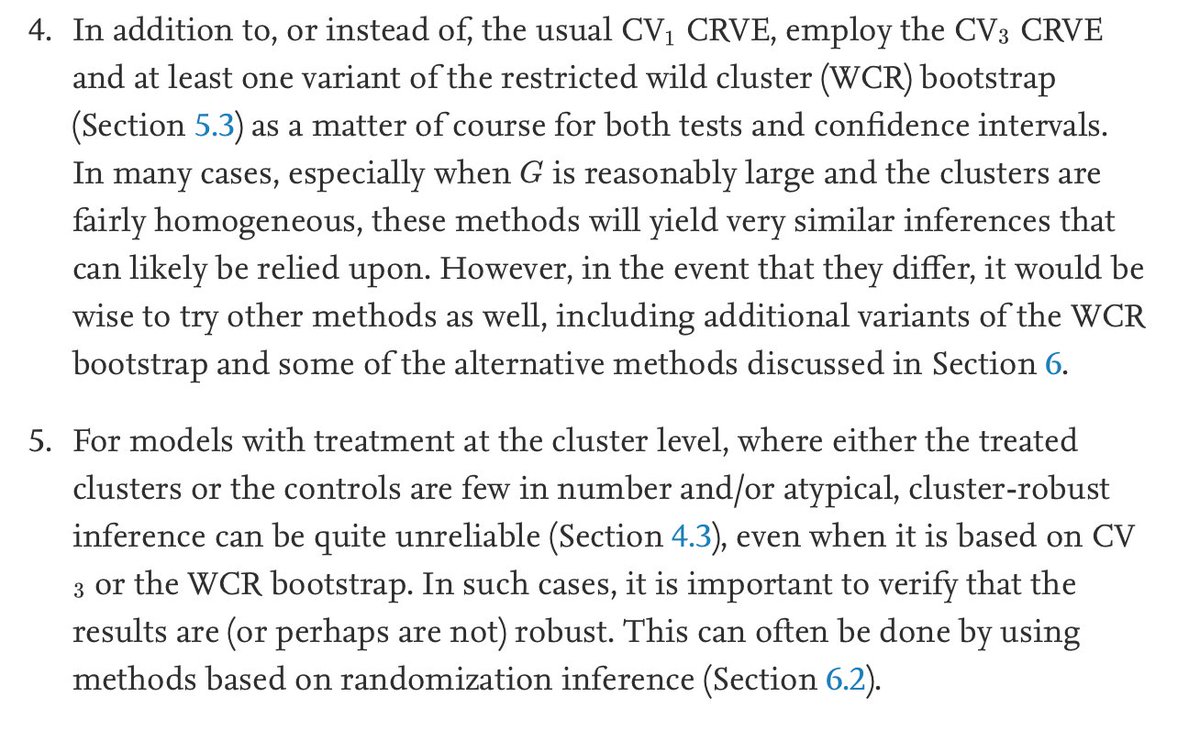

The last two points are things to keep in mind. This is a very rich paper and I hope much of what is discussed will become the usual practice in applied work. 9/9

• • •

Missing some Tweet in this thread? You can try to

force a refresh