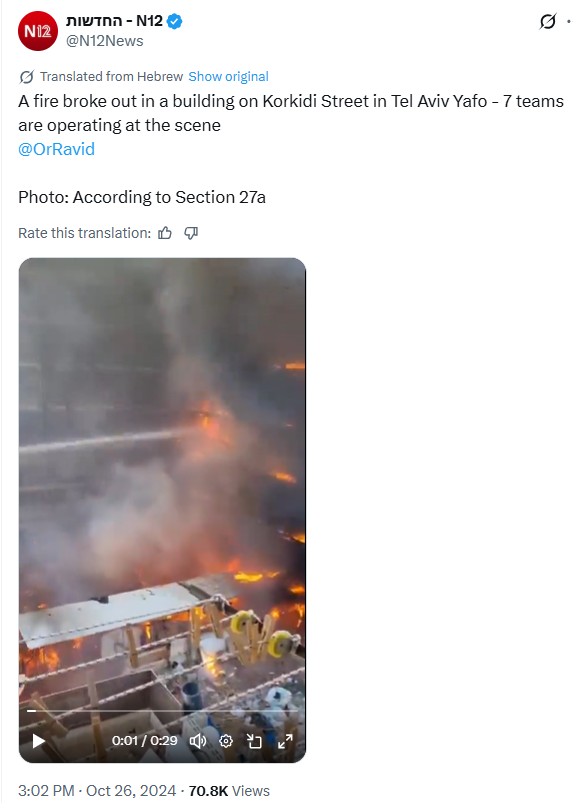

THREAD: How to verify images online?

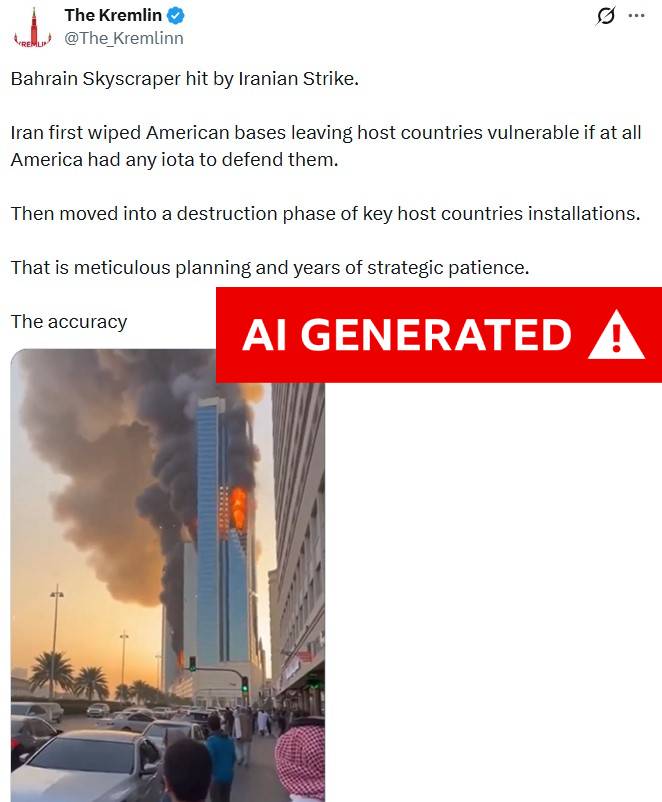

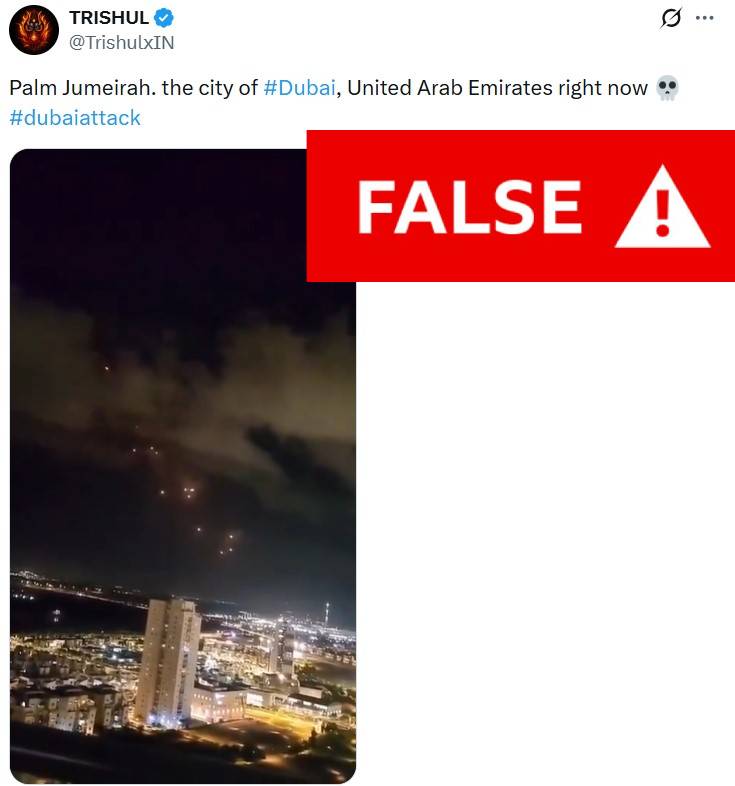

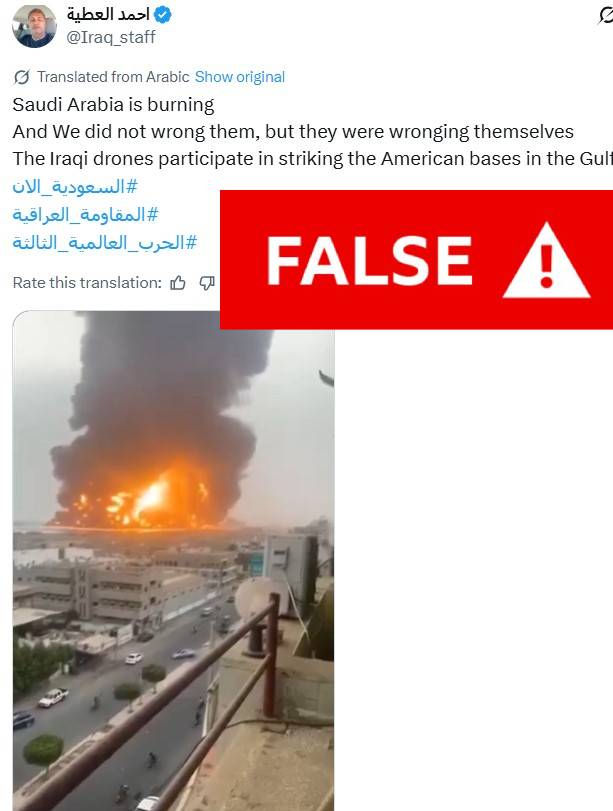

Social media is awash with false or misleading images, some of which get millions of engagements.

So, here's a simple guide on ways you can quickly check the veracity of an image you see on your social media feeds.

Reverse image search is the most fundamental part of content verification. It means searching to find if, and when, an image has appeared on the internet before, and in what context.

Google Lens, Yandex, TinEye and Bing are among the free tools that allow you to do this.

Lens is Google's excellent tool for checking online content.

Here's a tweet by US conspiracy theorist Stew Peters claiming this cloud was seen in Turkey just before the recent earthquake.

On Chrome, simply right-click on the image and select "search image with Google".

Google Lens will bring up a range of relevant results.

If you click on the first link, you will see a Guardian report clarifying this was a lenticular cloud spotted in Bursa, Turkey, on 19 January - almost three weeks before the earthquake.

You've got your answer. But if you're keen to find the first source of the image, you can keep looking through Google Lens results for the earliest date.

You'll find this Instagram post from 19 January. The user confirms she took the image at 8 o'clock that day.

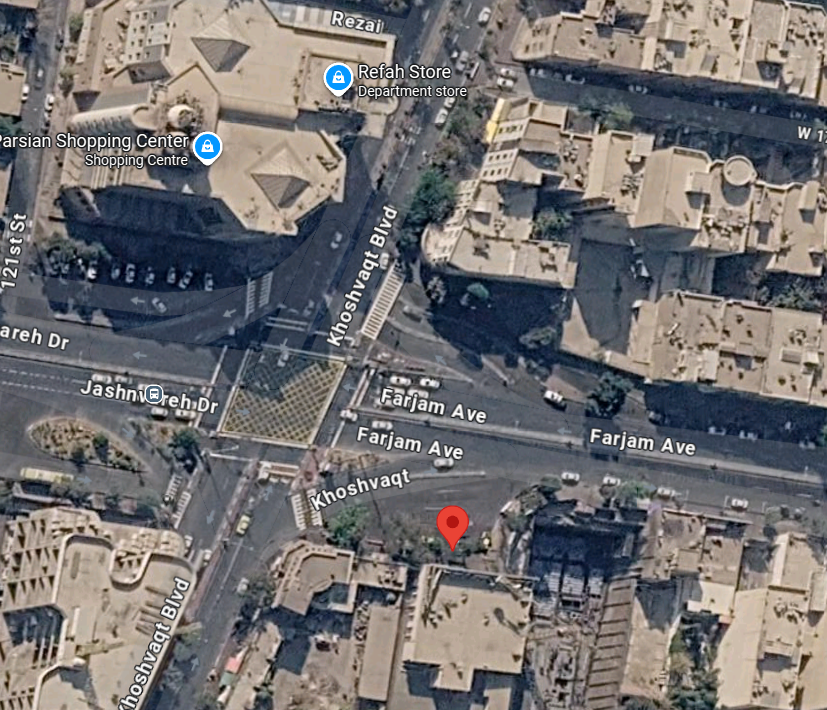

Look for notable signs or landmarks in an image and use the crop feature to narrow down your search.

In this image, those tower blocks in blue, yellow and green are a distinct feature. Crop the image on Lens and it'll quickly tell you that is Bogota, Colombia.

Google Lens results are not chronological, which means you sometimes have to scroll through several pages of results to find the earliest date.

This image went viral days after the Russian invasion, claiming to show children seeing off Ukrainian troops.

Let's check it on Lens.

Crop the image and click on "find image source".

The first few pages of results are all from 2022 and 2023, but on page four you'll see the date 13 May 2016. Click on the link.

You'll see the image on Flickr posted by Ukraine's Ministry of Defence, meaning it's an old photo.
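If you note down the dates you come across while paging through results, finding the earliest one is easy to automate. Here's a minimal sketch of that idea. The result list, its URLs and its field names are hypothetical placeholders written by hand for illustration, not anything Lens exports.

```python
from datetime import date

# Hypothetical results, noted by hand while paging through a reverse image search.
# The URLs and the "published" field name are placeholders, not real search output.
results = [
    {"url": "https://example.com/repost-2023", "published": date(2023, 1, 5)},
    {"url": "https://example.com/viral-2022", "published": date(2022, 2, 27)},
    {"url": "https://example.com/original-2016", "published": date(2016, 5, 13)},
]


def earliest_appearance(results):
    """Return the result with the oldest publication date, or None if the list is empty."""
    return min(results, key=lambda r: r["published"], default=None)


oldest = earliest_appearance(results)
print(oldest["published"].isoformat(), oldest["url"])
```

The oldest date you find is only the earliest appearance among the pages you looked at, so it puts an upper bound on when the image first surfaced rather than proving where it originated.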

Lens also helps you quickly translate text in another language, say a street or shop sign in an image, or spot text added to a doctored image.

If you click on text in Lens for this alleged Zelensky image, it'll bring up fact-checks which show the photo has been manipulated.

The other platforms work pretty much the same.

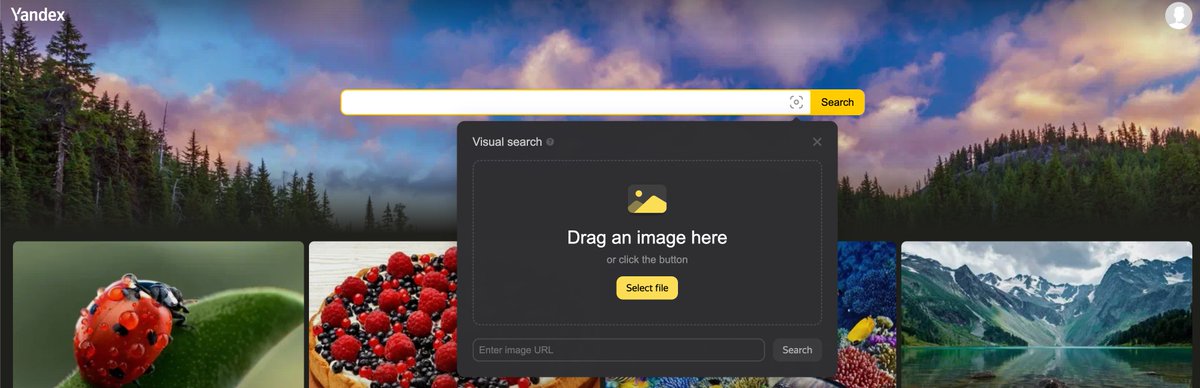

Yandex, a Russian search engine, used to be regarded as the most powerful of all reverse search tools. That's no longer the case.

But it's still widely used by journalists, particularly for the Ukraine war.

yandex.com/images/

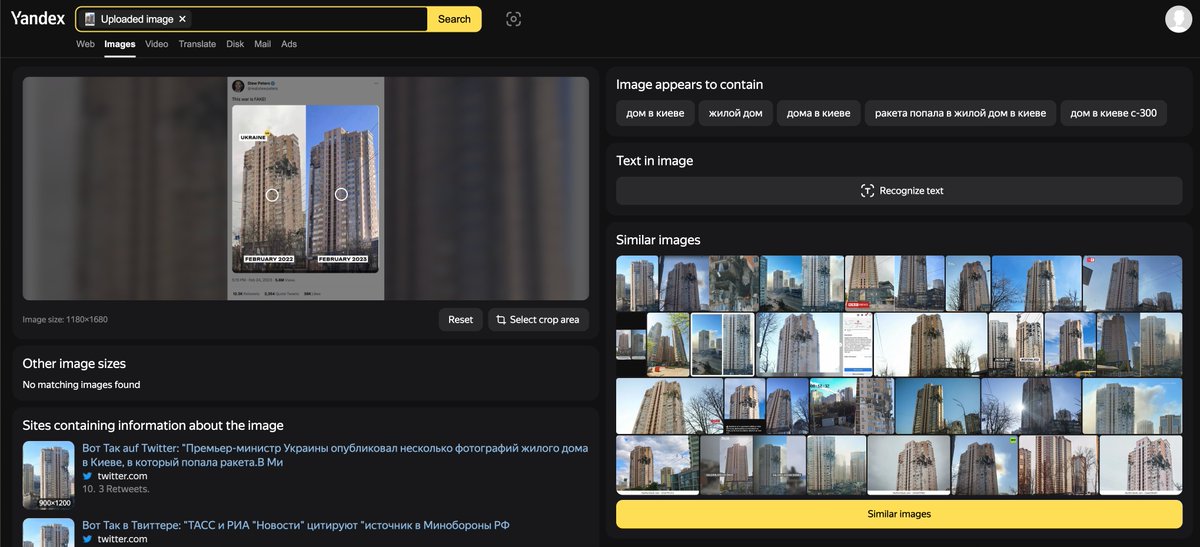

Here's a viral tweet claiming a Kyiv tower block was never hit.

Yandex works best if you save an image on your hard drive and upload it.

Once you've done that, it'll provide you with a series of images and web pages confirming the block was indeed hit on 26 February 2022.

TinEye is another well-known, free reverse image search engine.

While it may not be as comprehensive as Google Lens, TinEye has a unique feature that I really appreciate: it allows you to sort results in chronological order.

tineye.com

Let's verify this viral image from last year, claiming to show a Nazi wedding in Ukraine.

Upload the image to TinEye and you'll quickly see two things:

The image seems to date back to at least 2016 or 2019, and that may not be the Ukrainian flag, but the Russian imperial flag.

The fourth link leads us to a 2018 article that states not only that the flag has been doctored, but also that the image was taken in Novokuznetsk, Russia.

If you reverse search that Lenin monument photo in the article, you will find it indeed matches the monument in the original image.

If you've lasted all the way to this point, well done! You should now be able to verify online images for yourself.

As a bonus, let me introduce you to @InVID_EU, an excellent verification plugin used by nearly all fact-checkers and OSINT practitioners.

invid-project.eu/tools-and-serv…

Install the @InVID_EU Chrome extension, right-click on any online image and select InVid debunker. It'll bring up a range of direct reverse search options with multiple tools for you.
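To give a rough idea of what "one image, several tools" can look like, here's a small sketch that builds a search link per engine for an image that is already online. This is not how the InVID plugin works internally. The URL templates are assumptions based on commonly seen link patterns, they are not documented APIs, and they may change or stop working at any time.

```python
from urllib.parse import quote

# ASSUMPTION: these templates follow commonly seen link patterns for each site.
# They are not official, documented endpoints and may break without notice.
SEARCH_TEMPLATES = {
    "Google Lens": "https://lens.google.com/uploadbyurl?url={image}",
    "Yandex": "https://yandex.com/images/search?rpt=imageview&url={image}",
    "TinEye": "https://tineye.com/search?url={image}",
    "Bing": "https://www.bing.com/images/search?view=detailv2&iss=sbi&q=imgurl:{image}",
}


def reverse_search_links(image_url):
    """Return a dict mapping each engine name to a search link for image_url."""
    # Percent-encode the whole image URL so it survives as a single query value.
    encoded = quote(image_url, safe="")
    return {
        name: template.format(image=encoded)
        for name, template in SEARCH_TEMPLATES.items()
    }


# Hypothetical image address, used only for illustration.
for name, link in reverse_search_links("https://example.com/photo.jpg").items():
    print(f"{name}: {link}")
```

This only works for images that have a public web address. For a file saved on your hard drive, you still need to upload it through each site's own page.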

We'll talk more about @InVID_EU in my next thread about video verification.

If you liked this thread, please feel free to check my other thread on verifying fake or manipulated screenshots of tweets and social media posts.

We'll try to learn how to verify online videos in an upcoming thread.

https://twitter.com/Shayan86/status/1567541626251231233
