An implication of designing any LLM app over your data is you’re adding “state” (data) to a “stateless” module (LLM).

Stateful apps are hard, and require good storage abstractions.

We’ve thought hard about this with @gpt_index 🧵

Stateful apps are hard, and require good storage abstractions.

We’ve thought hard about this with @gpt_index 🧵

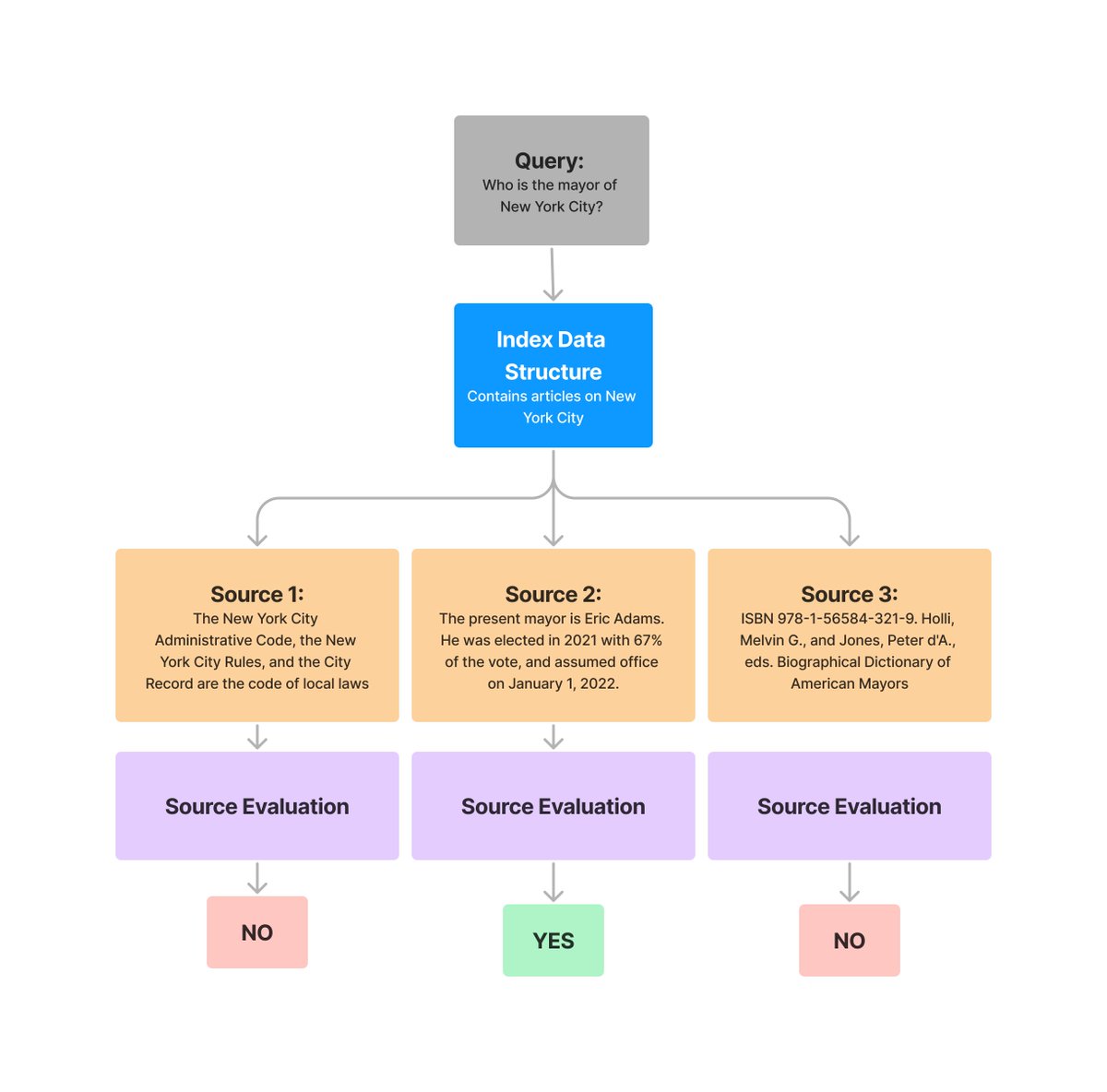

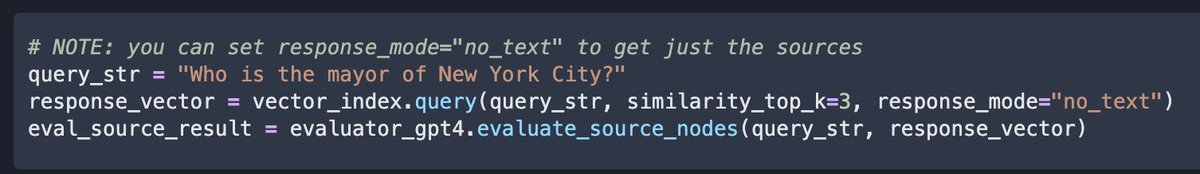

Hacking an initial retrieval-augmented LLM app is super easy: take some documents, chunk it up, put it in a vector db.

But thinking about prod data reqs makes this more challenging.

It’s one thing to build a demo over 5 docs. What about over GB’s of data over different sources?

But thinking about prod data reqs makes this more challenging.

It’s one thing to build a demo over 5 docs. What about over GB’s of data over different sources?

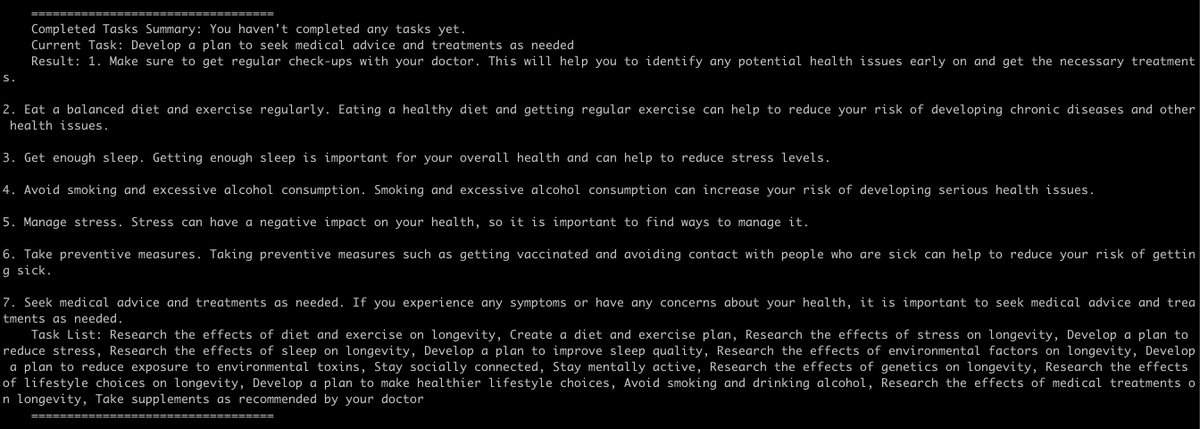

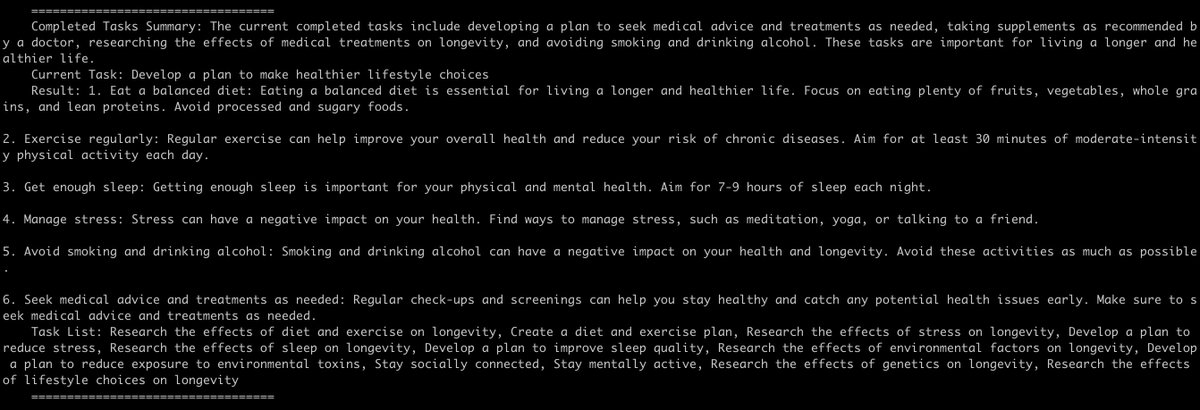

Some questions:

How do we store source Documents? Once we split it, how do we store text chunks?

How do we store metadata? Including indices on your data?

How do we store vectors with vector db’s?

How do we store source Documents? Once we split it, how do we store text chunks?

How do we store metadata? Including indices on your data?

How do we store vectors with vector db’s?

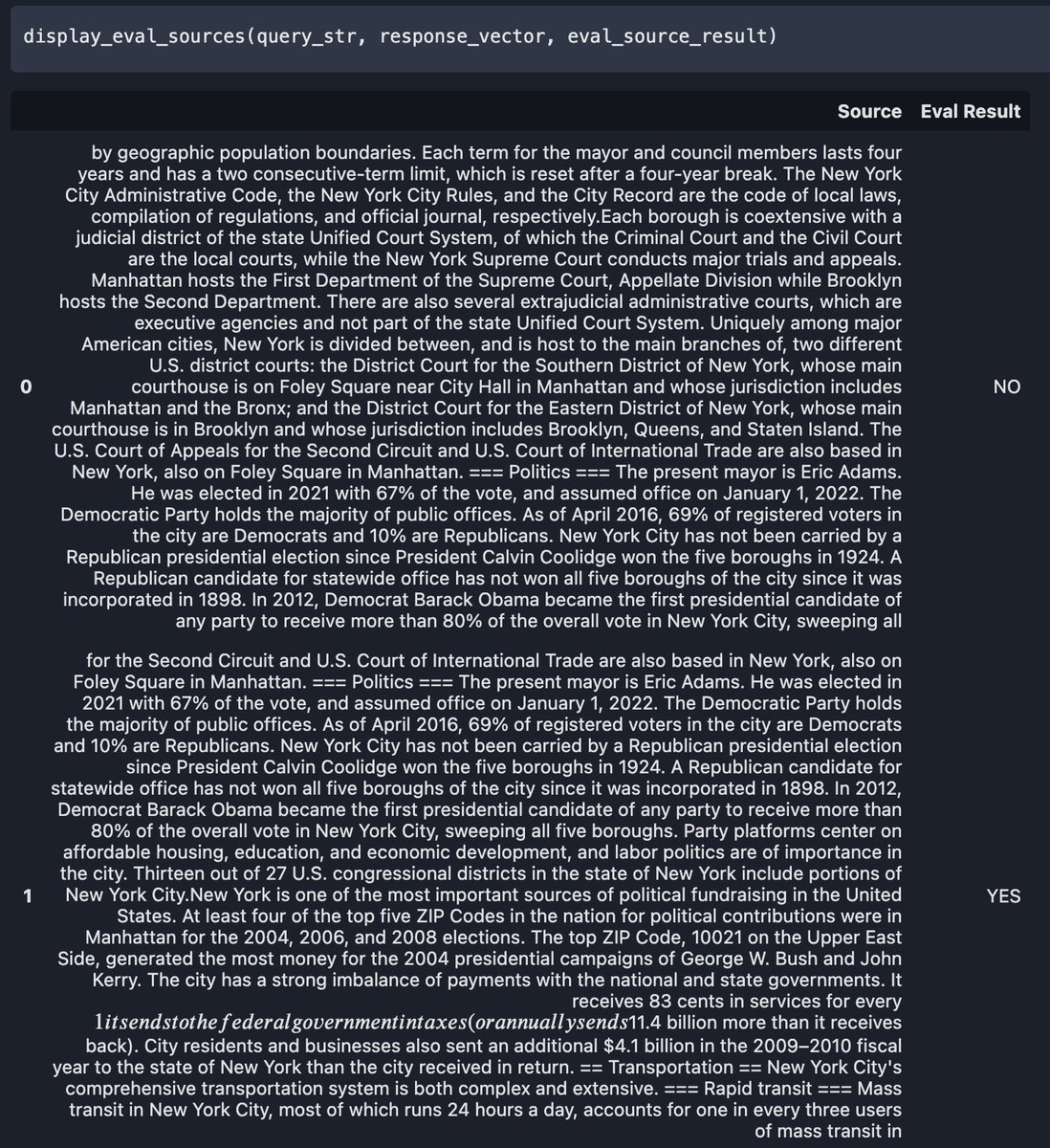

The new release of @gpt_index (0.6.0) takes a stab at addressing this:

- We define an underlying KV store abstraction

- We can store Nodes (raw data chunks) and indices in KV store

- In parallel, we maintain vector store abstractions

Full blog: medium.com/@jerryjliu98/l…

- We define an underlying KV store abstraction

- We can store Nodes (raw data chunks) and indices in KV store

- In parallel, we maintain vector store abstractions

Full blog: medium.com/@jerryjliu98/l…

There is now a vector ecosystem of vector db providers. Many vector db’s (e.g. @pinecone, @trychroma, @weaviate_io), allow storage of both vectors and docs.

For now they’re sep from our docstore; we have a TODO to explore similarities.

For now they’re sep from our docstore; we have a TODO to explore similarities.

A key concept is to decouple the raw data from the indexes that we define at the top-level.

An Index in @gpt_index is just a lightweight view over your data, each solving a diff retrieval use case.

You can/should define multiple indices over your data.

An Index in @gpt_index is just a lightweight view over your data, each solving a diff retrieval use case.

You can/should define multiple indices over your data.

Interested in contributing? We’d LOVE to have your help in building way more document store abstractions: S3, GCS, HDFS, and more.

+ more vector integrations as well.

gpt-index.readthedocs.io/en/latest/how_…

+ more vector integrations as well.

gpt-index.readthedocs.io/en/latest/how_…

• • •

Missing some Tweet in this thread? You can try to

force a refresh

Read on Twitter

Read on Twitter