I finally got a chance to tell a story that I’ve been keeping to myself for 6+ years. My first full-time job was as a consultant at McKinsey. At the time, it seemed like a dream job: a way to work with brilliant people, learn a lot, and maybe even improve things from the inside 🧵

My first-ever cover story is out in @thenation and does something unprecedented: to my knowledge, no former McKinsey employee has ever publicly discussed project specifics with real client names. The firm is intensely secretive, above and beyond competitor consultancies. McKinsey...

will say this is to protect client interests, but it also serves to protect McKinsey from scrutiny and accountability.

I found myself working directly for two of McKinsey’s most controversial clients: Rikers Island and ICE. At Rikers, we were meant to help reduce violence...

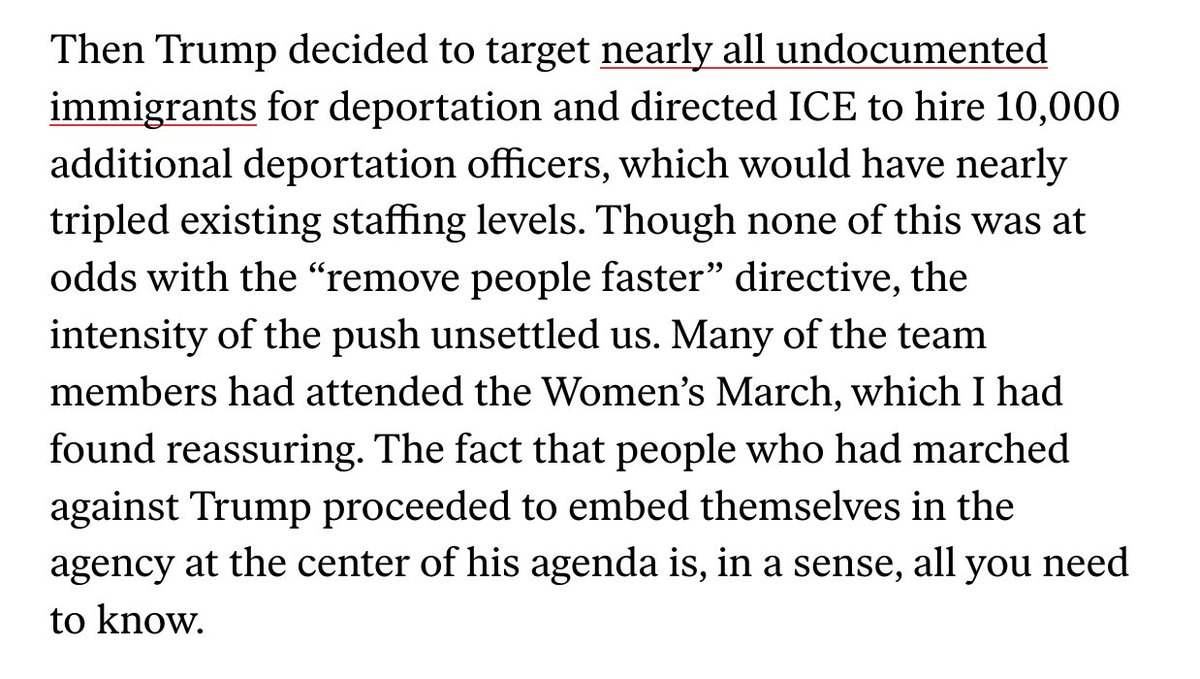

My desire to work for mission-driven orgs led me to the front lines of the Trump administration’s agenda. What started as a culture survey of ICE’s staff transformed into an all-hands-on-deck effort to help ICE comply with Trump’s executive orders to target all undocumented people...

for deportation and triple the number of deportation officers. I was tasked with modeling out how to meet the new hiring targets.

During a team-wide meeting on the ethics of our work, the senior partner said that “The firm does execution, not policy.” When I asked him...

what would have stopped us from working for the Nazis, he muttered something about McKinsey being a values-based organization. The thing is, I couldn’t point to any violation of McKinsey’s values as they were stated...

I’ve spent years reckoning with my role in all this and trying to figure out what I can do to hold McKinsey accountable. I was also terrified of publicly attacking such a powerful org. I’m lucky enough now to be doing what I love and feel secure enough to do what I think is right...

We can’t count on McKinsey to reform itself, but the government and elite universities can offer some modicum of accountability...

I came into McKinsey believing in a certain “technocratic utopianism” that animates the firm. I left McKinsey radicalized against capitalism and the amoral profit-seeking at its center.

The core irony...

that makes McKinsey so resilient is that, no matter what awful thing it does, the name still burnishes your resume. Even in my case, my anonymous Current Affairs essay about McKinsey launched my journalism career...

As daunting as it may be, we should work towards a society where the name McKinsey on a resume is what I learned it should be: a source of shame.

bit.ly/3ReTCX6
