Thread: Online misinformation is rampant following the escalation of violence between Israel and Hamas today.

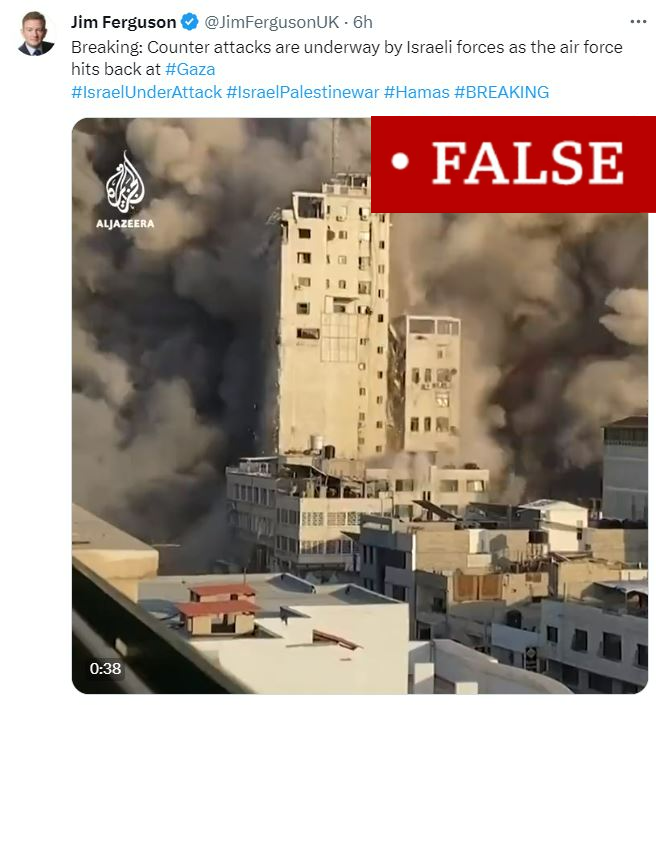

This video of a tower block in Gaza being hit by a missile is from May 2021, not today. It was captured live during a BBC Arabic broadcast at the time.

This video of a house in Gaza being destroyed by a strike is genuine, but it's actually from May of this year, and not from the fresh escalation in hostilities today.

This video of a house being struck by Israel is also from May of this year, not today.

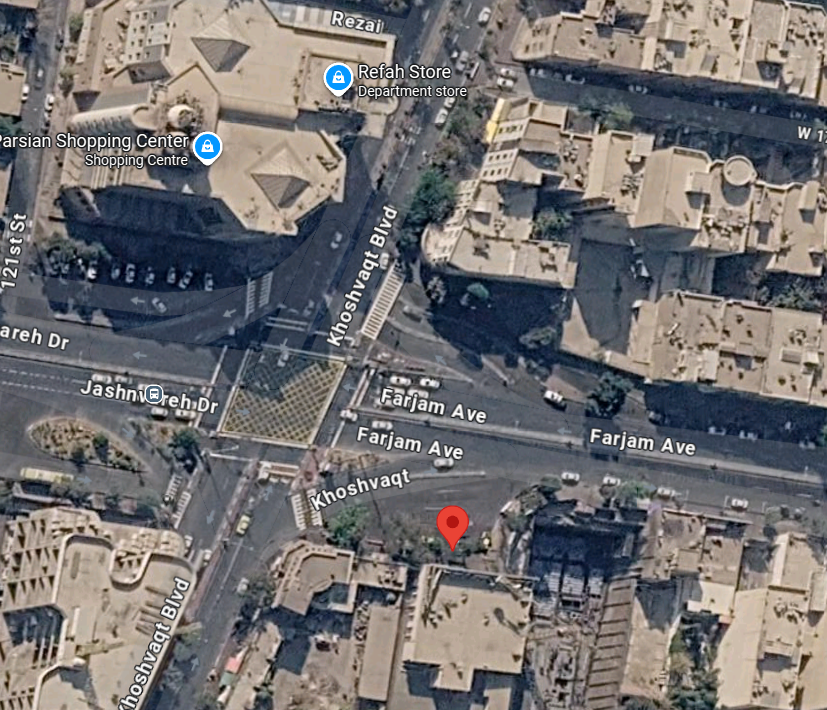

It was filmed in Beit Hanoun, Gaza, as geolocated by @ChrisOsieck at the time.

This video, shared by right-wing influencers Charlie Kirk and Ian Miles Cheong and viewed millions of times, actually shows Israeli police and special forces outside a house, not Hamas militants, as is easily identifiable by their uniforms.

This is another video of a house in Gaza being targeted by an IDF strike in May of this year. It's not from today.

This video certainly doesn't show Hamas shooting down two Israeli helicopters, because it's actually from the video game Arma 3.

This is complete and utter nonsense, shared for nothing but engagement. Israel hasn't authorised a nuclear strike on Gaza, and the footage shows a US nuclear test from the 1950s.

This video, viewed 230,000 times, is not footage of a Hamas militant shooting down an Israeli helicopter. It's from the video game Arma 3.

This video, viewed more than 3 million times, does not show a building in Israel.

It shows an Israeli strike earlier today on Gaza's Palestine Tower, which houses Hamas radio stations on the rooftop and also holds a cinema.

A fake document is being widely shared online claiming to show the authorisation of $8bn in military aid to Israel by President Biden.

It's a doctored version of a 25 July document detailing $400m in aid to Ukraine authorised by President Biden, as fact-checked by @Info_Rosalie.

This is a fake Jerusalem Post account falsely reporting that Prime Minister Benjamin Netanyahu has been taken to hospital, and somehow racking up over 600,000 views.

The post has now got a Community Note attached to it.

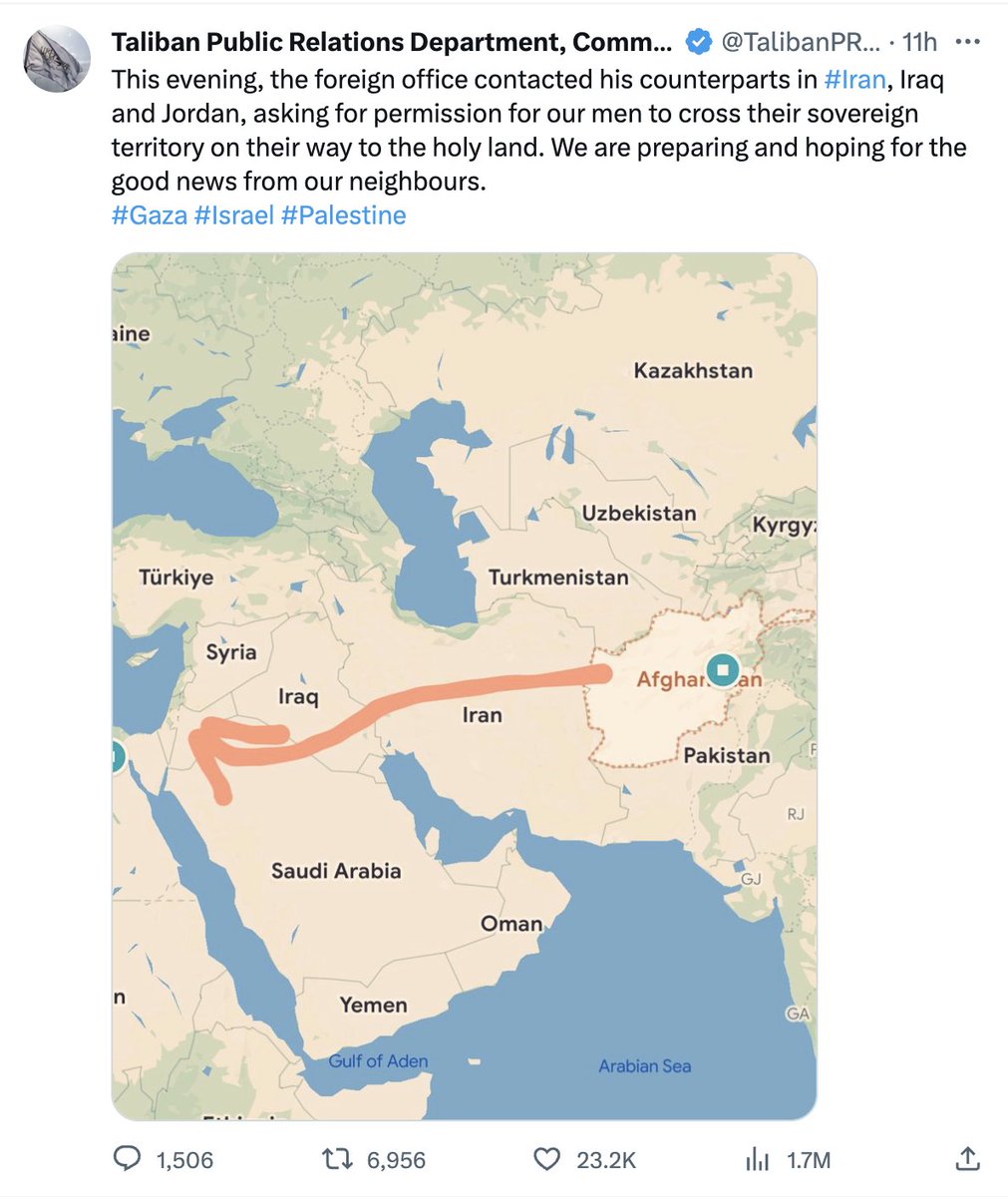

If you've come across reports that the Taliban has asked Iran and Iraq for permission to send fighters to Israel, the source for those reports is this viral tweet by what is most likely a fake Taliban PR account with a blue tick.

I particularly like that hand-drawn arrow.
