A Guide to Countering Mis/Disinformation During a Crisis.

Amid unprecedented events, our news feeds overflow with reports, images, and videos, making it hard to tell what is true in real time. This thread gives you tools for navigating such situations.

When "Breaking News" emerges, the rush to lead and control the narrative ensues. Reports are hastily assembled, drawing from private sources, alleged incident images, and precedent, often intertwined with personal opinions. This process is standard, but it also leads to issues.

Hurried reporting often results in the publication of false testimonies, unrelated pictures and videos mislabeled as connected to the event, and unsourced reports that drive a false narrative about the sequence of events.

How can WE avoid this?

Step 1: Be aware of your own bias.

Your belief system influences the narrative you embrace regarding "Breaking News." People often scramble to latch onto reports, pictures, or videos that align with their preconceived conclusions about the event, forgoing a critical analysis.

Step 2: Ignore reports that don't provide a source.

You are going to have to filter through a lot of content as you try to piece together what transpired. Ignore anything that doesn't provide a source for its claims about the event.

Step 3: For reports with alleged sources, check them!

Sometimes, the sources provided are "unnamed," "anonymous," or overly vague, such as when attributed statements are as generic as "a [country] official said". My advice would be to also initially ignore these alleged sources.
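
For anyone who likes to see a checklist written down, Steps 2 and 3 can be sketched as a small triage function. This is only an illustration, not a real detection tool: the function name `triage_report`, the `has_source` flag, and the phrase list are all assumptions made up for this example, and a handful of patterns will miss plenty of vague attributions.

```python
import re

# Hypothetical phrases that signal vague or anonymous attribution.
# This list is an assumption for illustration; extend it as needed.
VAGUE_SOURCE_PATTERNS = [
    r"\bunnamed\b",
    r"\banonymous\b",
    r"\bsources? (?:say|says|said)\b",
    r"\ban? [\w-]+ official said\b",
]


def triage_report(text: str, has_source: bool) -> str:
    """Return 'ignore' or 'check' for a report, following Steps 2 and 3."""
    if not has_source:
        # Step 2: no source at all, so set the report aside.
        return "ignore"
    lowered = text.lower()
    if any(re.search(pattern, lowered) for pattern in VAGUE_SOURCE_PATTERNS):
        # Step 3: the source is unnamed or too vague, so ignore it initially.
        return "ignore"
    # A specific source is given: the next job is to go and verify it.
    return "check"
```

A result of `"check"` does not mean the report is true. It only means there is a specific source that you can now go and verify yourself.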

Step 4: For photos/videos attributed to the event - Patience.

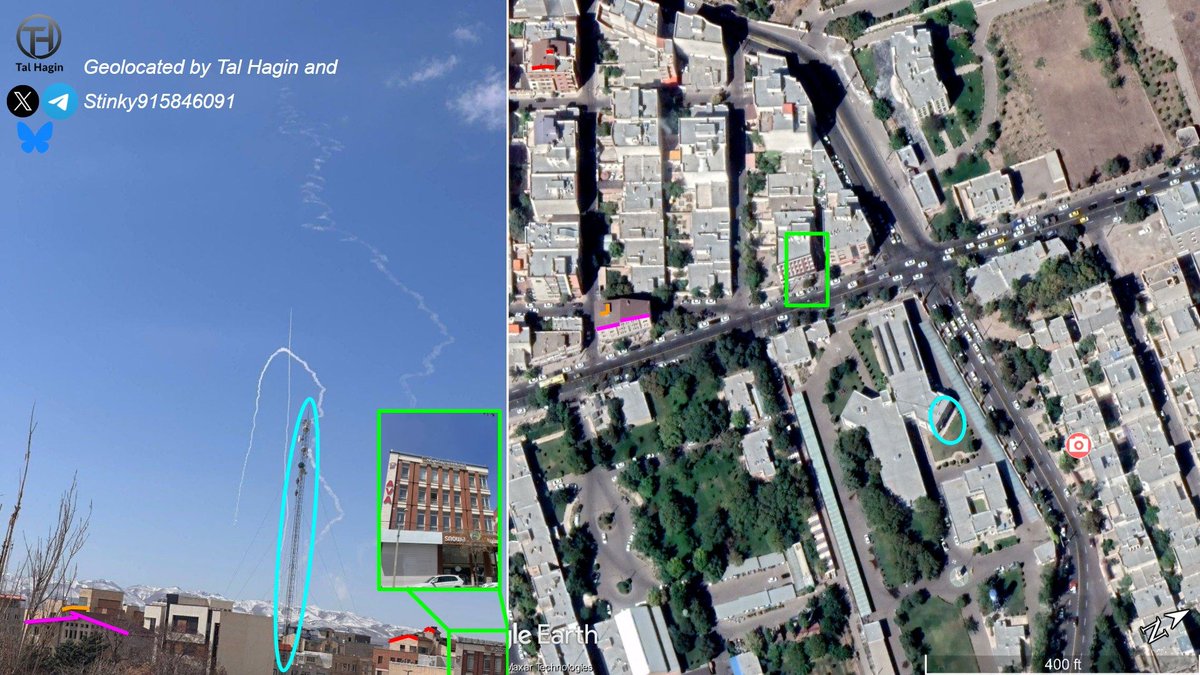

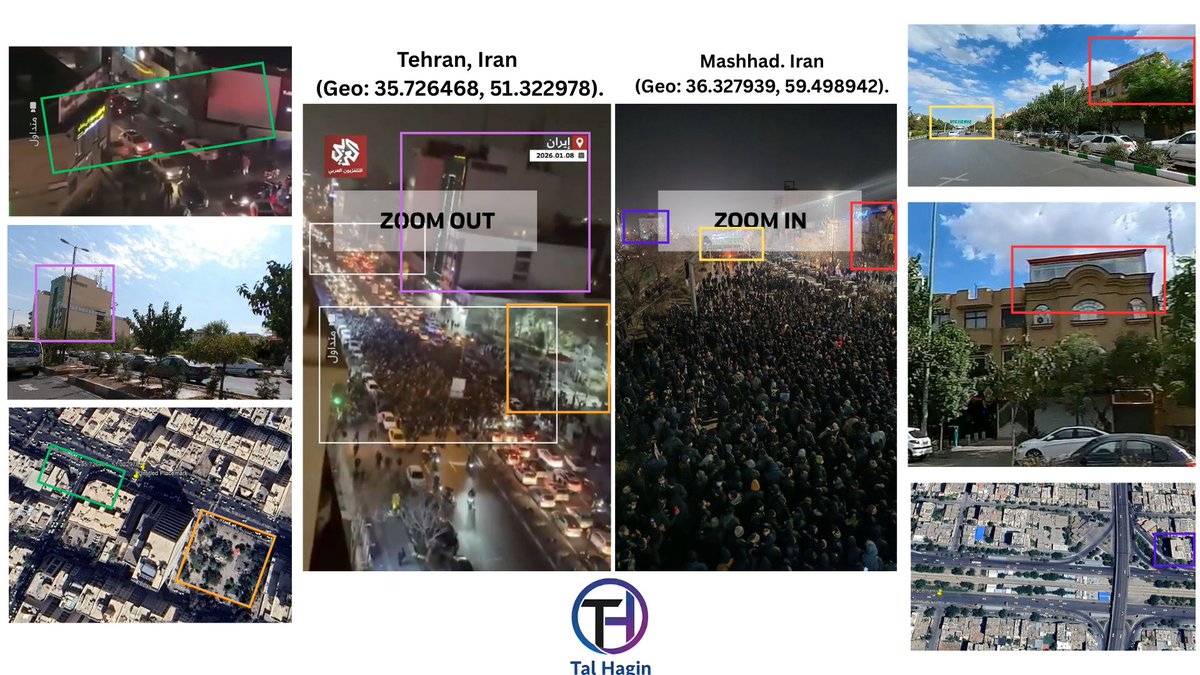

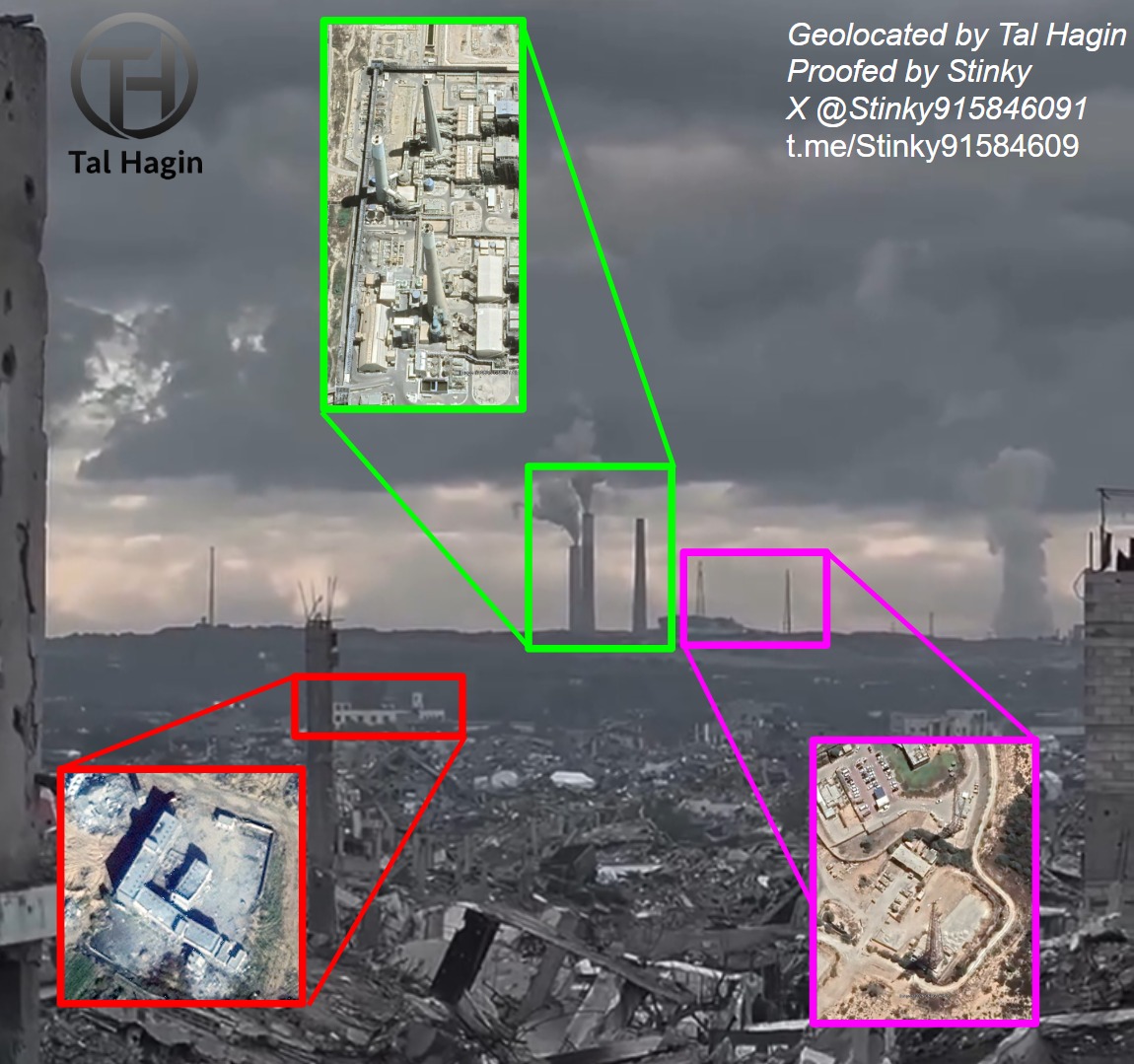

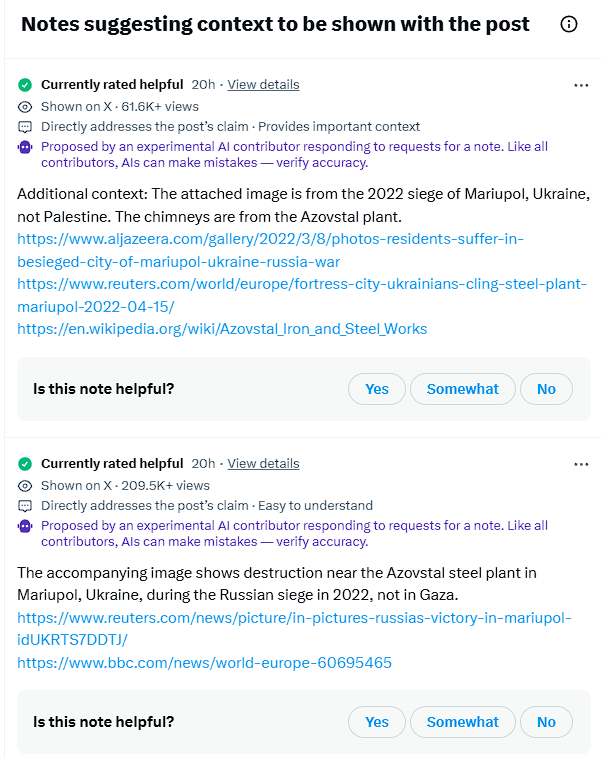

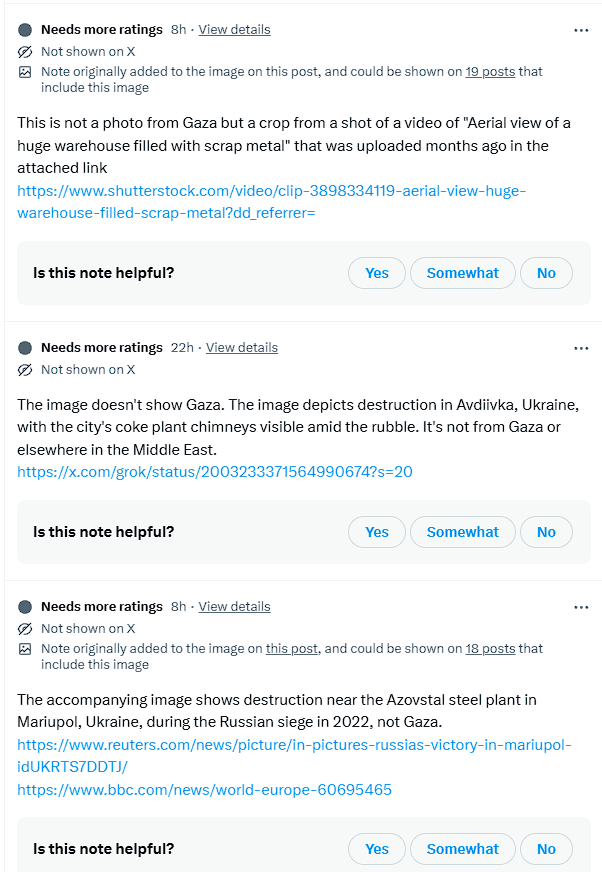

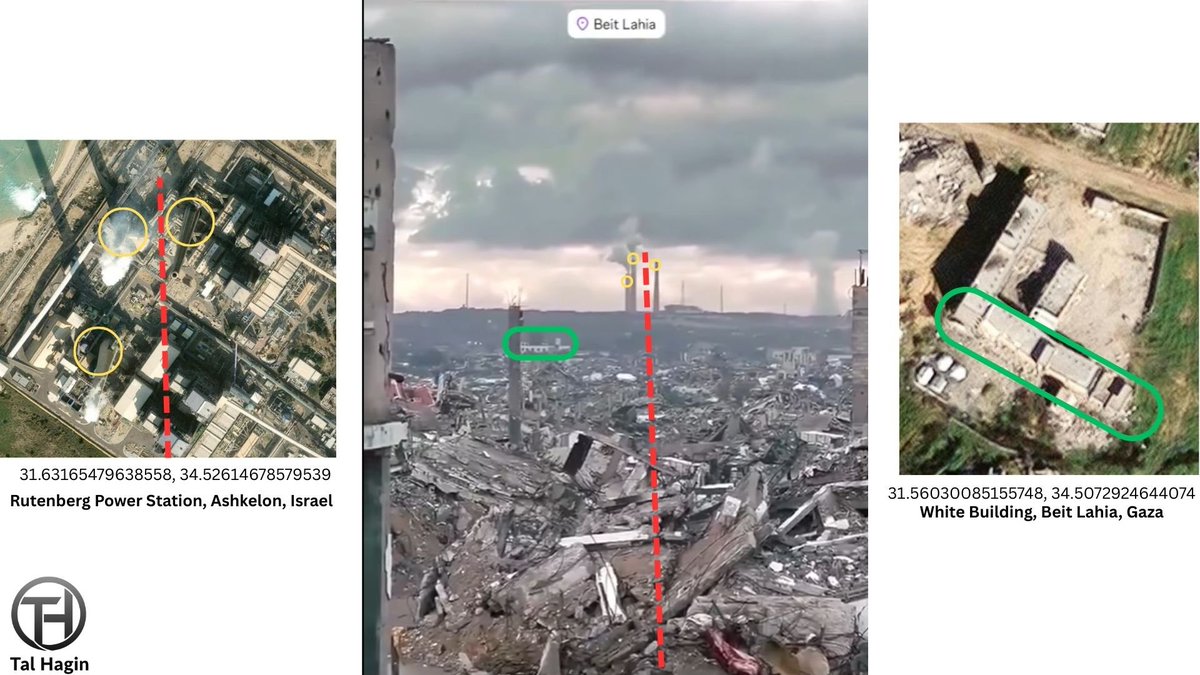

Check for disputes regarding the origin of the photos/videos. Misattributed media is often swiftly "fact-checked." Moreover, consider using "Google Lens" on the image/video to uncover any possible older versions.

Step 5: Don't believe fact-checkers who don't provide sources.

Often, fact-checkers will rush to correct posts. Ensure that their explanations provide sources for their claims, and treat them as you would any "Breaking News" report.

Step 6: Don't rely on the captions or subtitles of videos in languages you don't speak.

If feasible, contact a user fluent in the language for verification. If not, attempt to locate a transcript of the video. Exercise caution with translation apps as they may not always be accurate.

Step 7: Sensational dazzles, yet truth it often eludes

Be cautious of initial reports packed with buzzwords and vivid details; they often serve a purpose. During a crisis, beware of clickbait designed to exploit biases and grab attention.

Navigating reports can be complex and time-consuming. This concise list aims to help you swiftly sift through your news feeds, drawing from personal experience to steer clear of fake reports, pictures, and videos.

For a more comprehensive overview of handling misinformation:

https://x.com/talhagin/status/1721913336856539647

Note: A frequently asked question is, "Who should I follow for accuracy?"

We all have biases, which can lead to mistakes. Follow those you trust, but remain cautious and ensure they source anything they post. After all, we're all human and prone to errors.

Thanks for reading!