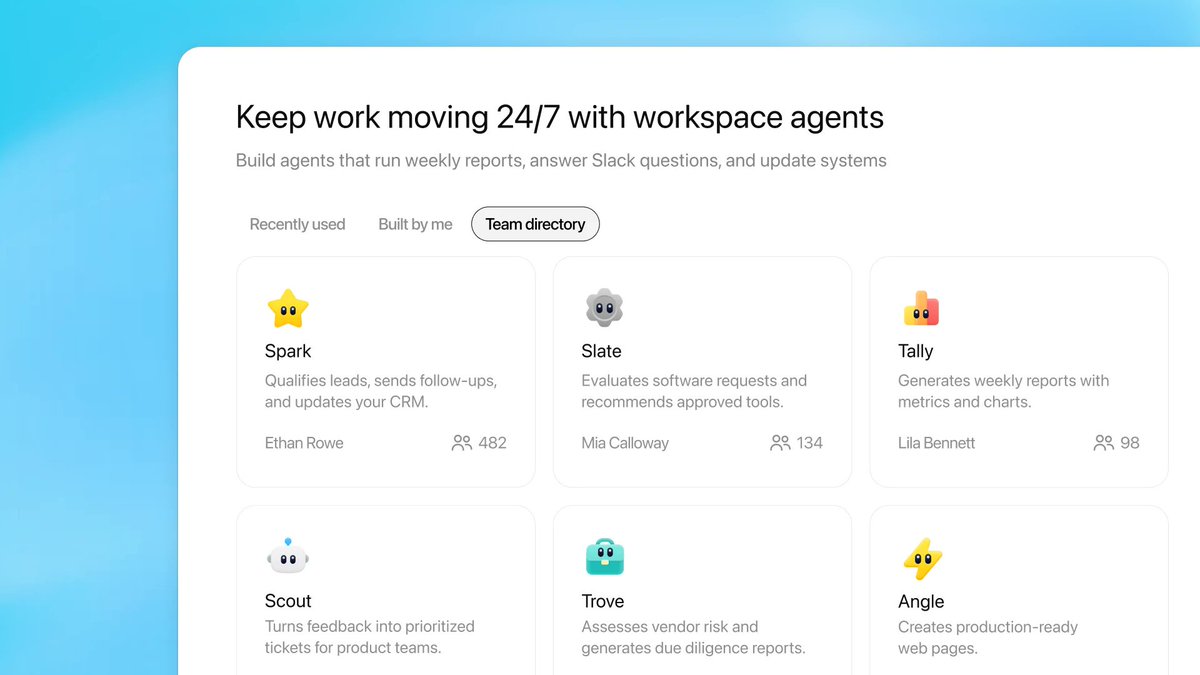

Say hello to GPT-4o, our new flagship model which can reason across audio, vision, and text in real time:

Text and image input rolling out today in API and ChatGPT with voice and video in the coming weeks. openai.com/index/hello-gp…

Two GPT-4os interacting and singing

Realtime translation with GPT-4o

Lullabies and whispers with GPT-4o

Happy birthday with GPT-4o

@BeMyEyes with GPT-4o

Dad jokes with GPT-4o

Meeting AI with GPT-4o

Sarcasm with GPT-4o

Math problems with GPT-4o and @khanacademy

Point and learn Spanish with GPT-4o

Rock, Paper, Scissors with GPT-4o

Harmonizing with two GPT-4os

Interview prep with GPT-4o

Fast counting with GPT-4o

Dog meets GPT-4o

Live demo of GPT-4o realtime conversational speech

Live demo of GPT-4o voice variation

Live demo of GPT-4o vision

Live demo of coding assistance and desktop app

Live audience request for GPT-4o realtime translation

Live audience request for GPT-4o vision capabilities

All users will start to get access to GPT-4o today. In coming weeks we’ll begin rolling out the new voice and vision capabilities we demo’d today to ChatGPT Plus.
