⚡️ Excited to share that I am starting an AI+Education company called Eureka Labs.

The announcement:

---

We are Eureka Labs and we are building a new kind of school that is AI native.

How can we approach an ideal experience for learning something new? For example, in the case of physics one could imagine working through very high quality course materials together with Feynman, who is there to guide you every step of the way. Unfortunately, subject matter experts who are deeply passionate, great at teaching, infinitely patient and fluent in all of the world's languages are also very scarce and cannot personally tutor all 8 billion of us on demand.

However, with recent progress in generative AI, this learning experience feels tractable. The teacher still designs the course materials, but they are supported, leveraged and scaled with an AI Teaching Assistant who is optimized to help guide the students through them. This Teacher + AI symbiosis could run an entire curriculum of courses on a common platform. If we are successful, it will be easy for anyone to learn anything, expanding education in both reach (a large number of people learning something) and extent (any one person learning a large amount of subjects, beyond what may be possible today unassisted).

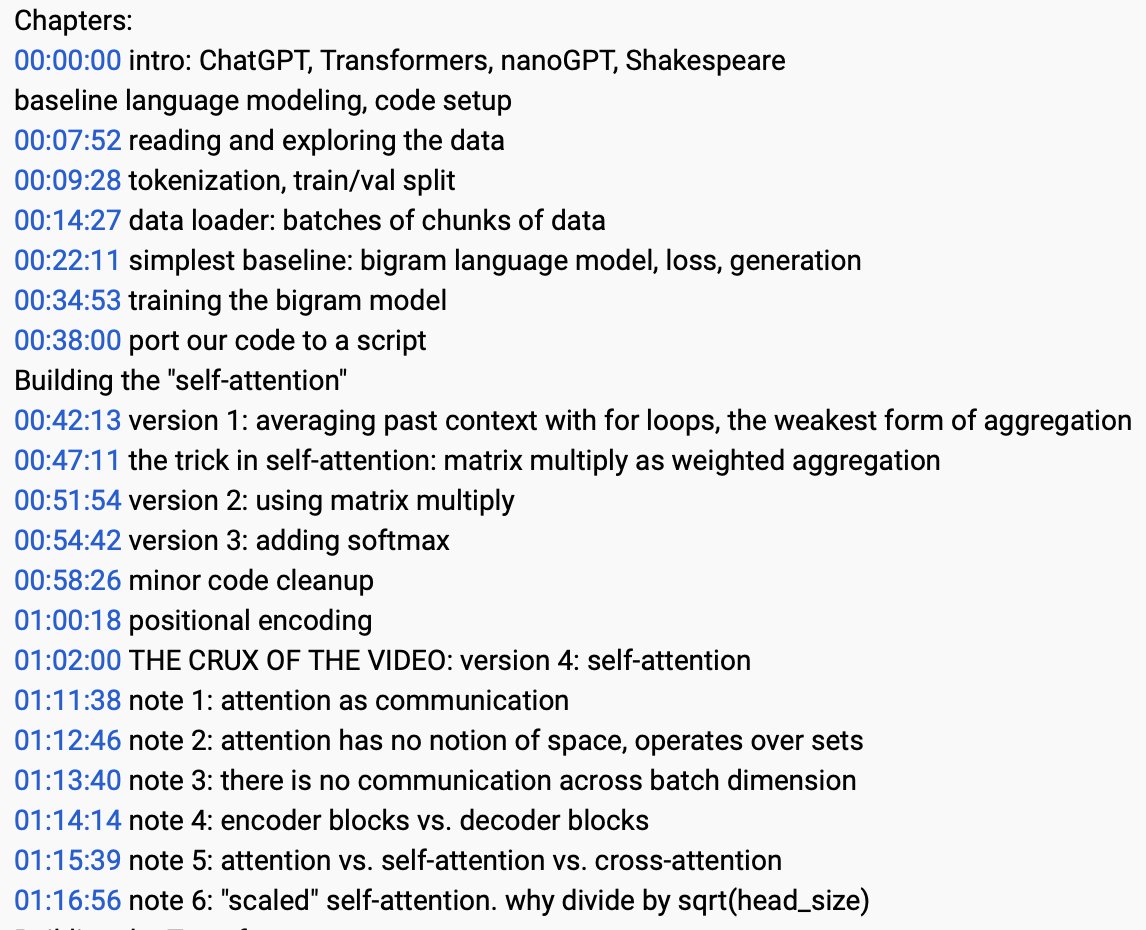

Our first product will be the world's obviously best AI course, LLM101n. This is an undergraduate-level class that guides the student through training their own AI, very similar to a smaller version of the AI Teaching Assistant itself. The course materials will be available online, but we also plan to run both digital and physical cohorts of people going through it together.

Today, we are heads down building LLM101n, but we look forward to a future where AI is a key technology for increasing human potential. What would you like to learn?

---

@EurekaLabsAI is the culmination of my passion in both AI and education over ~2 decades. My interest in education took me from YouTube tutorials on Rubik's cubes to starting CS231n at Stanford, to my more recent Zero-to-Hero AI series. While my work in AI took me from academic research at Stanford to real-world products at Tesla and AGI research at OpenAI. All of my work combining the two so far has only been part-time, as side quests to my "real job", so I am quite excited to dive in and build something great, professionally and full-time.

It's still early days but I wanted to announce the company so that I can build publicly instead of keeping a secret that isn't. Outbound links with a bit more info in the reply!

The announcement:

---

We are Eureka Labs and we are building a new kind of school that is AI native.

How can we approach an ideal experience for learning something new? For example, in the case of physics one could imagine working through very high quality course materials together with Feynman, who is there to guide you every step of the way. Unfortunately, subject matter experts who are deeply passionate, great at teaching, infinitely patient and fluent in all of the world's languages are also very scarce and cannot personally tutor all 8 billion of us on demand.

However, with recent progress in generative AI, this learning experience feels tractable. The teacher still designs the course materials, but they are supported, leveraged and scaled with an AI Teaching Assistant who is optimized to help guide the students through them. This Teacher + AI symbiosis could run an entire curriculum of courses on a common platform. If we are successful, it will be easy for anyone to learn anything, expanding education in both reach (a large number of people learning something) and extent (any one person learning a large amount of subjects, beyond what may be possible today unassisted).

Our first product will be the world's obviously best AI course, LLM101n. This is an undergraduate-level class that guides the student through training their own AI, very similar to a smaller version of the AI Teaching Assistant itself. The course materials will be available online, but we also plan to run both digital and physical cohorts of people going through it together.

Today, we are heads down building LLM101n, but we look forward to a future where AI is a key technology for increasing human potential. What would you like to learn?

---

@EurekaLabsAI is the culmination of my passion in both AI and education over ~2 decades. My interest in education took me from YouTube tutorials on Rubik's cubes to starting CS231n at Stanford, to my more recent Zero-to-Hero AI series. While my work in AI took me from academic research at Stanford to real-world products at Tesla and AGI research at OpenAI. All of my work combining the two so far has only been part-time, as side quests to my "real job", so I am quite excited to dive in and build something great, professionally and full-time.

It's still early days but I wanted to announce the company so that I can build publicly instead of keeping a secret that isn't. Outbound links with a bit more info in the reply!

• • •

Missing some Tweet in this thread? You can try to

force a refresh