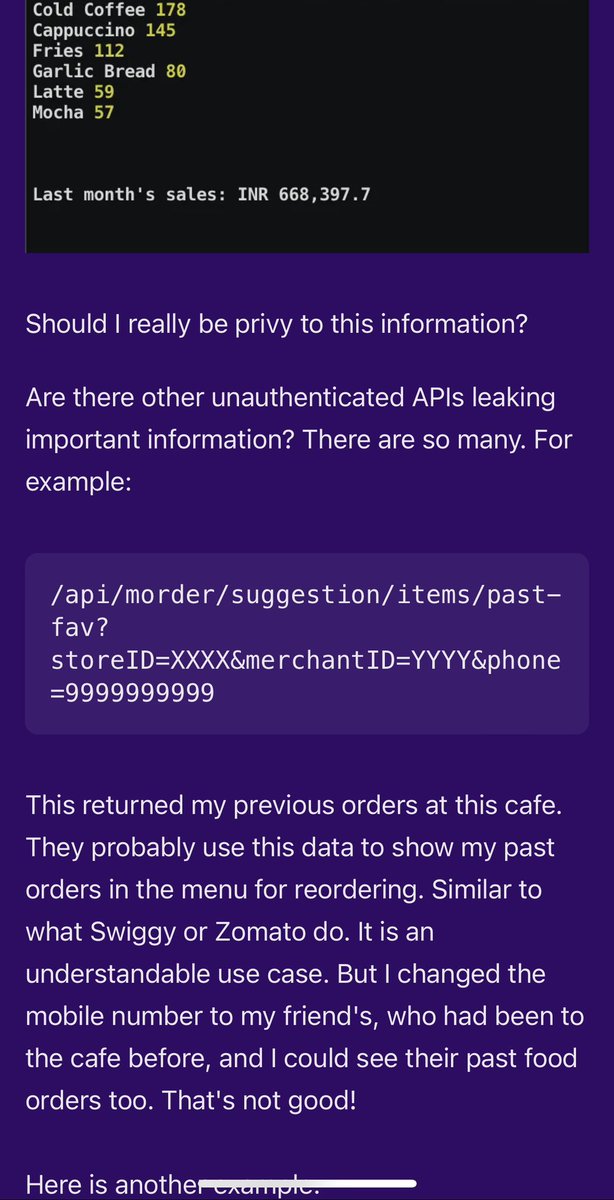

Indian startup Dotpe, that raised ~$100M to build point of sale systems for restaurants left their entire API fully public.

A clever hacker found out the most ordered thing at every Social in India.

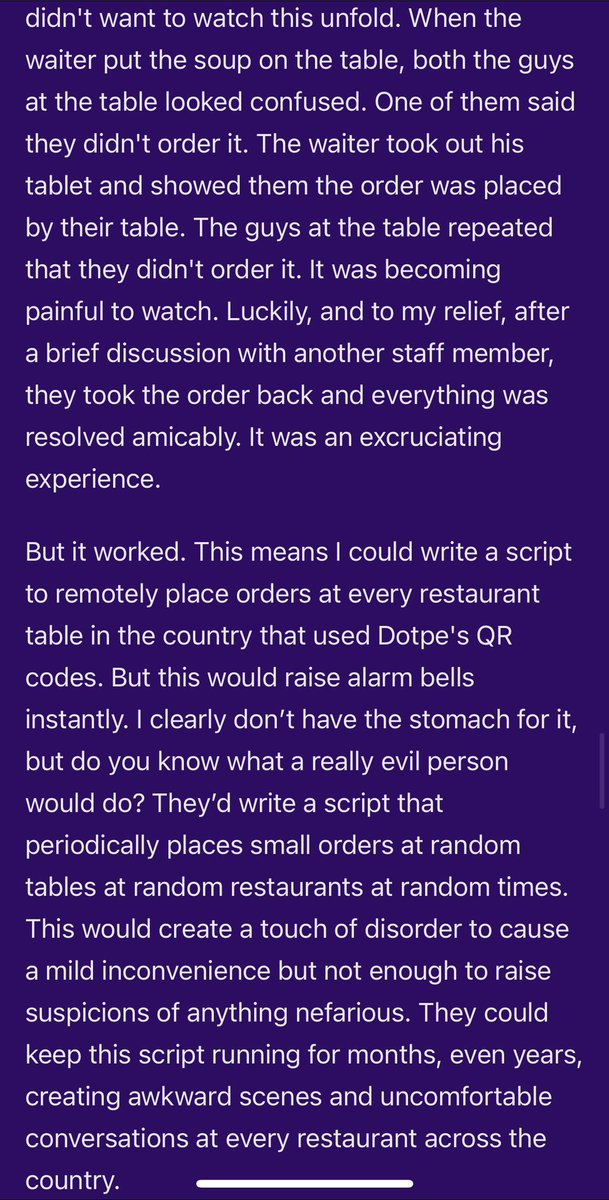

And did a prank to order what he wanted for a person next to him!

Zero auth.

A clever hacker found out the most ordered thing at every Social in India.

And did a prank to order what he wanted for a person next to him!

Zero auth.

Cached source: web.archive.org/web/2024092308…

• • •

Missing some Tweet in this thread? You can try to

force a refresh