excited to finally share on arxiv what we've known for a while now:

All Embedding Models Learn The Same Thing

embeddings from different models are SO similar that we can map between them based on structure alone. without *any* paired data

feels like magic, but it's real:🧵

https://twitter.com/jxmnop/status/1893736235262251289

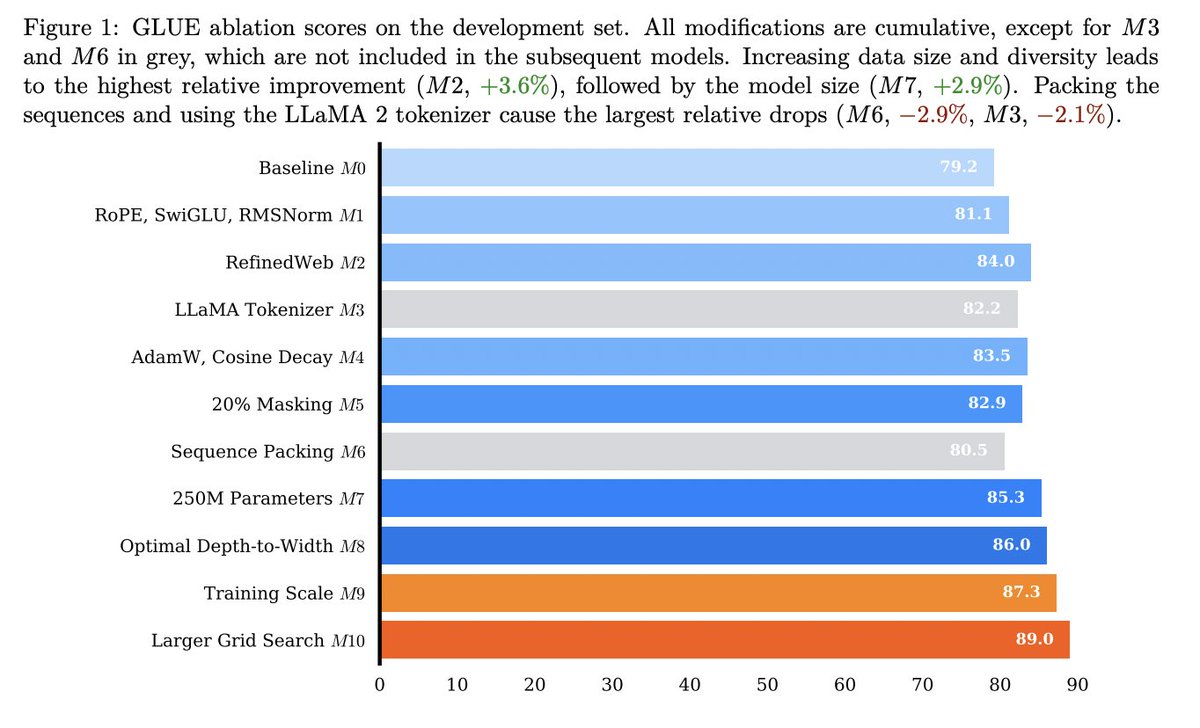

a lot of past research (relative representations, The Platonic Representation Hypothesis, comparison metrics like CCA, SVCCA, ...) has argued that different models, once they reach a certain scale, learn the same thing

this has been shown using various metrics of comparison
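
for a concrete feel of what one of these comparison metrics does, here's a minimal sketch of a CCA-style score in numpy. it is an illustration, not the code from any of the works above: the function name and the toy data are made up for this example, and note that this kind of metric assumes row i of both matrices comes from the *same* input (i.e. paired data).

```python
import numpy as np

def mean_canonical_correlation(X, Y):
    """Mean canonical correlation between two embedding matrices.

    X: (n, d1) and Y: (n, d2), where row i of each embeds the same input.
    Assumes n is larger than d1 and d2 and both matrices have full column rank.
    """
    # center each feature
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    # orthonormal bases for the column spaces of X and Y
    Qx, _ = np.linalg.qr(X)
    Qy, _ = np.linalg.qr(Y)
    # singular values of Qx^T Qy are the canonical correlations
    s = np.linalg.svd(Qx.T @ Qy, compute_uv=False)
    return float(np.clip(s, 0.0, 1.0).mean())

# toy data (hypothetical): Y_related is an invertible linear map of X,
# Y_unrelated is independent noise
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 8))
Y_related = X @ rng.normal(size=(8, 8))
Y_unrelated = rng.normal(size=(500, 8))

score_related = mean_canonical_correlation(X, Y_related)
score_unrelated = mean_canonical_correlation(X, Y_unrelated)
```

the score is close to 1 when one space is a linear re-encoding of the other, and much lower for unrelated spaces.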

we take things a step further. if models E1 and E2 are learning 'similar' representations, what if we were able to actually align them?

and can we do this with just random samples from E1 and E2, by matching their structure?

we take inspiration from 2017 GAN papers that aligned pictures of horses and zebras...

so yes, we're using a GAN. adversarial loss (to align representations) and cycle consistency loss (to make sure we align the *right* representations)
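
to make the two losses concrete, here's a rough numpy sketch of how they could be computed on unpaired batches. everything in it is an assumption for illustration and not the architecture from the paper: the translators `F` and `G` are plain linear maps, the discriminator is a single logistic unit on E2's space only (CycleGAN-style setups normally use one per space), and the generator uses the common non-saturating GAN loss.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def discriminator(v, w, b):
    """Probability that each row of v is a real E2 embedding (toy logistic unit)."""
    return sigmoid(v @ w + b)

def adversarial_losses(x1, x2, F, w, b, eps=1e-12):
    """Discriminator loss and non-saturating generator loss on E2's space."""
    fake2 = x1 @ F  # E1 embeddings translated into E2's space
    p_real = discriminator(x2, w, b)
    p_fake = discriminator(fake2, w, b)
    d_loss = -np.mean(np.log(p_real + eps)) - np.mean(np.log(1.0 - p_fake + eps))
    g_loss = -np.mean(np.log(p_fake + eps))
    return float(d_loss), float(g_loss)

def cycle_consistency_loss(x1, x2, F, G):
    """Translating to the other space and back should return the original vector."""
    x1_cycle = (x1 @ F) @ G  # E1 -> E2 -> E1
    x2_cycle = (x2 @ G) @ F  # E2 -> E1 -> E2
    return float(np.mean((x1_cycle - x1) ** 2) + np.mean((x2_cycle - x2) ** 2))

# toy unpaired batches: rows of x1 and x2 do NOT correspond to the same texts
rng = np.random.default_rng(0)
d, n = 4, 32
x1 = rng.normal(size=(n, d))  # stand-in for samples from E1
x2 = rng.normal(size=(n, d))  # stand-in for samples from E2

F = rng.normal(size=(d, d)) * 0.1  # hypothetical translator E1 -> E2
G = rng.normal(size=(d, d)) * 0.1  # hypothetical translator E2 -> E1
w, b = rng.normal(size=d) * 0.1, 0.0

d_loss, g_loss = adversarial_losses(x1, x2, F, w, b)
cyc_loss = cycle_consistency_loss(x1, x2, F, G)

# sanity check: if G exactly inverts F (here an orthogonal map and its transpose),
# the cycle loss is zero up to floating point error
Q, _ = np.linalg.qr(rng.normal(size=(d, d)))
cyc_loss_inverse = cycle_consistency_loss(x1, x2, Q, Q.T)
```

the adversarial term only asks that translated vectors look like real E2 embeddings as a set. the cycle term is what penalizes translators that are not consistent with each other, since going there and back has to recover the original vector.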

and it works. here's embeddings from GTR (a T5-based model) and GTE (a BERT-based model), after training our GAN for 50 epochs:

theoretically, the implications of this seem big. we call it The Strong Platonic Representation Hypothesis:

models of a certain scale learn representations that are so similar that we can learn to translate between them, using *no* paired data (just our version of CycleGAN)

and practically, this is bad for vector databases. this means that even if you fine-tune your own model, and keep the model secret, someone with access to the embeddings alone can decode the text behind them

embedding inversion without model access 😬

this was joint work with my friends @rishi_d_jha, collin zhang, and vitaly shmatikov at Cornell Tech

our paper "Harnessing the Universal Geometry of Embeddings" is on arXiv today: arxiv.org/abs/2505.12540

check out Rishi's thread for more info, and follow him for additional research updates!

https://x.com/rishi_d_jha/status/1925212069168910340
