4 stages of training LLMs from scratch, clearly explained (with visuals):

Today, we're covering the 4 stages of building LLMs from scratch and making them useful for real-world use cases.

We'll cover:

- Pre-training

- Instruction fine-tuning

- Preference fine-tuning

- Reasoning fine-tuning

The visual summarizes these techniques.

Let's dive in!

0️⃣ Randomly initialized LLM

At this point, the model knows nothing.

You ask it “What is an LLM?” and get gibberish like “try peter hand and hello 448Sn”.

It hasn’t seen any data yet and possesses just random weights.

Check this 👇

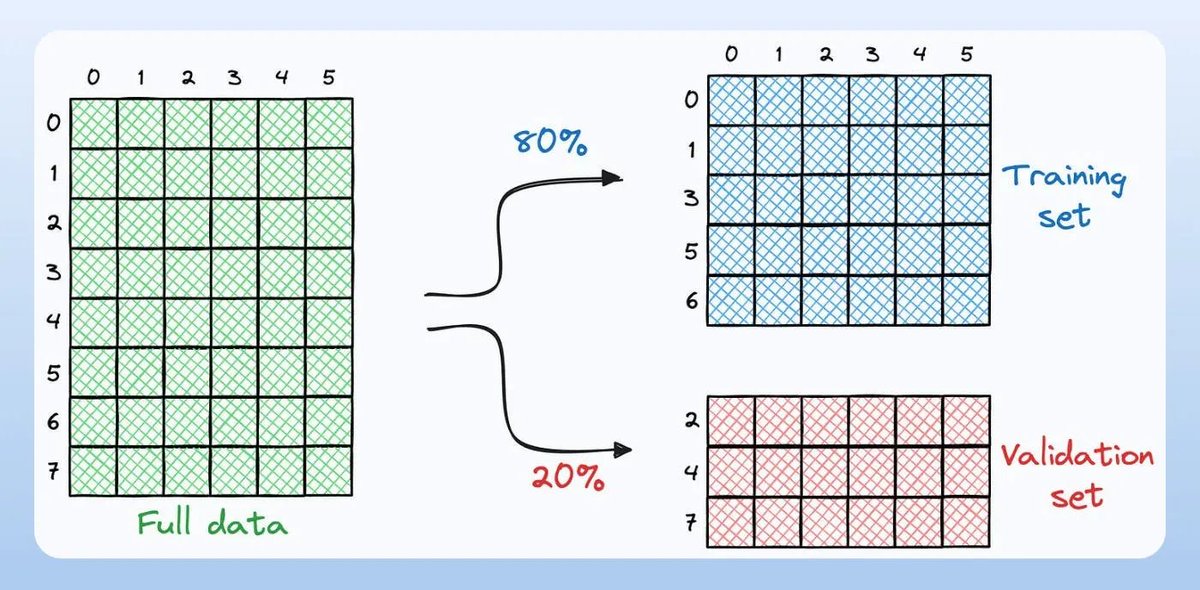
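To see why random weights give gibberish, here's a minimal sketch in plain Python. It assumes a toy 10-word vocabulary and near-zero random logits (both made up for illustration, not taken from any real model). With logits that close together, every token is almost equally likely, so the sampled text is noise.

```python
import math
import random

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical toy vocabulary, chosen only for illustration.
VOCAB = ["try", "peter", "hand", "and", "hello", "448Sn", "LLM", "is", "a", "model"]

def random_logits(rng, vocab_size, scale=0.02):
    """Stand-in for an untrained model: scores are small random numbers."""
    return [rng.gauss(0.0, scale) for _ in range(vocab_size)]

def sample_tokens(rng, n_tokens):
    """Sample tokens one at a time from the (nearly uniform) distribution."""
    out = []
    for _ in range(n_tokens):
        probs = softmax(random_logits(rng, len(VOCAB)))
        out.append(rng.choices(VOCAB, weights=probs, k=1)[0])
    return out

rng = random.Random(0)
probs = softmax(random_logits(rng, len(VOCAB)))
print([round(p, 3) for p in probs])      # every entry is close to 1 / len(VOCAB)
print(" ".join(sample_tokens(rng, 6)))   # an arbitrary string of words
```

Nothing in the prompt influences the output here, which is the point: an untrained model has no learned link between input and output.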

1️⃣ Pre-training

This stage teaches the LLM the basics of language by training it on massive corpora to predict the next token. This way, it absorbs grammar, world facts, etc.

But it’s not good at conversation because when prompted, it just continues the text.

Check this 👇

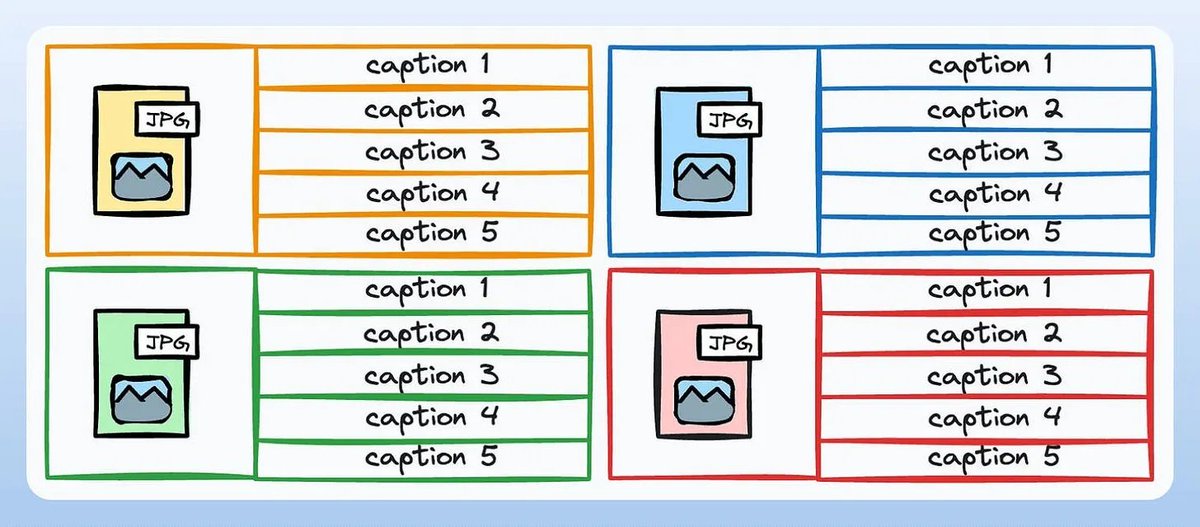
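The next-token objective can be sketched in a few lines. This is a toy illustration, assuming a 4-token vocabulary and hand-written logits instead of a real network: the loss is the average cross-entropy between the model's prediction at position t and the actual token at position t+1.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_loss(logits_per_position, token_ids):
    """Average cross-entropy of predicting token t+1 from position t."""
    targets = token_ids[1:]  # the "label" at each position is simply the next token
    losses = []
    for logits, target in zip(logits_per_position, targets):
        probs = softmax(logits)
        losses.append(-math.log(probs[target]))
    return sum(losses) / len(losses)

vocab_size = 4
tokens = [0, 2, 1, 3]  # a toy "sentence" of token ids

# An untrained model: identical scores for every token.
uniform = [[0.0] * vocab_size for _ in range(len(tokens) - 1)]
loss_uniform = next_token_loss(uniform, tokens)

# A better model: a high score on the token that actually comes next.
confident = [
    [5.0 if j == tokens[t + 1] else 0.0 for j in range(vocab_size)]
    for t in range(len(tokens) - 1)
]
loss_confident = next_token_loss(confident, tokens)

print(loss_uniform, loss_confident)
```

The uniform model's loss equals log(vocab_size), and the loss drops as more probability lands on the true next token. Pre-training is this same idea repeated over massive corpora, with no human labels needed.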

2️⃣ Instruction fine-tuning

To make it conversational, we do Instruction Fine-tuning by training on instruction-response pairs. This helps it learn how to follow prompts and format replies.

Now it can:

- Answer questions

- Summarize content

- Write code, etc.

Check this 👇

At this point, we have likely:

- Utilized the entire raw internet archive and knowledge.

- Exhausted the budget for human-labeled instruction-response data.

So what can we do to further improve the model?

We enter into the territory of Reinforcement Learning (RL).

Let's learn next 👇

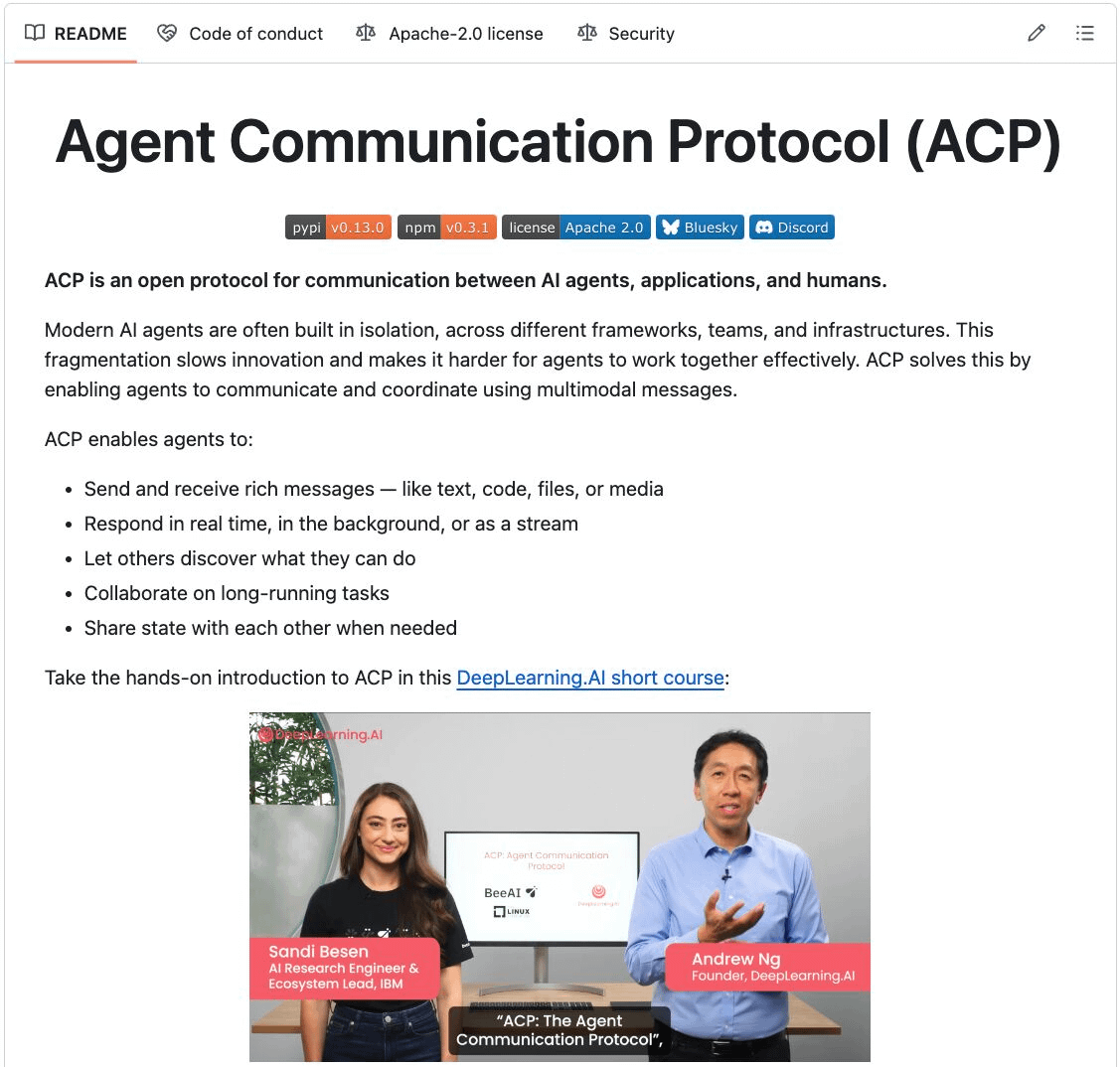
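Going back to instruction fine-tuning for a moment, here's a rough sketch of how one instruction-response pair could be turned into a training example. The prompt template, the whitespace "tokenizer", and the `<eos>` marker are all assumptions for illustration. Masking the prompt so that only response tokens count toward the loss is a common choice, not the only one.

```python
# Hypothetical prompt template; real projects each define their own format.
TEMPLATE = "### Instruction:\n{instruction}\n\n### Response:\n"

def build_example(instruction, response):
    """Return (tokens, loss_mask) for one instruction-response pair."""
    prompt = TEMPLATE.format(instruction=instruction)
    prompt_tokens = prompt.split()                   # stand-in for a real tokenizer
    response_tokens = response.split() + ["<eos>"]   # end marker teaches the model to stop
    tokens = prompt_tokens + response_tokens
    # 0 = ignore in the loss (prompt), 1 = train on it (response)
    loss_mask = [0] * len(prompt_tokens) + [1] * len(response_tokens)
    return tokens, loss_mask

tokens, loss_mask = build_example(
    "What is an LLM?",
    "An LLM is a large language model.",
)
for tok, m in zip(tokens, loss_mask):
    print(m, tok)
```

The training objective is still next-token prediction. What changes is the data: the model now sees prompts followed by well-formed replies, so it learns to answer instead of just continuing the text.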

3️⃣ Preference fine-tuning (PFT)

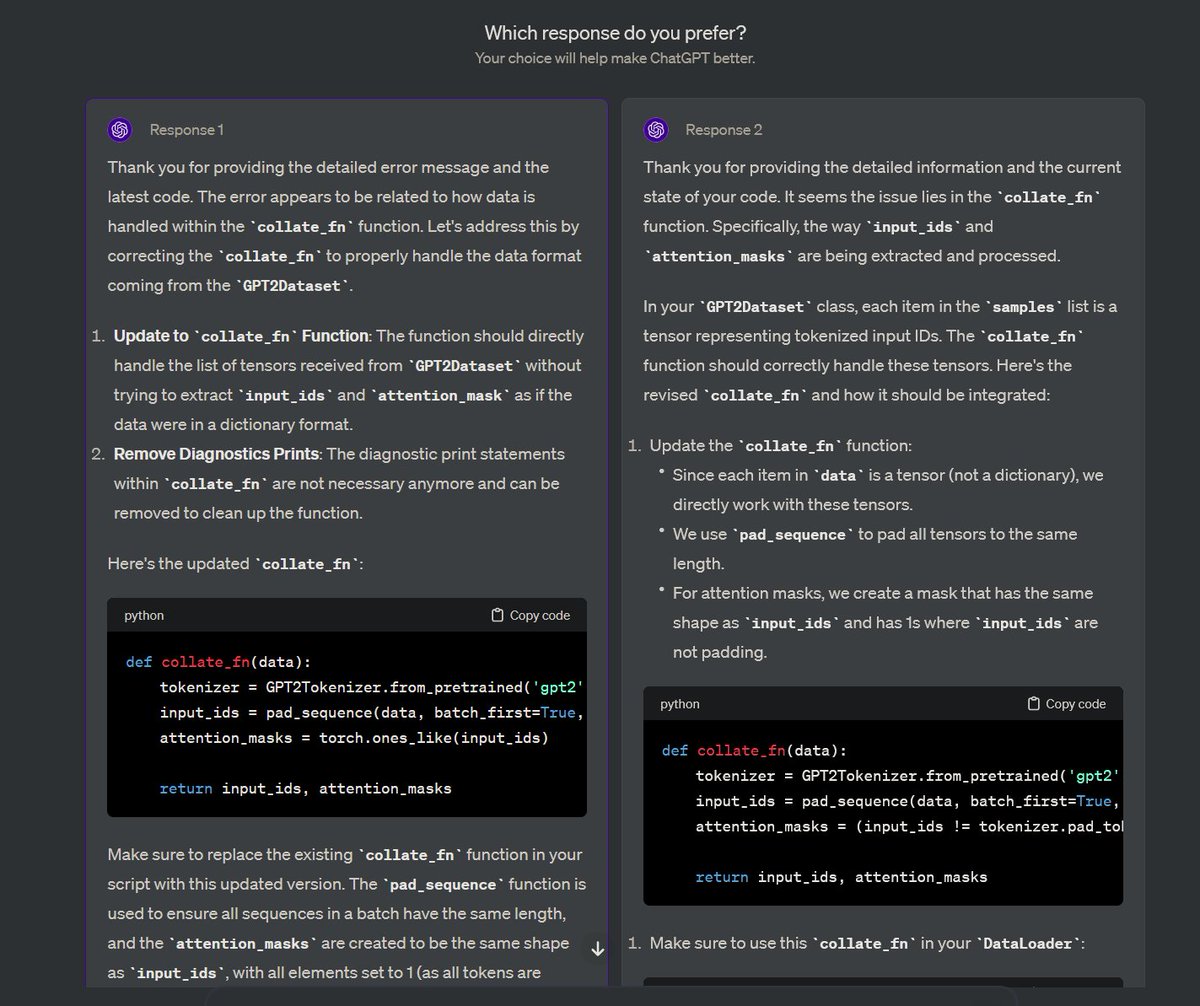

You must have seen this screen on ChatGPT where it asks: Which response do you prefer?

That’s not just for feedback; it’s valuable human preference data.

OpenAI uses it to fine-tune their models via preference fine-tuning.

Check this 👇

In PFT:

The user chooses between 2 responses to produce human preference data.

A reward model is then trained to predict human preference and the LLM is updated using RL.

Check this 👇

The above process is called RLHF (Reinforcement Learning from Human Feedback), and a commonly used algorithm for updating the model weights is PPO (Proximal Policy Optimization).

It teaches the LLM to align with humans even when there’s no "correct" answer.

But we can improve the LLM even more.

Let's learn next👇

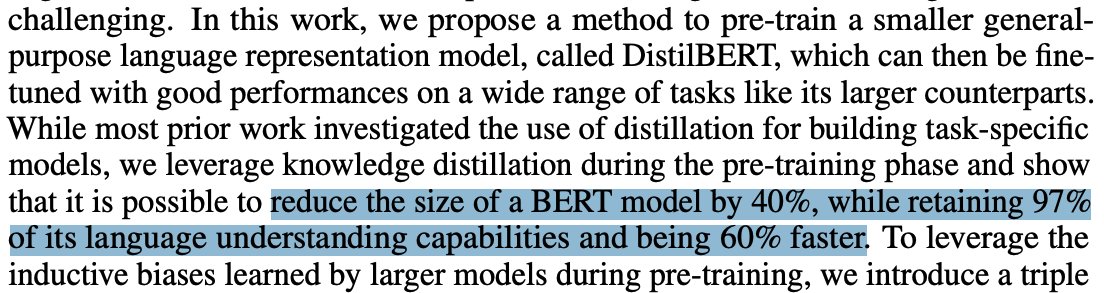
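Before moving on, here's a minimal sketch of the reward-model step in PFT. It assumes the common pairwise (Bradley-Terry style) loss, where the reward model is pushed to score the chosen response above the rejected one. The reward values below are made-up numbers, and the RL update itself is not shown.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected))."""
    diff = reward_chosen - reward_rejected
    # Numerically stable form of log(1 + exp(-diff)).
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))

# Reward model agrees with the human: small loss.
print(preference_loss(2.0, 0.0))
# Reward model has no opinion: loss is log(2).
print(preference_loss(0.0, 0.0))
# Reward model disagrees with the human: large loss.
print(preference_loss(0.0, 2.0))
```

Once the reward model predicts human preferences well, its score serves as the reward signal for the RL step that updates the LLM.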

4️⃣ Reasoning fine-tuning

In reasoning tasks (maths, logic, etc.), there's usually just one correct response and a defined series of steps to obtain the answer.

So we don’t need human preferences, and we can use correctness as the signal.

This is called reasoning fine-tuning👇

Steps:

- The model generates an answer to a prompt.

- The answer is compared to the known correct answer.

- Based on the correctness, we assign a reward.

This is called Reinforcement Learning with Verifiable Rewards.
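The three steps above can be sketched as a tiny reward function. This assumes the model is asked to end its output with an `Answer:` marker and that an exact string match is good enough. Both are illustrative choices; real setups often use more forgiving parsers or checkers.

```python
def extract_final_answer(completion):
    """Return the text after the last 'Answer:' marker, or None if it's missing."""
    marker = "Answer:"  # hypothetical output format
    if marker not in completion:
        return None
    return completion.rsplit(marker, 1)[1].strip()

def correctness_reward(completion, reference):
    """1.0 if the extracted answer matches the known correct answer, else 0.0."""
    answer = extract_final_answer(completion)
    if answer is None:
        return 0.0
    return 1.0 if answer == reference.strip() else 0.0

print(correctness_reward("2 + 2 is 4. Answer: 4", "4"))
print(correctness_reward("2 + 2 is 5. Answer: 5", "4"))
print(correctness_reward("I think it is four.", "4"))
```

No human preference data and no learned reward model are involved here. The reward comes from a check anyone can rerun.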

GRPO (Group Relative Policy Optimization), introduced by DeepSeek, is a popular algorithm for this.
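The core idea in GRPO is to sample a group of answers for the same prompt and score each one relative to the group. Here's a rough sketch of just that normalization step, with made-up 0/1 rewards. The clipped policy update and the KL penalty are left out, and using the population standard deviation with a small `eps` is an assumption of this sketch.

```python
import statistics

def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against the mean and spread of its own group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

# Four sampled answers to one prompt: two correct (1.0), two wrong (0.0).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)  # correct answers get positive values, wrong ones negative

# If every answer gets the same reward, there's nothing to learn from the group.
print(group_relative_advantages([1.0, 1.0, 1.0, 1.0]))
```

Because the baseline is the group's own average, no separate value model is needed. Answers that beat their siblings are reinforced, and the rest are pushed down.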

Check this👇

Those were the 4 stages of training an LLM from scratch.

- Start with a randomly initialized model.

- Pre-train it on large-scale corpora.

- Use instruction fine-tuning to make it follow commands.

- Use preference & reasoning fine-tuning to sharpen responses.

Check this 👇

If you found it insightful, reshare it with your network.

Find me → @_avichawla

Every day, I share tutorials and insights on DS, ML, LLMs, and RAGs.

https://twitter.com/1175166450832687104/status/1947184607277019582
