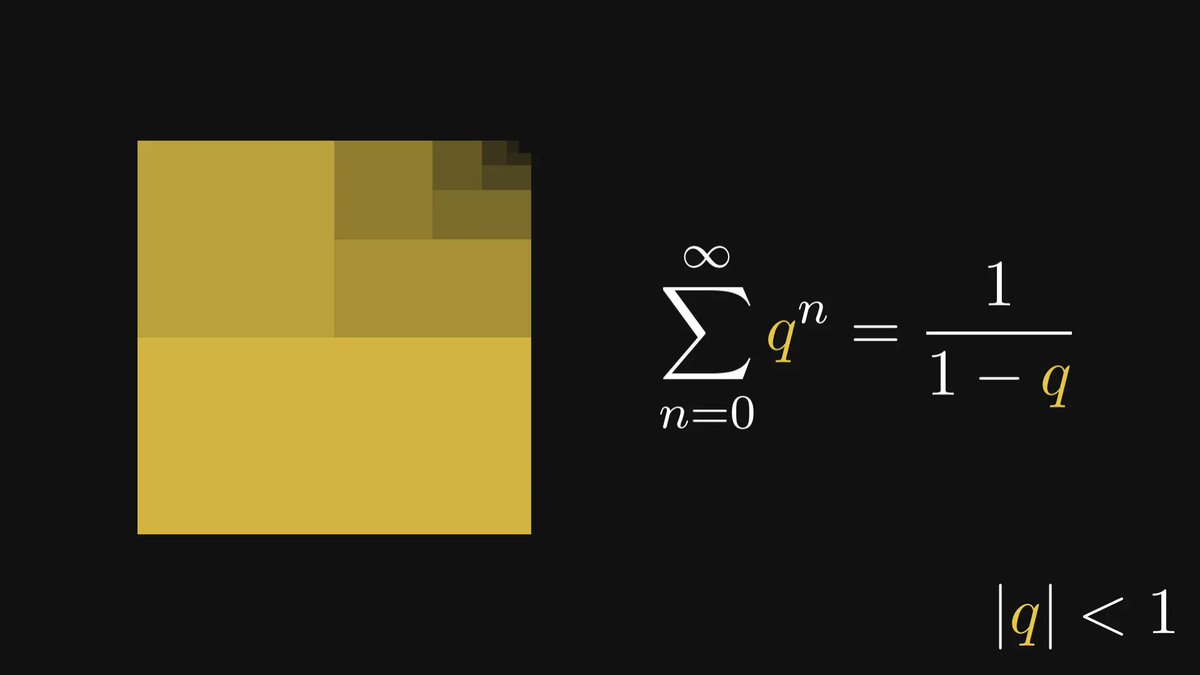

One of my favorite formulas is the closed form of the geometric series.

I am amazed by its ubiquity: whether we are solving basic problems or pushing the boundaries of science, the geometric series often makes an appearance.

Here is how to derive it from first principles:

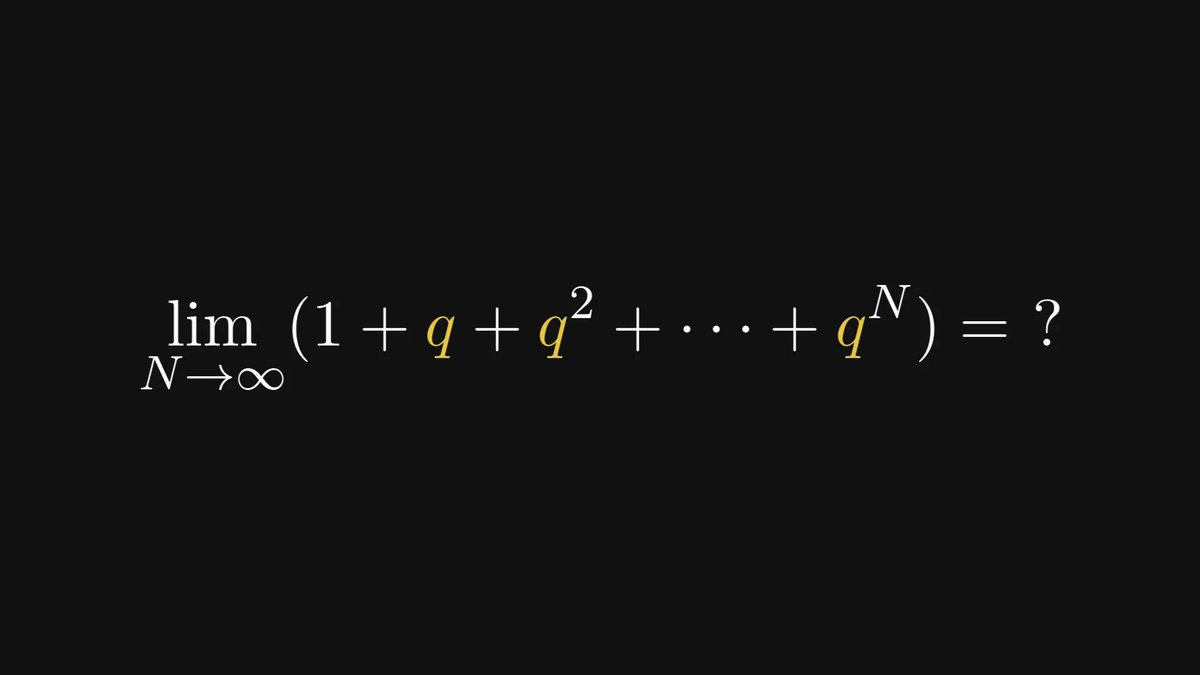

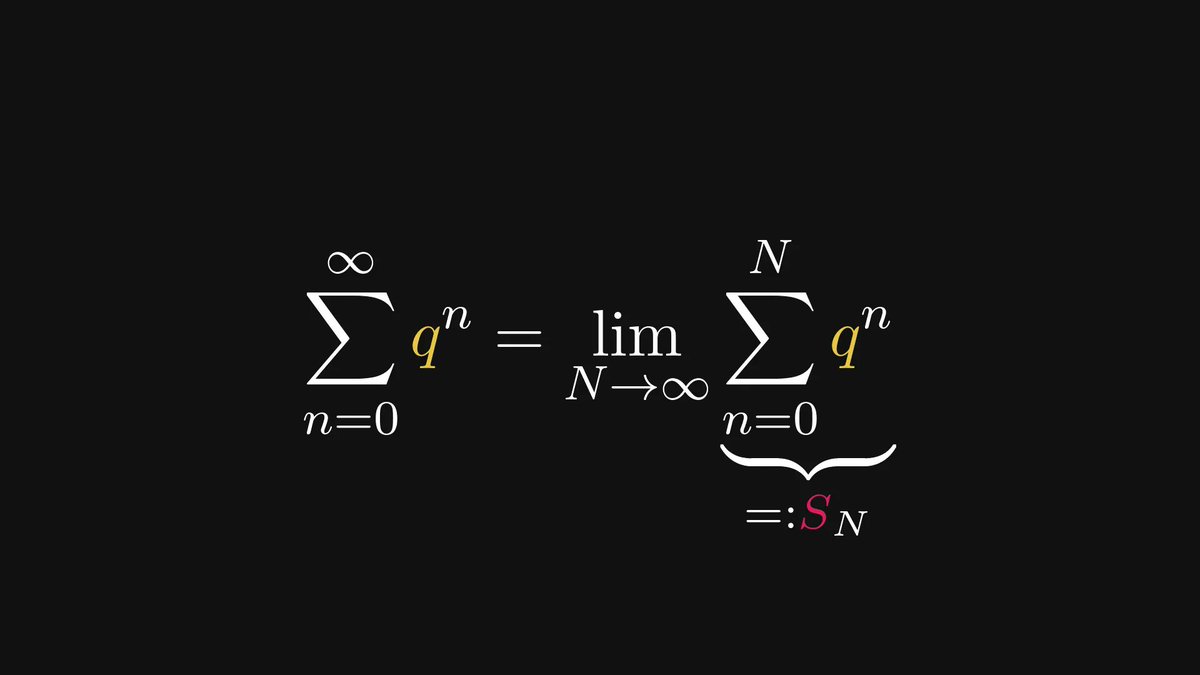

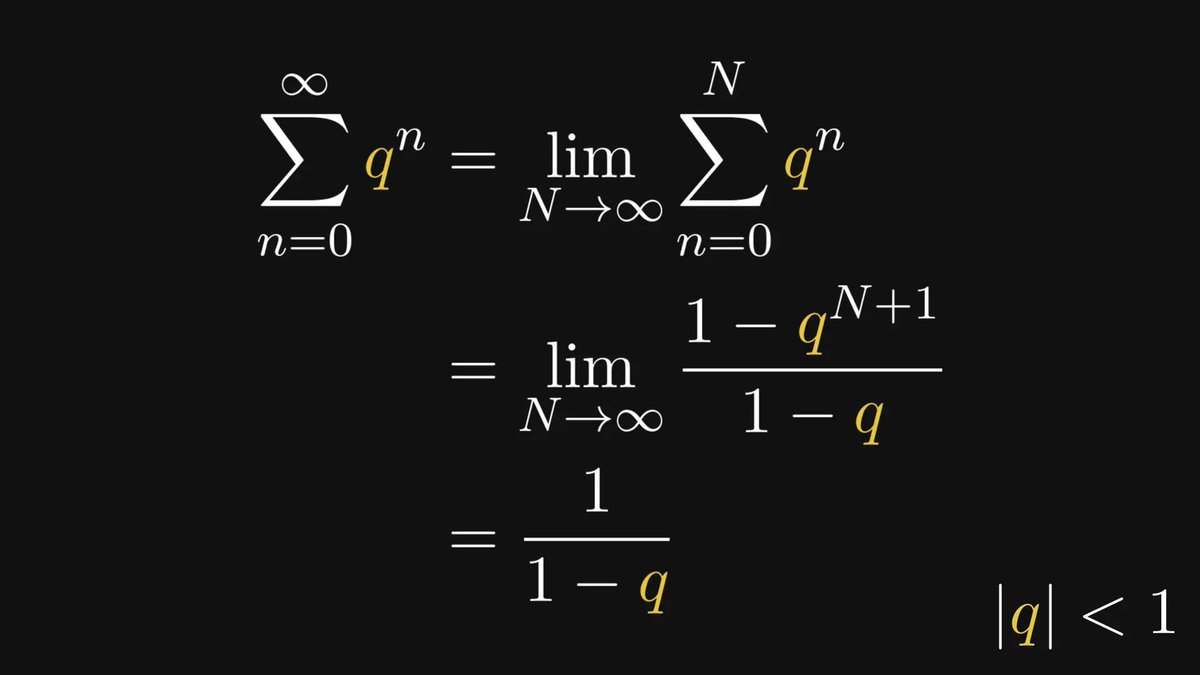

Let’s start with the basics: like any other series, the geometric series is the limit of its partial sums.

Our task is to find that limit.
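
Spelled out, this could look as follows. The symbol S_N and the convention that the sum starts at n = 0 and stops at N are assumptions made here for concreteness, since the thread's own formulas live in the images.

$$
S_N = \sum_{n=0}^{N} q^n = 1 + q + q^2 + \dots + q^N,
\qquad
\sum_{n=0}^{\infty} q^n = \lim_{N \to \infty} S_N .
$$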

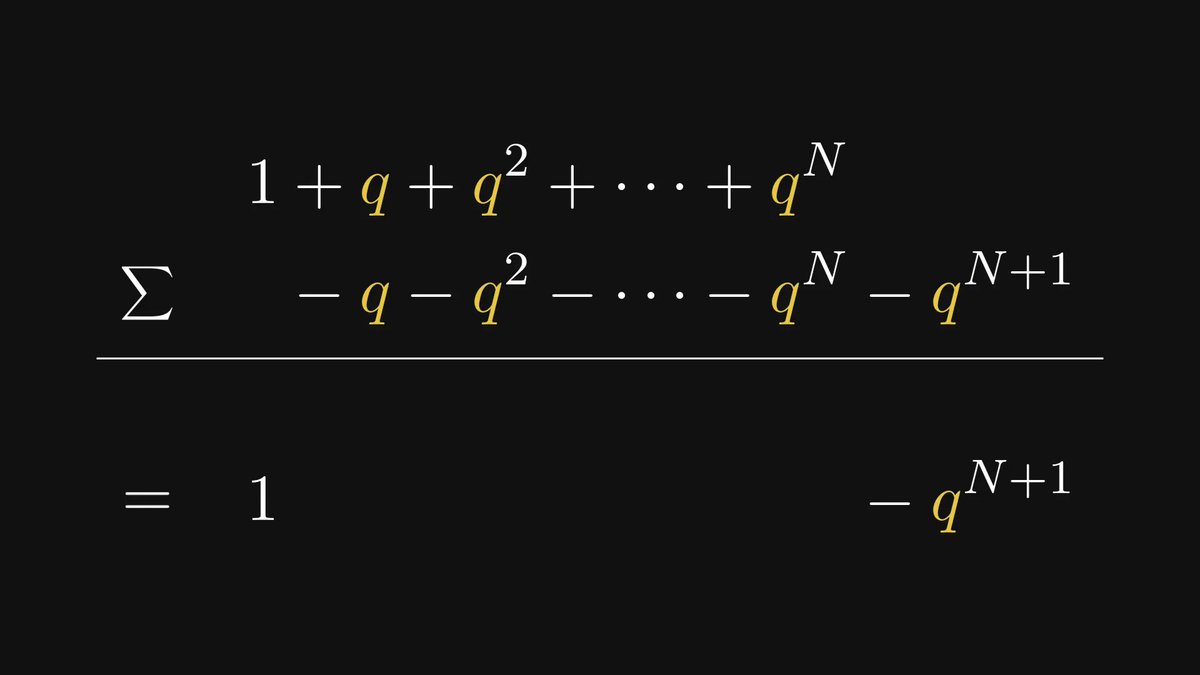

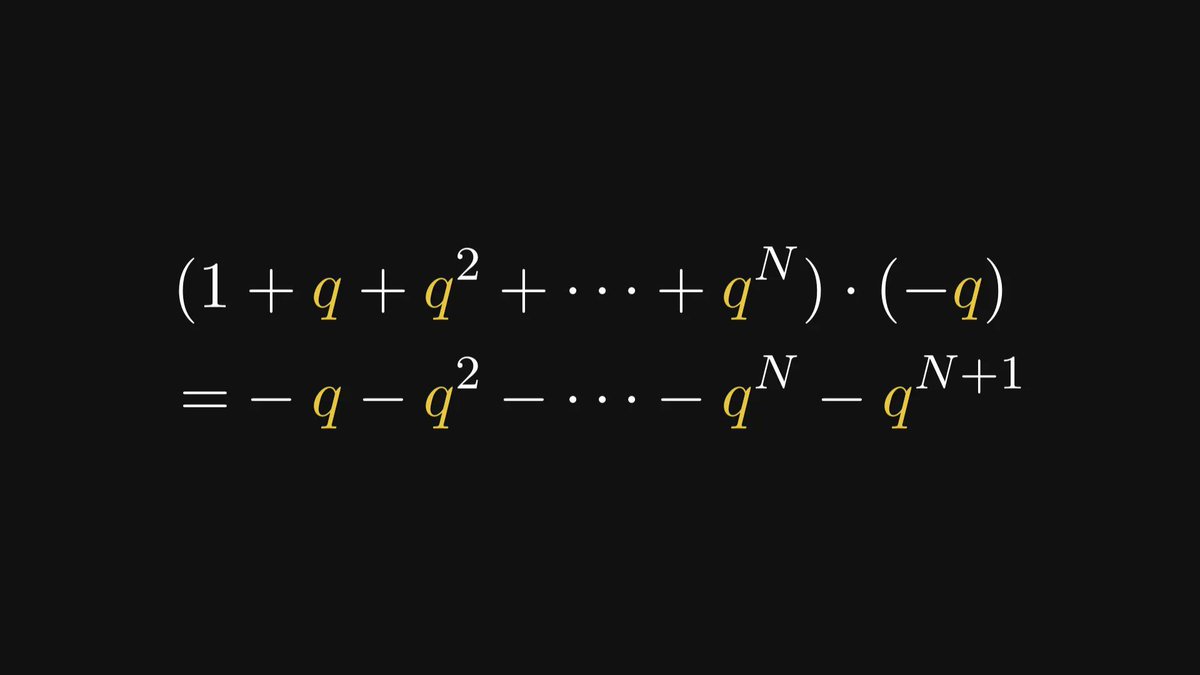

The trick is to notice that multiplying the partial sum by (-q) and adding the result back to the partial sum cancels all but two terms.

I know, this feels like pulling a rabbit from a hat.

Trust me, after you have seen this trick a few times, it’ll feel like second nature. The result is called a telescoping sum.

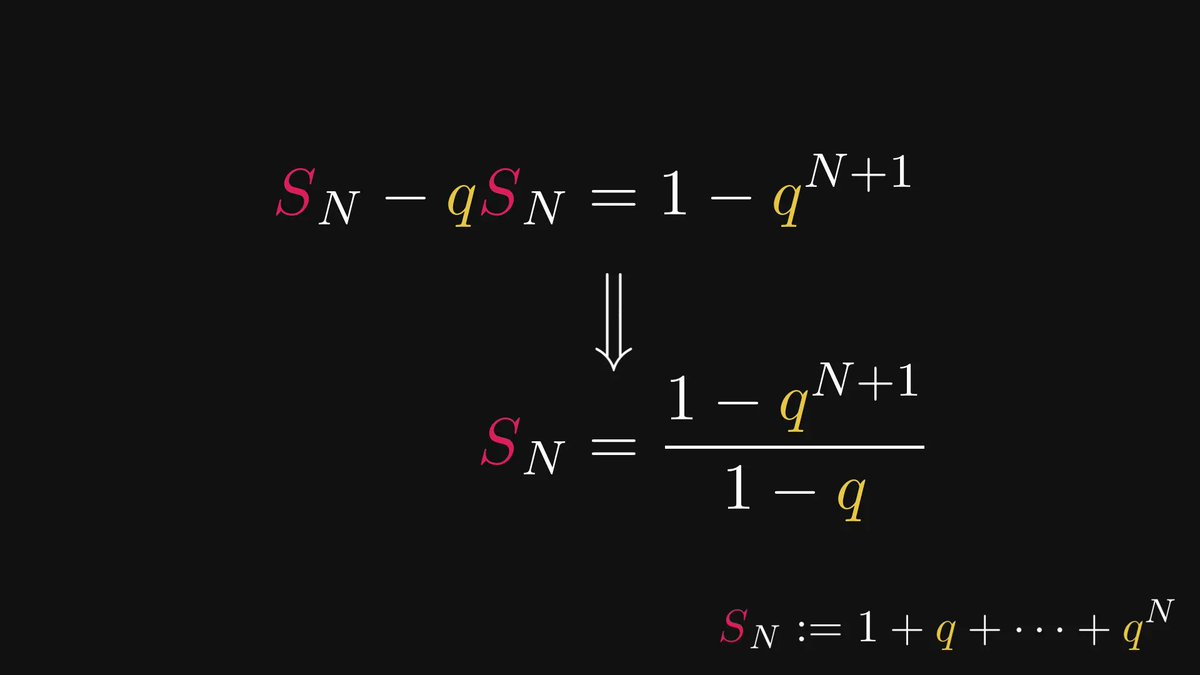

Thus, the partial sums are significantly simpler now.
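
Written out line by line, the cancellation might look like this. Here S_N denotes the partial sum 1 + q + ... + q^N, which is an assumed notation and indexing, not necessarily the one used in the images.

$$
\begin{aligned}
S_N &= 1 + q + q^2 + \dots + q^N, \\
-q\,S_N &= -q - q^2 - \dots - q^N - q^{N+1}, \\
(1 - q)\,S_N &= 1 - q^{N+1}.
\end{aligned}
$$

Every power from q to q^N appears once with each sign, so only the first and the last term survive. For q ≠ 1, dividing by (1 - q) gives

$$
S_N = \frac{1 - q^{N+1}}{1 - q}.
$$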

We are almost done.

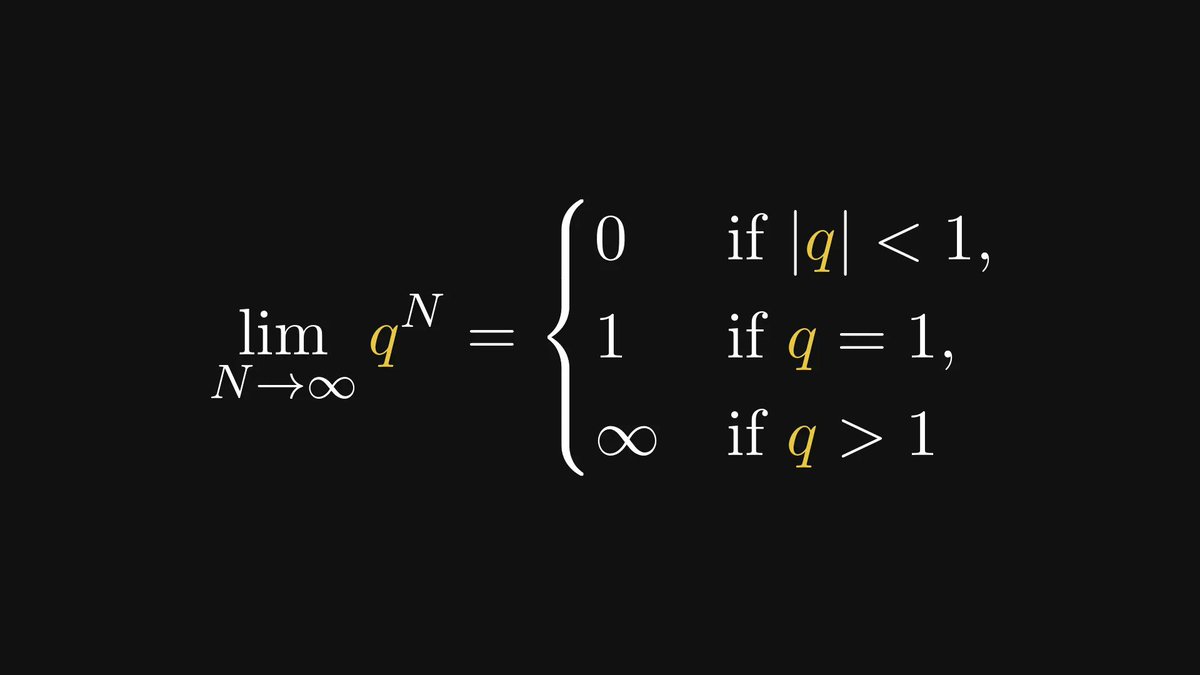

Before we study the limit of partial sums, let’s focus on qᴺ.

Its limiting behavior (as N goes to ∞) is quite simple:
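
As a sketch for real q (the case split below is the standard one, written out here because the original formula is in an image):

$$
\lim_{N \to \infty} q^N =
\begin{cases}
0 & \text{if } |q| < 1, \\
1 & \text{if } q = 1, \\
\text{does not exist} & \text{if } q \le -1 \text{ or } q > 1.
\end{cases}
$$

For q > 1 the powers grow without bound, and for q ≤ -1 they keep flipping sign without settling down.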

With this, we are ready to put all the pieces together.

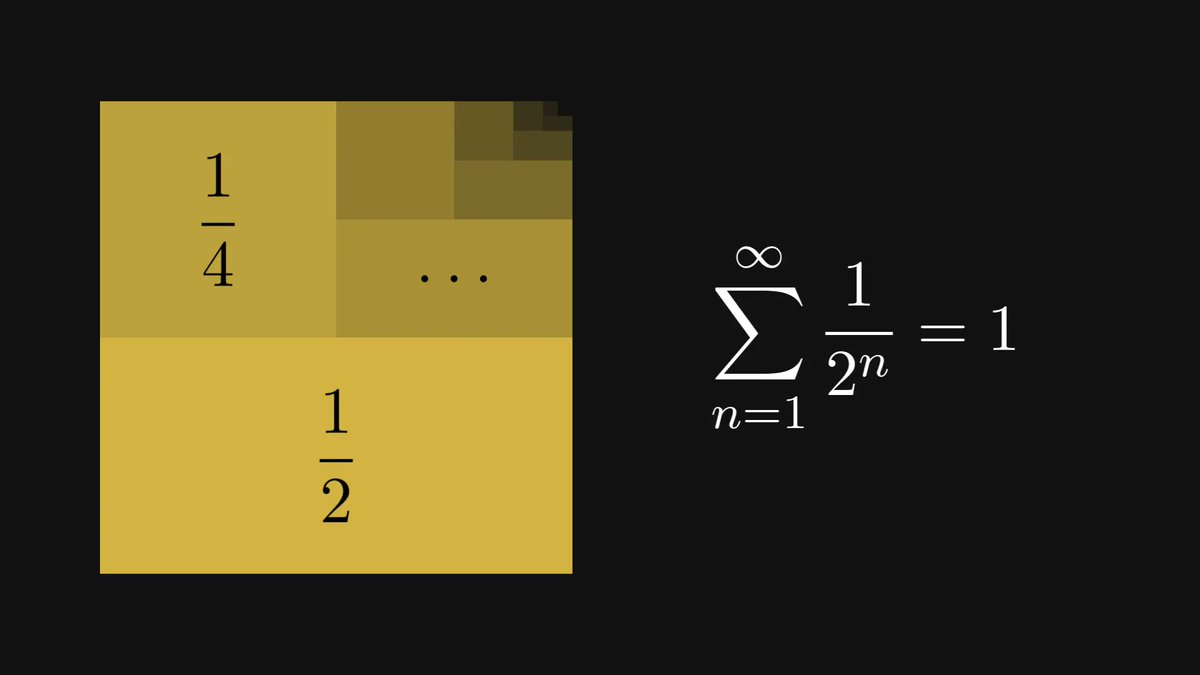

The geometric series is convergent for all |q| < 1, with a nice and simple closed-form expression as the cherry on top.
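
A quick numerical sanity check of this statement, as a minimal Python sketch. The function names and the convention that the partial sum runs from n = 0 to N are assumptions made for illustration, and the closed form being tested is 1 / (1 - q).

```python
def partial_sum(q: float, N: int) -> float:
    """Return 1 + q + q**2 + ... + q**N by direct summation."""
    return sum(q**n for n in range(N + 1))


def telescoped(q: float, N: int) -> float:
    """Same partial sum via (1 - q**(N + 1)) / (1 - q); requires q != 1."""
    return (1 - q ** (N + 1)) / (1 - q)


def closed_form(q: float) -> float:
    """Limit of the partial sums; only meaningful for |q| < 1."""
    if abs(q) >= 1:
        raise ValueError("the geometric series diverges for |q| >= 1")
    return 1 / (1 - q)


if __name__ == "__main__":
    # The gap between the partial sum and the limit should shrink as N grows.
    for q in (0.5, -0.9, 0.99):
        for N in (10, 100, 2000):
            gap = abs(partial_sum(q, N) - closed_form(q))
            print(f"q={q:>5}, N={N:>4}: |S_N - 1/(1-q)| = {gap:.3e}")
```

The closer |q| is to 1, the more terms are needed before the partial sums get near the limit, which is why q = 0.99 is included in the loop.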

Where does the geometric series appear?

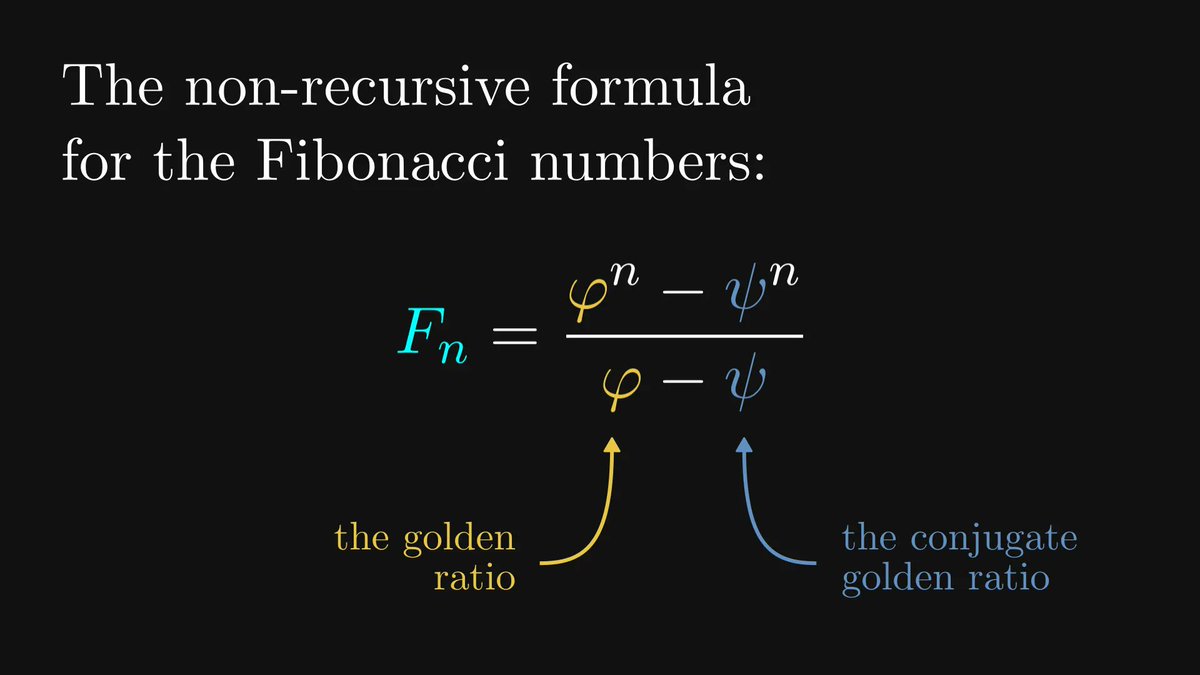

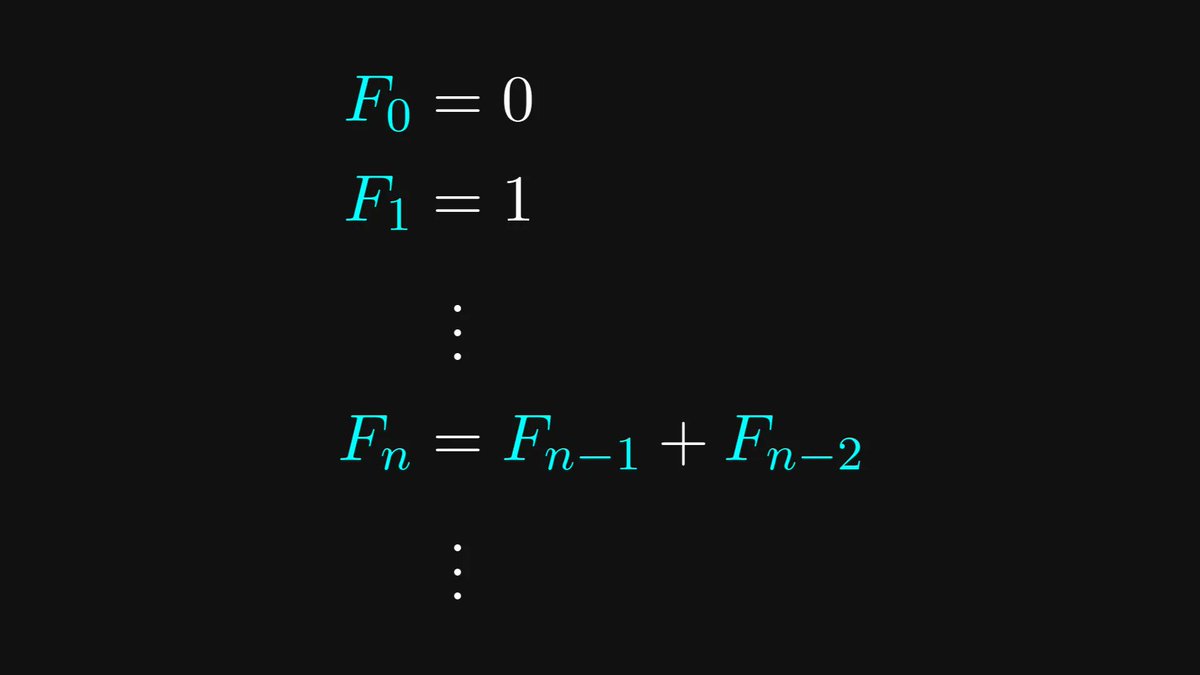

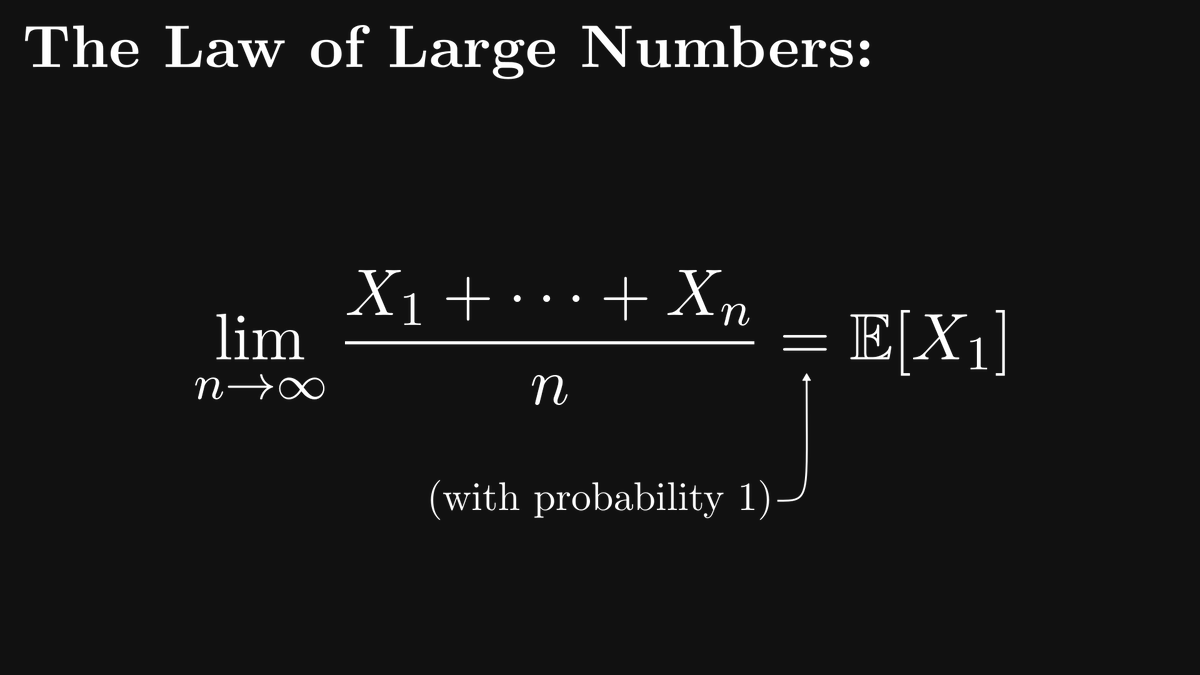

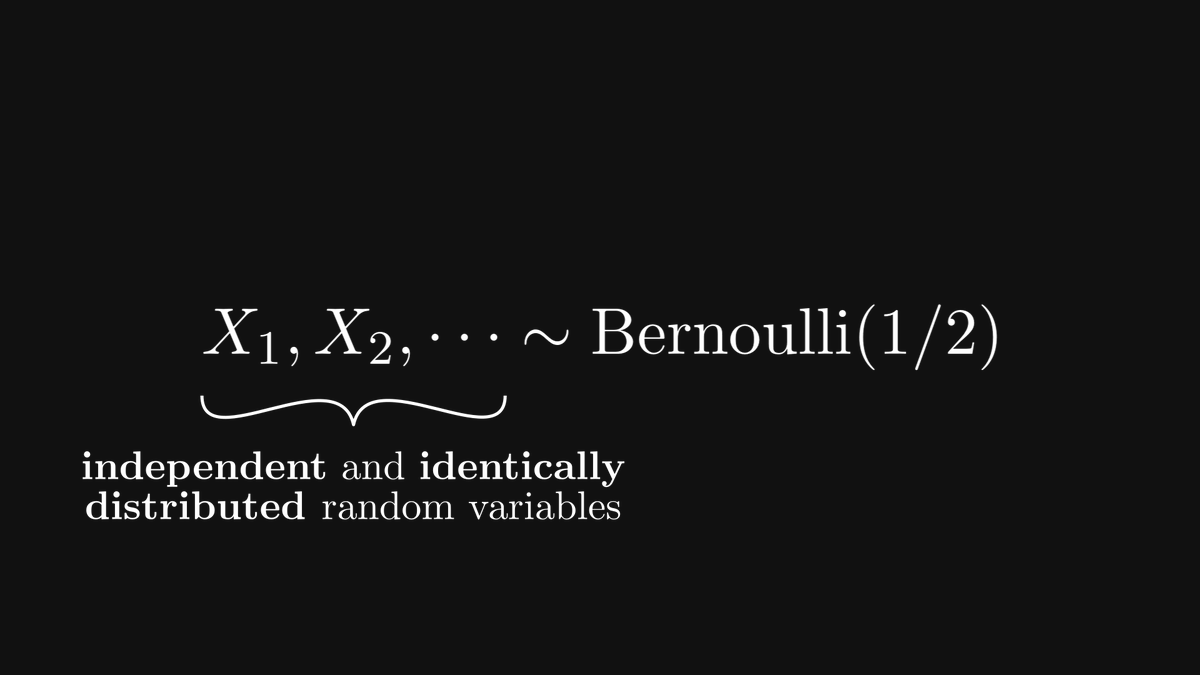

For instance, when deriving a closed-form expression for the Fibonacci numbers, or when tossing coins ad infinitum.

There are countless applications.
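
One way to read the coin-tossing example (this is an interpretation, the thread does not spell it out): toss a coin until the first heads. The probability that the first heads arrives on toss k + 1 is p·q^k, where p is the chance of heads and q = 1 - p, so the probability of seeing heads within n tosses is a geometric partial sum. The sketch below uses a hypothetical helper name and exact fractions to show that sum collapsing to 1 - q^n.

```python
from fractions import Fraction


def prob_first_heads_within(n_tosses: int, p_heads: Fraction = Fraction(1, 2)) -> Fraction:
    """P(first heads shows up on or before toss n_tosses), summed term by term."""
    q = 1 - p_heads  # probability of tails on a single toss
    # Term k is "k tails in a row, then heads": p_heads * q**k.
    return sum((p_heads * q**k for k in range(n_tosses)), Fraction(0))


if __name__ == "__main__":
    for n in (1, 2, 10, 50):
        direct = prob_first_heads_within(n)
        closed = 1 - Fraction(1, 2) ** n  # geometric partial sum in closed form
        print(n, direct, direct == closed)
```

As n grows, 1 - q^n tends to 1 for any p > 0, so heads eventually appears with probability 1.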

https://twitter.com/870723729831145472/status/1622922634907488256

This simple formula is one of the building blocks of mathematics, and it should be under the belt of anyone interested in looking behind the curtain of science, engineering, and mathematics.

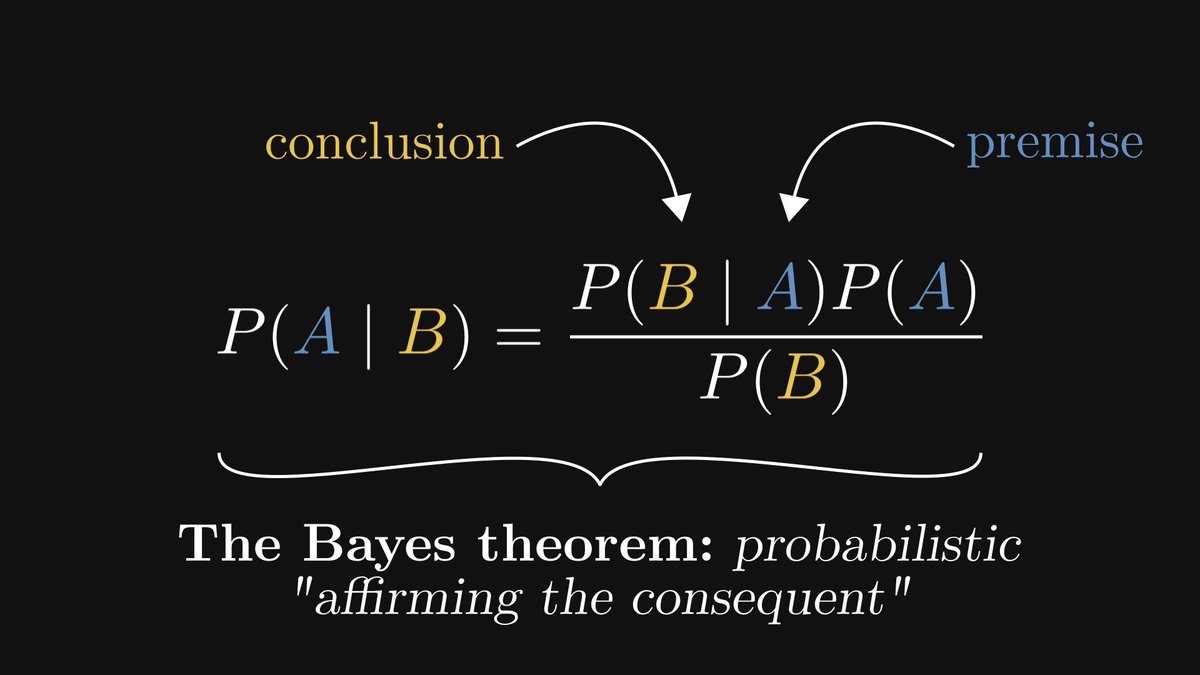

Most machine learning practitioners don’t understand the math behind their models.

That's why I've created a FREE roadmap so you can master the 3 main topics you'll need: algebra, calculus, and probability.

Get the roadmap here: thepalindrome.org/p/the-roadmap-…
