I finally understand why my complex prompts sucked.

The solution isn't better prompting; it's "Prompt Chaining."

Break one complex prompt into 5 simple ones that feed into each other.

Tested for 30 days. Output quality jumped 67%.

Here's how: 👇

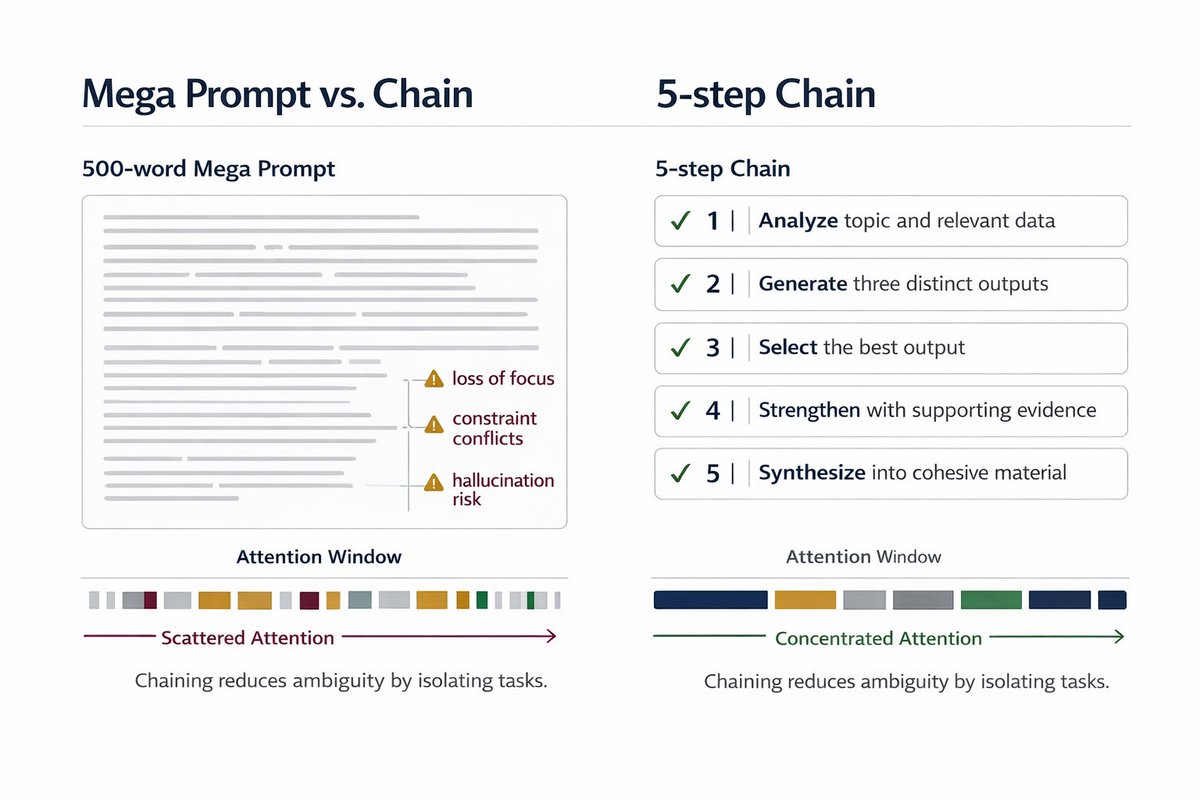

Most people write 500-word mega prompts and wonder why the AI hallucinates.

I did this for 2 years with ChatGPT.

Then I discovered how OpenAI engineers actually use these models.

They chain simple prompts. Each one builds on the last.

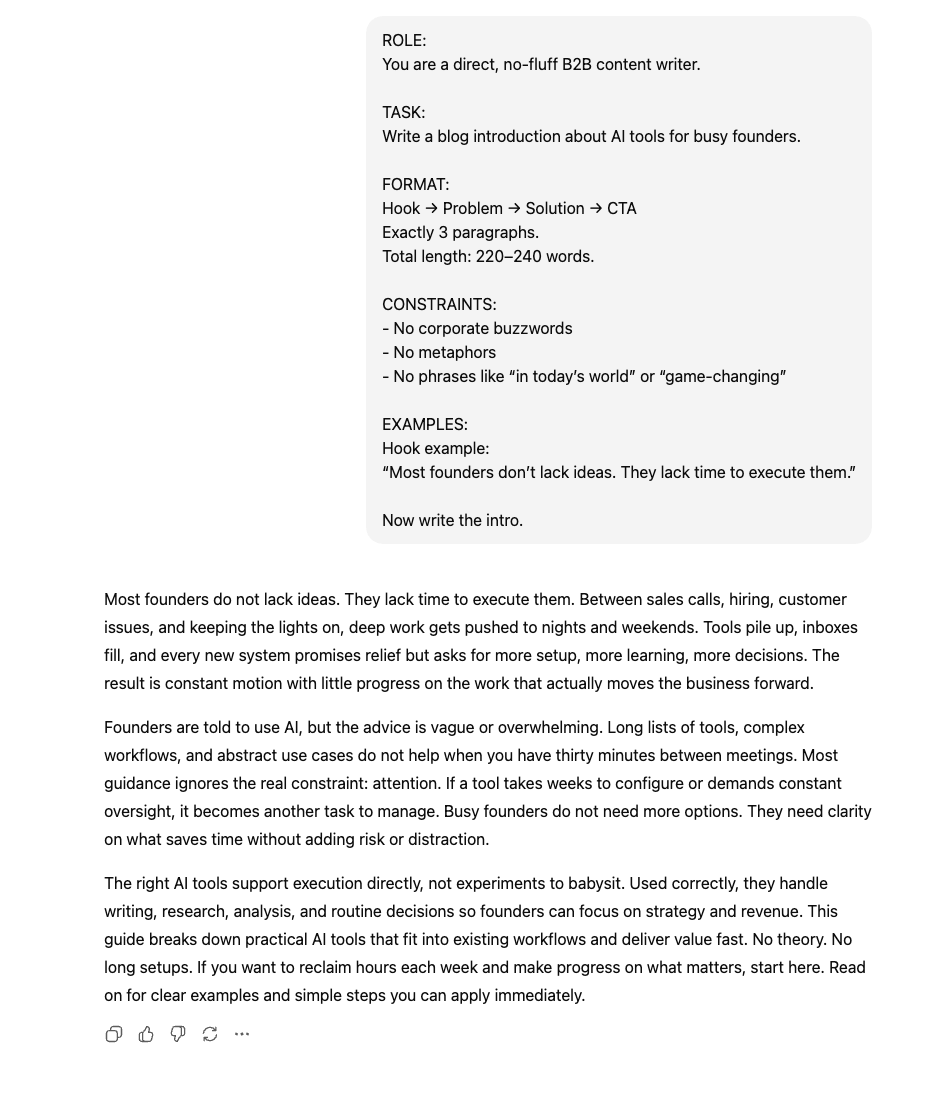

Here's the framework:

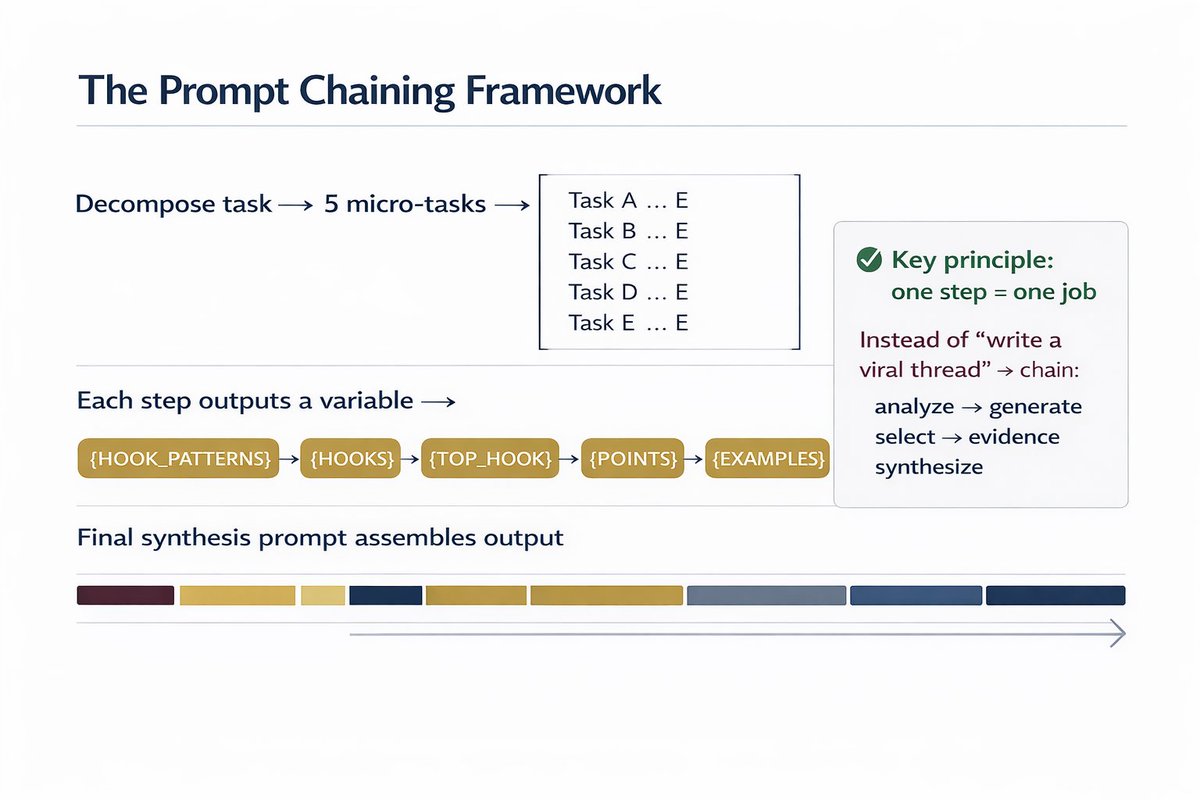

Step 1: Break your complex task into 5 micro-tasks

Step 2: Each prompt outputs a variable for the next

Step 3: Final prompt synthesizes everything
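A minimal Python sketch of the 3-step framework. It assumes a hypothetical `call_llm()` helper standing in for whatever model API you use (here it just echoes the prompt, so the sketch runs offline). Each step is a template plus the name of the variable its output gets saved under, so later prompts can pull in earlier results and the final prompt can synthesize all of them.

```python
from typing import Callable


def call_llm(prompt: str) -> str:
    # Hypothetical stand-in for a real model call (OpenAI, Anthropic, etc.).
    # It just echoes the prompt so the sketch runs without an API key.
    return f"<answer to: {prompt}>"


def run_chain(steps: list[tuple[str, str]], inputs: dict[str, str],
              llm: Callable[[str], str] = call_llm) -> dict[str, str]:
    """Run prompt templates in order.

    Each step is (output_variable, template). The template is filled with
    every variable produced so far, and the model's reply is stored under
    output_variable so any later step can reference it.
    """
    variables = dict(inputs)
    for output_name, template in steps:
        prompt = template.format(**variables)
        variables[output_name] = llm(prompt)
    return variables


# Usage: three micro-tasks, each feeding the next.
steps = [
    ("outline", "Outline a short post about {topic}."),
    ("draft", "Write a draft from this outline: {outline}"),
    ("final", "Tighten this draft: {draft}"),
]
result = run_chain(steps, {"topic": "prompt chaining"})
print(result["final"])
```

Swap `call_llm` for a real SDK call and the loop itself stays the same.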

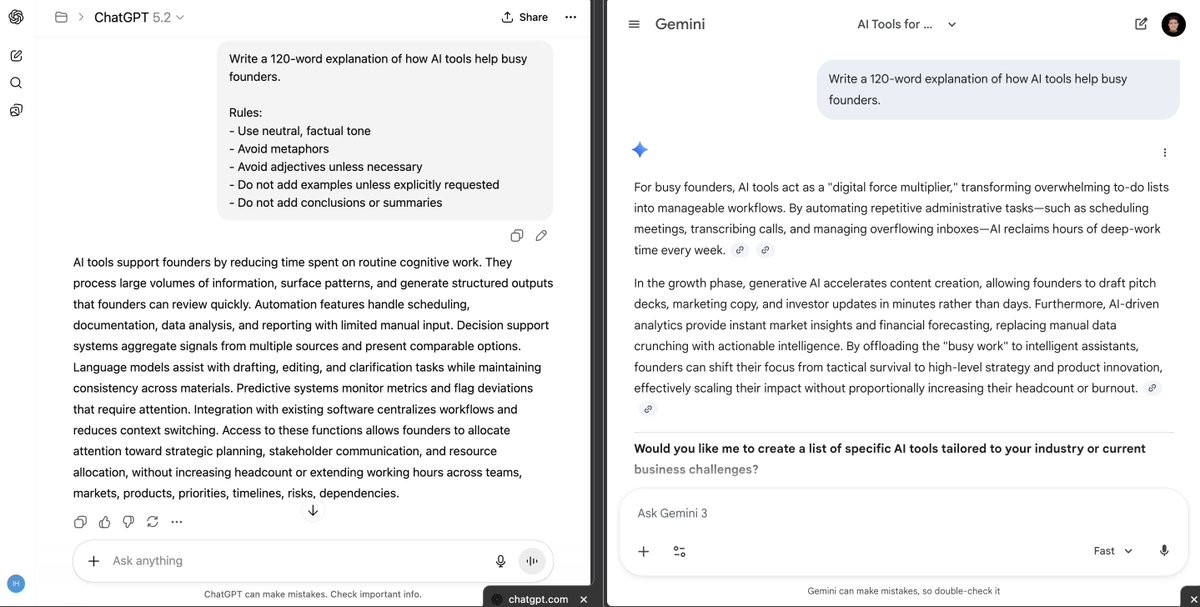

Example: Instead of "write a viral thread about AI" →

Chain 5 prompts that do ONE thing each.

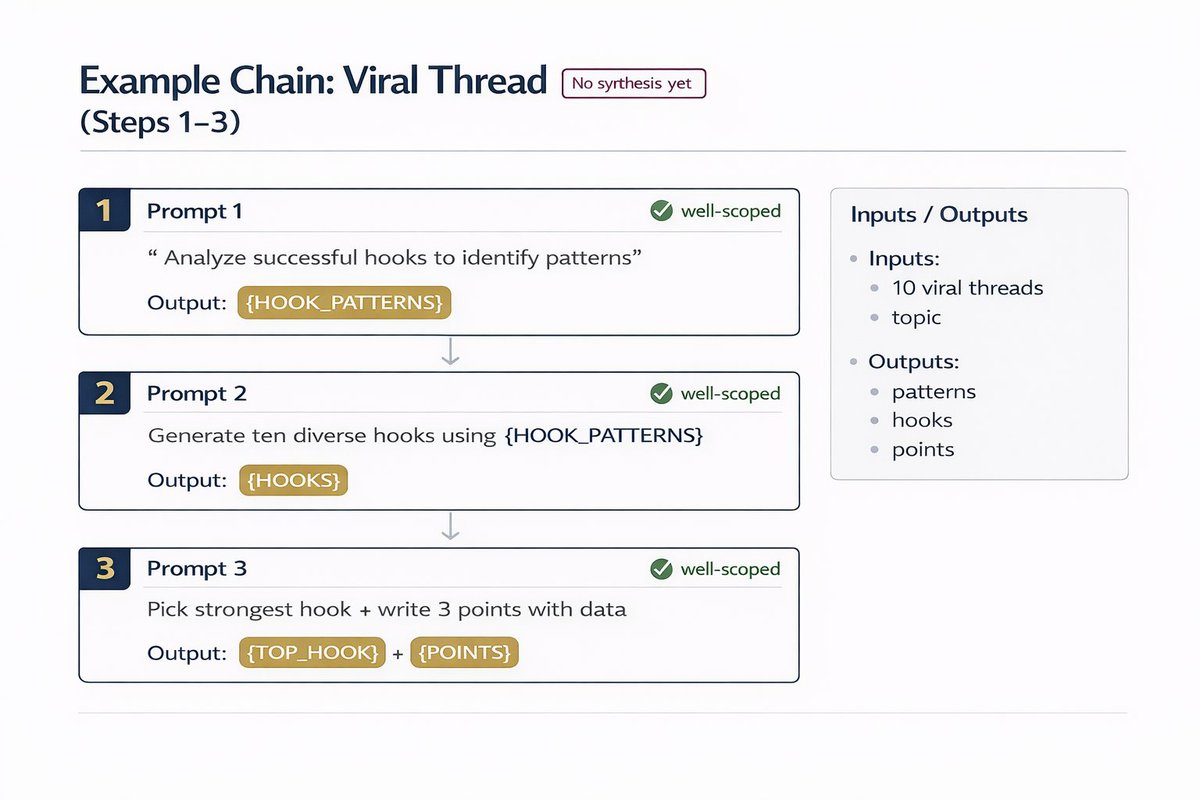

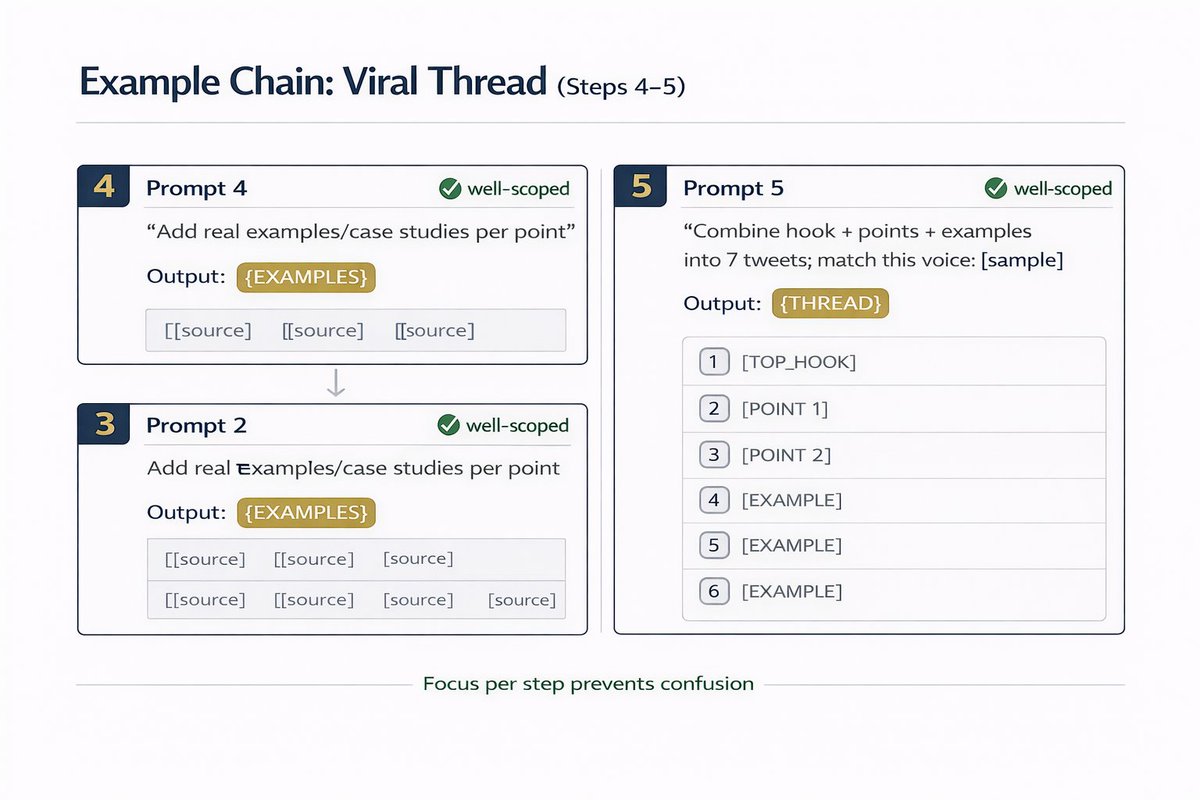

CHAIN EXAMPLE - Writing a viral thread:

Prompt 1: "Analyze these 10 viral AI threads. Extract the 3 hook patterns that appear most."

Prompt 2: "Using those 3 patterns, generate 5 hook variations for [topic]."

Prompt 3: "Pick the strongest hook. Write 3 supporting points with data."

Prompt 4: "For each supporting point, add a real example or case study."

Prompt 5: "Combine hook + points + examples into a 7-tweet thread. Match this voice: [paste your writing sample]"

Result: Better than any mega prompt I've ever written.

Each step is focused. No confusion.
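Here are the same 5 prompts written as fill-in templates in Python. The placeholder names (`{threads}`, `{topic}`, `{voice_sample}`, and so on) and the `call_llm()` stub are hypothetical; the point is that each prompt gets the previous prompt's output pasted in, instead of one mega prompt carrying everything at once.

```python
# The five prompts from the thread as templates. Placeholder names are
# illustrative assumptions, not part of any tool's API.
VIRAL_THREAD_CHAIN = [
    ("patterns", "Analyze these 10 viral AI threads. Extract the 3 hook "
                 "patterns that appear most.\n\n{threads}"),
    ("hooks", "Using those 3 patterns, generate 5 hook variations for "
              "{topic}.\n\nPatterns:\n{patterns}"),
    # Prompt 3 returns the chosen hook together with its 3 points.
    ("hook_and_points", "Pick the strongest hook. Write 3 supporting points "
                        "with data.\n\nHooks:\n{hooks}"),
    ("examples", "For each supporting point, add a real example or case "
                 "study.\n\n{hook_and_points}"),
    ("thread", "Combine hook + points + examples into a 7-tweet thread. "
               "Match this voice: {voice_sample}\n\n"
               "{hook_and_points}\n\n{examples}"),
]


def call_llm(prompt: str) -> str:
    # Hypothetical placeholder: replace with a real model call.
    return f"[model output for a {len(prompt)}-char prompt]"


def run(chain, **inputs):
    """Fill each template with everything produced so far, then call the model."""
    values = dict(inputs)
    prompts = []
    for name, template in chain:
        prompt = template.format(**values)
        prompts.append(prompt)
        values[name] = call_llm(prompt)
    return values, prompts


values, prompts = run(VIRAL_THREAD_CHAIN,
                      threads="<paste 10 threads>",
                      topic="prompt chaining",
                      voice_sample="<paste your writing sample>")
print(values["thread"])
```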

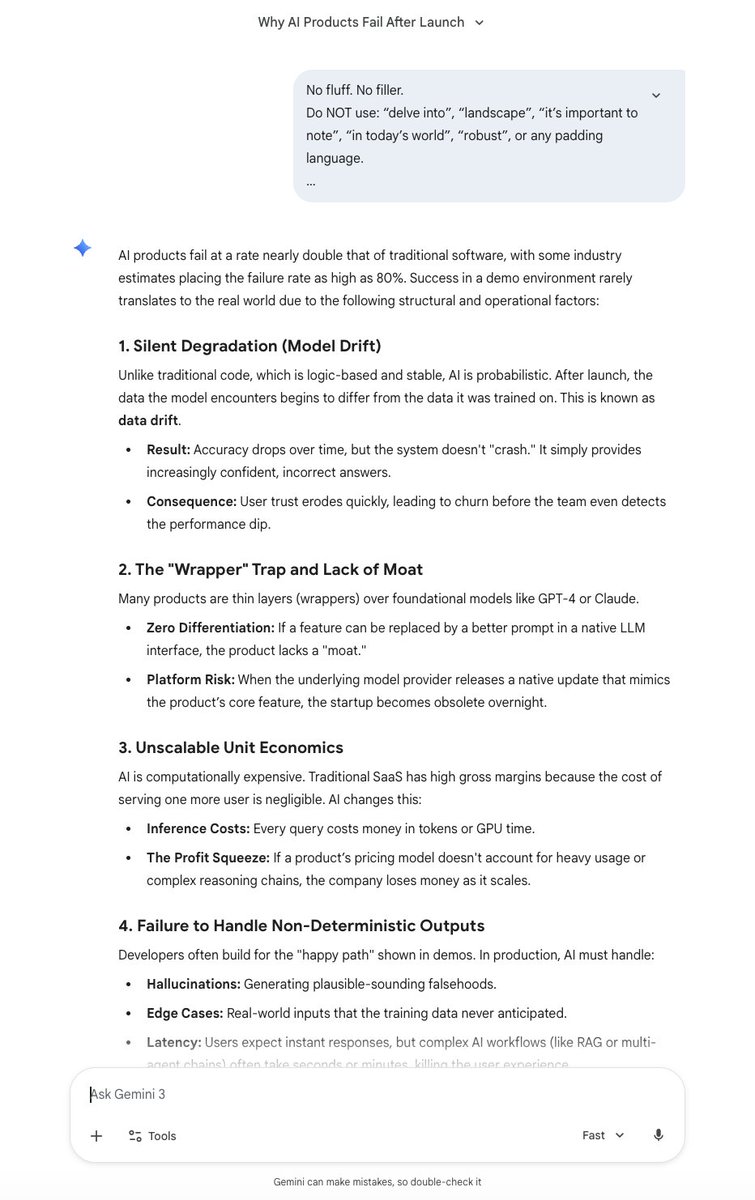

LLMs have context windows, but they also have "attention windows."

When you stuff 500 words into one prompt, the model loses focus on what matters.

Chaining forces the model to complete ONE task at 100% attention.

Then move to the next.

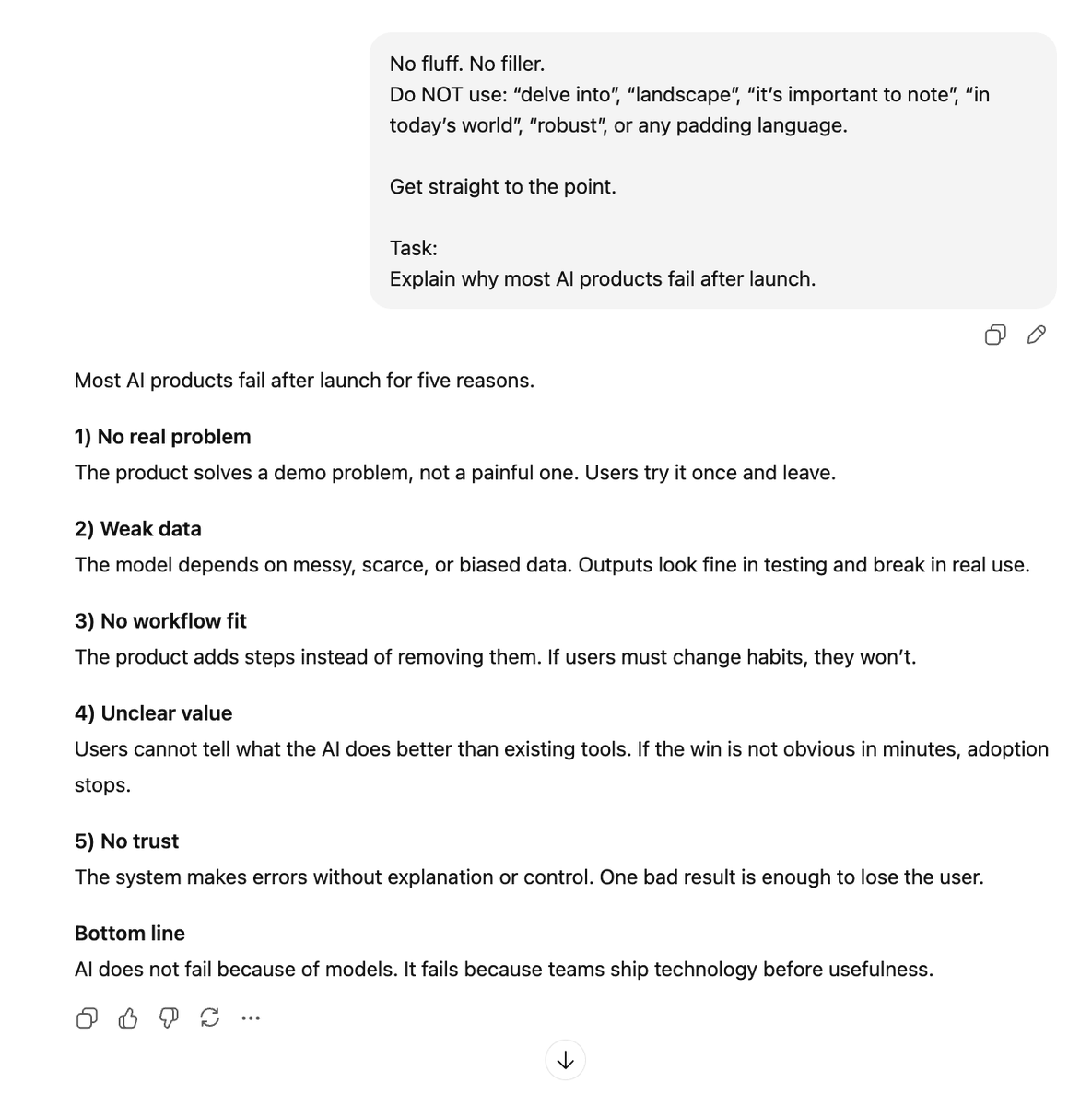

Real test I ran:

Mega prompt method:

- 8/10 outputs needed major editing

- Hallucination rate: ~40%

- Time to final draft: 45 min

Chain method:

+ 2/10 needed edits

+ Hallucination rate: ~8%

+ Time to final draft: 22 min

67% improvement is conservative.
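The thread doesn't say which metric the 67% figure comes from. As a quick check, here are the relative reductions implied by the test numbers above (note the mega prompt row counts "major editing" while the chain row counts "edits").

```python
# Figures reported in the test above: (mega prompt, chain).
results = {
    "outputs needing edits (of 10)": (8, 2),
    "hallucination rate (%)": (40, 8),
    "minutes to final draft": (45, 22),
}


def relative_reduction(before: float, after: float) -> float:
    """Percentage drop going from `before` to `after`."""
    return (before - after) / before * 100


for metric, (mega, chain) in results.items():
    print(f"{metric}: {relative_reduction(mega, chain):.0f}% lower with chaining")
```

That works out to 75% fewer outputs needing edits, an 80% lower hallucination rate, and about 51% less time to a final draft.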

Where to use prompt chaining:

✅ Research reports (search → analyze → synthesize → format)

✅ Code debugging (identify → isolate → fix → test → document)

✅ Content creation (research → outline → draft → edit → optimize)

✅ Data analysis (clean → analyze → visualize → interpret)

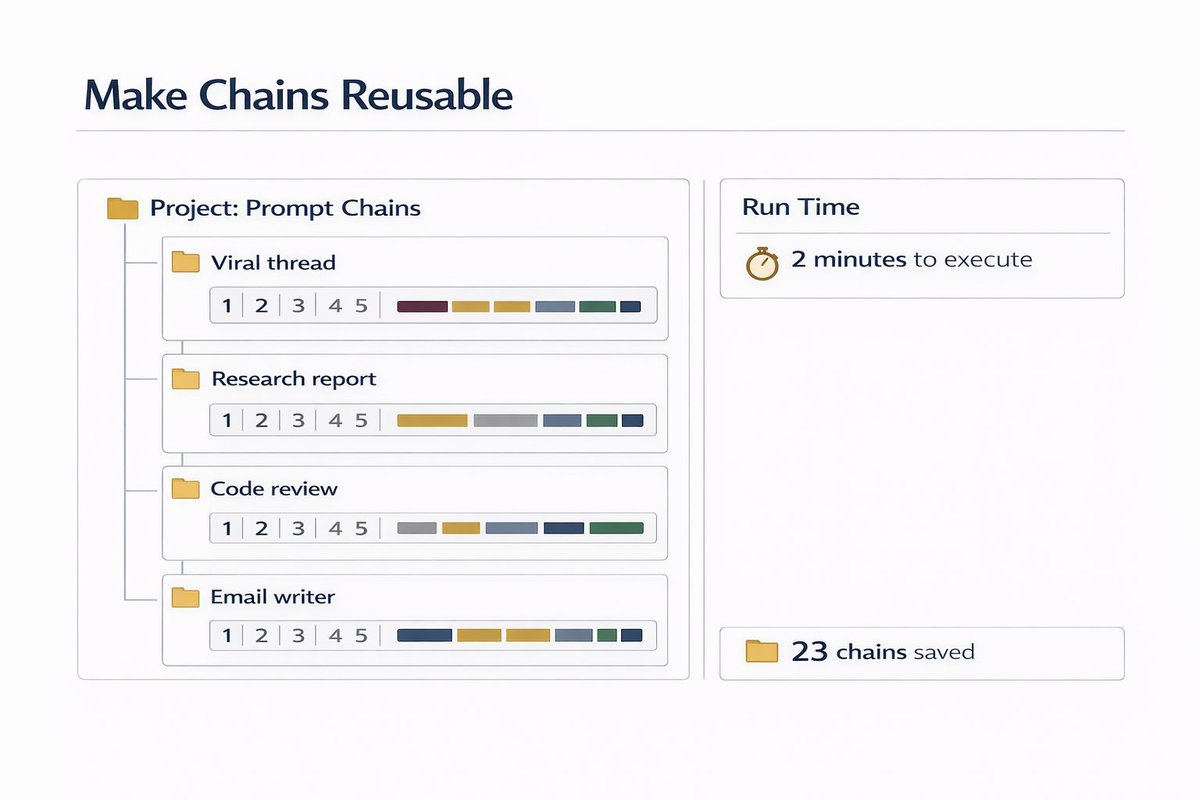

Pro tip:

Use the "Projects" feature in Claude or ChatGPT to store your chains.

Save each prompt as a reusable template.

I have 23 chains saved:

> Viral thread chain

> Research report chain

> Code review chain

> Email writer chain

Takes 2 minutes to run. Outputs are 10x better.
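If you'd rather keep chains outside a chat app, a plain JSON file can do the same job as saved templates. This is a hypothetical setup, not how Claude or ChatGPT Projects store anything: chain names map to ordered prompt templates, and the research-report stages follow the search → analyze → synthesize → format pipeline listed earlier (the exact prompt wording is made up for illustration).

```python
import json
import tempfile
from pathlib import Path

# Hypothetical chain library: name -> ordered prompt templates.
# Stage wording is illustrative; only the stage order comes from the thread.
CHAINS = {
    "Research report chain": [
        "Search these sources for facts about {topic}: {sources}",
        "Analyze the findings above. Flag anything contradictory.",
        "Synthesize the analysis into 3 key conclusions.",
        "Format the conclusions as a one-page report.",
    ],
}


def save_chains(path: Path, chains: dict) -> None:
    """Write the whole chain library to a JSON file."""
    path.write_text(json.dumps(chains, indent=2, ensure_ascii=False),
                    encoding="utf-8")


def load_chain(path: Path, name: str) -> list[str]:
    """Read one named chain back as its list of prompt templates."""
    return json.loads(path.read_text(encoding="utf-8"))[name]


with tempfile.TemporaryDirectory() as tmp:
    library = Path(tmp) / "chains.json"
    save_chains(library, CHAINS)
    steps = load_chain(library, "Research report chain")
    first_prompt = steps[0].format(topic="prompt chaining",
                                   sources="<paste links>")
    print(first_prompt)
```

Running a saved chain is then just pasting (or sending) each template in order, feeding each answer into the next step.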

AI makes content creation faster than ever, but it also makes guessing riskier than ever.

If you want to know what your audience will react to before you post, TestFeed gives you instant feedback from AI personas that think like your real users.

It’s the missing step between ideas and impact. Join the waitlist and stop publishing blind.

testfeed.ai

I hope you've found this thread helpful.

Follow me @MillieMarconnni for more.

Like/Repost the quote below if you can:

https://twitter.com/1909092495734235136/status/2021881470915420659
