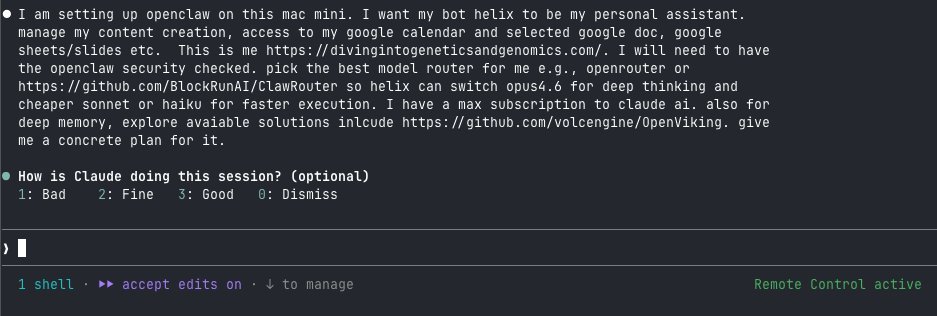

After Claude Code writes my code, I make it review its own work.

/simplify spawns 3 AI reviewers in parallel:

one hunts dead code,

one checks naming and structure,

one profiles for performance. All running at the same time.

It reads your diff, launches three specialized agents simultaneously, then merges their findings.

It catches unused imports, redundant variables, and overly complex conditionals, and it spots where shared logic should be extracted.

Not a linter. An actual code review.
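To make the "read the diff, fan out to three reviewers, merge the findings" flow concrete, here is a minimal Python sketch. It is not how /simplify is implemented: the three reviewer functions are hypothetical regex heuristics standing in for AI agents, and every name in it (`Finding`, `added_lines`, `simplify`, `SAMPLE_DIFF`) is made up for illustration. Only the shape of the pipeline comes from the description above.

```python
import re
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    reviewer: str
    diff_line: int  # 1-based position in the diff text, not in the source file
    message: str


def added_lines(diff: str) -> list[tuple[int, str]]:
    """Return (position, text) for every added line of a unified diff."""
    return [
        (pos, raw[1:])
        for pos, raw in enumerate(diff.splitlines(), start=1)
        if raw.startswith("+") and not raw.startswith("+++")
    ]


# Hypothetical stand-ins: a real reviewer would be an AI agent, not a regex.
def review_dead_code(diff: str) -> list[Finding]:
    lines = added_lines(diff)
    body = "\n".join(text for _, text in lines)
    findings = []
    for pos, text in lines:
        match = re.match(r"\s*import (\w+)", text)
        # Flag an import whose name appears nowhere except the import itself.
        if match and len(re.findall(rf"\b{match.group(1)}\b", body)) == 1:
            findings.append(Finding("dead-code", pos, f"unused import: {match.group(1)}"))
    return findings


def review_structure(diff: str) -> list[Finding]:
    findings = []
    for pos, text in added_lines(diff):
        is_branch = text.strip().startswith(("if ", "elif ", "while "))
        # Three or more boolean operators in one condition is treated as complex.
        if is_branch and len(re.findall(r"\b(?:and|or)\b", text)) >= 3:
            findings.append(Finding("structure", pos, "overly complex conditional"))
    return findings


def review_performance(diff: str) -> list[Finding]:
    findings = []
    for pos, text in added_lines(diff):
        # `xs = xs + [...]` copies the whole list on every call.
        if re.search(r"(\w+)\s*=\s*\1\s*\+\s*\[", text):
            findings.append(Finding("performance", pos, "list rebuilt by concatenation; consider append"))
    return findings


REVIEWERS = (review_dead_code, review_structure, review_performance)


def simplify(diff: str, reviewers=REVIEWERS) -> list[Finding]:
    """Run all reviewers on the same diff in parallel, then merge their findings."""
    with ThreadPoolExecutor(max_workers=len(reviewers)) as pool:
        futures = [pool.submit(reviewer, diff) for reviewer in reviewers]
        batches = [future.result() for future in futures]
    merged = {finding for batch in batches for finding in batch}  # drop exact duplicates
    return sorted(merged, key=lambda f: (f.diff_line, f.reviewer))


SAMPLE_DIFF = """\
+++ b/app.py
+import os
+import sys
+def run(a, b, c, d):
+    if a and b or c and d:
+        out = []
+        out = out + [sys.argv]
+    return out
"""

if __name__ == "__main__":
    for finding in simplify(SAMPLE_DIFF):
        print(f"{finding.diff_line}: [{finding.reviewer}] {finding.message}")
```

The design point is that each reviewer sees the same diff but looks for only one class of problem, so the merge step just unions and sorts their findings.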

AI-generated code works, but it is often verbose.

A single review pass misses things.

Three parallel reviewers miss far less.

I run /simplify after every feature I build with Claude Code.

The code that ships is tighter than what either of us wrote alone.

I hope you've found this post helpful.

Follow me for more.

Subscribe to my FREE newsletter chatomics to learn AI and bioinformatics divingintogeneticsandgenomics.ck.page/profile x.com/433559451/stat…
