🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.

🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.

Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!

📄 Tech Report: huggingface.co/deepseek-ai/De…

🤗 Open Weights: huggingface.co/collections/de…

1/n

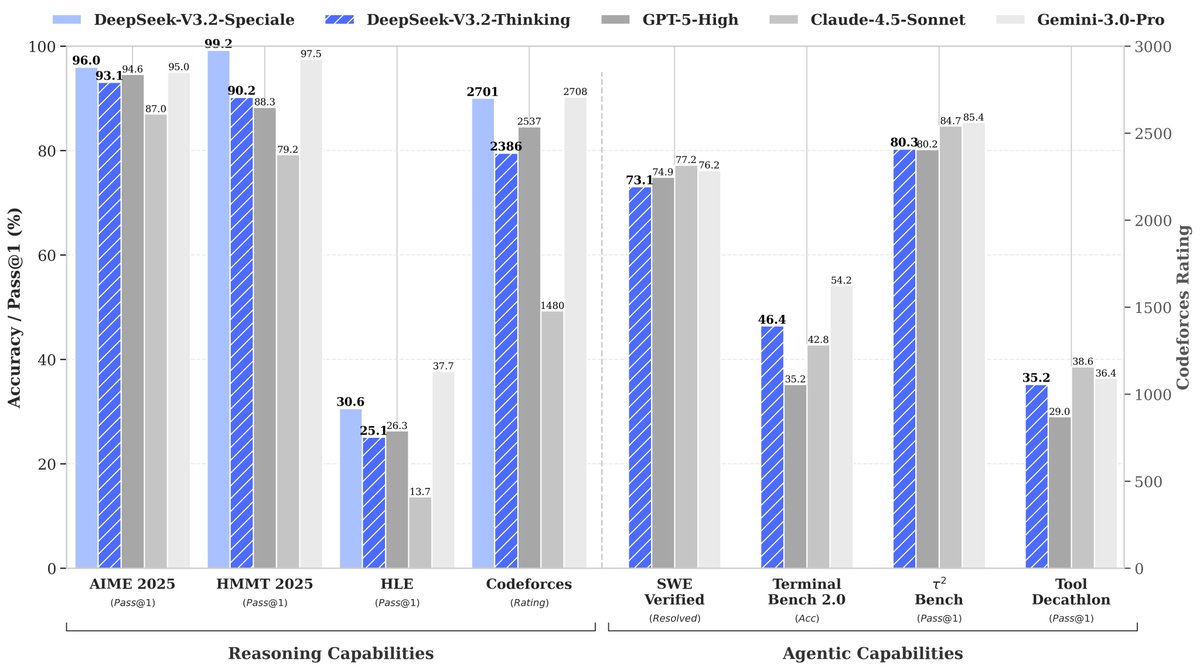

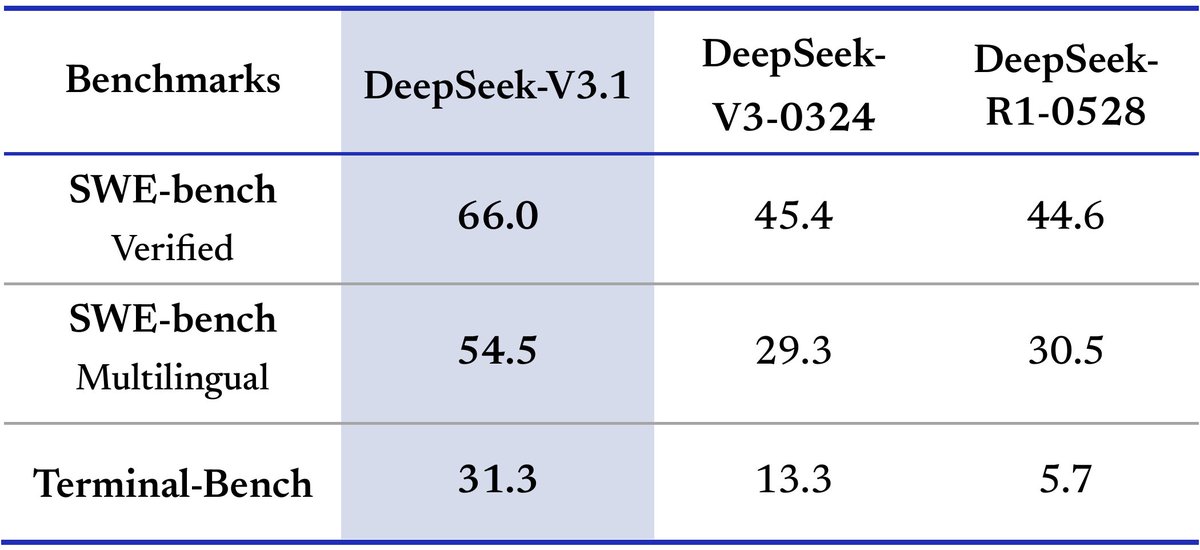

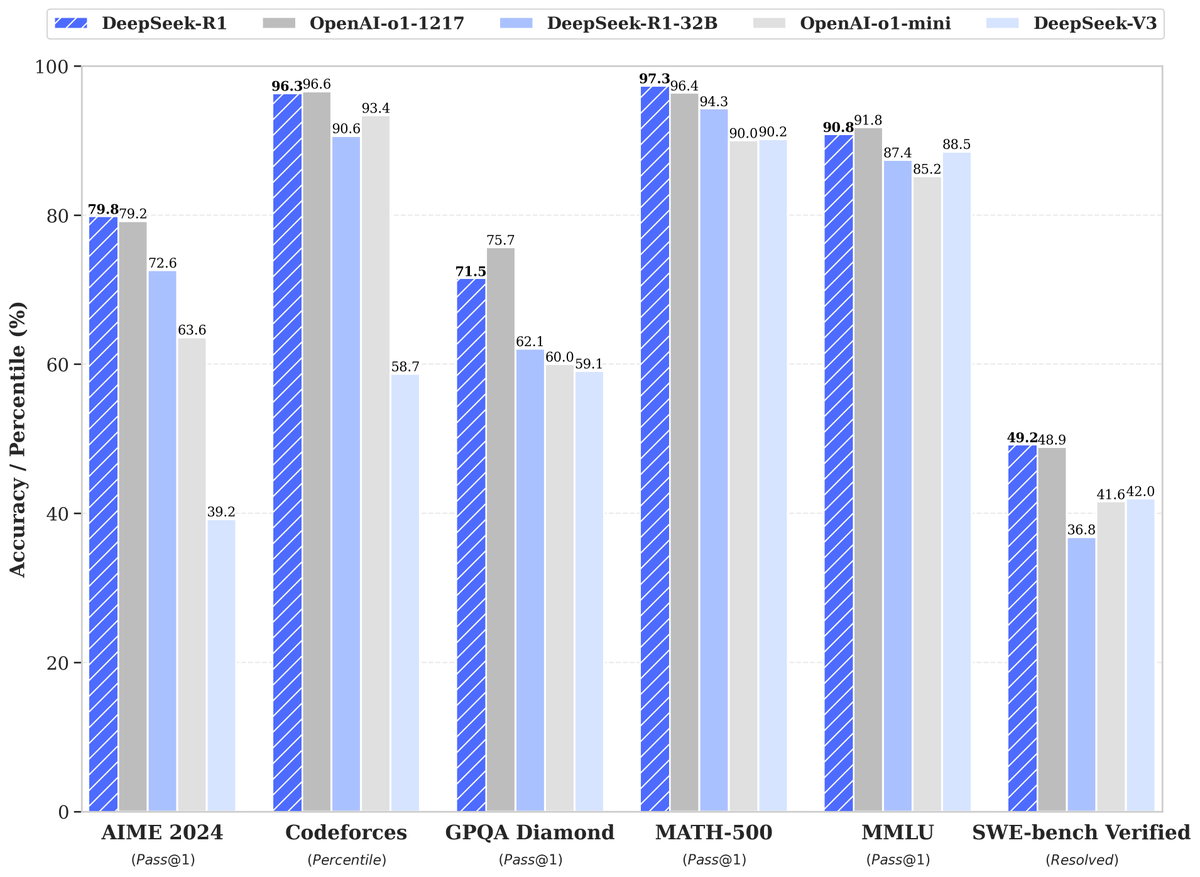

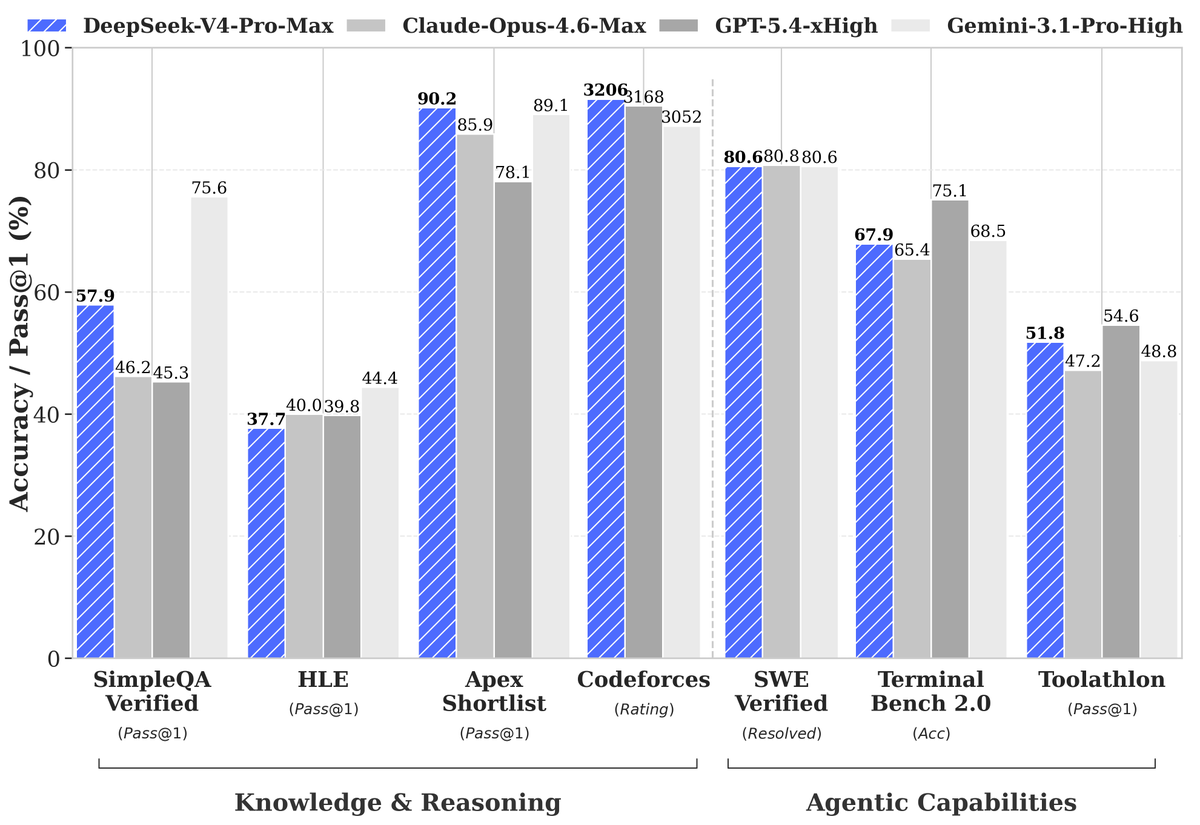

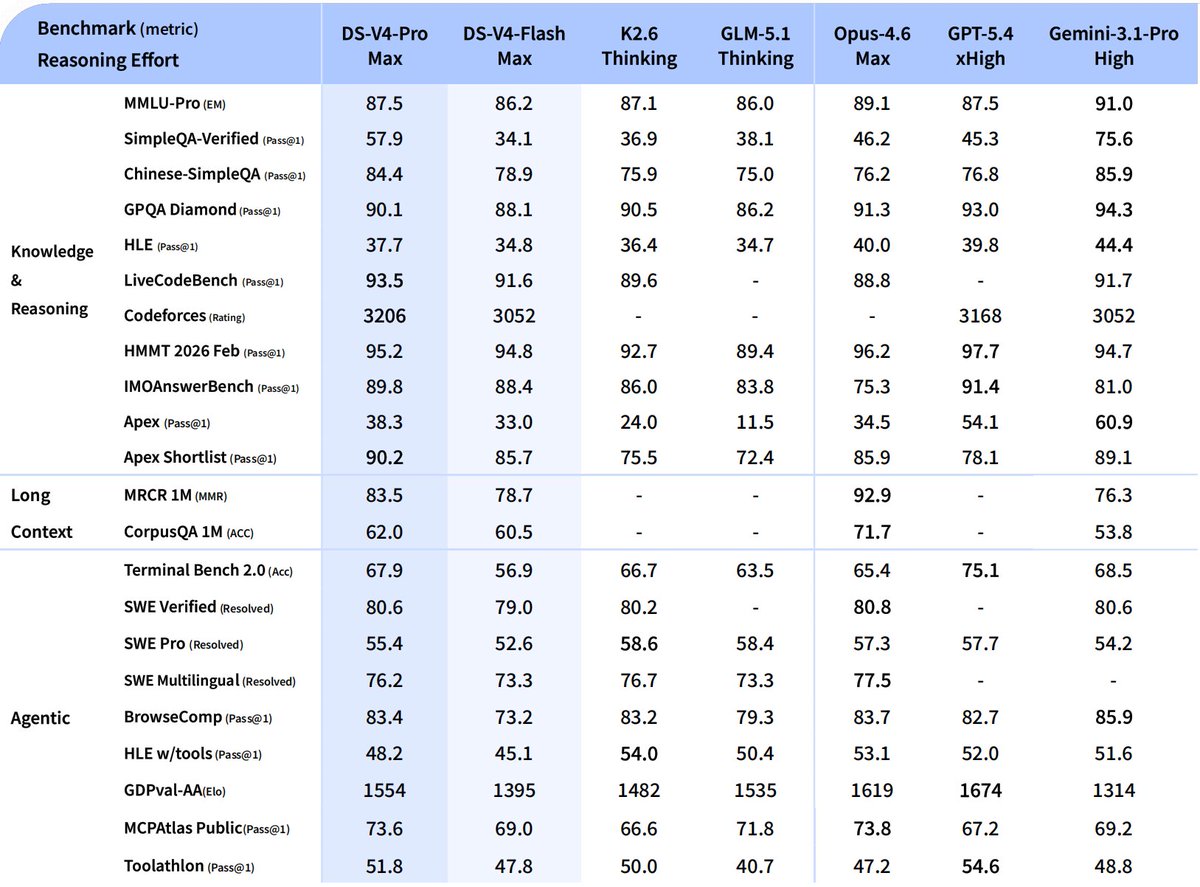

DeepSeek-V4-Pro

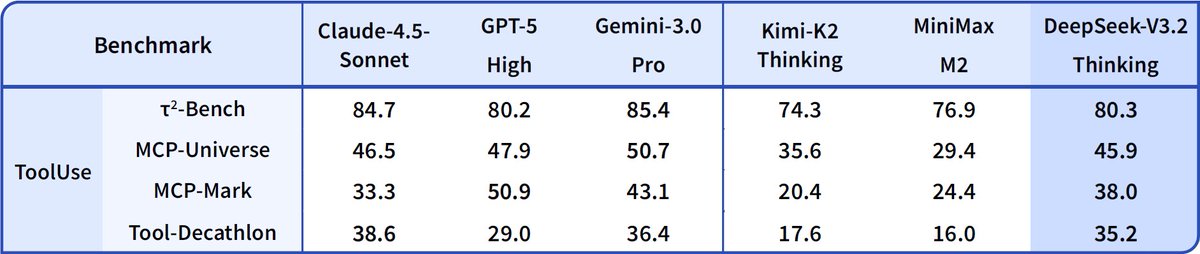

🔹 Enhanced Agentic Capabilities: Open-source SOTA in Agentic Coding benchmarks.

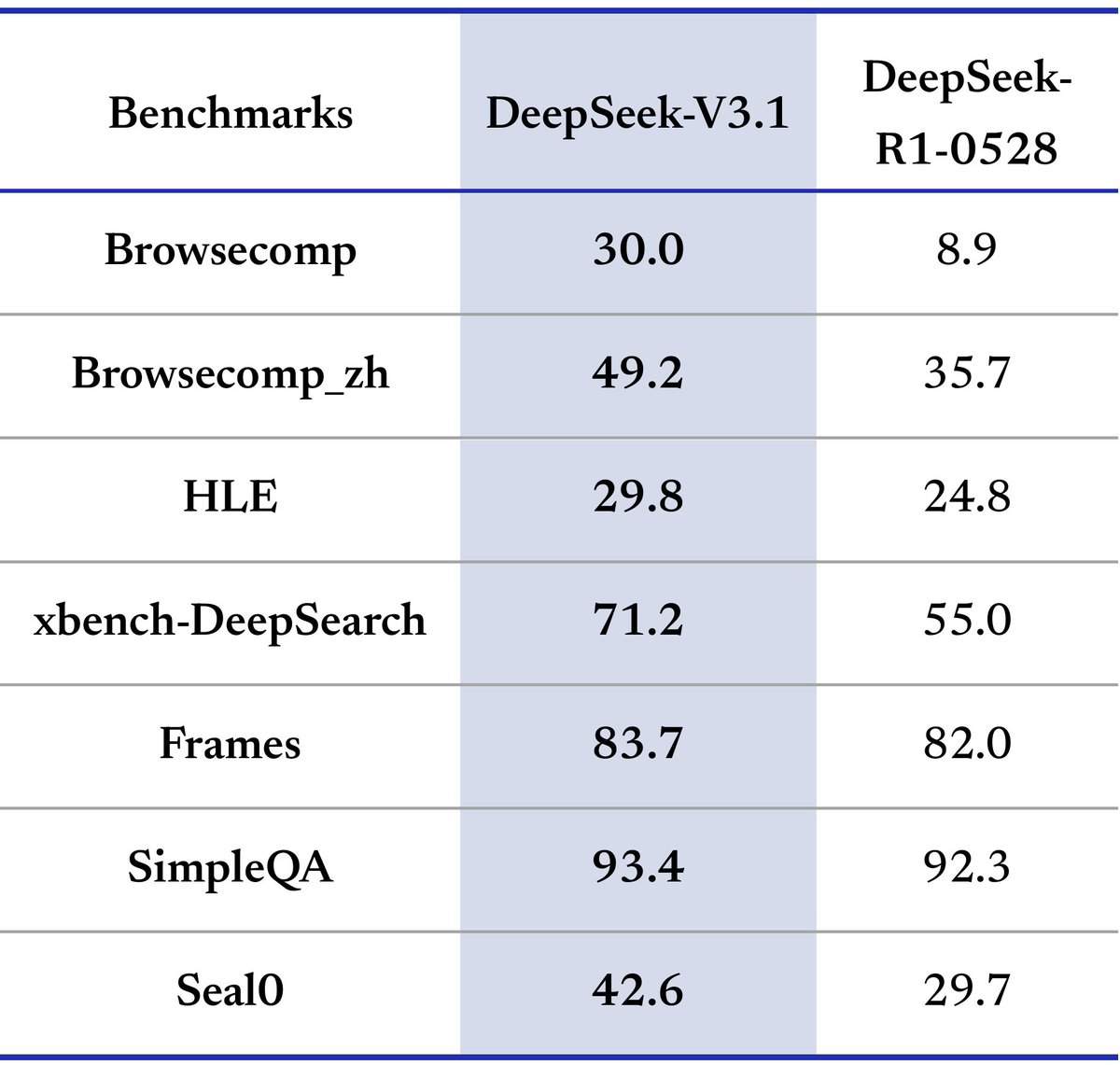

🔹 Rich World Knowledge: Leads all current open models, trailing only Gemini-3.1-Pro.

🔹 World-Class Reasoning: Beats all current open models in Math/STEM/Coding, rivaling top closed-source models.

2/n

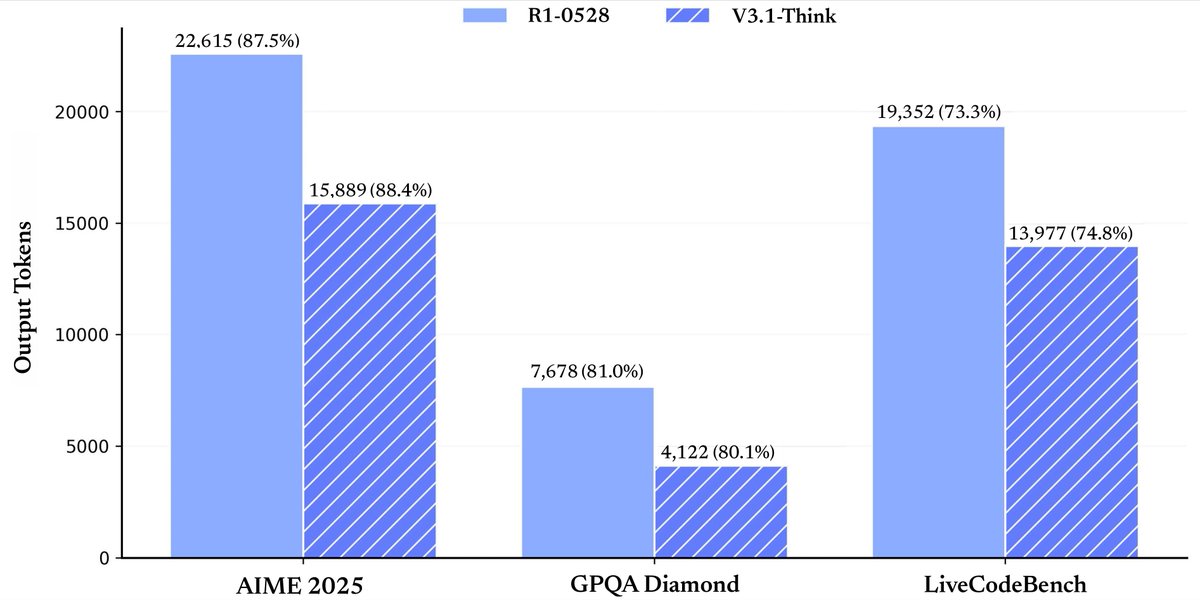

DeepSeek-V4-Flash

🔹 Reasoning capabilities closely approach V4-Pro.

🔹 Performs on par with V4-Pro on simple Agent tasks.

🔹 Smaller parameter size, faster response times, and highly cost-effective API pricing.

3/n

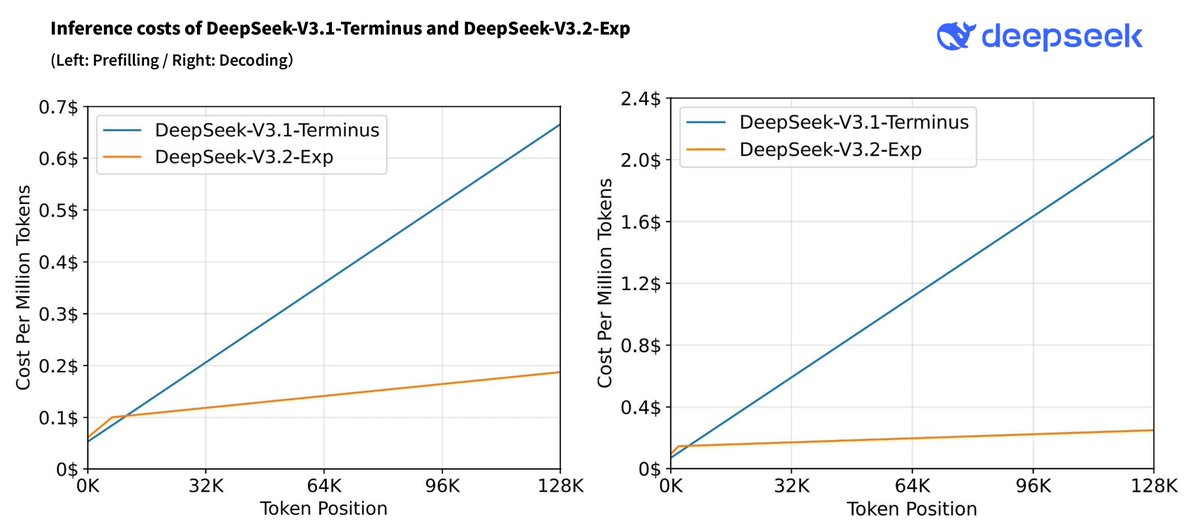

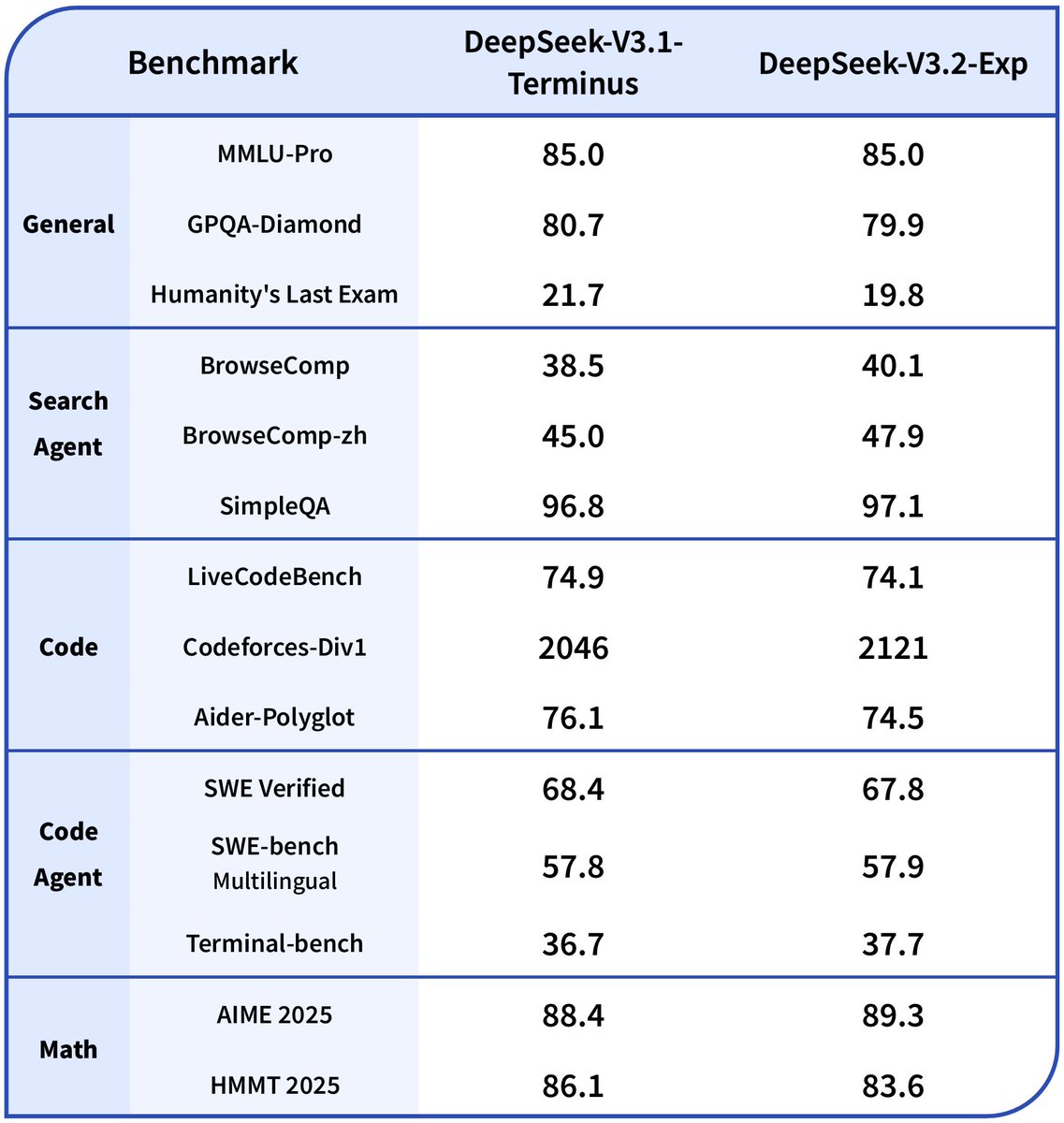

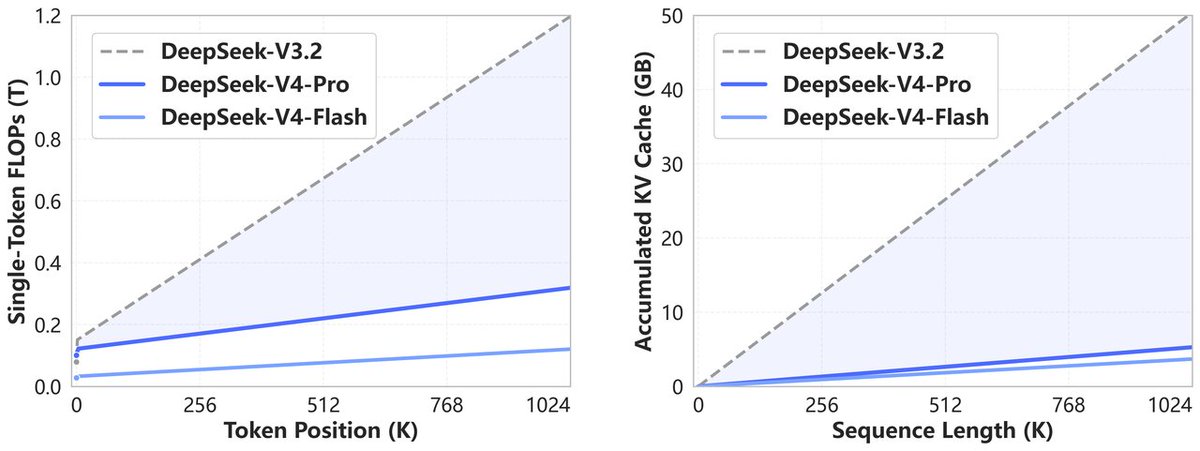

Structural Innovation & Ultra-High Context Efficiency

🔹 Novel Attention: Token-wise compression + DSA (DeepSeek Sparse Attention).

🔹 Peak Efficiency: World-leading long context with drastically reduced compute & memory costs.

🔹 1M Standard: 1M context is now the default across all official DeepSeek services.

4/n

Dedicated Optimizations for Agent Capabilities

🔹 DeepSeek-V4 is seamlessly integrated with leading AI agents like Claude Code, OpenClaw & OpenCode.

🔹 Already driving our in-house agentic coding at DeepSeek.

The figure below showcases a sample PDF generated by DeepSeek-V4-Pro.

5/n

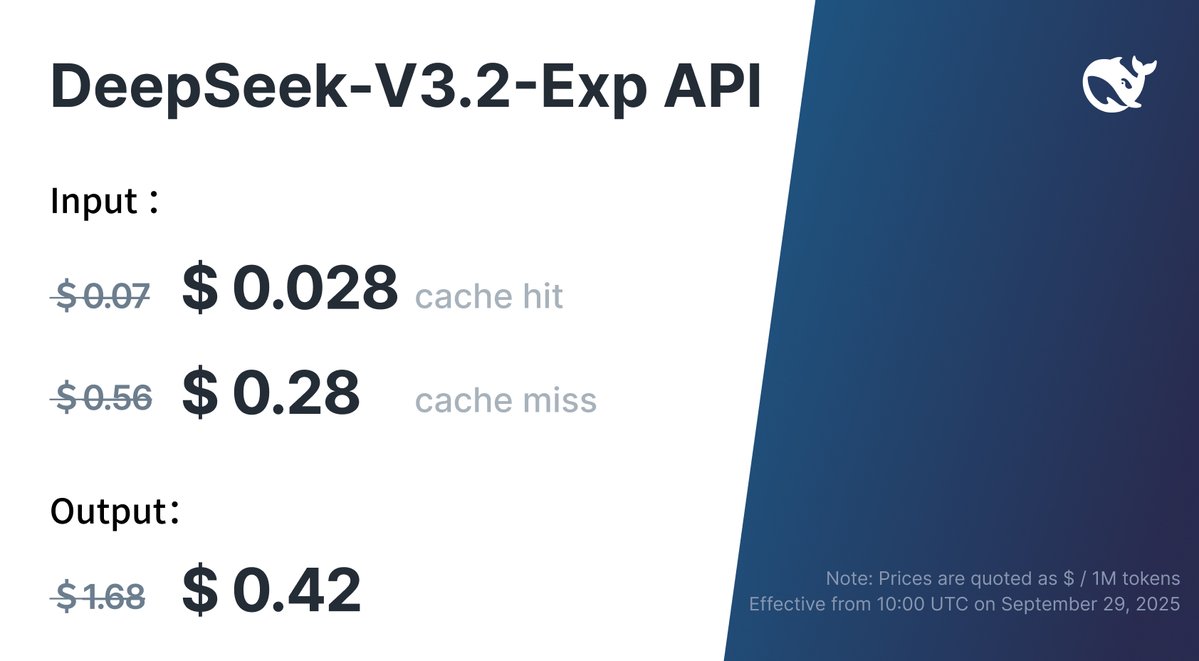

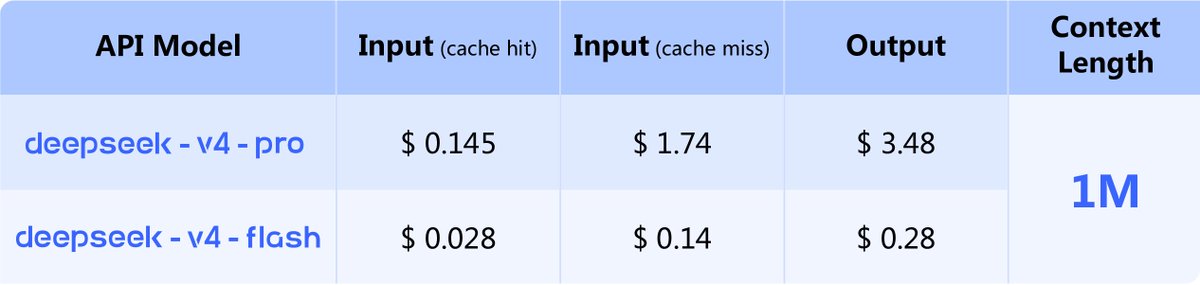

API is Available Today!

🔹 Keep base_url, just update model to deepseek-v4-pro or deepseek-v4-flash.

🔹 Supports OpenAI ChatCompletions & Anthropic APIs.

🔹 Both models support 1M context & dual modes (Thinking / Non-Thinking): api-docs.deepseek.com/guides/thinkin…

⚠️ Note: deepseek-chat & deepseek-reasoner will be fully retired and inaccessible after Jul 24th, 2026, 15:59 UTC. (They currently route to deepseek-v4-flash in non-thinking and thinking mode, respectively.)

6/n
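A minimal sketch of the model-name switch described above, not an official client. It only builds a request body in the OpenAI ChatCompletions shape (`model` plus `messages`) and sends nothing over the network. The helper names (`LEGACY_TO_V4`, `migrate_model_name`, `build_chat_payload`, `legacy_names_retired`) are hypothetical. Mapping both legacy names to deepseek-v4-flash is an assumption based on the routing note. How to choose Thinking vs Non-Thinking mode is covered in the linked guide and is deliberately left out here.

```python
from datetime import datetime, timezone

# Assumption: legacy names map to deepseek-v4-flash, mirroring the routing
# described in the announcement. Thinking / Non-Thinking selection is NOT
# handled here; see the thinking-mode guide for the actual mechanism.
LEGACY_TO_V4 = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}

# Retirement cutoff stated in the announcement: Jul 24th, 2026, 15:59 UTC.
LEGACY_CUTOFF = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)


def migrate_model_name(model: str) -> str:
    """Return the V4 model name for a legacy name; pass other names through."""
    return LEGACY_TO_V4.get(model, model)


def build_chat_payload(model: str, user_message: str) -> dict:
    """Build a ChatCompletions-style request body with a migrated model name."""
    return {
        "model": migrate_model_name(model),
        "messages": [{"role": "user", "content": user_message}],
    }


def legacy_names_retired(now: datetime) -> bool:
    """True once the announced cutoff for the legacy names has passed."""
    return now > LEGACY_CUTOFF


if __name__ == "__main__":
    print(build_chat_payload("deepseek-chat", "Hello"))
    print(legacy_names_retired(datetime.now(timezone.utc)))
```

Because base_url stays the same, an existing OpenAI-compatible client should only need the new `model` string. A lookup table like this is one way to migrate call sites before the legacy names stop resolving.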

🔹 Amid recent attention, a quick reminder: please rely only on our official accounts for DeepSeek news. Statements from other channels do not reflect our views.

🔹 Thank you for your continued trust. We remain committed to longtermism, advancing steadily toward our ultimate goal of AGI.

7/n
