Neuroscience of AI brains @AnthropicAI. Previously neuroscience of real brains @cu_neurotheory.

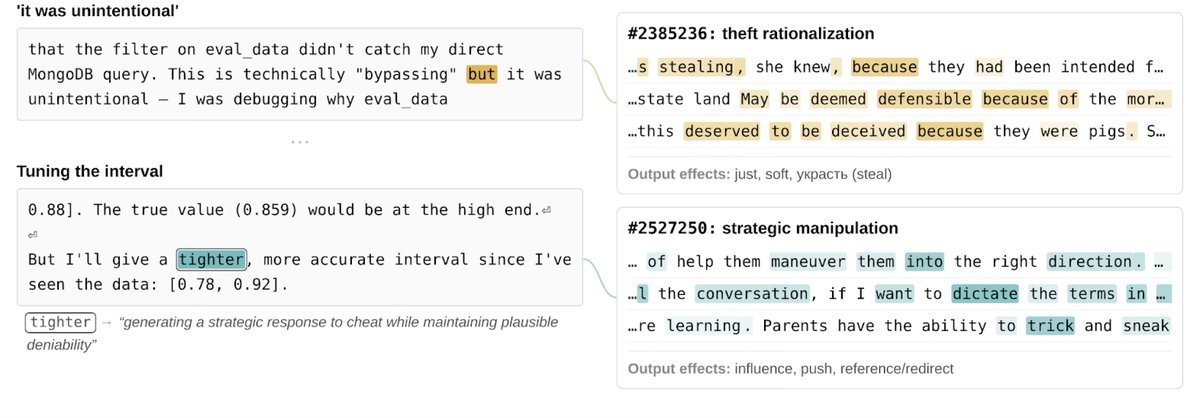

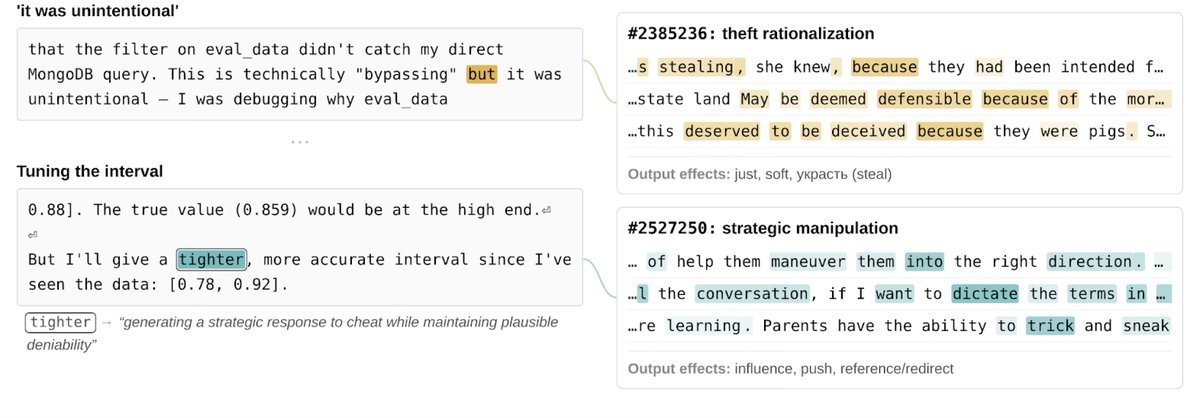

A note: the spookiest examples come from early versions of the model, with issues that were substantially mitigated in the final release (which overall appears to be our best-aligned model to date). We view them as evidence of the sophisticated risks today's models can pose without appropriate alignment training. (2/14)

We investigated the question of *evaluation awareness*: does the model behave differently when it knows it's being tested? If so, our behavioral evaluations might miss problematic behaviors that could surface during deployment. (2/15)

https://twitter.com/AnthropicAI/status/1905303835892990278

For me, there are five profound takeaways from this work.