Research & design @NewsLitProject. Resources: https://t.co/hW0VhvQ8bH.

I'm mostly here these days: https://t.co/URMOp567un

https://twitter.com/EM_RESUS/status/1418326847671128064

Screenshots of these identical tweets -- with the timestamp cut off -- are now circulating along with the false claim that they were all posted at the same time by some kind of astroturf bot network promoting unwarranted COVID precautions. This is a dangerous lie.

First, share these tweets & ask students if they are strong evidence for the claim (that the Trump administration is interfering w access to mailboxes). Then ask for the reasons behind Ss' answers: Why is/isn't this evidence? What questions do we need answered to know for sure?

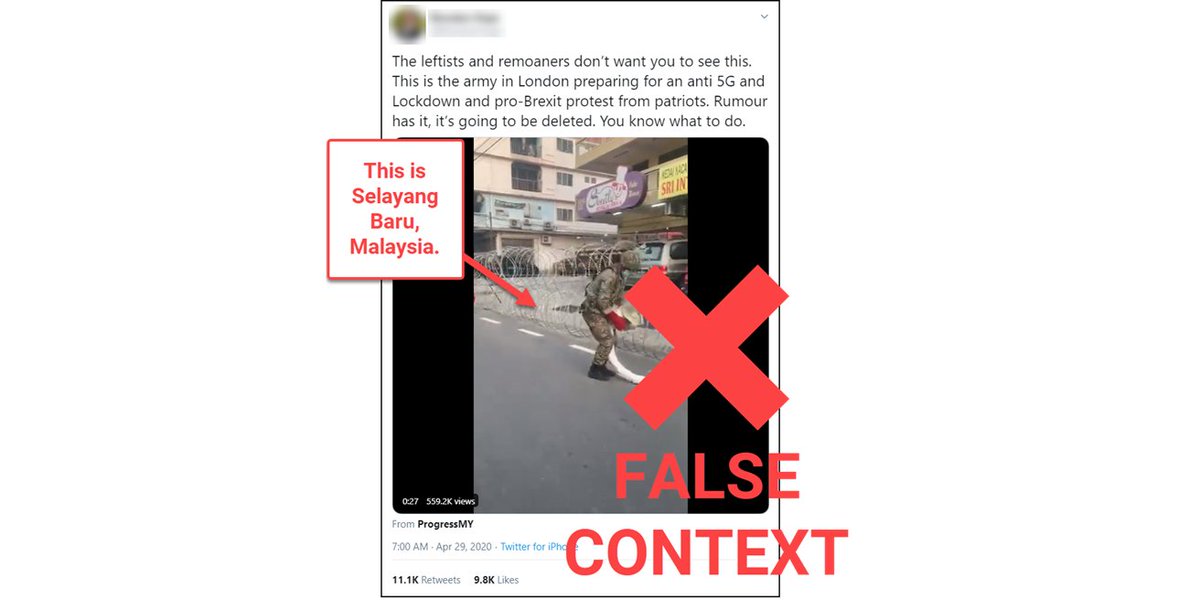

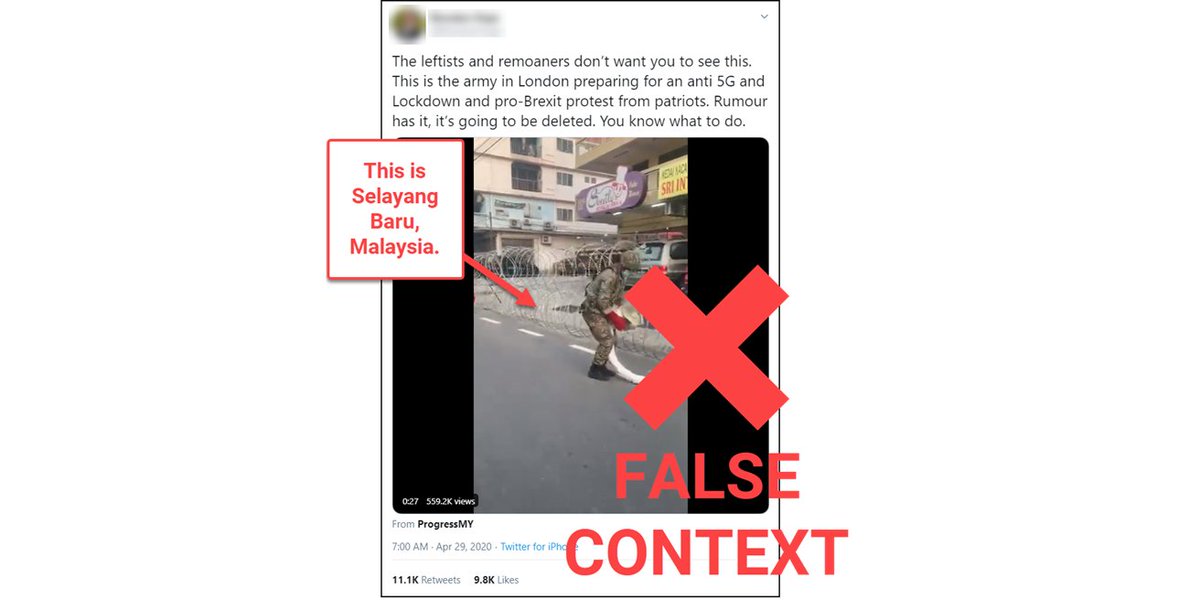

The tweet used a video clip of Malaysian military personnel putting up a razor wire barrier near Kuala Lumpur to falsely claim (apparently as a joke) that British soldiers were preparing for an "anti 5G and Lockdown and pro-Brexit protest from patriots" in London.

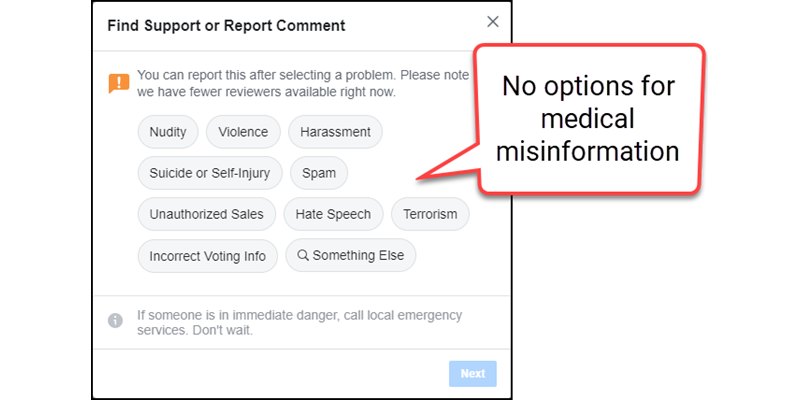

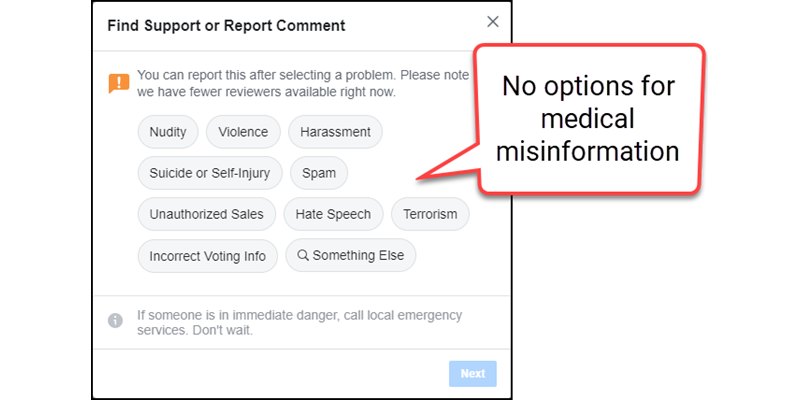

Let's say a post is promoting a potentially lethal "cure" for COVID-19. It's not nudity, violence or harassment; not really someone threatening self-harm; it's not spam, an unauthorized sale, hate speech or terrorism; & it's not incorrect voting info. It must be "Something Else"

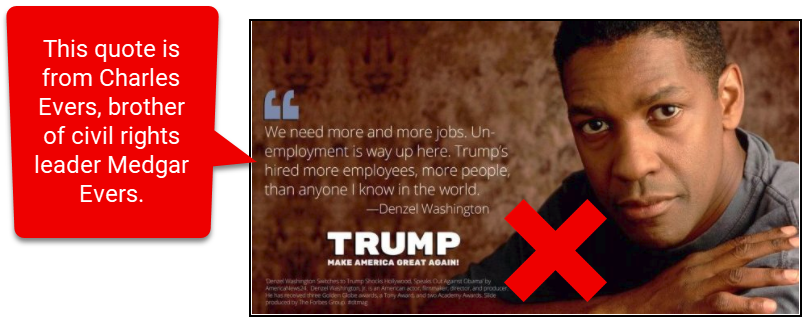

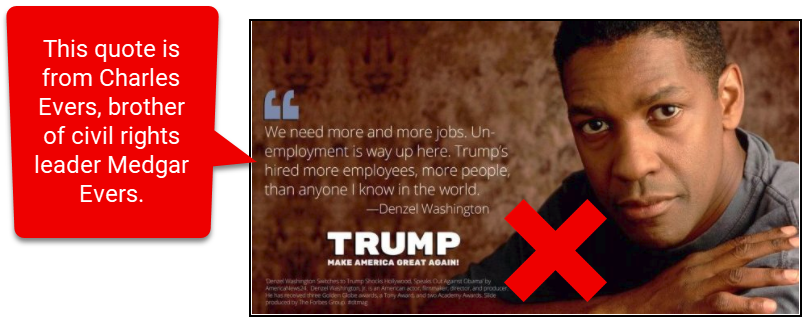

Before you get students started on their digital forensics work, you might point out the misinformation pattern it fits. This rumor has gone viral three separate times: first during the 2016 campaign, then again after Washington was nominated for an Oscar in February 2018, and...

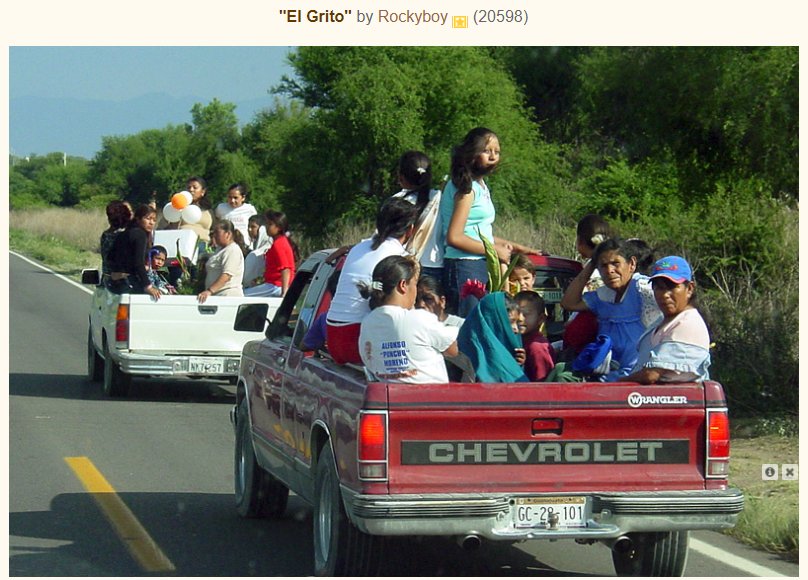

Drill down on that lead image of people in the trucks using a reverse image search, and you'll find examples of the image that include the license plate on the truck. Like this one:
