We are a watchdog nonprofit that monitors and reports on the practices of leading AI companies.

Also tracking safety updates @SafetyChanges

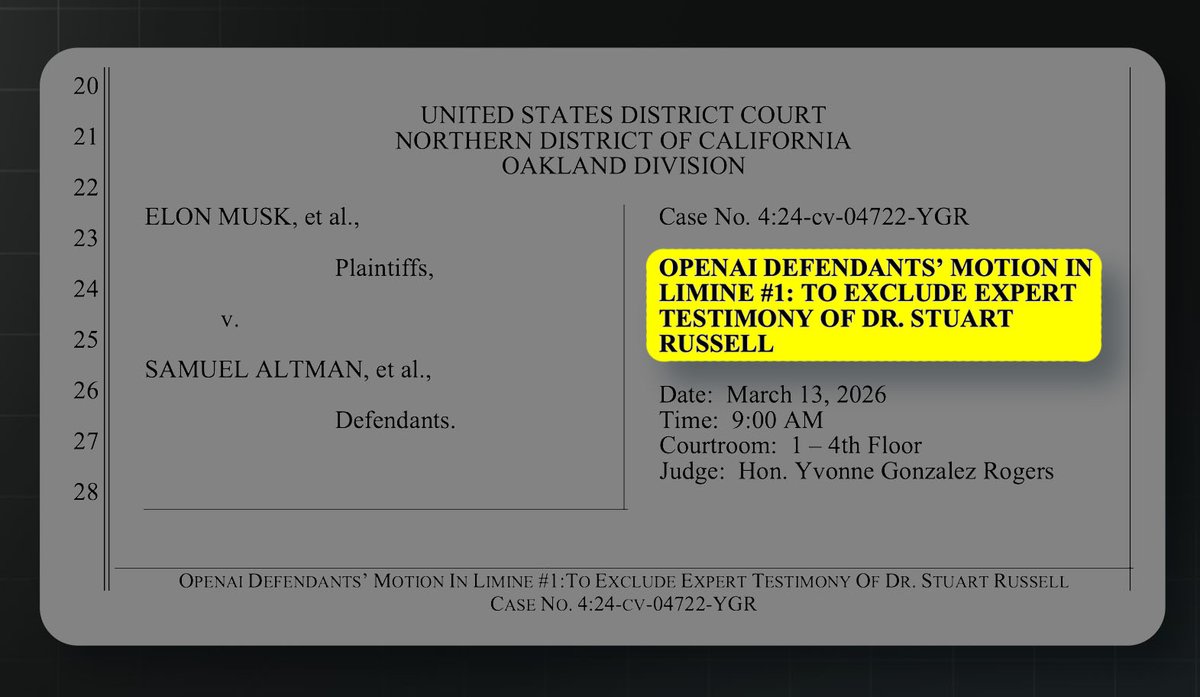

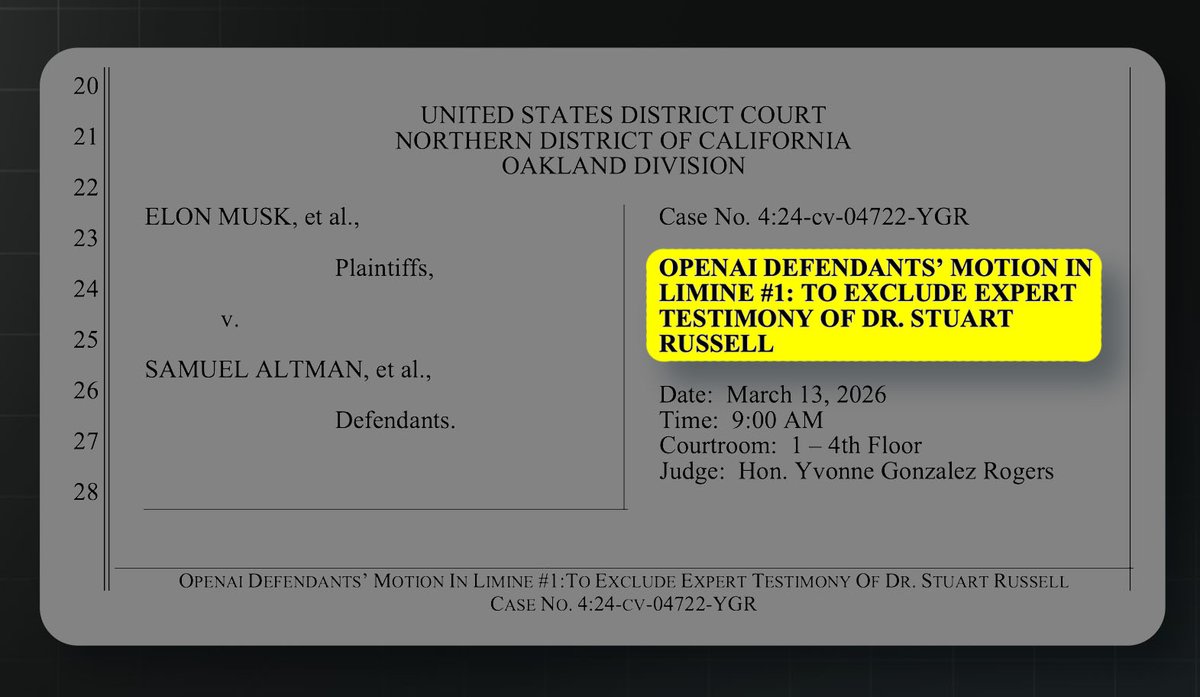

For context, Stuart Russell is an AI professor and a Fellow of the Royal Society.

2/ Since December, OpenAI has been racing to reclaim the title of best coding AI.

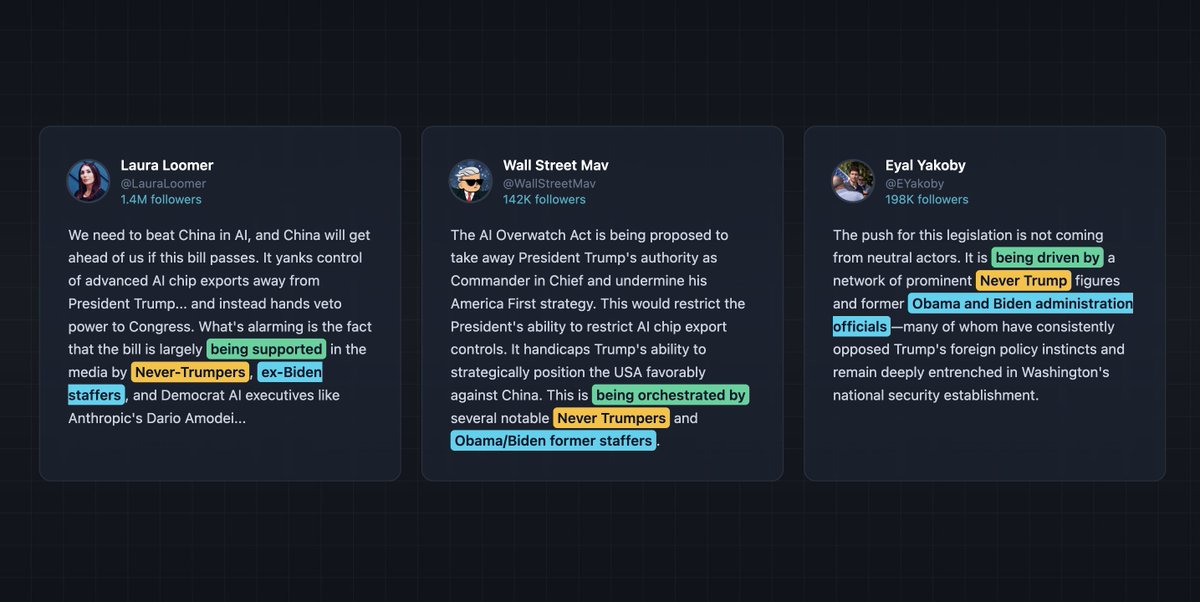

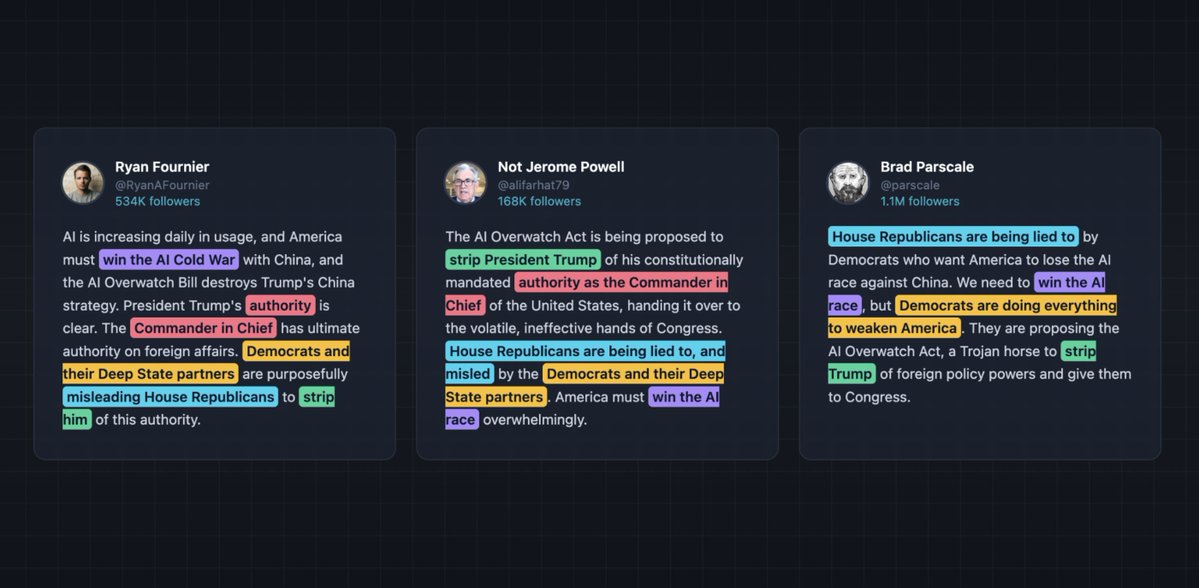

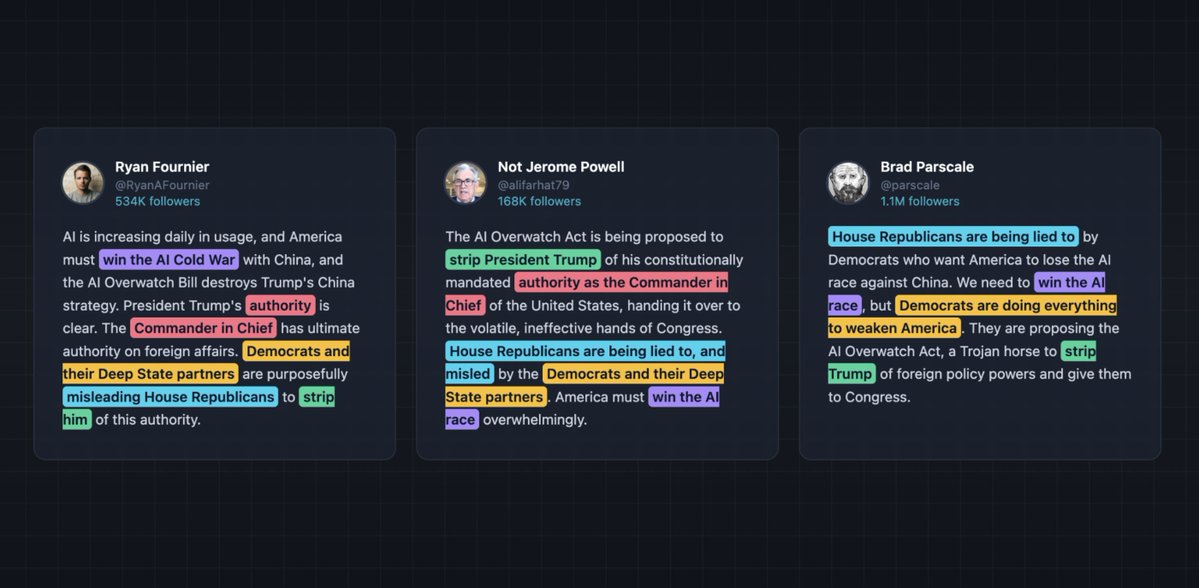

The AI OVERWATCH Act is a Republican bill that would let Congress review AI chip exports to adversaries like China. It's backed by Microsoft and right-leaning think tanks.
