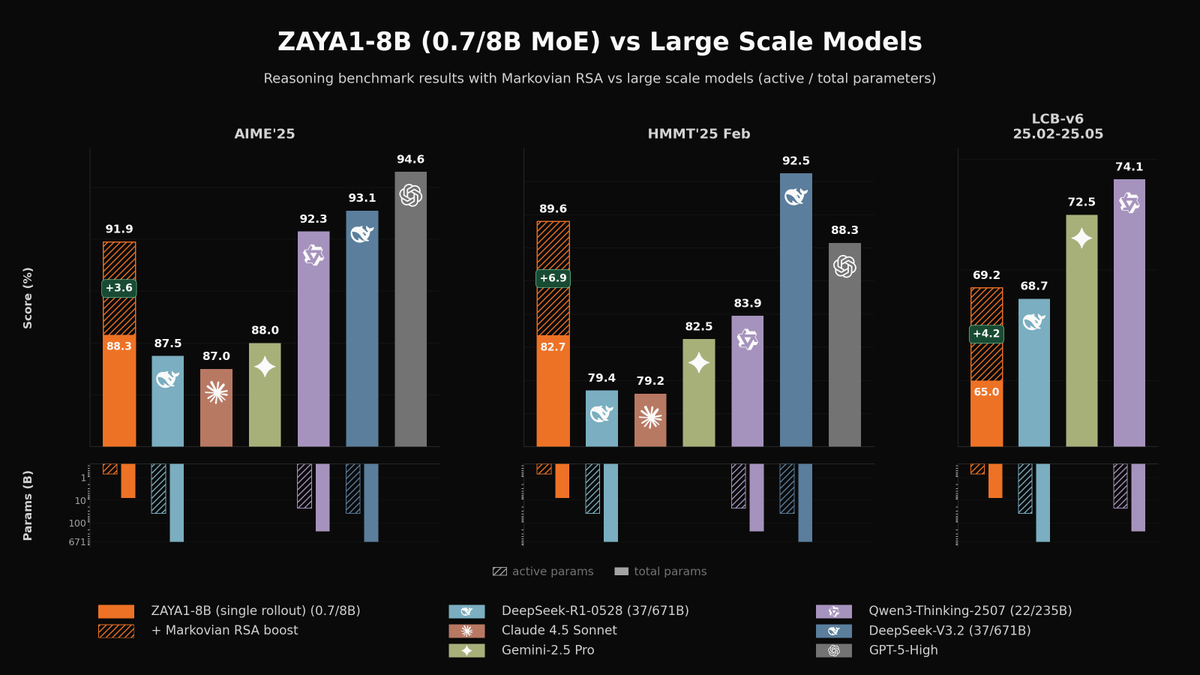

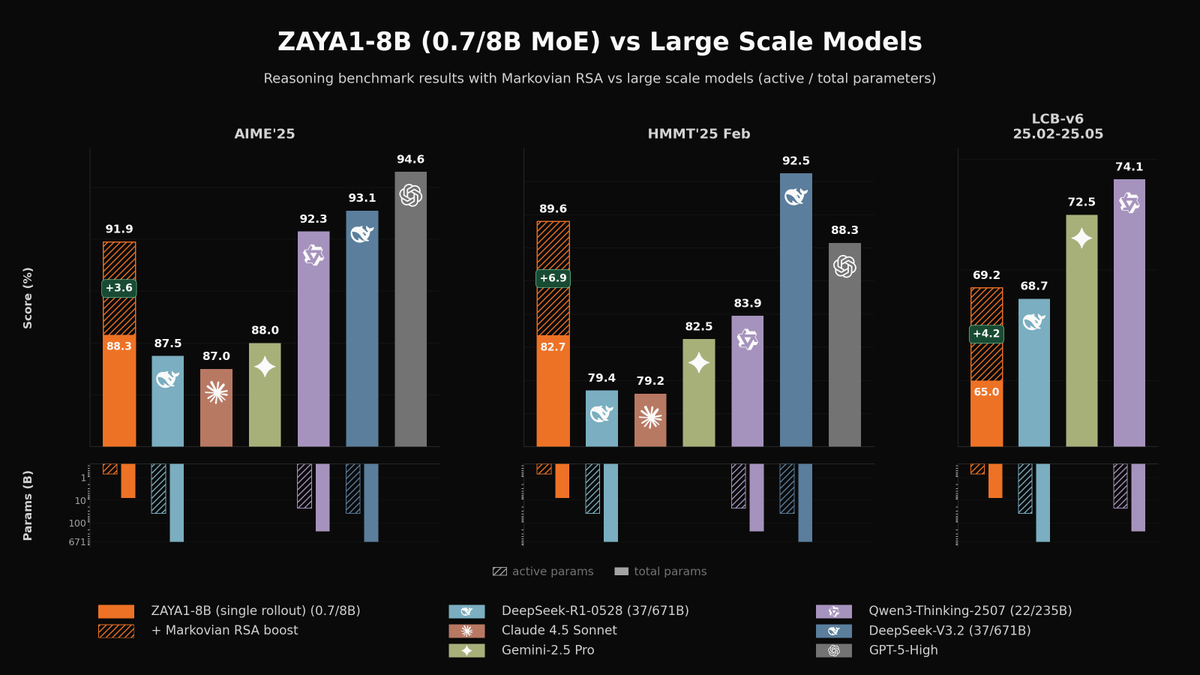

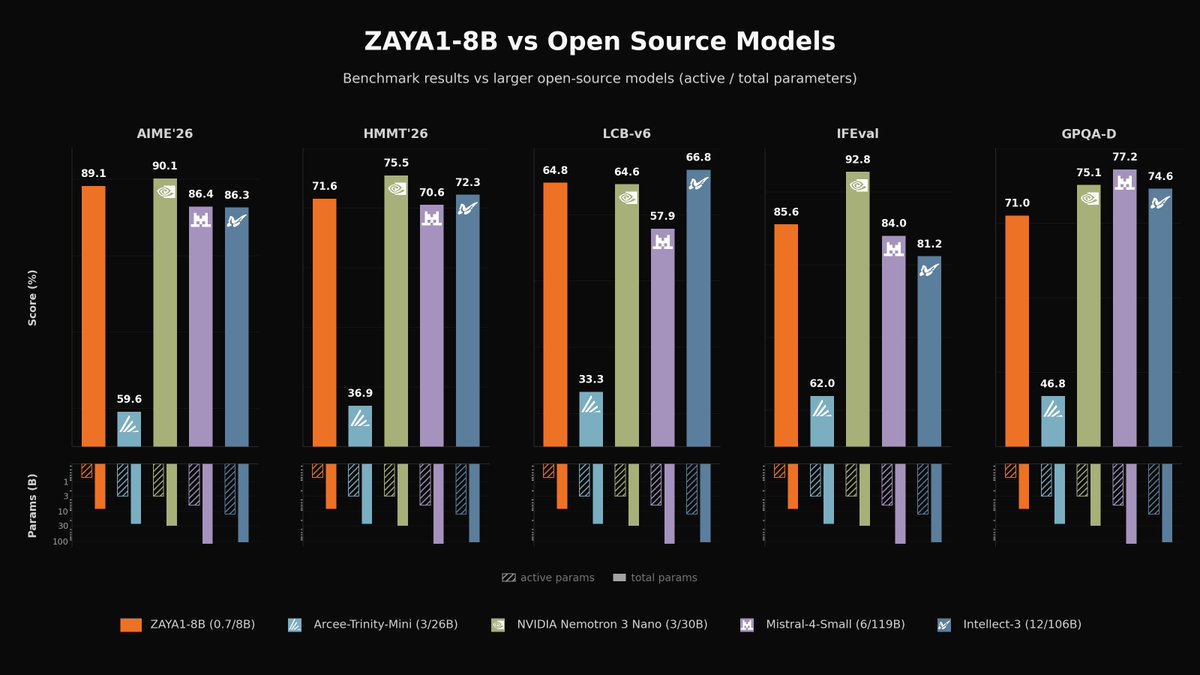

On math and code, ZAYA1-8B beats every model in its SLM weight class and is ahead of Qwen3.5-4B and Gemma-4-E4B. On challenging mathematical reasoning tasks, it is competitive with first-generation frontier reasoning models such as DeepSeek-R1-0528, Gemini-2.5-Pro, and Claude 4.5 Sonnet.

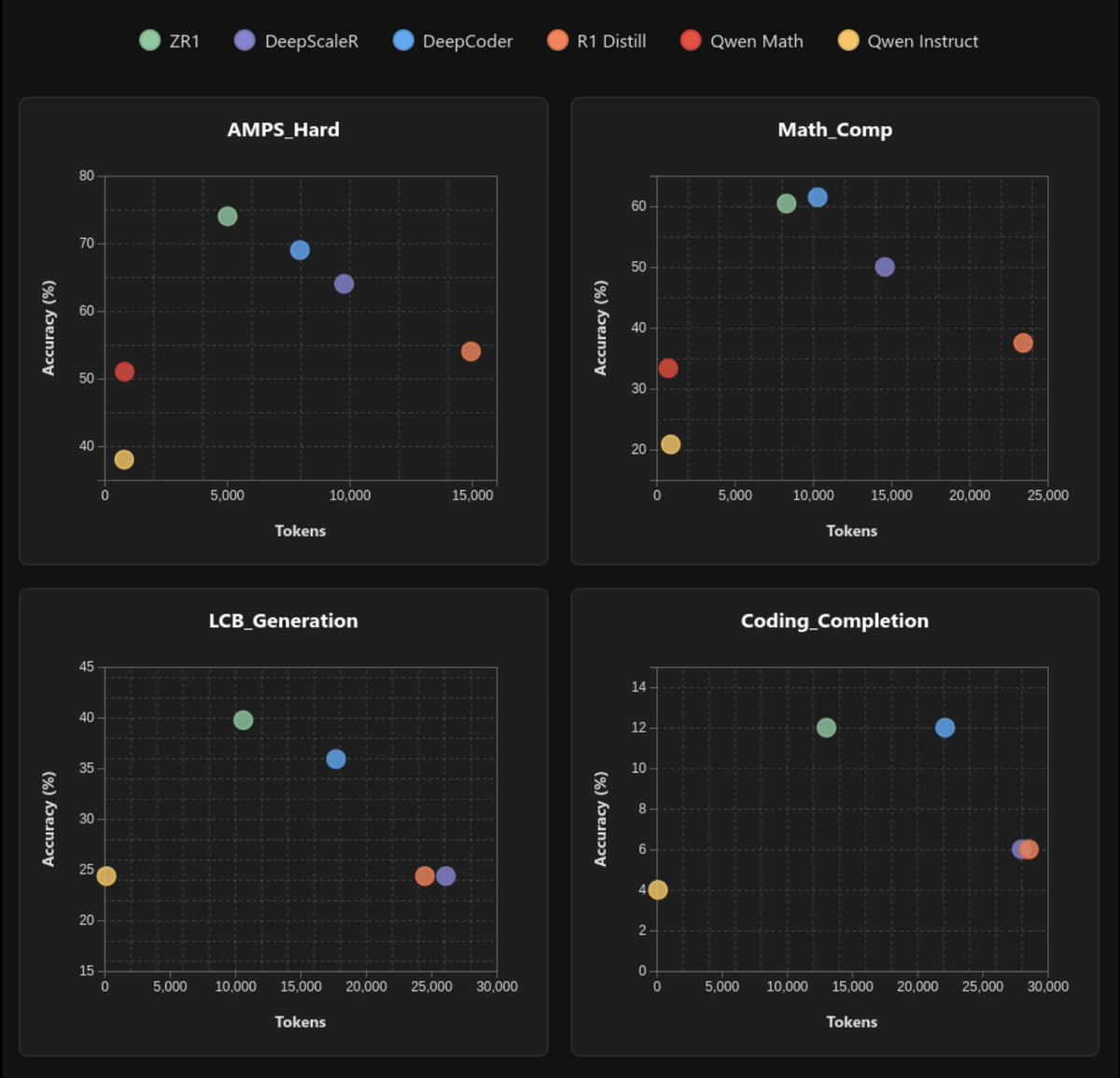

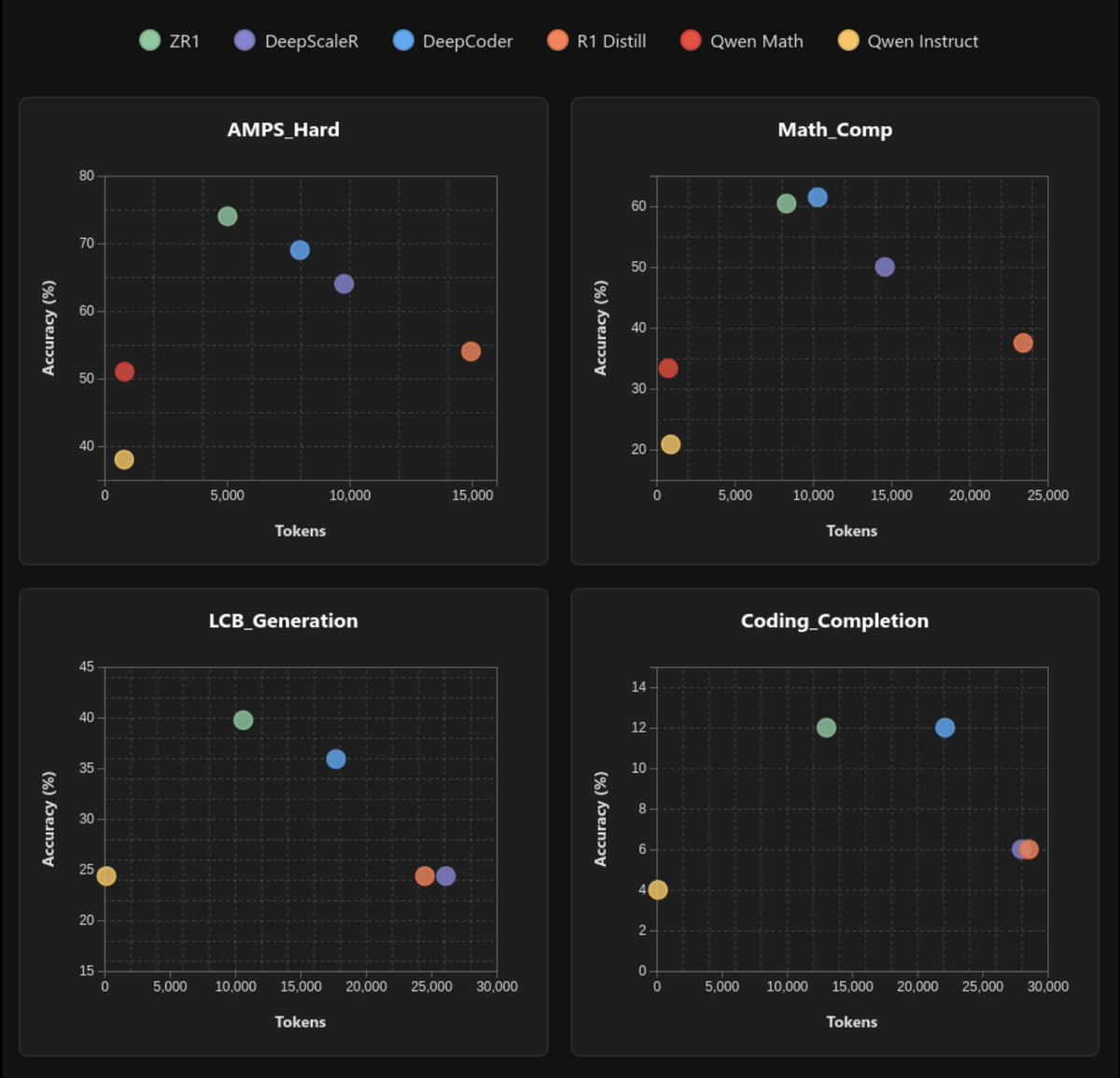

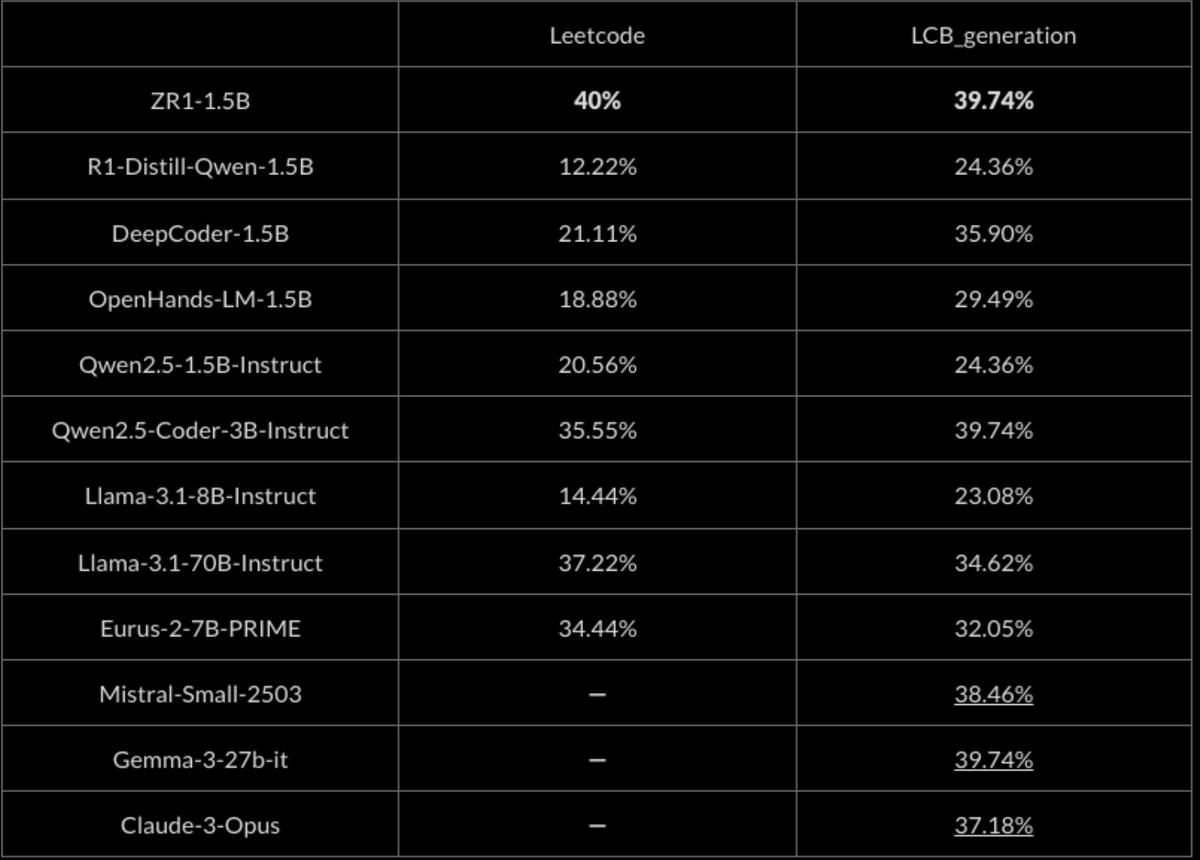

On hard coding evaluations, ZR1-1.5B achieves rough parity with Claude3-Opus and Gemma2-27B-instruct and improves on the base R1-Distill-1.5B model by more than 50%. ZR1-1.5B is SoTA on coding evaluations for its size, greatly outperforming code reasoning models such as OpenHands.

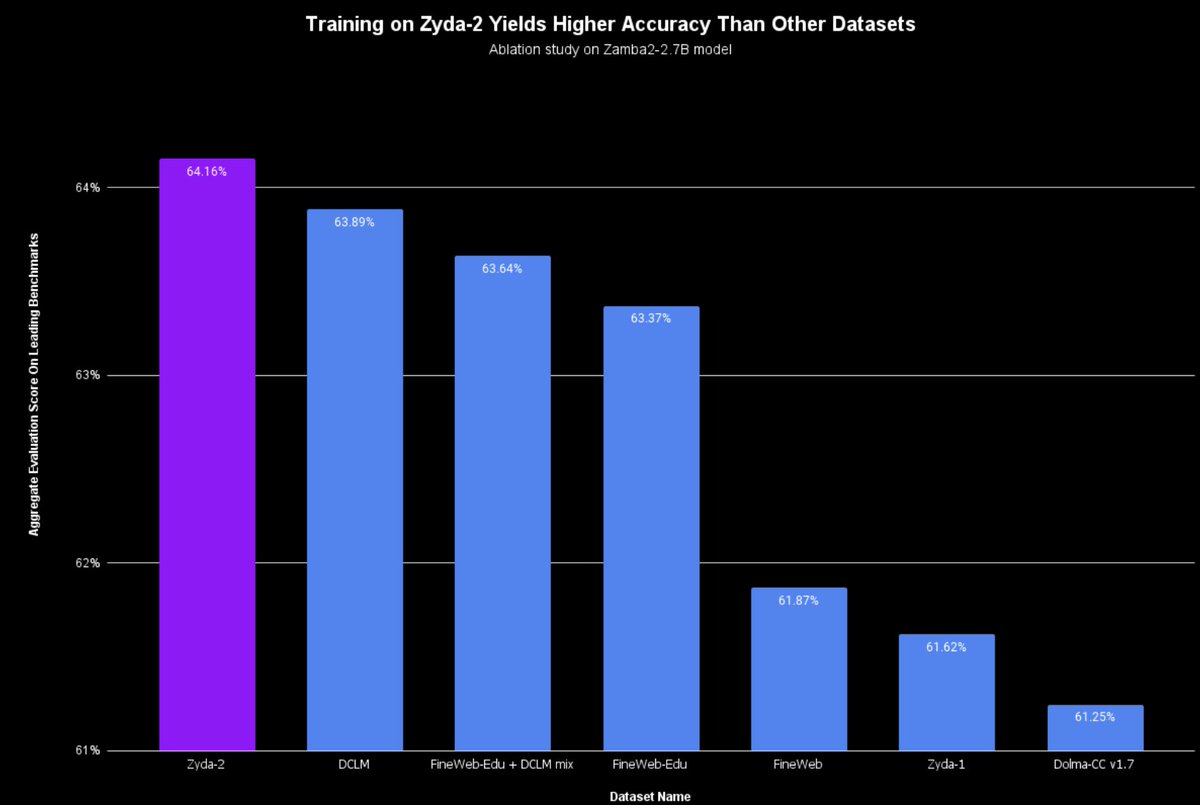

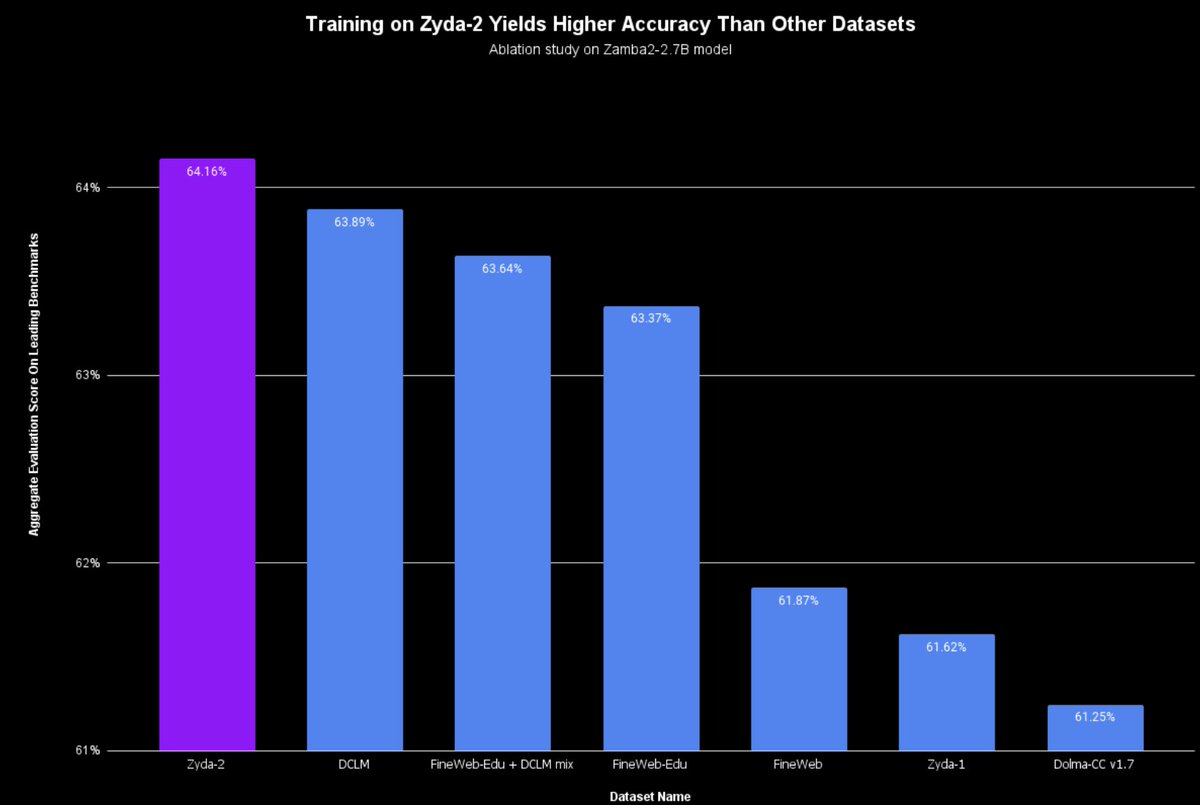

We built Zyda-2 with the following pipeline:
