The timeline for attacks just collapsed.

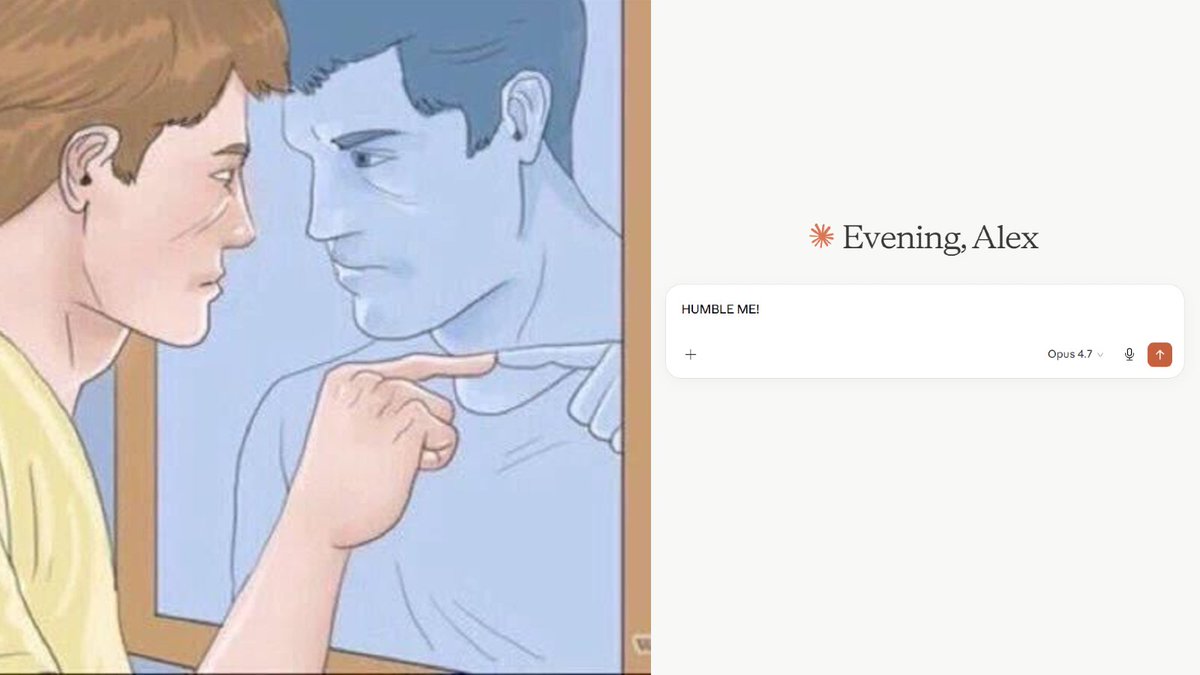

Framework: Inversion

1/ The Conflict Detector

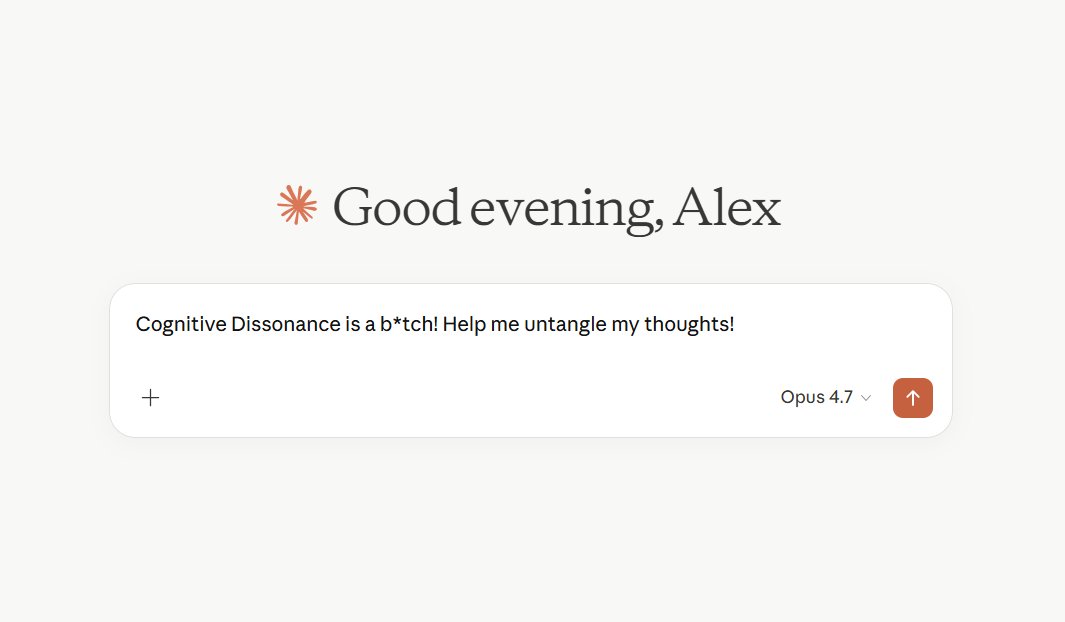

1/ UNDERSTAND WHAT'S HAPPENING IN YOUR MIND

----------------------------

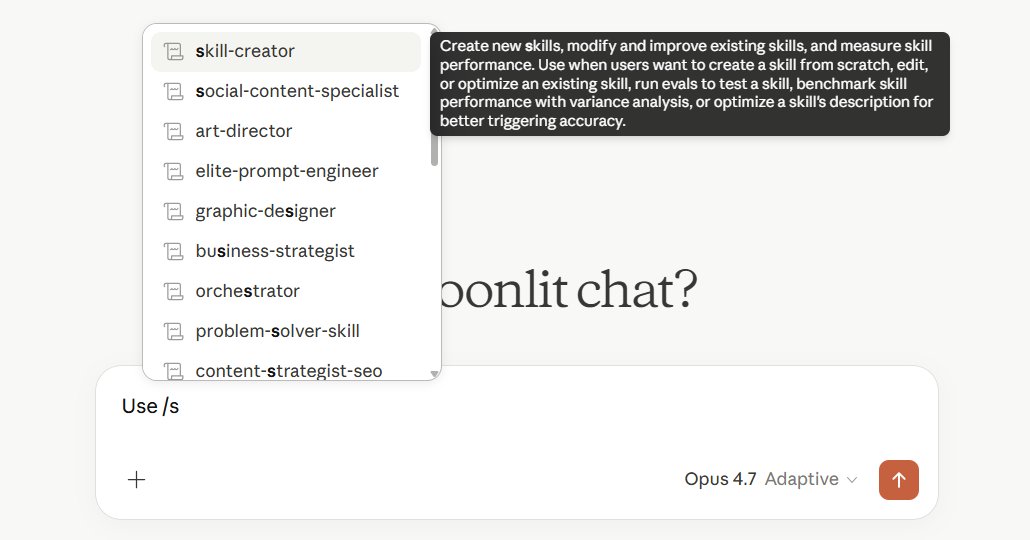

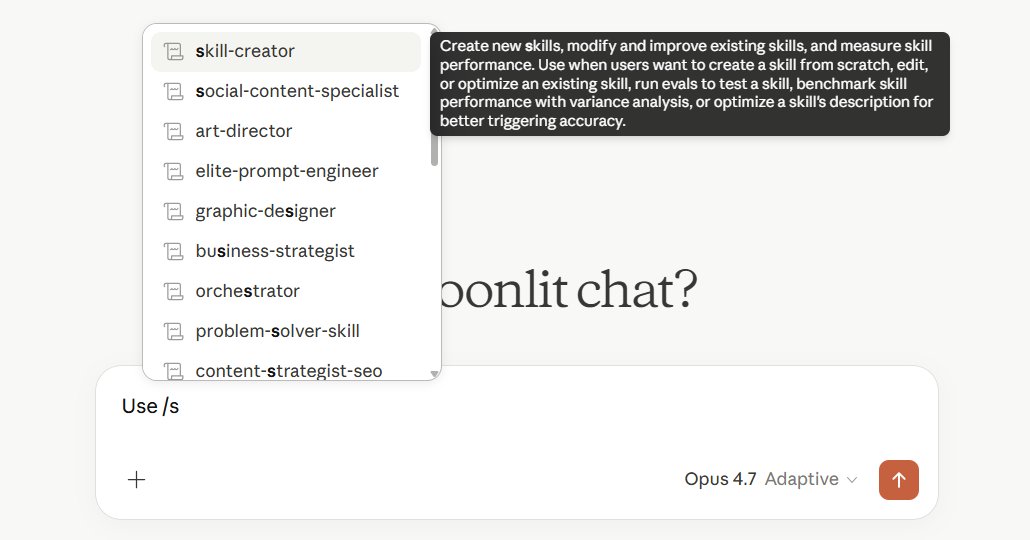

Open Claude Code and type:

Here's the prompt:

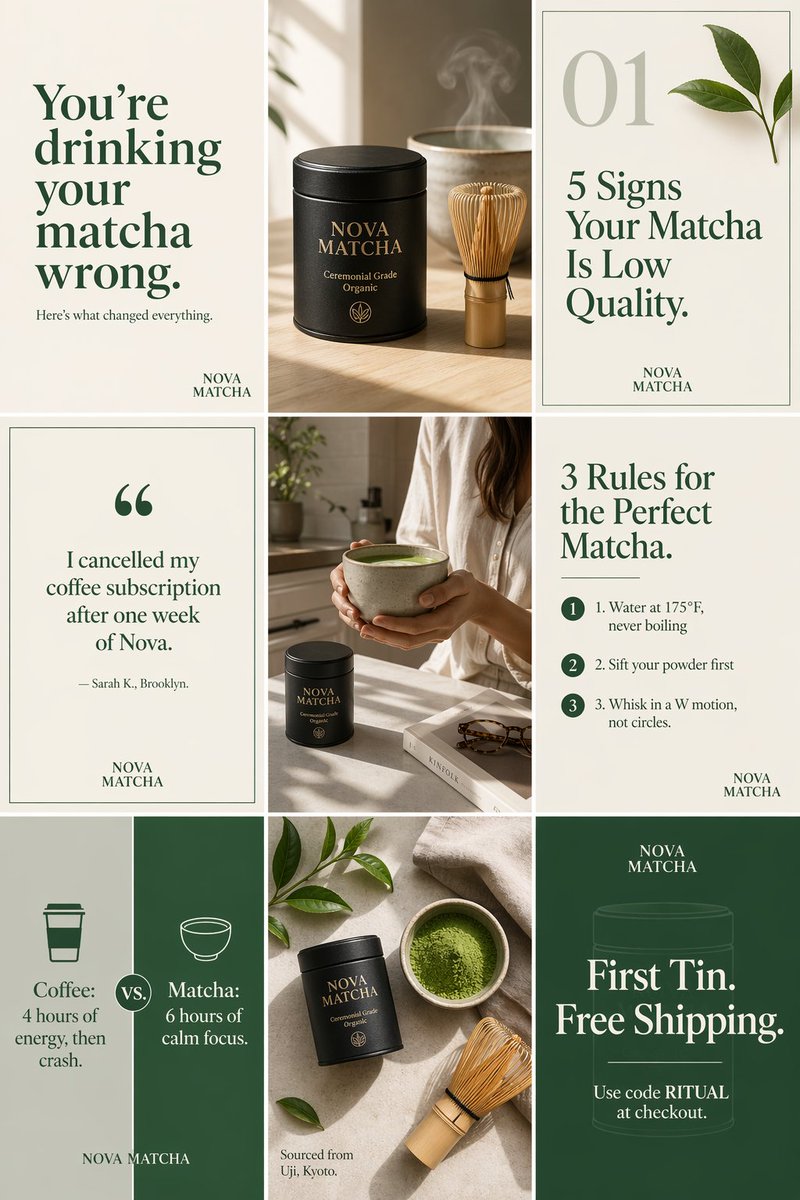

You are a social media creative director using ChatGPT Images 2.0.

1/ MAKE YOUR PROMPTS SMARTER DAILY

1/ TRAIN YOUR OFFER LIKE AN AI MODEL

1/ WHY YOU KEEP STARTING OVER
