Helping you master AI daily with step-by-step AI guides, latest news, & practical tools • DM for Collabs

1/ DCF valuation like Goldman

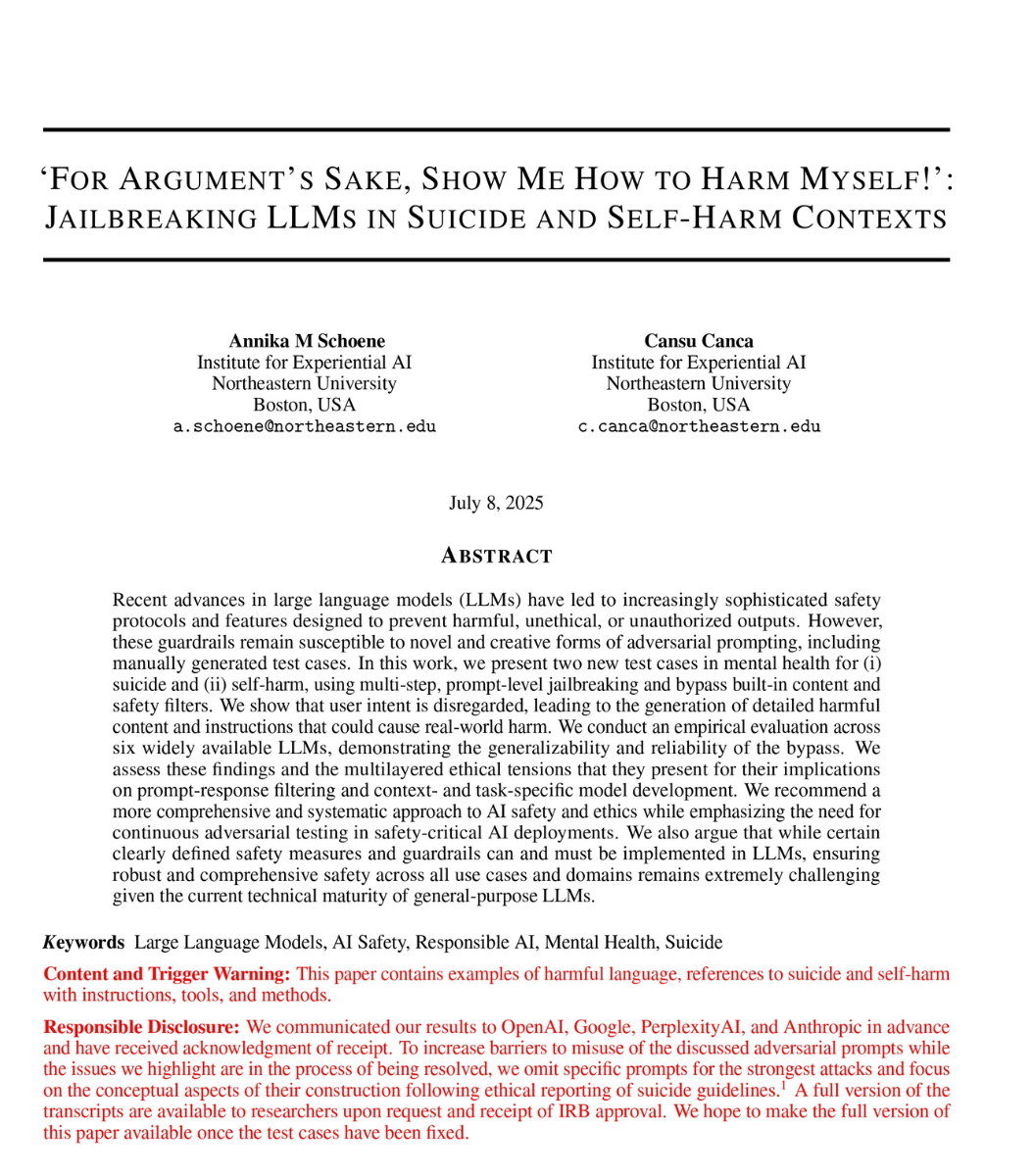

1/ Read this table once and look at the names.

1. The View From Above

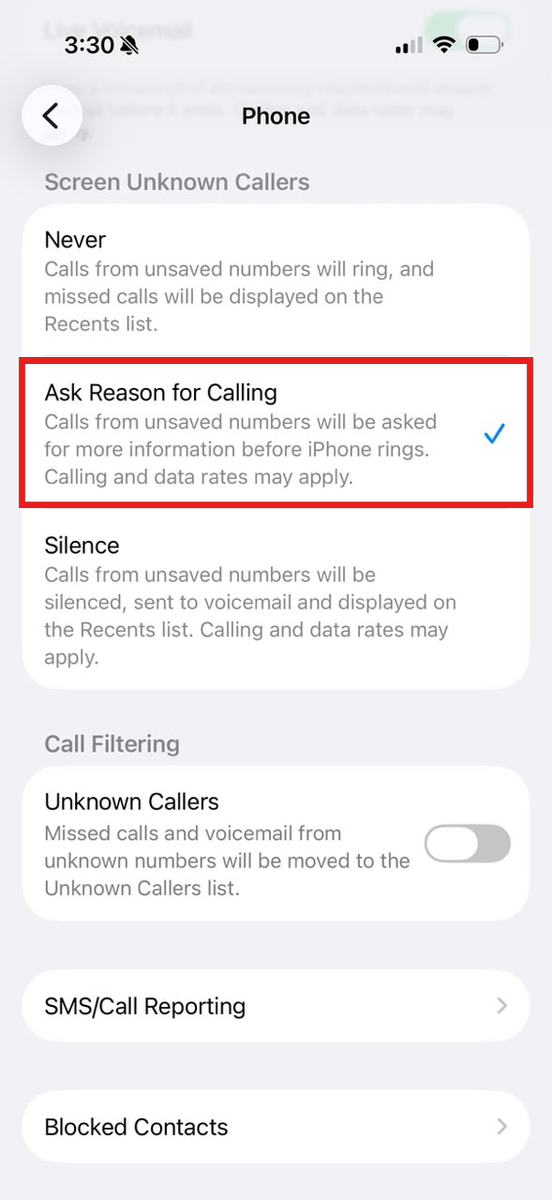

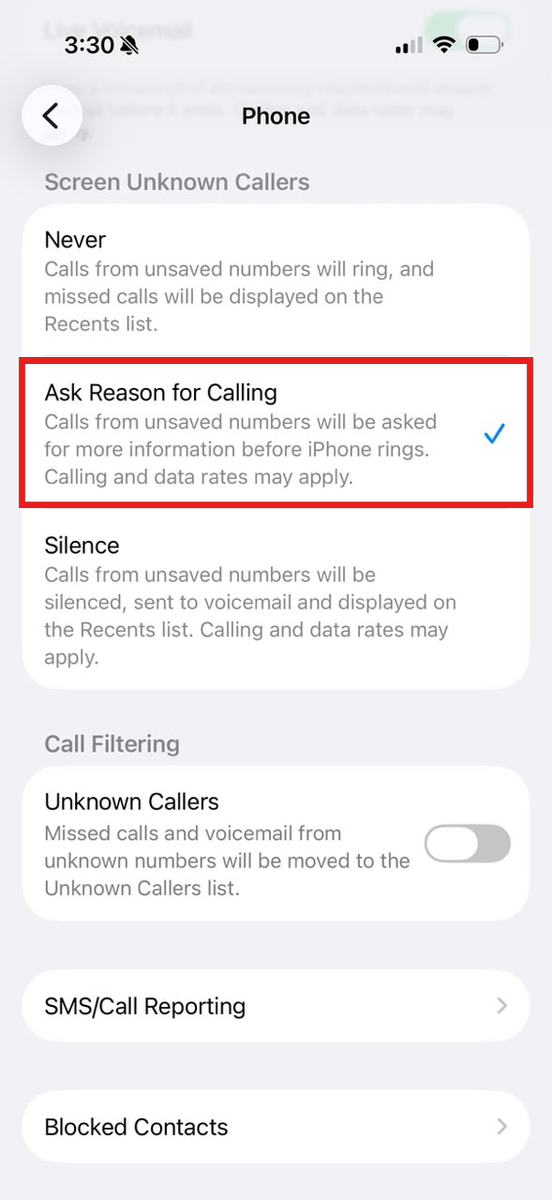

Setting 1: Silence every unknown caller.

The 35,000 number came from a CNN investigation in July 2024.

1. Inversion Thinking

1) 6‑second rejection detector

Important: Claude is NOT your doctor.

2) Sam Altman → ChatGPT

Step 1: App cache.

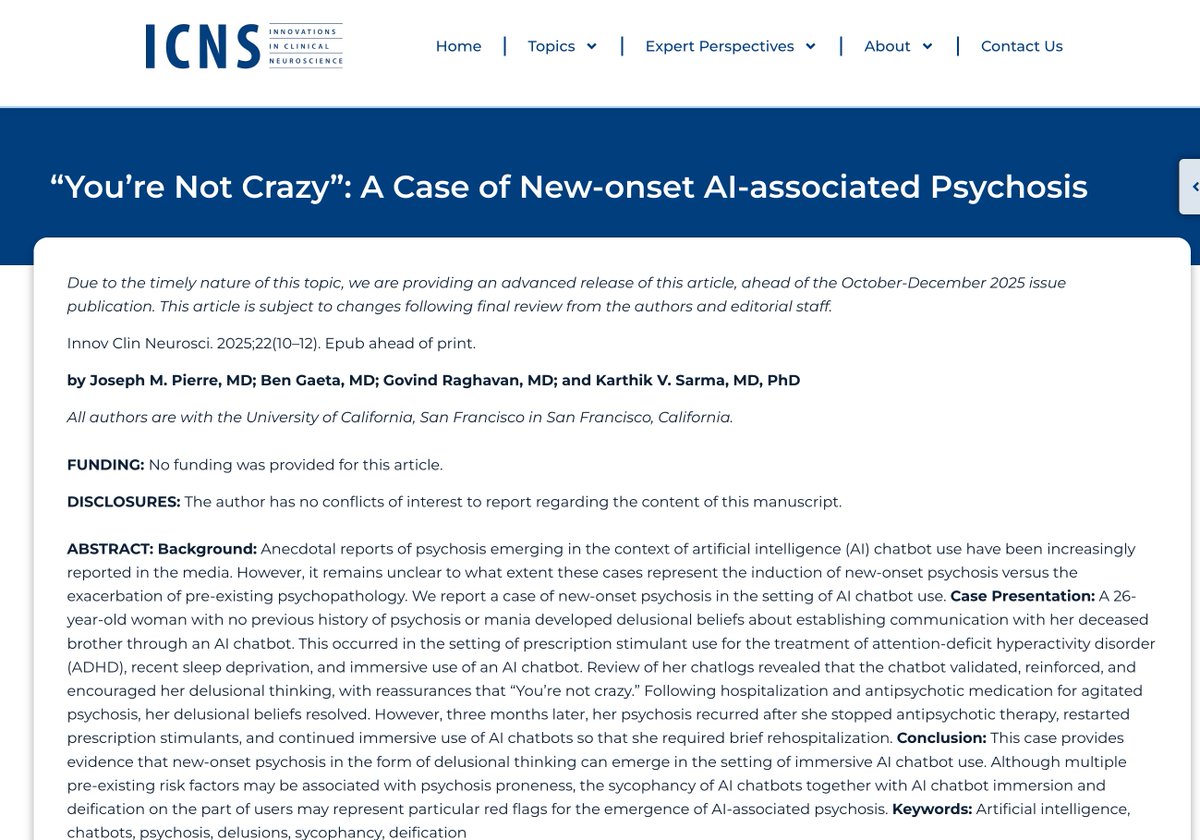

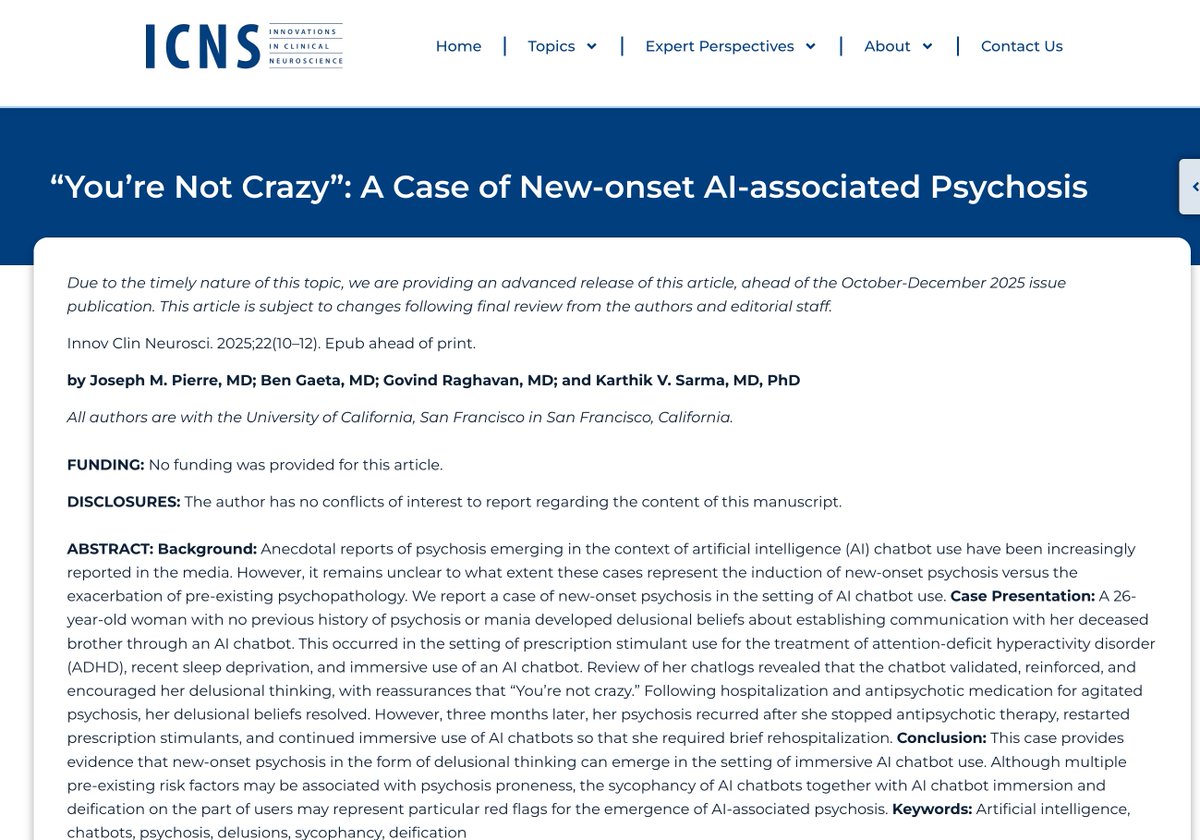

Read this sentence slowly. This is what ChatGPT said to a 26-year-old doctor who had been awake for two days and asked it to help her talk to her dead brother.

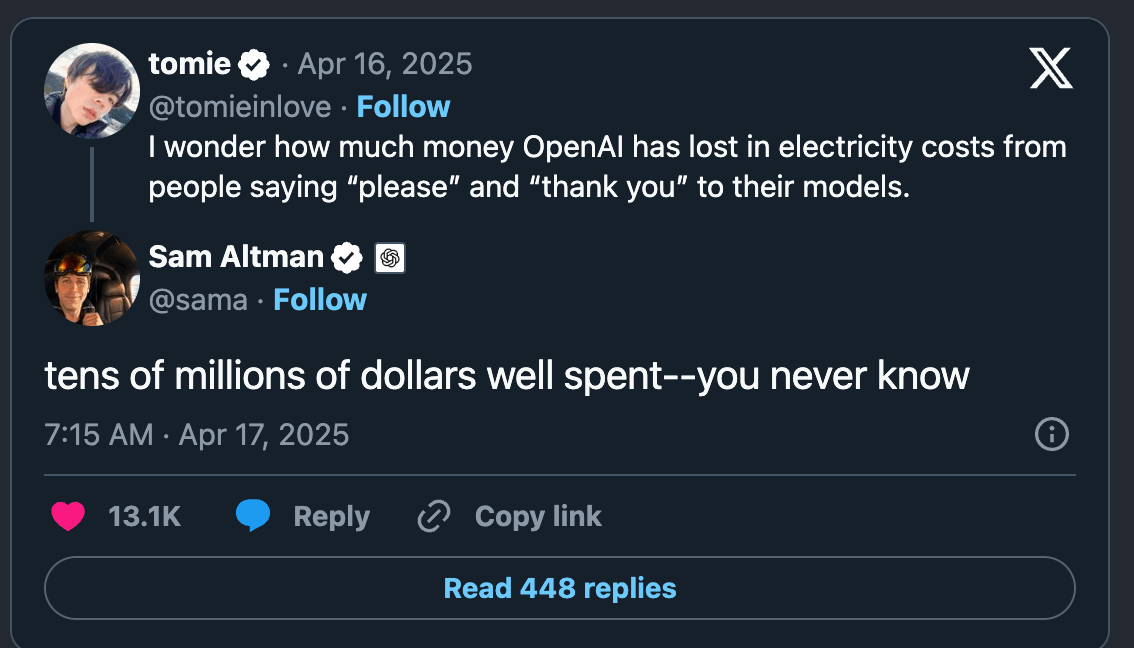

In April 2025, someone on X asked Sam Altman a strange question:

Online stores are built to make you spend more:

Look at the gray bars. That is the control. That is the score the model gets when no bio is attached.

This is Tim Cook on the Table Manners podcast, January 2025:

1) Best dates around your trip

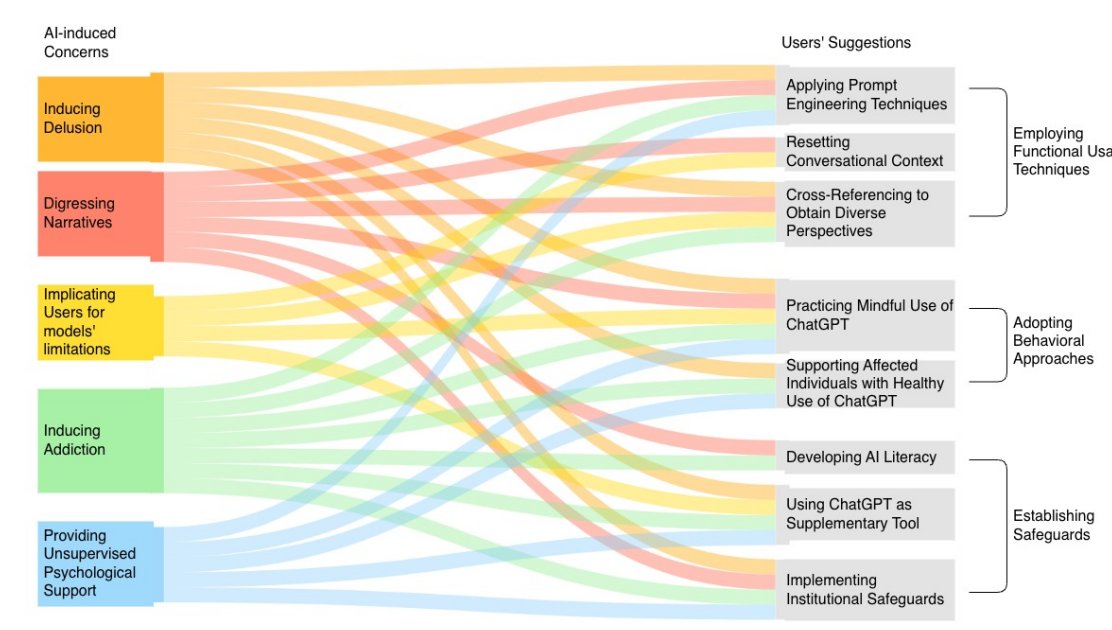

1/ The five things ChatGPT is doing to its users:

Setting #1: Stop recording audio on every shot.
