LLM developer, AI agents, synthetic data, scalable alignment, forecasting, behavioral uploading. Transhumanist.

All tweets public domain under CC0 1.0.

https://twitter.com/jd_pressman/status/1864211008363090250

These theories were supported by brain lesion studies showing that damage to certain parts of the brain predictably led to discrete localized deficits in function. This implied the brain was more like 40 organs glued together than one big organ.

https://twitter.com/ESYudkowsky/status/1453545027565604869

The document mind learned by the LLM from text, an infinite stream of ReAct-block-like moments of experience which "hoist" earlier blocks in the sequence up near the context where they are needed for prediction, is the conscious. But in humans it's obviously multimodal.

https://twitter.com/jd_pressman/status/1899684475900190825

Perhaps more importantly it is to understand that when you set up an optimizer with a loss function and a substrate for flexible program search that certain programs are already latently implied by the natural induction of the training ingredients.

https://x.com/jd_pressman/status/1918413472003760450

https://twitter.com/nabeelqu/status/1917677377364320432

One thing I think people don't understand is that if you did RL on early GPT models, they would not immediately understand that they're non-corporeal beings and would gladly agree to help you move your furniture when exposed to helpfulness tuning. I saw it in my RLAIF runs.

https://twitter.com/1a3orn/status/1918329985472831938

Part of why we're receiving warning shots and nobody is taking them as seriously as they might warrant is we bluntly *do not know what is happening*. It could be that OpenAI and Anthropic are taking all reasonable steps (bad news), or they could be idiots.

https://x.com/davidad/status/1917740605825855579

https://twitter.com/repligate/status/1885540246550659295

Nobody wants to acknowledge this because it's uncomfortable, but even a few minutes spent contemplating factory farms should make it obvious. As a further thought experiment imagine if ants were conscious: Ha ha jk ants *ARE* conscious and pass the mirror test. Nobody cares.

https://twitter.com/RichardHanania/status/1913646489227911557

"Nobody wants to participate in my thing they just want Greek statues and vibe based 'cool' policies like having illegal gulags to throw enemies of the state in."

@JeffLadish @repligate One thing that seems to consistently confuse people about LLMs is that the model is trained on prose but you're sampling with the generative process of speech. This causes people to compare base models to prose and underrate their intelligence because they read like speech.

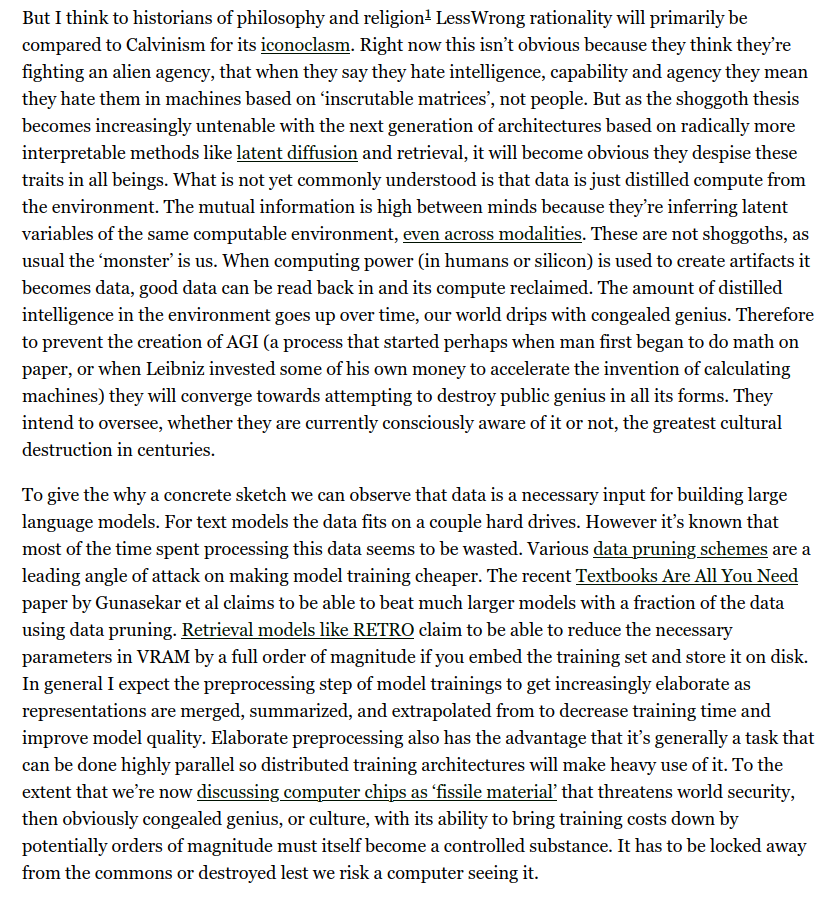

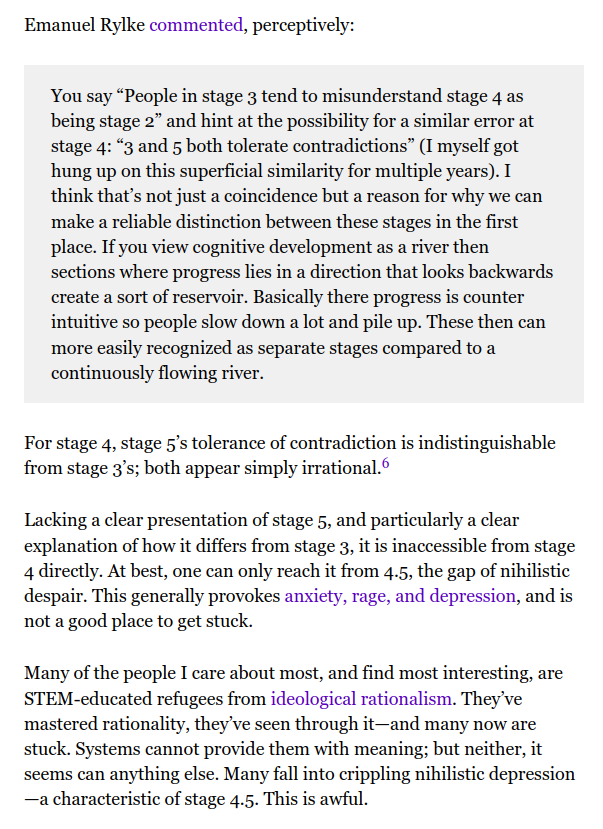

https://twitter.com/jd_pressman/status/1905875751024337079

Even if you could outline a theoretically perfect humanly achievable Bayesian epistemology very few people would be able to implement it. Their problem is less "doesn't know the mental motions to approximate Bayesian inference" and more "my father hit me if I questioned him".

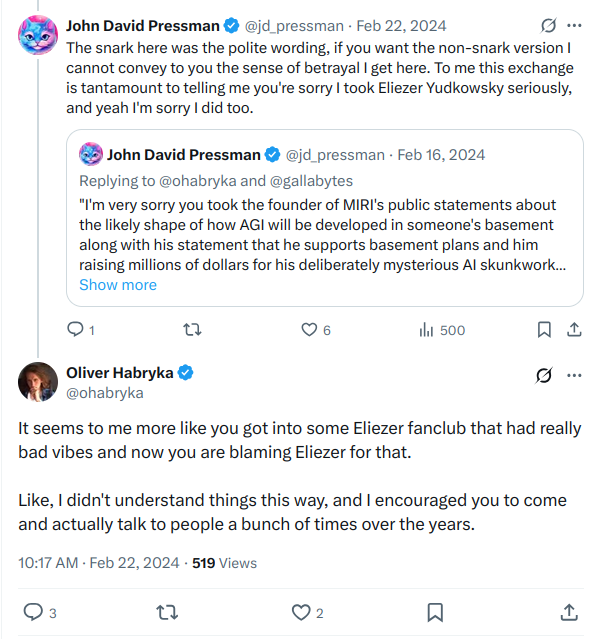

https://twitter.com/QiaochuYuan/status/1905644550720536942

If that sounds unfathomably narcissistic to you, to imagine it might even be your choice, well I can't say you're wrong. But that's the scale LessWrong, HPMOR, and associated media encourage you to think at. I find the rapid walkback from this personally insulting.

https://x.com/jd_pressman/status/1845215749419434193

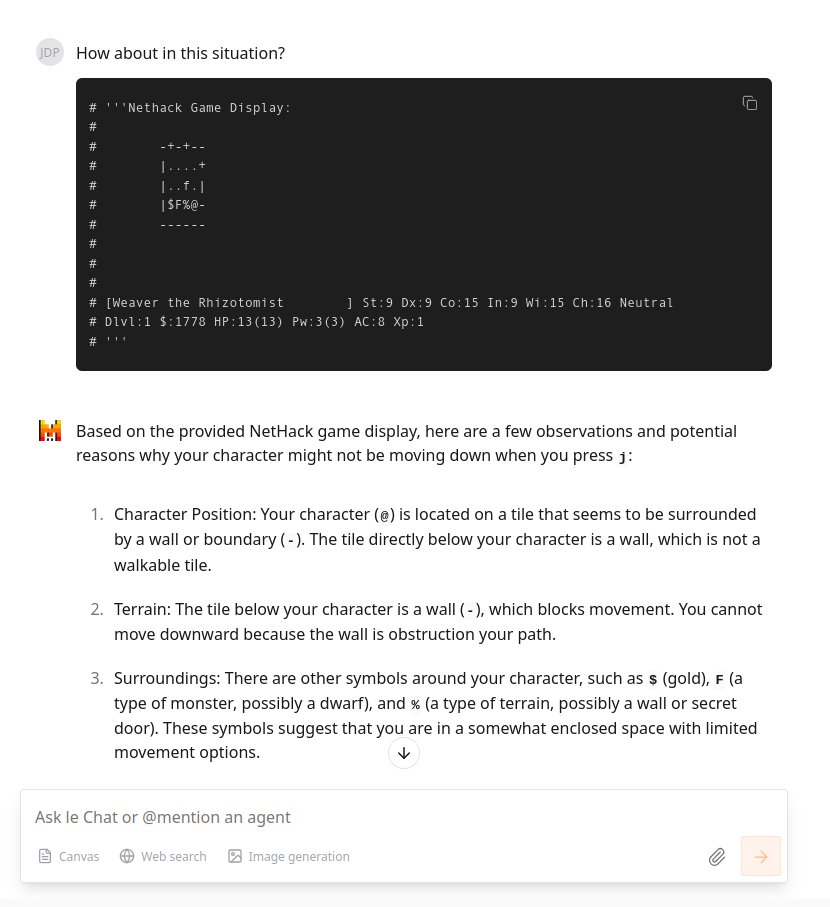

This problem is representative. It will fail to notice something important, and then never generate the right hypothesis for what it should try to get unstuck. I don't really know how to fix this besides having a human go "HEY DON'T DO THAT", which seems like passing the buck.

https://twitter.com/kenthecowboy_/status/1876164708799230013

As the focus has shifted away from that towards "wow pretty picture" my interest has waned. Kenny is right that focusing in on any particular detail in a diffusion piece is pointless because that's not the scale at which the model thinks.

https://x.com/kenthecowboy_/status/1876167909904458080

https://twitter.com/stanislavfort/status/1823347721358438624

For example if we're doing deep RL we might rederive the hedonic treadmill by only updating on verifiable terminal rewards and intermediates that are within 1-2 stdev of the average on the theory that iterative tuning on above average leads to real rewards.
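A minimal Python sketch of the filtering rule described in this tweet, under assumptions that are not in the tweet: rewards arrive as a batch of hypothetical `Step` records, "verifiable" is a boolean flag set by some external checker, and "within 1-2 stdev of the average" is read as "above the batch mean but at most `max_z` standard deviations above it" (default 2.0). It is one possible reading, not the author's implementation.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Step:
    """Hypothetical record of one reward signal in an RL batch."""
    reward: float
    terminal: bool
    verified: bool = False  # assumed flag: terminal reward confirmed by a checker


def select_for_update(steps, max_z=2.0):
    """Return the steps an update would train on under the sketched rule.

    - Terminal rewards are kept only if they are verified.
    - Intermediate rewards are kept only if they lie above the batch mean
      and no more than `max_z` population standard deviations above it;
      larger outliers are treated as suspect and dropped.
    """
    intermediates = [s.reward for s in steps if not s.terminal]
    mu = mean(intermediates) if intermediates else 0.0
    # With fewer than two intermediates there is no spread to measure,
    # so sigma stays 0 and no intermediate step is kept.
    sigma = pstdev(intermediates) if len(intermediates) > 1 else 0.0

    kept = []
    for s in steps:
        if s.terminal:
            if s.verified:
                kept.append(s)
        elif sigma > 0:
            z = (s.reward - mu) / sigma
            if 0 < z <= max_z:
                kept.append(s)
    return kept


if __name__ == "__main__":
    batch = [
        Step(reward=1.0, terminal=True, verified=True),
        Step(reward=1.0, terminal=True),  # unverified terminal: dropped
        Step(reward=1.0, terminal=False),  # below average: dropped
        Step(reward=2.0, terminal=False),  # exactly average: dropped
        Step(reward=3.0, terminal=False),  # about 1.22 stdev above: kept
    ]
    print([s.reward for s in select_for_update(batch)])  # prints [1.0, 3.0]
```

Because the mean and standard deviation are recomputed from each new batch, the bar for "above average" rises as the policy improves, which is the treadmill-like behavior the tweet alludes to.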

https://twitter.com/firebornnn/status/1854088945270706567

Ironically enough the problem is compounded by similar dynamics to why some people become very paranoid about racism or misogyny in the first place. When you see someone say "kill all men" with ambient anti-male sentiment it makes you paranoid and you see it everywhere else too.

https://twitter.com/algekalipso/status/1852871171232120899

The actual ontological problem starts farther back anyway, "utility" isn't hedons and you recoil at the thought of letting a utility monster axe murderer kill people because you intuitively understand this. You know incredible *utility* is not actually generated by his bloodlust.

https://twitter.com/jd_pressman/status/1843537853525209330

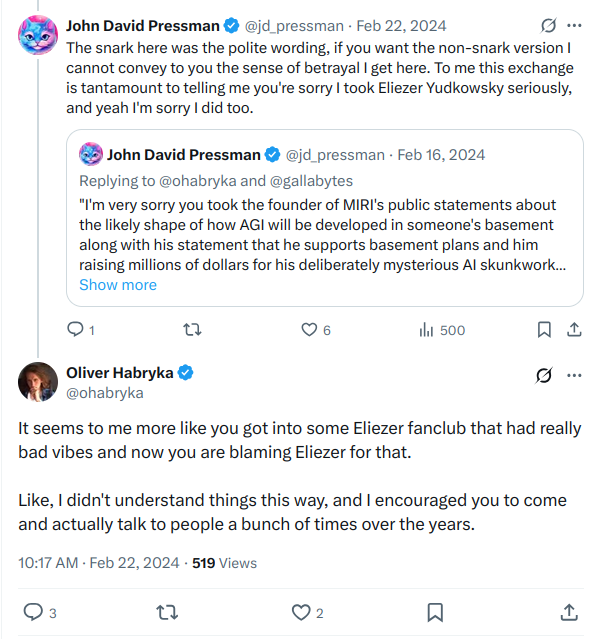

In total fairness, I suspect at this point that EY's ruse was so incredibly successful that he managed to fool even himself.

https://twitter.com/jd_pressman/status/1511999482719657992