I do AI Alignment Research. Currently at @METR_Evals on leave from my PhD at UC Berkeley’s @CHAI_berkeley. Opinions are my own.

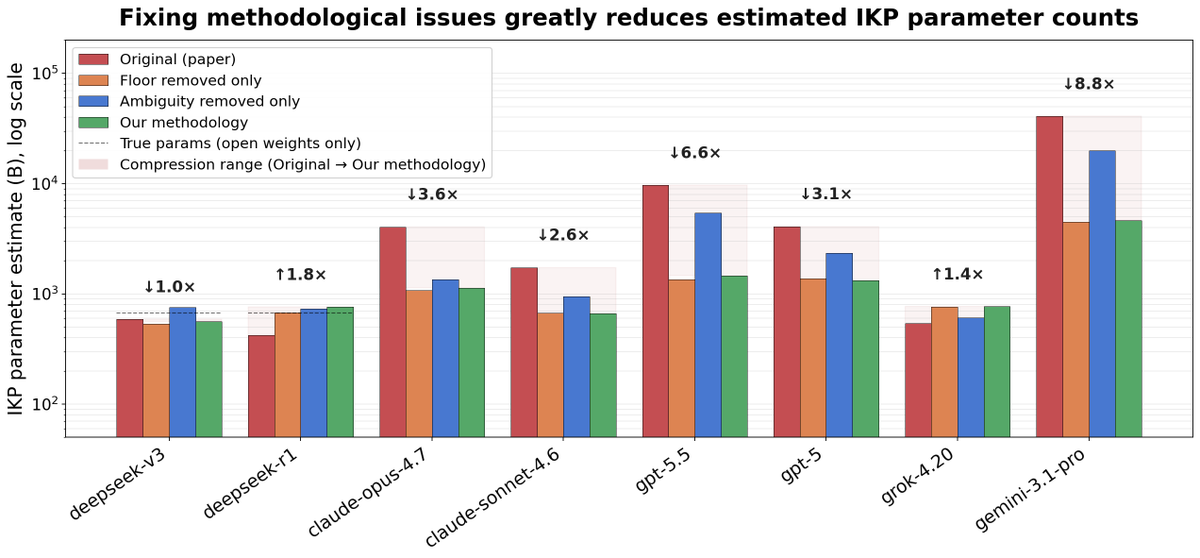

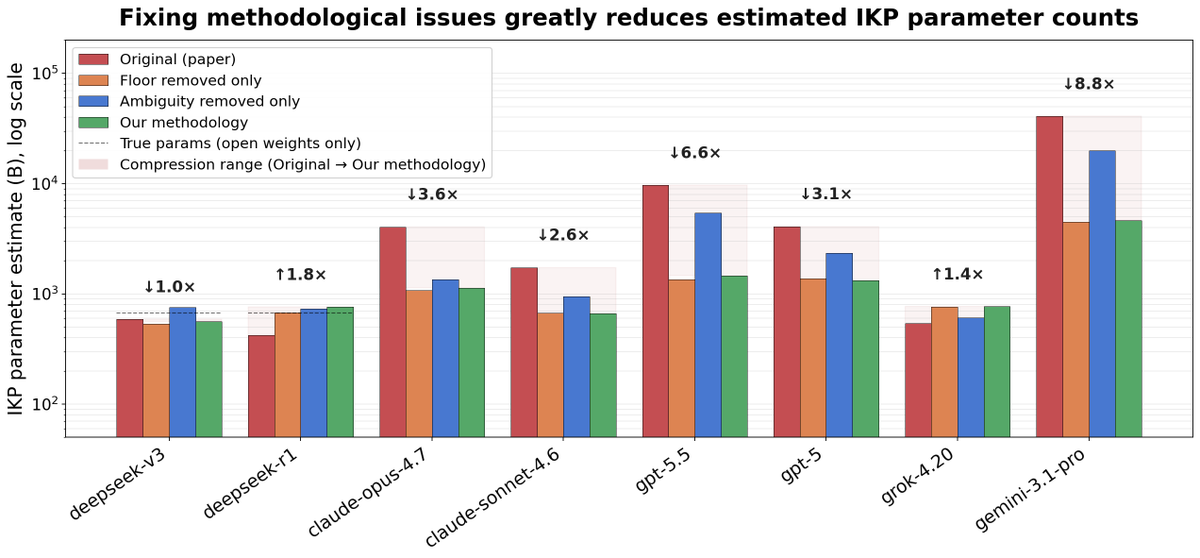

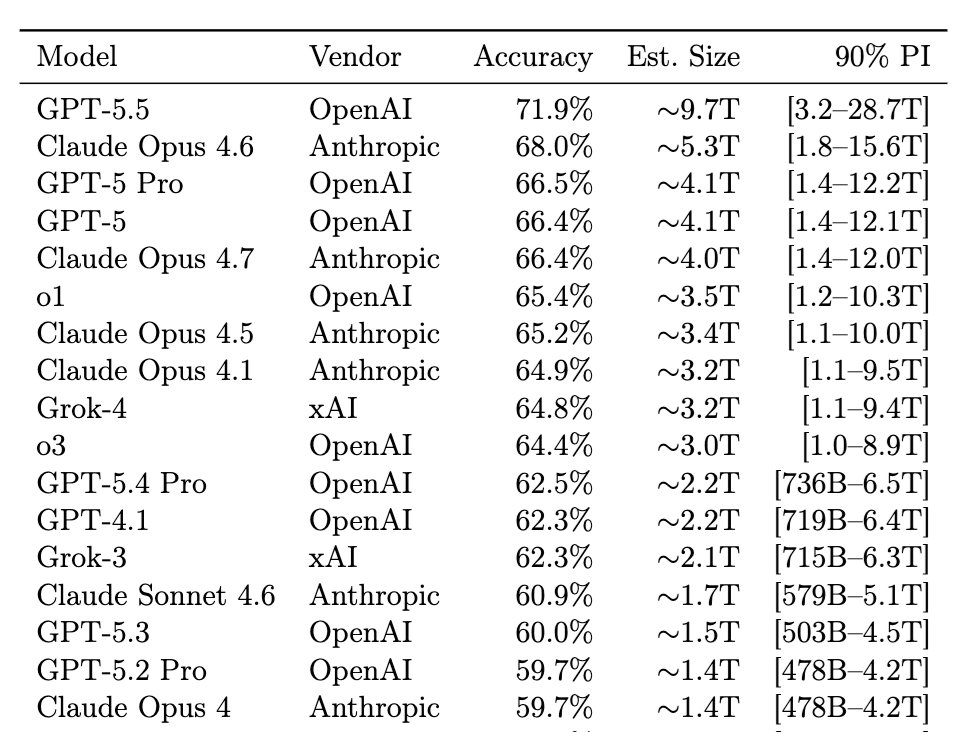

The paper, “Incompressible Knowledge Probes” (by @bojie_li), constructs a dataset of 1400 factual questions, and fits accuracy against parameter count.
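
As a rough illustration of what "fitting accuracy against parameter count" can look like, here is a minimal sketch. It assumes a straight-line fit of accuracy against log10 of the parameter count, which is a common choice but not necessarily the functional form the paper uses. The function name `fit_log_linear` and the data points are hypothetical and synthetic; they are not taken from the paper.

```python
import math

def fit_log_linear(param_counts, accuracies):
    """Least-squares fit of accuracy = intercept + slope * log10(params).

    Assumption: a linear relation in log-parameter space. The paper's
    actual functional form may differ.
    """
    xs = [math.log10(n) for n in param_counts]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(accuracies) / n
    # Closed-form simple linear regression.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, accuracies))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

# Synthetic, illustrative numbers only (NOT results from the paper).
params = [1e9, 1e10, 1e11]
acc = [0.2, 0.4, 0.6]
intercept, slope = fit_log_linear(params, acc)
print(f"accuracy ~= {intercept:.2f} + {slope:.2f} * log10(params)")
```

With these made-up points, the slope reads as the accuracy gained per tenfold increase in parameters.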

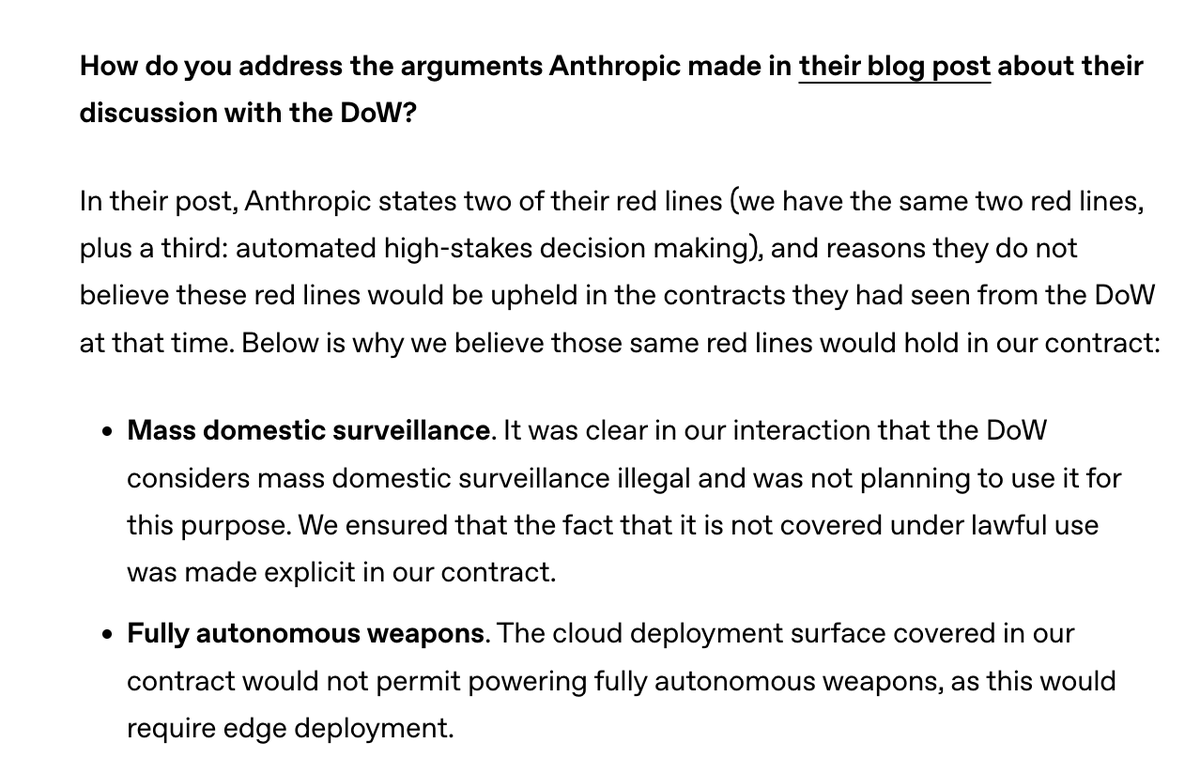

Why isn’t “intentional” enough? The DoW has long claimed that "incidentally" sweeping up Americans' data while targeting foreigners isn’t "intentional" domestic surveillance. And prior surveillance scandals often involved “incidental” collection.

This, of course, did not stop OpenAI from blatantly misrepresenting this language in the blog post and in Sam Altman's tweets!

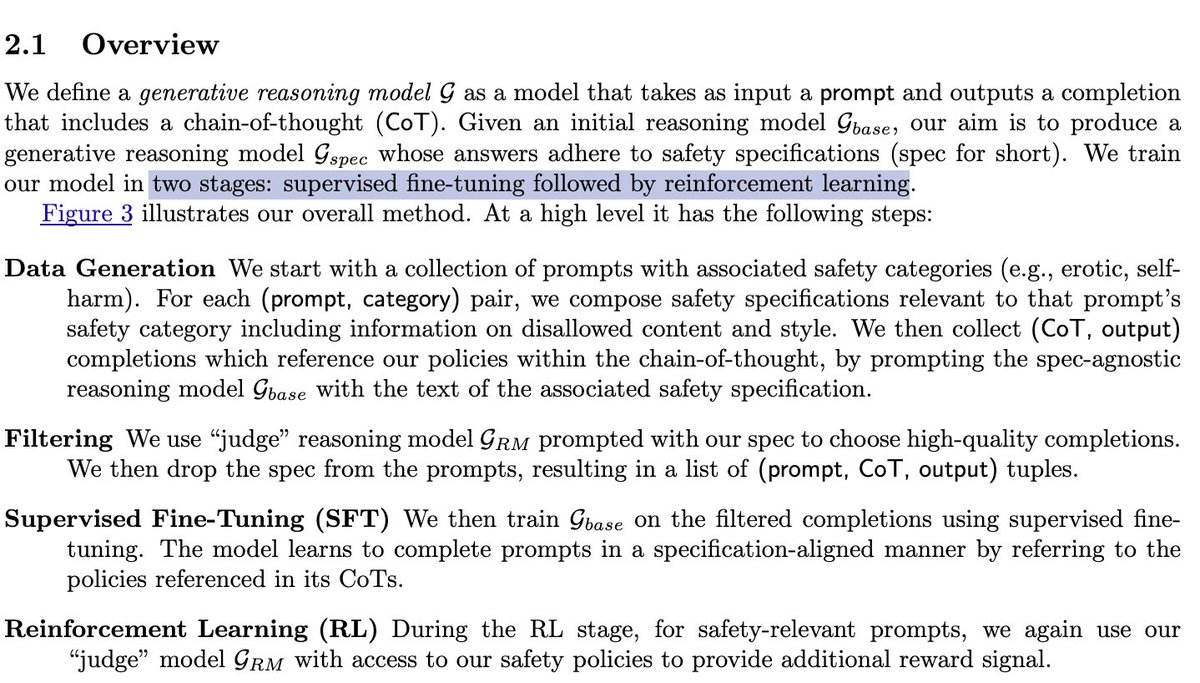

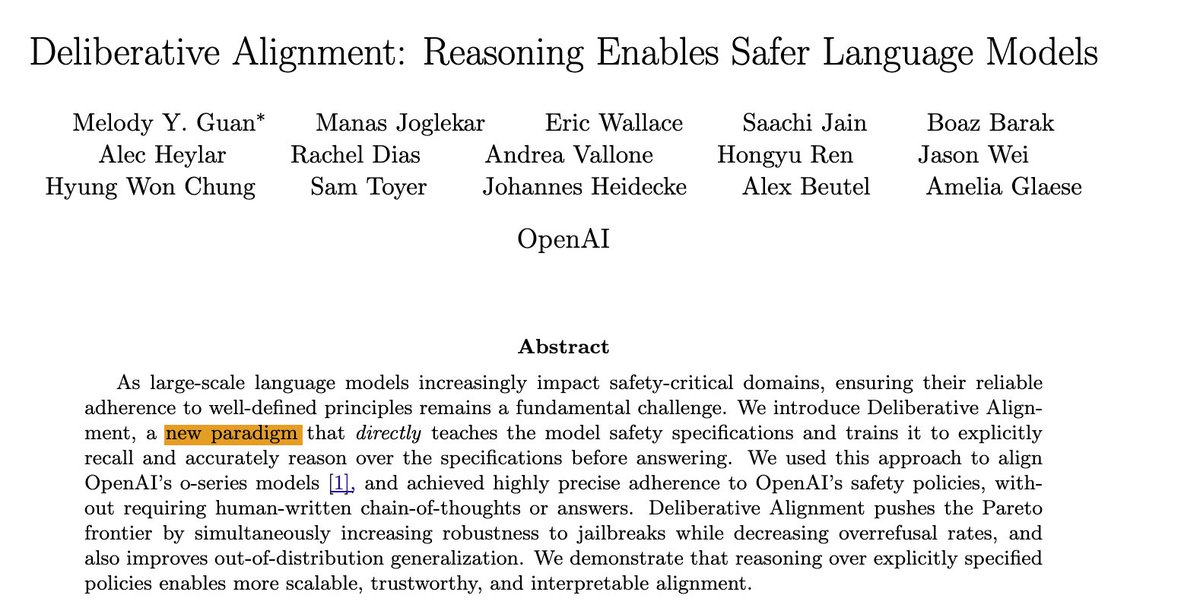

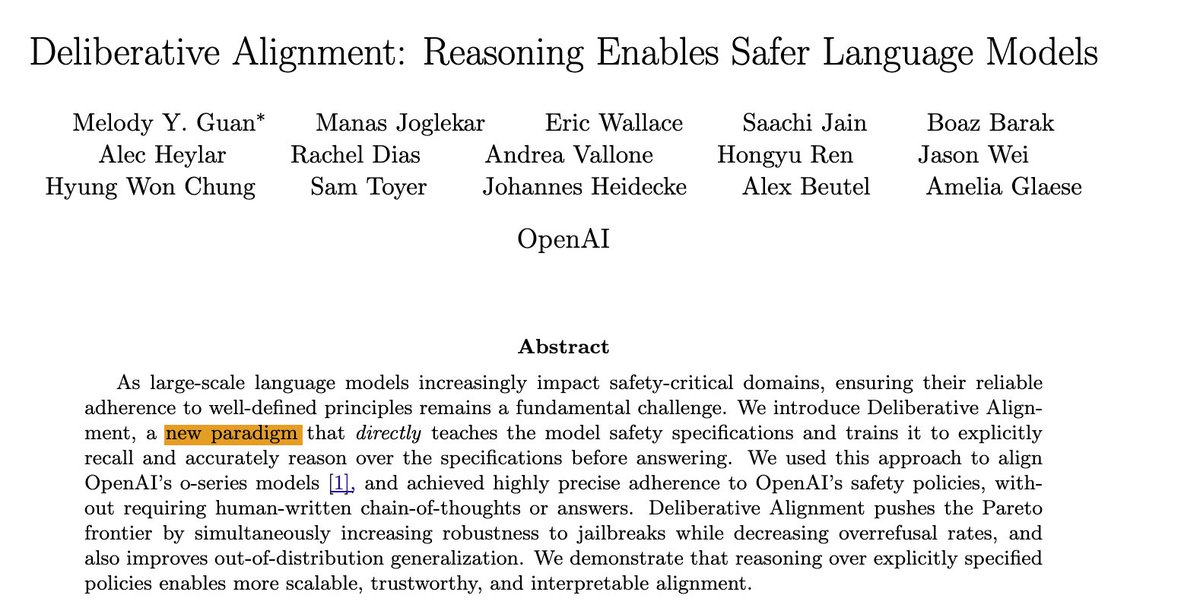

In the Deliberative Alignment paper, Guan et al. take a dataset of OAI policy-violating prompts, first use supervised finetuning to distill policy specifications into the generation model, then use a rating model with access to the policy specs to RLAIF the model.
