assoc prof @UCLLaws, fellow @ivir_uva. not resigned to today's digital power structures (yet). 🦣 https://t.co/pCUFKDvU4O https://t.co/cdvcO13Jmm

@huggingface @github @HelloCivitai @rgorwa There are a growing number of model marketplaces (Table). Some host models that can create clear legal liability (e.g. models that can output terrorist manuals or CSAM). They also host AI that may be used harmfully, and some are already trying to moderate this.

https://twitter.com/rasmus_kleis/status/1560509600671141888

Tuition fees are a political topic because they’re visible to students, but the real question is ‘how is a degree funded’? The burden continues to shift from taxation into individual student debt, precarious reliance on int’l students, and lecturer pay.

https://twitter.com/krausefx/status/1560373298382442503

Form: m.facebook.com/help/contact/5…

https://twitter.com/OfficeforAI/status/1548965702878601218

Meanwhile, regulators are warned not to actually do anything and to care most of all about unspecified, directionless innovation, as should become clearer this afternoon if the UK's proposed data protection reforms are published in a Bill.

https://twitter.com/EUCourtPress/status/1511245698771173379

This further reinforces that if you are going to order a targeted retention of data, it has to be done by an independent body, not by a public prosecutor (HK v Prokuratuur) or an independent-ish wing of the police itself (in this case, presumably the Gardaí Telecoms Liaison Unit).

https://twitter.com/mikarv/status/1465605600843341824?s=20&t=KOZYVpalGgv9uu93Vgf3Dg

They are not currently announcing an enforcement plan relating to publishers.

As the @zotero team notes, “Elsevier later stated that the change was required by new European privacy regulations — a bizarre claim, given that those regulations are designed to give people control over their data and guarantee data portability, not the opposite”.

Firstly, the Commission acknowledges that standards increasingly touch not just on technical issues but on European fundamental rights (though it doesn't highlight the AI Act here). This has long been the elephant in the room: the accusation that the EC is delegating rule-making to private bodies.

In particular, in the SCHUFA case, the referring court (Wiesbaden Administrative Court) struggles to reconcile the idea that the score is just information with the fact that downstream actors rely on it.

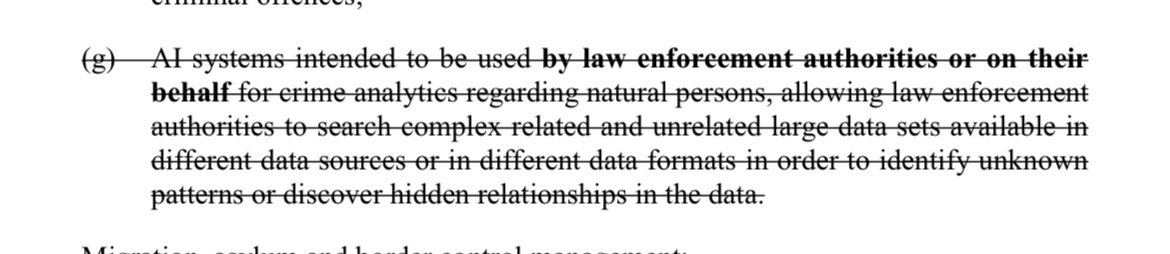

The Act (new trendy EU name for a Regulation) is structured by risk: from prohibitions to 'high risk' systems to 'transparency risks'. So far so good. Let's look at the prohibitions first.

You'll join a deeply interdisciplinary team of critical privacy engineers (@carmelatroncoso @sedyst); sensor experts (@SrdjanCapkun); epidemiologists and medical devices experts (@marcelsalathe @klausscho); and systems and security whizzes (@gannimo @JamesLarus @ebugnion) 2/

https://twitter.com/MSFTResearch/status/1392904046537756676

It requires constantly pouring all face data on Teams through Azure APIs. In particular, it identifies head gestures and confusion in order to pull audience members out to the front, just in case you weren’t policing your face enough during meetings already.