You can read it here:

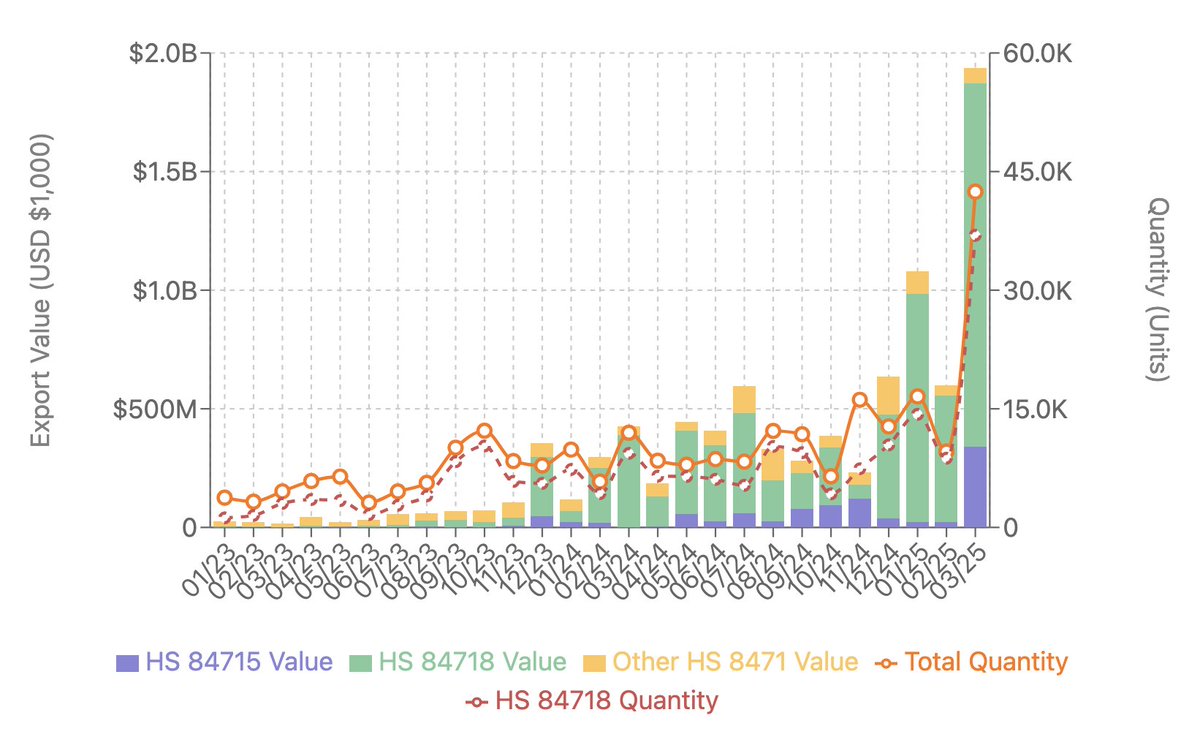

Most of the value is HS84718: "Other units of automatic data processing machines."

The 910C is basically two co-packaged Ascend 910Bs, China's best current-gen accelerator. But there's a twist: most (potentially all) of these chips weren't produced domestically—they were illicitly procured from TSMC despite export controls. 2/

Full paper here:

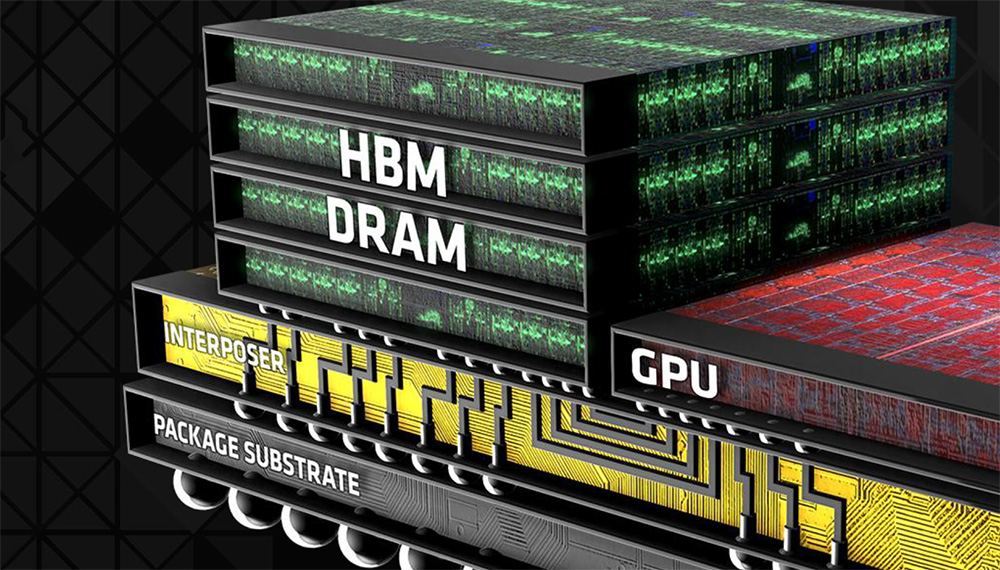

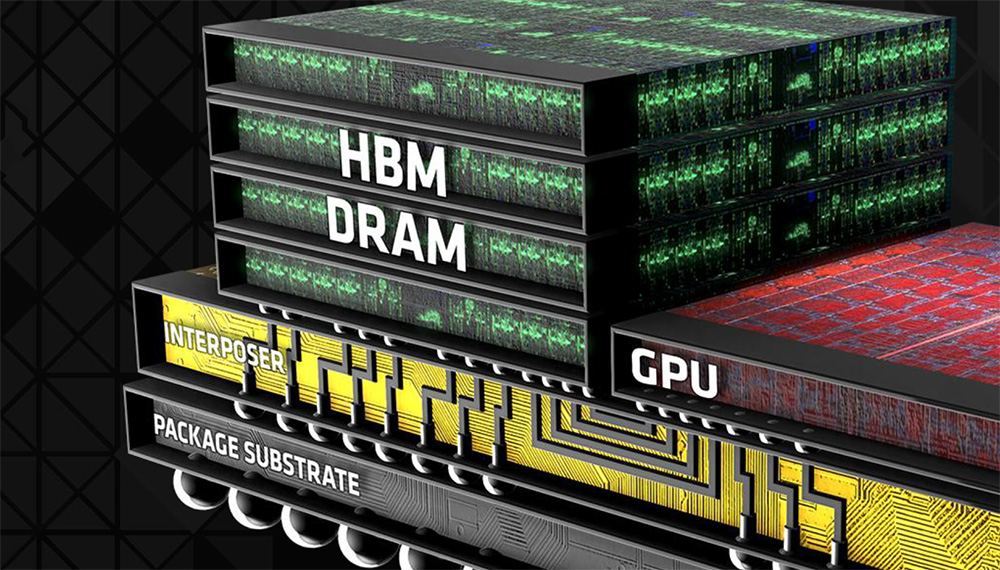

Quick HBM primer: HBM is the most advanced high-performance memory. It’s made by stacking DRAM dies. Only SK Hynix, Micron, and Samsung currently produce it at scale. All current leading data center AI chips use HBM. 2/

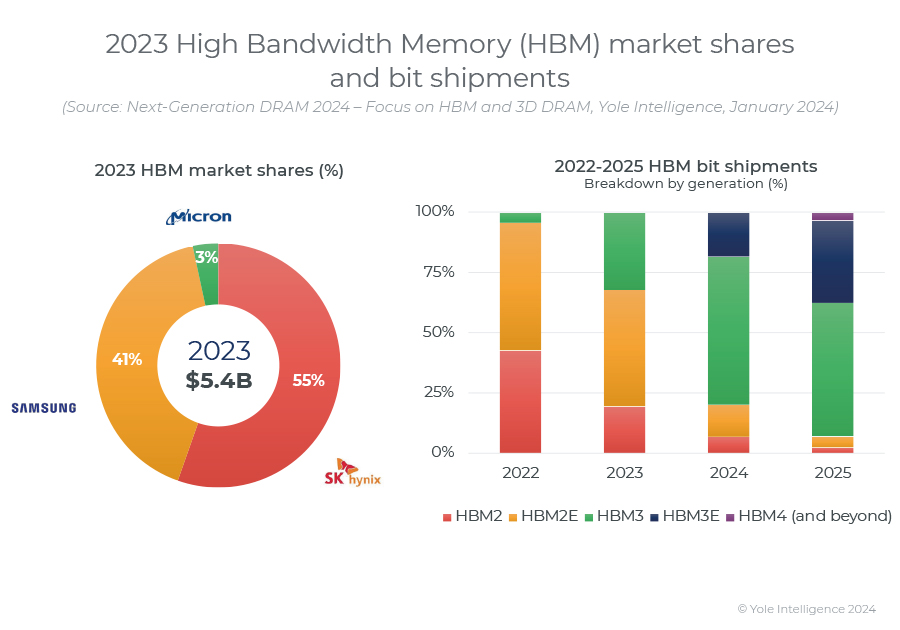

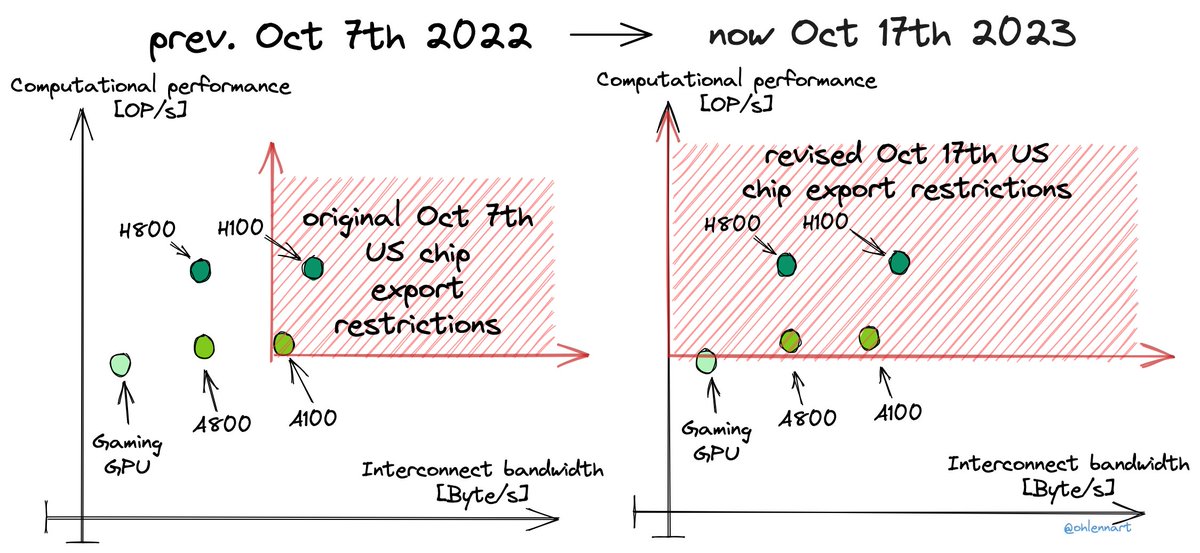

As I've highlighted before, there were loopholes in the initial controls. At first glance, these new measures seem to address them. The prior 'escape/scaling path' let computational performance keep scaling as long as the interconnect stayed bounded.

https://twitter.com/ohlennart/status/1590413174788227072

They discuss the main difference between LSICS and traditional high-performance computing:

1) We have curated a dataset of 123 milestone ML models.