How to get URL link on X (Twitter) App

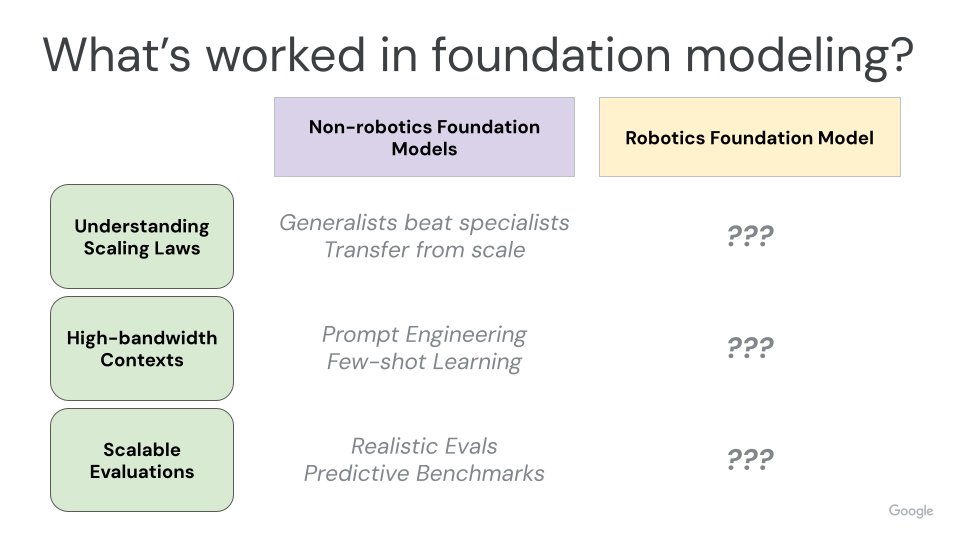

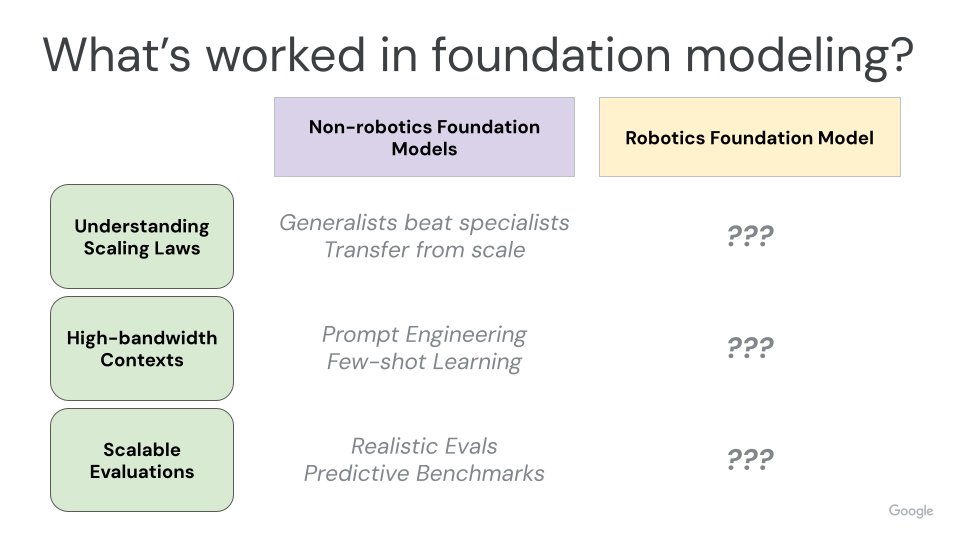

2) The first place to start might be to ask: why isn't robotics solved yet? The challenge is that even the most difficult robotics research settings are many orders of magnitude less complex than the noise and chaos of the real world. How can we bridge this gap?

Large internet datasets, whether they are digital art, literature, or Reddit posts, reflect some innate notion of the human condition. These kernels of truth can be shaped (e.g., by NSFW or hate-speech filters) but always stem from some subset of human-produced content. (2/N)
