@mesch_joo, @crathmann & @DLilloMartin presenting the aims and policies of the @SignLangLingSoc at #wfd2019.

Research on #signlanguages should:

- Promote and include #Deaf researchers

- Give back to the community (inform policy/lobby)

- Respect Deaf communities and diversity

There is close collaboration with the WFD, which is sometimes better placed to lobby governments around the world for #Deaf and #language rights (through national/regional associations)

#wfd2019

Q: There are hearing people researching #signlanguages without knowing how to sign. What do you think about this?

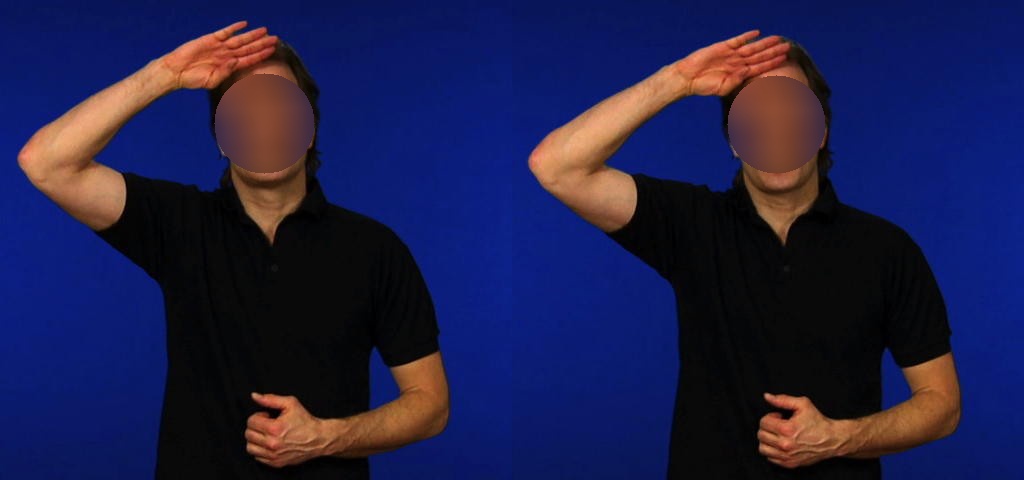

A: Increase the number of Deaf researchers and support collaborations between Deaf and hearing researchers. Hearing researchers are encouraged to sign! [signs hearing @DLilloMartin] #wfd2019
