First time I helped to install Win10:

- How many install it without a MS account? 5%? Less?

- Lots of #darkpattern 'choices' regarding personal data

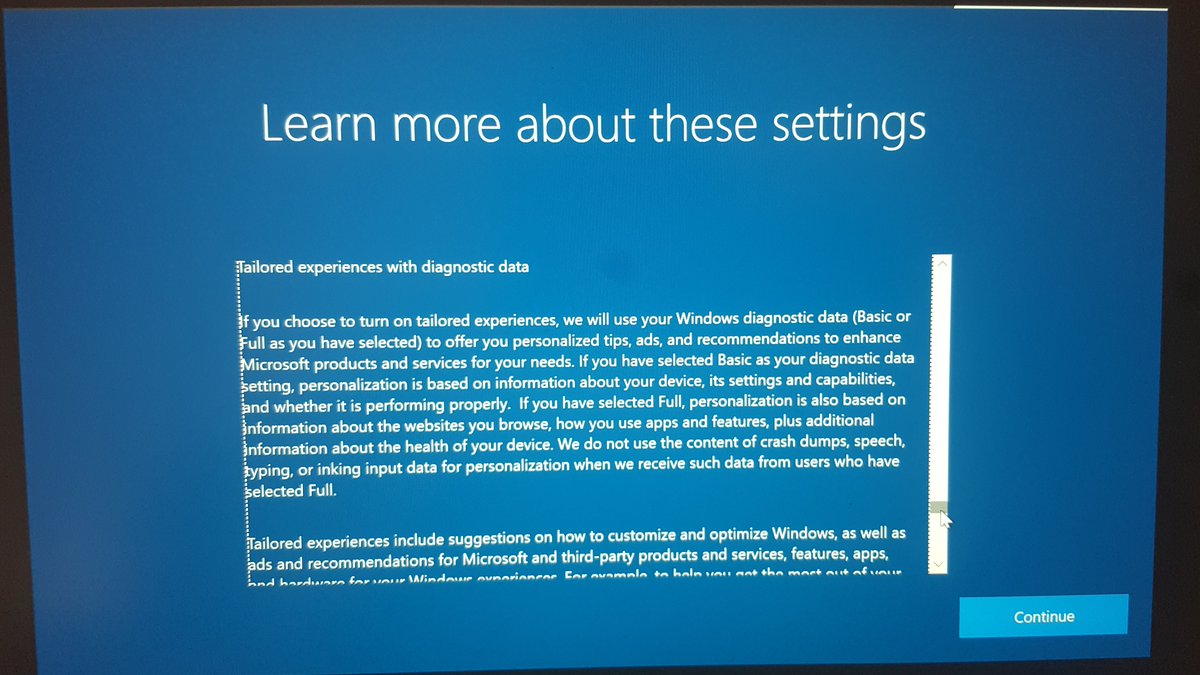

- 'Tailored experiences with diagnostic data'

Digital profiling for personalization+ads based on 'diagnostic data' …seriously?

'If you have selected Full, personalization is also based on information about the websites you browse, how you use apps and features' #preselected

So, Win10 tricks users into massive digital profiling based on everything they do? This is not what an operating system should do.

And it gets even better. Ultimately, Microsoft asks users to 'let apps use' their 'advertising ID', which is a unique identifier for each person using a Win10 device.

This identifier is much better suited to link profile data across many different companies than a name.

'App developers' and 'advertising networks' (= all kinds of real-time data brokers) can 'associate personal data they collect about you with your advertising ID' to provide 'more relevant advertising' (=ads based on massive digital profiling) and 'other personalized experiences'.
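
Why is a shared ID better for linking than a name? A minimal sketch, with everything in it made up for illustration (the two companies, the records, the ID values). It is not a description of how any particular data broker works, only of the general idea: names are ambiguous and spelled inconsistently, while a shared device/person identifier joins records exactly.

```python
from collections import defaultdict

# Hypothetical records held by two unrelated companies (all values invented).
app_developer = [
    {"advertising_id": "a1b2", "name": "J. Smith", "apps_used": ["fitness"]},
    {"advertising_id": "c3d4", "name": "John Smith", "apps_used": ["news"]},
]
ad_network = [
    {"advertising_id": "a1b2", "name": "Jon Smith", "sites": ["loans.example"]},
    {"advertising_id": "c3d4", "name": "J. Smith", "sites": ["travel.example"]},
]

def link_by(key, *datasets):
    """Group records from all datasets that share the same value for `key`."""
    merged = defaultdict(list)
    for dataset in datasets:
        for record in dataset:
            merged[record[key]].append(record)
    return dict(merged)

# Linking on the advertising ID joins each person's records exactly.
by_id = link_by("advertising_id", app_developer, ad_network)

# Linking on the name misses spelling variants and wrongly merges
# two different people who both appear as "J. Smith".
by_name = link_by("name", app_developer, ad_network)
```

In this toy data, `by_id` yields two clean profiles, while `by_name` splits the same people across spelling variants and merges two different people under "J. Smith".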

Now take a look at this video by a major Australian media network that claims to use data from '15 million Microsoft registered users to collect over 6 million behavioral markers …every minute'. Does this even include data from Win10 or MS Office users?

https://twitter.com/WolfieChristl/status/1139496671958700033

What about Microsoft's recent acquisition of Drawbridge, a company that helps other companies spy on 1 billion consumers and 3 billion devices across everyday life?

https://twitter.com/WolfieChristl/status/1134191525653598208

Microsoft deserves much more scrutiny than it gets with regard to personal data processing.

Here's some information about 'diagnostic data' they collected from Win10 users back in 2017:

https://twitter.com/WolfieChristl/status/849952460395466752

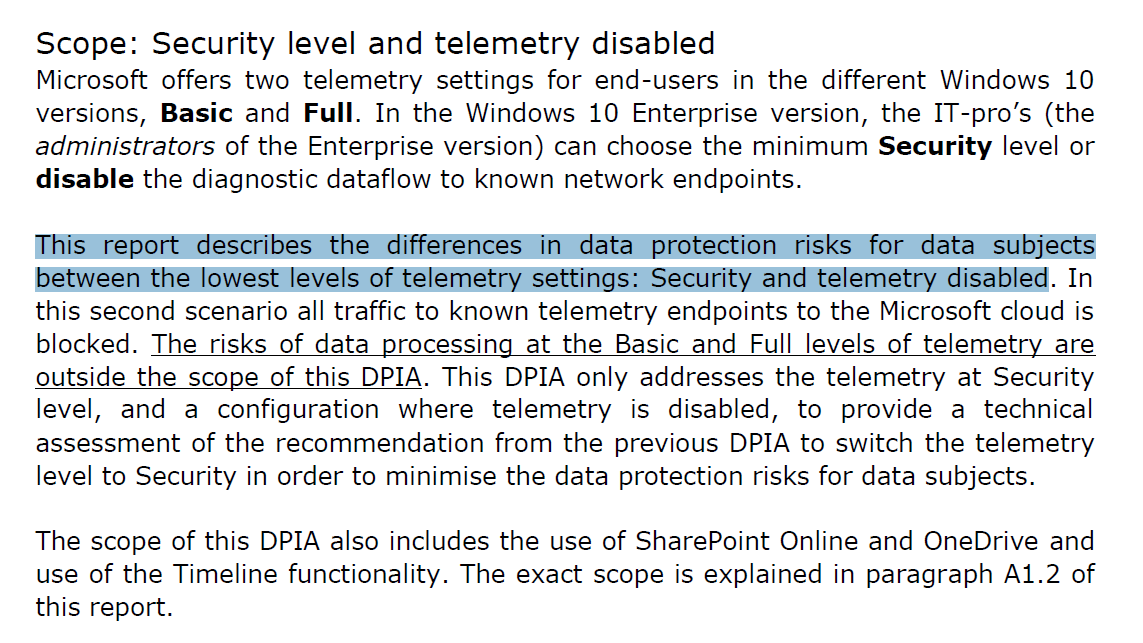

Prior to 2018, 'Microsoft assumed the telemetry data were not personal data', according to a report commissioned by the Dutch government. Absurd:

https://twitter.com/WolfieChristl/status/1062349356702068737

Here's an overview of this 'data protection impact assessment' on MS Office ProPlus: privacycompany.eu/en/impact-asse…

"The Microsoft audience graph consists of 120 million Office365 subscribers, 1.5 billion Windows users and 500 million LinkedIn users. LinkedIn professional data is a unique element in the mix. There’s also data from Outlook and Skype users"

From 2018:

https://twitter.com/WolfieChristl/status/1046611158902296576

Some more Microsoft stuff:

LinkedIn used email addresses of 18 million non-members to 1:1 target people on Facebook, according to the Irish DPA:

https://twitter.com/WolfieChristl/status/1066066783688409089

LinkedIn shares user data for 'social, economic and workplace research' by default?

https://twitter.com/WolfieChristl/status/997263935626727424

LinkedIn privacy policy:

"We do not share your personal data with any [third parties] except for …hashed or device identifiers" or "data already visible to any users of the Services".

So, Microsoft may actually share nearly any kind of personal data with others? Totally shady.
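
Why 'hashed' identifiers still count as sharing: a minimal sketch, assuming two parties hash the same normalized email address with the same function. The choice of SHA-256, the normalization step and all the data here are my assumptions for illustration; the policy text quoted above does not say which scheme is actually used.

```python
import hashlib

def hash_email(email: str) -> str:
    """Hash a normalized email address (SHA-256 is an assumed choice)."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

# Hypothetical data held by a platform and by an advertiser (values invented).
platform_users = {hash_email("Alice@Example.com"): {"job": "engineer"}}
advertiser_list = {hash_email(" alice@example.com "): {"purchases": 3}}

# Both sides compute the same hash for the same person, so the
# "hashed identifier" works as a join key between their datasets.
matches = platform_users.keys() & advertiser_list.keys()
```

Neither side sees the other's plain email address, but the matching records can still be tied to the same person.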

When MS acquired LinkedIn in 2016, TechCrunch outlined how MS might integrate LinkedIn and its data into Office and other products, including for CRM/sales, recruitment and 'talent management' purposes, to increase engagement+subscriptions and 'open the door' for advertising: techcrunch.com/2016/06/13/how…

This new examination commissioned by the Dutch government found that while the April 2019 (enterprise!) version of Office 365 ProPlus doesn't routinely scan Word docs to detect resumes anymore, its transmission of 'diagnostic' data is still concerning: rijksoverheid.nl/binaries/rijks…

There's a whole set of brand new data protection impact assessments of Microsoft enterprise products commissioned by the Dutch government.

Short/long blog:

privacycompany.eu/en/new-dpia-on…

privacycompany.eu/en/new-dpia-on…

Docs:

rijksoverheid.nl/documenten/rap…

ZDNet article:

zdnet.com/article/window…

The Win10 assessment examines the risks at the 'Security' level of diagnostic data transfer via telemetry, which is not available for many users.

We urgently need similar data protection assessments for standard MS products with Basic/Full telemetry and other settings enabled…

This inspection of 'Office 365 Online and mobile Office apps' for the Dutch government found that 3 "iOS apps (Word, PowerPoint and Excel) secretly send diagnostic data to the US-based marketing company Braze, without any information about the existence …of this data processing"
