Graph Convolutional Networks… from attention!

In attention 𝒂 is computed with a [soft]argmax over scores. In GCNs 𝒂 is simply given, and it's called "adjacency vector".

Slides: github.com/Atcold/pytorch…

Notebook: github.com/Atcold/pytorch…

Slide 1: *shows title*.

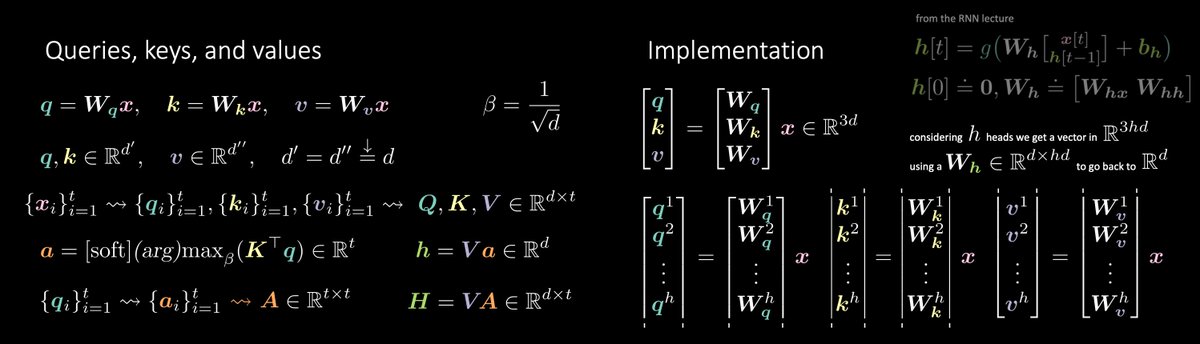

Slide 2: *recalls self-attention*.

Slide 3: *shows 𝒂, points out it's given*.

The end.

Literally!

I've spent the last week reading everything about these GCNs and… LOL, they quickly found a spot in my mind, next to attention!

In self-attention, every element in the set looks at each and every other element.

If a sparse graph is given, we limit each element (node / vertex) to look only at a few other elements (nodes / vertices).

Don't you worry! Let's reason on the edges (connecting the incoming nodes).

Enters the "gated" GCN, where the incoming node / message is modulated by a gate 𝜂.

Finally, because-why-not, let's add some residual connections to smooth a little the loss landscape.

Tadaaaa!

The end.

Next week (if I survive ICLR): training your own energy model from scratch.