I get asked quite a lot about the difference between the original US QAnon movement and the recent rise of a new, soft, global QAnon. Here's how I understand it: The original QAnon, until this year, was primarily an American movement deeply steeped in US culture and politics.

President Trump, the US culture war, partisan party politics and religious narratives of good vs evil and God vs Satan were central to the original QAnon movement. But the Covid-19 pandemic was a game changer. Suddenly, millions of people who'd previously barely heard of QAnon

found themselves in lockdown with hours and hours of time to spend on social media. Some people lost their jobs, were frightened by the impact of a virus about which we knew little, and were anxious about their loved ones, the wider community and the economy. And they found online content

that acknowledged their fears about lockdown, vaccines, masks, social distancing, jobs, civil liberties and the economy. Naturally, some of that content came from the US QAnon movement, who believed the virus was a plot by the deep state cabal and/or hostile enemies like China

to put an end to the Q operation, Trump presidency and the ensuing "storm". So US QAnon suddenly found a whole new global audience of Covid sceptics who might not necessarily have been interested in internal US politics and culture. And then the big shift happened in June/July,

when social media companies began restricting the famous QAnon terms, phrases and hashtags on their platforms. Suddenly, the reach of QAnon narratives and the movement's ability to recruit new believers were weakened, and therefore they came up with the idea of hijacking some

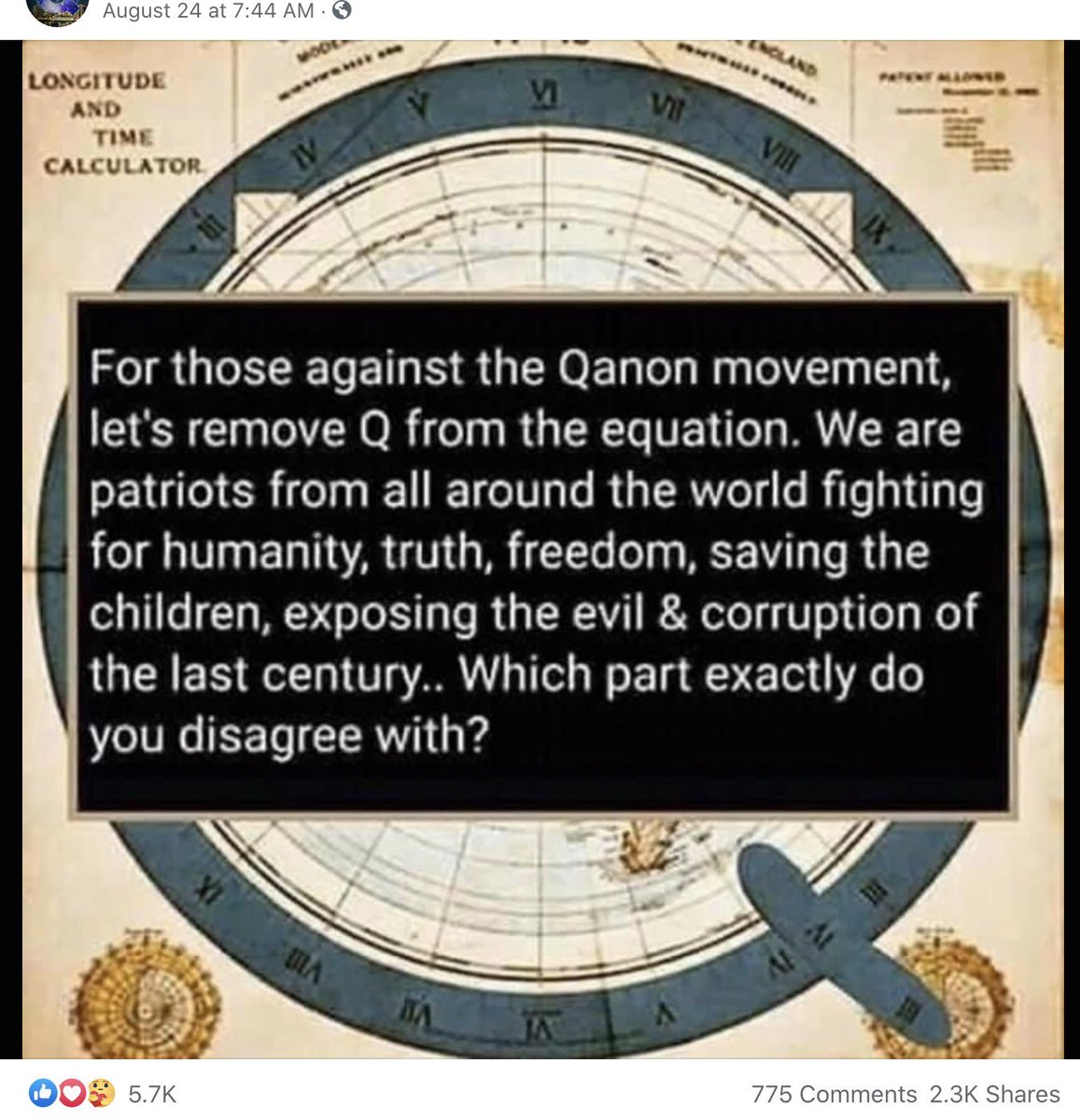

well-known, established hashtags and phrases like #SaveTheChildren and #SaveOurChildren. This was such a clever move. Millions of people around the world saw these hashtags pop up on their social media feeds. Who can possibly disagree with the idea of saving children and

opposing child abuse and trafficking? That's something literally all of us, regardless of our politics and personal views, can get behind. This is precisely why global "Save Our Children" marches have become popular, featuring diverse crowds from all walks of life/backgrounds.

Posts, memes and videos about the plight of hundreds of thousands of children around the world resonated with ordinary people in different countries. While some political, religious or cultural aspect of US QAnon might not have been too appealing to these people,

the secret paedo global elite aspect, brought to their attention by #SaveOurChildren, was. This is what I would describe as soft QAnon. And it probably explains why women and young people are heavily involved in these new rallies we are seeing in different parts of the world.

I spoke to people at a London "Save Our Children" march. While most were QAnon followers, some knew little about it or the nitty-gritty of US politics, and were only there to campaign for children being trafficked by elites. However, the organisers are proper QAnon believers.

This is a distinction we need to make in our reporting if we want to understand the movement better. Not everyone who posts #SaveOurChildren on social media is necessarily a hardcore QAnon believer. And as QAnon spreads globally, the specifics will differ from one country

to another. So to sum up, two things happened this year which boosted US QAnon and made it a global movement with soft QAnon marches around the world: Covid-19 and the hijacking of #SaveOurChildren after social media companies clamped down on original QAnon terms.
