Writing for @wired, @pomeranian99 gets a first-of-its-kind behind-the-scenes look at YouTube's algorithm development team, documenting the company's attempt to reduce the service's role in spreading and reinforcing conspiracy theories.

wired.com/story/youtube-…

1/

Thompson traces the origin of the crisis to the company's drive for more "engagement" that led them to tune their recommendation system to identify and propose more specialized, esoteric versions of the video you'd just watched.

2/

The idea was to lead you down a rabbit hole of ever-more-specific versions of your interests, helping you discover niches you never knew existed.

3/

This dynamic in recommendation systems has gotten a lot of attention lately, and most of it is negative, but let's pause for a moment and talk about what this means for non-conspiratorial beliefs.

4/

Say you happen upon a woodworking video, maybe due to a friend's post on social media. You watch a few of them and you find yourself interested in the subject and tuning in more often.

5/

The recommendation system presents an array of possible next-views, but tilted away from general-interest woodworking videos, instead offering you a menu of specialized woodworking styles, like Japanese woodworking.

6/

You sample one of these and find it fascinating, so you start watching more of these. The recommendation system clues you in to Japanese nail-free joinery:

7/

And from there, you discover the frankly mesmerizing "Niju-mizu-kumi-tsugi" style of joinery, and you start to seek out more. You have found this narrow, weird, self-reinforcing community.

8/

https://twitter.com/TheJoinery_jp/status/763323644906905600

This community could not exist without the internet and its signature power to locate and connect people with shared, widely dispersed, uncommon interests.

This power isn't just used to push conspiracies and woodworking techniques, either.

9/

It's how people who know that their gender identity doesn't correspond to the gender they were assigned at birth find each other, and acquire a vocabulary for describing their views, and foment change.

10/

It's how people who believe #BlackLivesMatter find one another, it's how the #GreenNewDeal movement came together.

It's also how people who wanted to cosplay Civil War soldiers in Charlottesville, waving tiki torches, chanting "Jews will not replace us" found each other.

11/

And that is the conundrum of the recommendation engine. Helping people find others who share their views, passions and concerns is not, in and of itself, bad. It is vital. It's the thing that made the internet delightfully weird. It's also what made the world terribly weird.

12/

Thompson takes us inside YouTube's algorithm team as they try to balance three priorities:

I. Increasing their traffic and profits

II. Helping people find others with common interests

III. Stopping conspiracies from spreading

13/

And he traces how they try, with limited success, to manage these competing goals by creating extremely fine-grained rules that define what is banned on the platform.

14/

But naturally, this just gives rise to a new kind of content: stuff that is ALMOST bad enough for blocking, but not quite. The problem is that this stuff is indistinguishable (in all but the narrowest, technical way) from banned content.

15/

So then YouTube has to create a new set of moderation guidelines: "What is so close to prohibited content that it, too, is prohibited?"

Naturally, this is creating a new kind of content: "Stuff that is not close-to-bannable, but is close-to-close-to-bannable."

16/

This dynamic should be familiar to anyone who's watched the moderation policies of Big Tech platforms evolve: "What is hate speech?" "What is almost-hate-speech?" "What is almost-almost-hate-speech?"

17/

Ultimately, this ends up creating thick binders of pseudo-law that deliver advantages to the worst people: they can study the companies' policies and figure out how to skate RIGHT UP to the cliff's edge (no matter how it is defined).

18/

And at the same time, they can goad their adversaries - the people they torment - into crossing these fractally complex lines and then nark them out, so that over time, these speech policies preferentially block good speech and leave bad speech untouched.

19/

I am increasingly convinced that the problem isn't that YouTube is unsuited to moderating the video choices of a billion users - it's that no one is suited to this challenge.

20/

Remedies that put moderation choices closer to the user - breaking up monopolies, allowing interoperable recommendation systems - solve the problem of scaling up AND covering edge cases by eliminating scale altogether, and letting the edge cases make their own calls.

21/

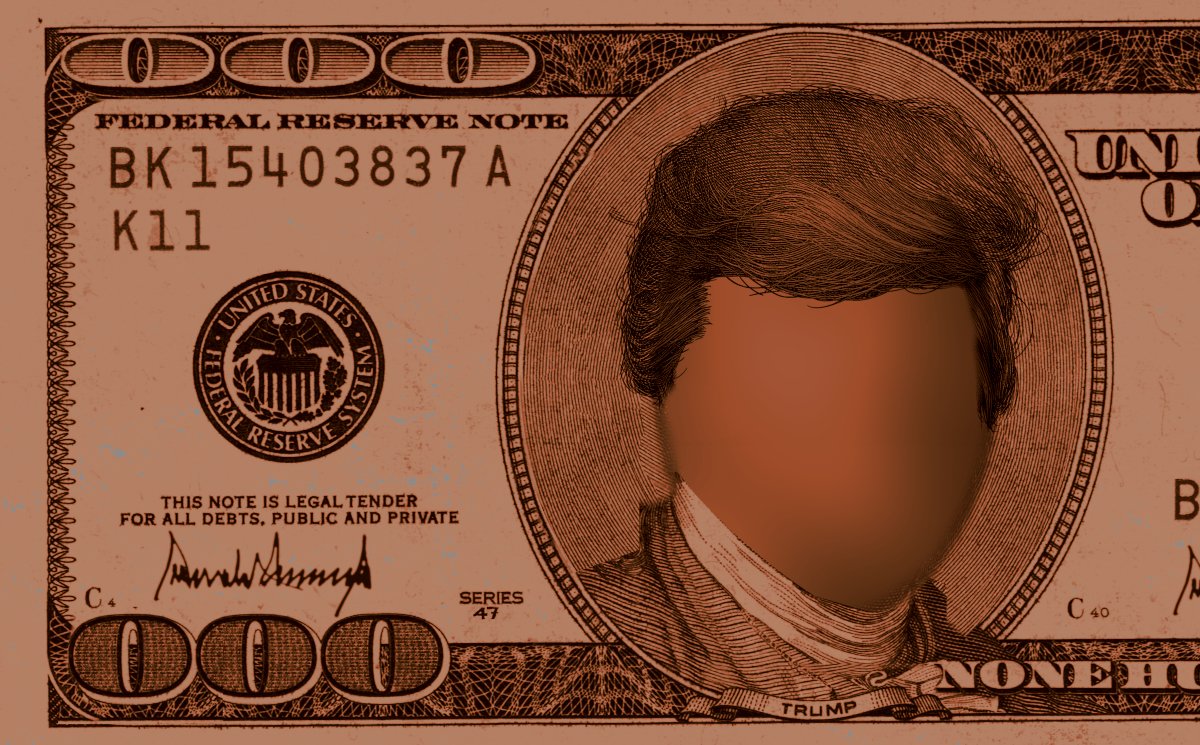

Image: Mark Sargent

eof/
