Greg Kahn's deep RL algorithm allows robots to navigate Berkeley's sidewalks! All the robot gets is a camera view and a supervision signal for when a safety driver told it to stop.

Website: sites.google.com/view/sidewalk-…

Arxiv: arxiv.org/abs/2010.04689

(more below)

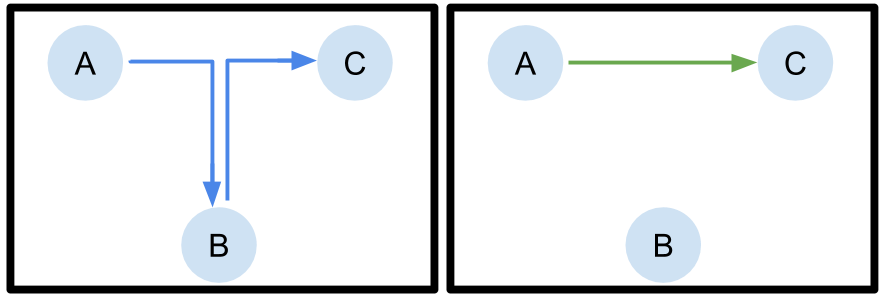

The idea is simple: a person follows the robot during a "training" phase (or watches remotely through its camera) and stops the robot when it does something undesirable, much like a safety driver might stop an autonomous car.

The robot then tries to take the actions that are least likely to lead to disengagement. The result is a learned policy that can navigate hundreds of meters of Berkeley sidewalks from raw images alone, using only a learned neural net, without any SLAM, localization, etc.
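To make "least likely to lead to disengagement" concrete, here is a rough sketch, not the method from the paper. It assumes a learned model that, for the current camera image, predicts a per-step probability that the safety driver would stop the robot. The names `pick_action_sequence`, `sequence_risk`, and `toy_model`, the random-sampling planner, the steering-only action, and the independence assumption between steps are all hypothetical choices made for illustration.

```python
import random
from typing import Callable, List, Sequence

Action = float  # hypothetical: one steering command in [-1, 1]


def sequence_risk(step_probs: Sequence[float]) -> float:
    """Probability of at least one disengagement over the sequence,
    assuming (for illustration) that the steps are independent."""
    survive = 1.0
    for p in step_probs:
        survive *= 1.0 - p
    return 1.0 - survive


def pick_action_sequence(
    predict_disengagement: Callable[[Sequence[Action]], List[float]],
    num_candidates: int = 64,
    horizon: int = 5,
    seed: int = 0,
) -> List[Action]:
    """Sample random candidate action sequences and keep the one the
    model scores as least likely to trigger a stop."""
    rng = random.Random(seed)
    best_seq: List[Action] = []
    best_risk = float("inf")
    for _ in range(num_candidates):
        seq = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        risk = sequence_risk(predict_disengagement(seq))
        if risk < best_risk:
            best_seq, best_risk = seq, risk
    return best_seq


def toy_model(seq: Sequence[Action]) -> List[float]:
    """Stand-in for the learned network (which would also see the image):
    here, harder steering is simply treated as riskier."""
    return [min(1.0, abs(a)) for a in seq]


plan = pick_action_sequence(toy_model, num_candidates=200, horizon=3)
```

On a real robot one would presumably execute only the first action of `plan`, grab a new image, and replan, but that loop is also an assumption here rather than something stated in the thread.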

This work, which completes Greg's PhD thesis, is the culmination of over five years of research on vision-based navigation. Check out Greg's previous work in this area:

BADGR: sites.google.com/view/badgr

CAPs:

GCG: github.com/gkahn13/gcg
