Have you ever implemented a dynamic function dispatcher in Python, where you can register functions at runtime using the decorator syntax? (Like the routers for FastAPI.)

I did this recently, and I am going to teach you how to do it! I'll walk you through it in the thread below.

We will build an event handler to catch arbitrary events and dynamically execute a function to handle the event.

(A good example is catching events in a webhook listener.)

Time to use some decorator magic!

The usage is straightforward:

1) instantiate the EventHandler,

2) register handler functions for specific events,

3) pass the event to the EventHandler instance when caught.

The EventHandler has an internal dictionary that holds the (event, function) key-value pairs. This is where the individual event handler functions are registered.

The magic happens in the `EventHandler.register_event()` method, which is not just a decorator. It is a 𝐝𝐞𝐜𝐨𝐫𝐚𝐭𝐨𝐫 𝐟𝐚𝐜𝐭𝐨𝐫𝐲.

It returns a parametrized decorator, whose only job is to store functions in the EventHandler's dictionary.

Since `self` is in its closure, it can be accessed within the parametrized decorator. So, it can register functions to the `self.handlers` dictionary.
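Here is a minimal sketch of the idea, not necessarily the exact code from the screenshots. The names `EventHandler`, `register_event`, `handlers`, and `__call__` come from the thread; the event shape (a dict with a `"type"` key), the `"user.created"` event, and the `on_user_created` function are assumptions made up for illustration.

```python
from typing import Any, Callable, Dict

Handler = Callable[[dict], Any]


class EventHandler:
    def __init__(self) -> None:
        # Maps an event name to the function registered for it.
        self.handlers: Dict[str, Handler] = {}

    def register_event(self, event_name: str) -> Callable[[Handler], Handler]:
        # Decorator factory: takes the event name, returns the actual decorator.
        def decorator(func: Handler) -> Handler:
            # `self` and `event_name` are captured from the enclosing scope.
            self.handlers[event_name] = func
            # Return the function unchanged so it can still be called directly.
            return func

        return decorator

    def __call__(self, event: dict) -> Any:
        # Assumption: every event is a dict with a "type" key naming the event.
        handler = self.handlers.get(event["type"])
        if handler is None:
            raise KeyError(f"no handler registered for {event['type']!r}")
        return handler(event)


# 1) instantiate the EventHandler
handler = EventHandler()


# 2) register a handler function for a specific (hypothetical) event
@handler.register_event("user.created")
def on_user_created(event: dict) -> str:
    return f"welcome, {event['name']}"


# 3) pass the event to the EventHandler instance when caught
result = handler({"type": "user.created", "name": "Ada"})
```

Note that the decorator returns `func` as-is. It doesn't wrap anything; registration is purely a side effect at definition time.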

Why not just use a dictionary instead of EventHandler? I have two reasons.

1) The decorator syntax is much more convenient than manually jamming each function into a dictionary.

2) You can easily implement additional functionality. What if you are building a webhook event handler, and you need to verify its signature before doing anything? Just create a validator method and slam it into `__call__`.
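To make the second point concrete, here is a rough sketch of a validator wired into `__call__`. Everything specific here is an assumption for illustration: the `VerifiedEventHandler` name, the `validate` method, the HMAC-SHA256 hex signature scheme, the demo secret, and the `"ping"` event. Real webhook providers each define their own signing scheme, so check their docs.

```python
import hashlib
import hmac
from typing import Any, Callable, Dict


class VerifiedEventHandler:
    def __init__(self, secret: bytes) -> None:
        self.secret = secret
        self.handlers: Dict[str, Callable[[bytes], Any]] = {}

    def register_event(self, event_name: str):
        def decorator(func):
            self.handlers[event_name] = func
            return func

        return decorator

    def validate(self, payload: bytes, signature: str) -> bool:
        # Assumed scheme: hex-encoded HMAC-SHA256 of the raw request body.
        expected = hmac.new(self.secret, payload, hashlib.sha256).hexdigest()
        # compare_digest does a constant-time comparison.
        return hmac.compare_digest(expected, signature)

    def __call__(self, event_name: str, payload: bytes, signature: str) -> Any:
        # Verify first; no handler runs on an unverified payload.
        if not self.validate(payload, signature):
            raise PermissionError("invalid signature")
        return self.handlers[event_name](payload)


webhook = VerifiedEventHandler(secret=b"demo-secret")


@webhook.register_event("ping")
def on_ping(payload: bytes) -> int:
    return len(payload)


body = b'{"ok": true}'
good_signature = hmac.new(b"demo-secret", body, hashlib.sha256).hexdigest()
ping_result = webhook("ping", body, good_signature)
```

The handler functions stay unaware of the verification step; it lives in one place and applies to every registered event.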

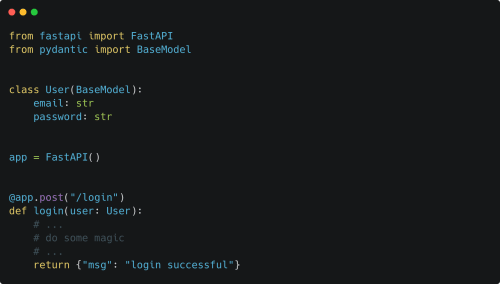

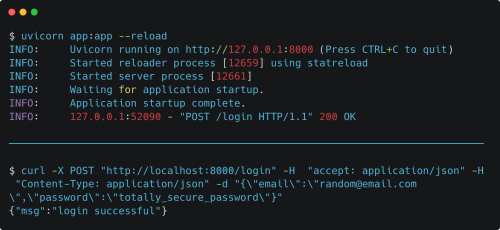

Decorators are a fantastic feature in Python. If you are wondering, this is very similar to how Flask and FastAPI implement routing.

What is your favorite application of function decorators?
