OK, finally our tweeprint for the NeurIPS paper. Here we go. Synaptic plasticity, it's the holy grail of learning and memory. This is work by @basile_cfx, @hisspikeness, @ejagnes, @countzerozz & myself, on how to find the grail, maybe biorxiv.org/content/10.110…

Common dogma dictates that we remember, learn, and develop to sense, see, hear, etc. in early devo by way of activity-dependent rules that determine how we wire up, how we maintain and how we adjust our synapses.

Slice physiology points towards distinct and precise rules that determine synaptic strength. The most famous one is the Gerstner / Markram / Bi & Poo / Nelson Abbott STDP curve for excitatory plasticity. Read all about it e.g. here: Magee & Grienberger annualreviews.org/doi/abs/10.114…

But there are other synapse types, and thus other rules, e.g.

Woodin et al. (Neuron, 2003), D’Amour & Froemke (Neuron, 2015), Gidon et al. (Science, 2020), all hinting at much more complex mechanisms at play. Here is a review we wrote about it: doi.org/10.1146/annure…

Sadly, it’s impossible to test these rules in vivo, also cuz we can't access single synapses without destroying large swathes of the tissue around them.

So we changed the question. Can we discover the necessary rules for a network to acquire a predetermined architecture or function?

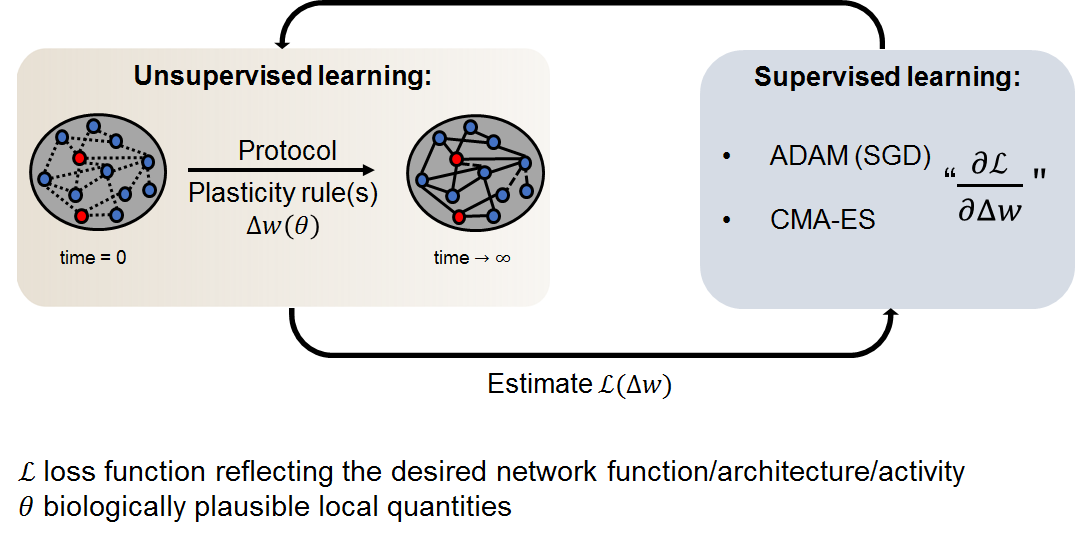

Towards that goal, we built a 2-layer optimisation framework. In layer 1, a network acquires a function / structure by way of unsupervised plasticity rule(s). In layer 2, the plasticity rules themselves are optimised so as to ascribe the *right* function/structure to the network.
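In symbols, the two layers could be sketched like this. The notation (θ for the rule parameters, w_t for the weights, T for the number of plasticity steps, 𝓛 for the loss) is assumed here for illustration and is not taken from the paper:

```latex
\begin{aligned}
\text{layer 1 (plasticity):}\quad & w_{t+1} = w_t + \Delta w_\theta(x_t, y_t, w_t), \qquad t = 0, \dots, T-1 \\
\text{layer 2 (rule search):}\quad & \theta^{\ast} = \arg\min_{\theta} \; \mathcal{L}\big(w_T(\theta)\big)
\end{aligned}
```

Layer 1 never sees the loss: the network only changes through the local rule Δw_θ. Layer 2 only touches θ and judges each rule by the network w_T(θ) it ends up producing.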

To allow for the plasticity rule to be shaped and optimised, we must choose a search space/parameterization that contains a variety of rules. The parameterization remains interpretable, enabling us to make mechanistic predictions on the biological implementation of these rules.
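To make "interpretable search space" concrete, here is a minimal sketch assuming a polynomial parameterization in presynaptic rate `x`, postsynaptic rate `y` and weight `w`. The polynomial form, the maximum order of 2, and the names `delta_w`, `ORDERS`, `OJA` and `HEBB` are assumptions for illustration, not necessarily the exact choice made in the paper.

```python
from itertools import product

ORDERS = (0, 1, 2)  # assumed: x, y and w each enter with power 0, 1 or 2


def delta_w(coeffs, x, y, w, eta=1.0):
    """Weight change of a polynomial rule: eta * sum_ijk A[i,j,k] * x^i * y^j * w^k.

    `coeffs` maps (i, j, k) to the coefficient A[i,j,k]; missing entries count as 0.
    """
    return eta * sum(
        coeffs.get((i, j, k), 0.0) * x**i * y**j * w**k
        for i, j, k in product(ORDERS, repeat=3)
    )


# Oja's rule, dw = eta * (x*y - y^2 * w), is two non-zero coefficients.
OJA = {(1, 1, 0): 1.0, (0, 2, 1): -1.0}

# Plain Hebbian learning, dw = eta * x*y, is a single coefficient.
HEBB = {(1, 1, 0): 1.0}

if __name__ == "__main__":
    print(delta_w(OJA, x=0.5, y=2.0, w=0.1))
    print(delta_w(HEBB, x=0.5, y=2.0, w=0.1))
```

Every coefficient is attached to a named term (Hebbian product, weight-dependent decay, and so on), which is what keeps a rule found in such a space readable.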

To optimise the rules, we need a loss function, i.e. a measure of how well a given rule performs in making a network acquire its function or shape. We minimise this loss w.r.t. the parameters of the learning rules that shaped the network, using robust local methods such as CMA-ES, see: arxiv.org/abs/1604.00772
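A toy version of the whole loop, as a sketch under loudly stated assumptions: a two-parameter rule dw = eta * (a*x*y + b*y^2*w), a loss that measures how far the learned weights end up from the first principal component of the inputs, and a plain elitist (1+λ) evolution strategy standing in for CMA-ES. It is NOT CMA-ES, just the simplest derivative-free search that shows the idea. All function names and constants are made up for illustration.

```python
import math
import random


def sample_input(rng):
    """2-D input whose first principal component is (1, 1)/sqrt(2)."""
    a = rng.gauss(0.0, 1.0)  # large variance along (1, 1)
    b = rng.gauss(0.0, 0.3)  # small variance along (1, -1)
    s = math.sqrt(0.5)
    return (s * (a + b), s * (a - b))


def inner_loop(theta, steps=1500, eta=0.01, seed=0):
    """Layer 1: a linear rate neuron learns with dw = eta * (a*x*y + b*y^2*w)."""
    a, b = theta
    rng = random.Random(seed)
    w = [0.5, -0.2]
    for _ in range(steps):
        x = sample_input(rng)
        y = w[0] * x[0] + w[1] * x[1]
        w = [wi + eta * (a * xi * y + b * y * y * wi) for wi, xi in zip(w, x)]
        if not all(math.isfinite(wi) and abs(wi) < 1e3 for wi in w):
            return None  # the rule made the weights blow up
    return w


def loss(theta):
    """Squared distance of the learned weights from +/- the unit-length first PC."""
    w = inner_loop(theta)
    if w is None:
        return math.inf
    s = math.sqrt(0.5)
    return min((w[0] - s) ** 2 + (w[1] - s) ** 2,
               (w[0] + s) ** 2 + (w[1] + s) ** 2)


def optimise_rule(theta0, generations=30, offspring=8, sigma=0.3, seed=1):
    """Layer 2: elitist (1+lambda) evolution strategy (a stand-in, not CMA-ES)."""
    rng = random.Random(seed)
    best, best_loss = list(theta0), loss(theta0)
    for _ in range(generations):
        for _ in range(offspring):
            cand = [t + sigma * rng.gauss(0.0, 1.0) for t in best]
            cand_loss = loss(cand)
            if cand_loss < best_loss:  # keep a candidate only if it improves
                best, best_loss = cand, cand_loss
    return best, best_loss


if __name__ == "__main__":
    theta, final_loss = optimise_rule([0.0, 0.0])  # start from a rule that does nothing
    print("found (a, b):", theta, "loss:", final_loss)
```

In this parameterization Oja's rule sits at (a, b) = (1, -1). A real run would swap the toy search for a proper CMA-ES implementation and a richer search space.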

Ok. Results time. First, we show that our approach is working in a single (rate) neuron example, with a known rule, that is “Oja’s rule”, which has been shown to find the first principal component of its inputs. We start with a random rule, and BOOM, we find Oja’s (rule).
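For readers who have not met Oja's rule: for a linear rate neuron y = w·x, the update is dw = eta * y * (x - y*w). The sketch below runs that textbook rule on correlated 2-D inputs and compares the result with the first principal component computed directly from the sample covariance. The input statistics, learning rate and function names are illustrative assumptions, not the setup used in the paper.

```python
import math
import random


def make_inputs(n, seed=0):
    """Zero-mean 2-D inputs driven by a shared source, so they are correlated."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        s = rng.gauss(0.0, 1.0)
        data.append((s + 0.3 * rng.gauss(0.0, 1.0),
                     0.5 * s + 0.3 * rng.gauss(0.0, 1.0)))
    return data


def first_pc(data):
    """Leading eigenvector of the 2x2 sample covariance (closed form)."""
    n = len(data)
    m0 = sum(p[0] for p in data) / n
    m1 = sum(p[1] for p in data) / n
    c00 = sum((p[0] - m0) ** 2 for p in data) / n
    c11 = sum((p[1] - m1) ** 2 for p in data) / n
    c01 = sum((p[0] - m0) * (p[1] - m1) for p in data) / n
    angle = 0.5 * math.atan2(2.0 * c01, c00 - c11)  # orientation of the principal axis
    return (math.cos(angle), math.sin(angle))


def run_oja(data, eta=0.01, w0=(0.3, -0.1)):
    """Apply Oja's rule once per input sample and return the final weights."""
    w = list(w0)
    for x in data:
        y = w[0] * x[0] + w[1] * x[1]  # linear rate neuron
        w = [wi + eta * y * (xi - y * wi) for wi, xi in zip(w, x)]
    return w


def cosine(u, v):
    """Cosine of the angle between two 2-D vectors."""
    dot = u[0] * v[0] + u[1] * v[1]
    return dot / (math.hypot(*u) * math.hypot(*v))


if __name__ == "__main__":
    data = make_inputs(5000)
    w = run_oja(data)
    print("weights:", w)
    print("|cosine| with first PC:", abs(cosine(w, first_pc(data))))
```

The sign of the learned weight vector is arbitrary (w and -w span the same component), hence the absolute value of the cosine.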

Cool side note: This result was predicted by Bengio et al. in 1991, but they didn't run the sims. We now confirm that they were right. Whoop doi.org/10.1109/IJCNN.… Second side note: @NeurIPSConf consistently transcribed "Oja's" rule as "Oh YES!" Rule. We agree. We AGREE.

We then expand on this work with a multi-cell and multi-rule model, to allow the network to express additional principal components. Despite a more complex network architecture & two co-active rules, we succeed in rediscovering Oja’s rule + an anti-Hebbian rule. (Yay)

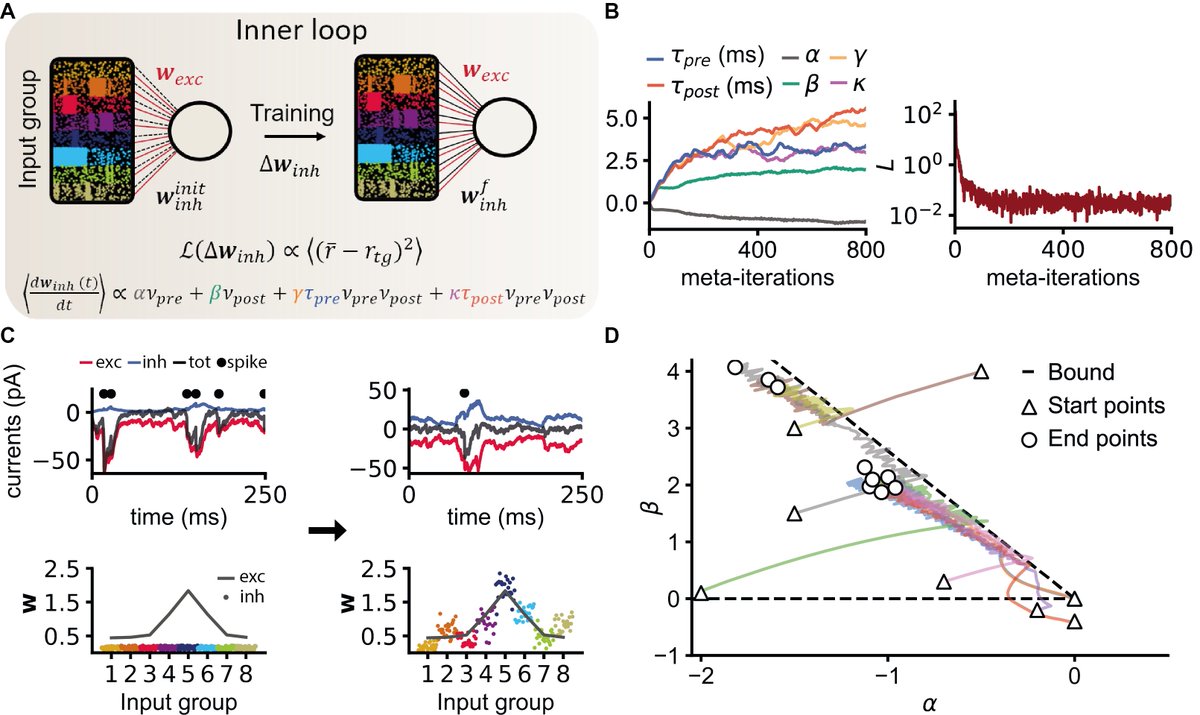

Next, we try to do the "Oh yes!" (not Oja's though) in spiking. We look at rules that aim to maintain constant firing rates in the face of variable inputs (that's my old paper w/ @sprekeler et al.). Our framework (sorta) finds it. Oh yes! doi.org/10.1126/scienc…
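To give a feel for what "maintain constant firing rates" means, here is a rate-based caricature of a homeostatic inhibitory rule. It is deliberately not the spiking rule from the paper linked above: the rectified-linear neuron, the update dw = eta * inh_rate * (y - target), and all names and constants are assumptions for illustration.

```python
import random


def run_homeostasis(steps=3000, eta=0.001, target=5.0, inh_rate=10.0,
                    plastic=True, seed=0):
    """Return (final inhibitory weight, mean postsynaptic rate over the last 500 steps)."""
    rng = random.Random(seed)
    w = 0.0  # inhibitory weight, kept non-negative
    rates = []
    for _ in range(steps):
        exc = rng.uniform(8.0, 12.0)       # variable excitatory drive
        y = max(0.0, exc - w * inh_rate)   # rectified postsynaptic rate
        if plastic:
            # Strengthen inhibition when the neuron fires above target,
            # weaken it when the neuron fires below target.
            w = max(0.0, w + eta * inh_rate * (y - target))
        rates.append(y)
    tail = rates[-500:]
    return w, sum(tail) / len(tail)


if __name__ == "__main__":
    print("without plasticity:", run_homeostasis(plastic=False))
    print("with plasticity:   ", run_homeostasis(plastic=True))
```

With plasticity switched on, the average rate settles near `target` even though the excitatory drive keeps fluctuating; with it off, the rate simply follows the drive.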

In fact, we find a family of rules, and show that within the manifold (!) of theoretically optimal learning rules, some are easier to reach or more efficient than others.

If you wanna discuss this more, come to my @NeurIPSConf poster, and let's chat. neurips.cc/virtual/2020/p…

Also, check out this related work by @_jakobj et al. on learning functional rules in spiking neurons using a different optimisation technique. Quite complementary: arxiv.org/abs/2005.14149

Of course, this is not the end! More like the beginning. We are now looking at more biologically urgent questions and flexible rules with more parameters, so if you have an idea/data that you think could be promising to apply our framework to, please reach out!
