Here are the links to all the notes I made back in 2016 from the Andrew Ng Machine Learning course.

This was my first exposure to #MachineLearning. They helped me a lot, and I hope anyone who's just starting out and prefers handwritten notes can reference these 👇

Linear Regression

drive.google.com/file/d/1A1chyl…

Logistic Regression

drive.google.com/file/d/1AKE1Vj…

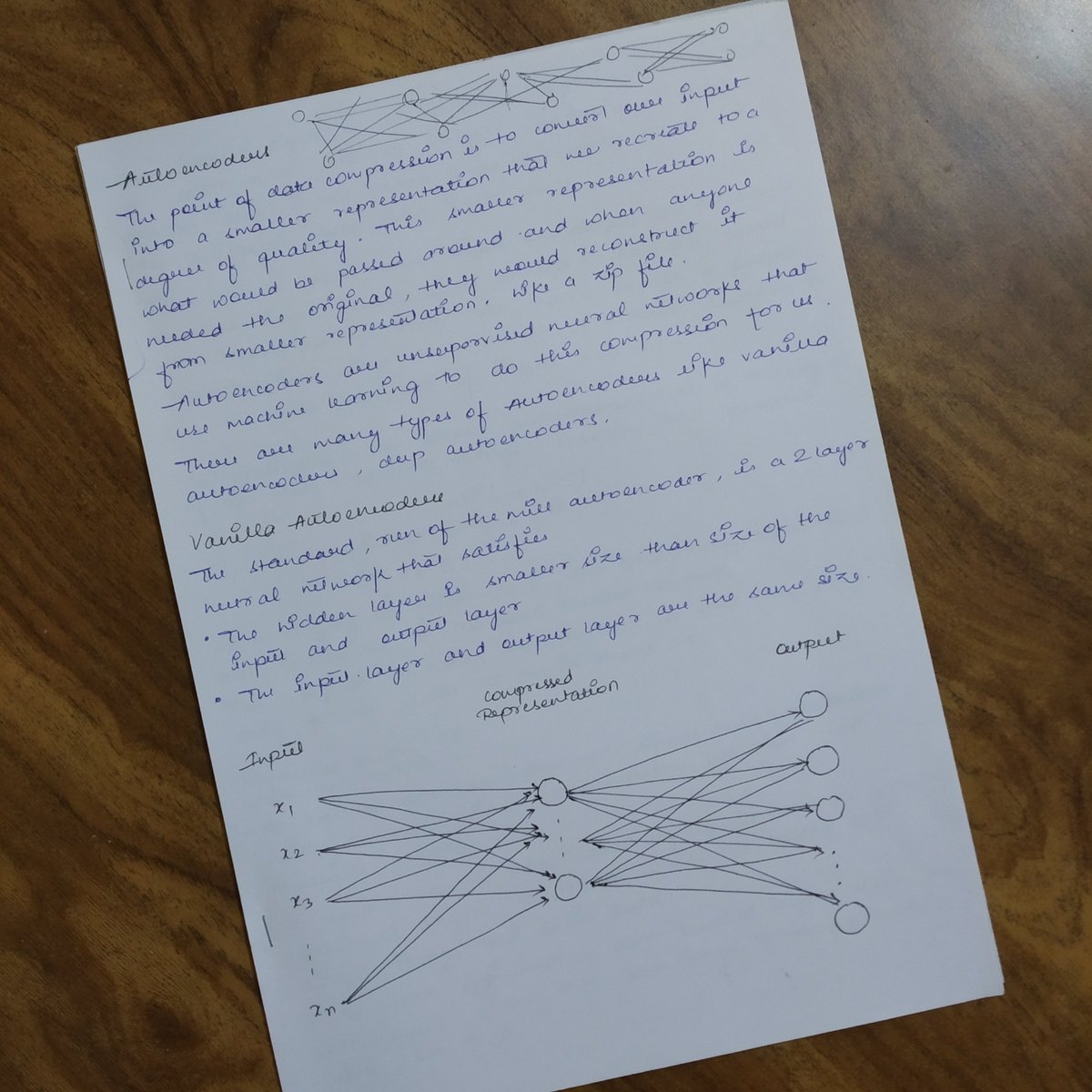

Unsupervised Learning

drive.google.com/file/d/1A2Ra60…

Neural Networks

drive.google.com/file/d/1AKl74N…

Anomaly Detection and Recommender Systems

drive.google.com/file/d/1A74ZEn…

Advice for applying ML

drive.google.com/file/d/1A3p9QQ…

I'll put out some other notes as well soon.

Hope it helps!
