It's a very big day for me today, as I'm officially releasing version 0.2.3 for the @AdaptNLP project. With it comes a slew of changes, but what exactly?

A preview:

#nbdev, @fastdotai, #fastcore, and something called a ModelHub for both #FlairNLP and @huggingface, let's dive in:

A preview:

#nbdev, @fastdotai, #fastcore, and something called a ModelHub for both #FlairNLP and @huggingface, let's dive in:

First up, #nbdev:

Thanks to the lib2nbdev package (novetta.github.io/lib2nbdev), we've completely restructured the library to become test-driven development with nbdev, with integration tests and everything else that comes with the workflow 2/9

Thanks to the lib2nbdev package (novetta.github.io/lib2nbdev), we've completely restructured the library to become test-driven development with nbdev, with integration tests and everything else that comes with the workflow 2/9

Next @fastdotai and #fastcore, I'm restructuring the internal inference API to rely on fastai's Learner and Callback system, to decouple our code and make it more modularized. With the fastai_minima package as well, only the basic Learner and Callback classes are being used: 3/9

What this allows is any new implementation or inference API requires less boilerplate code, and we can get it off the ground faster. For example, this is all that was needed to get Language Model text-generation going, where I completely override fastai's prediction: 4/9

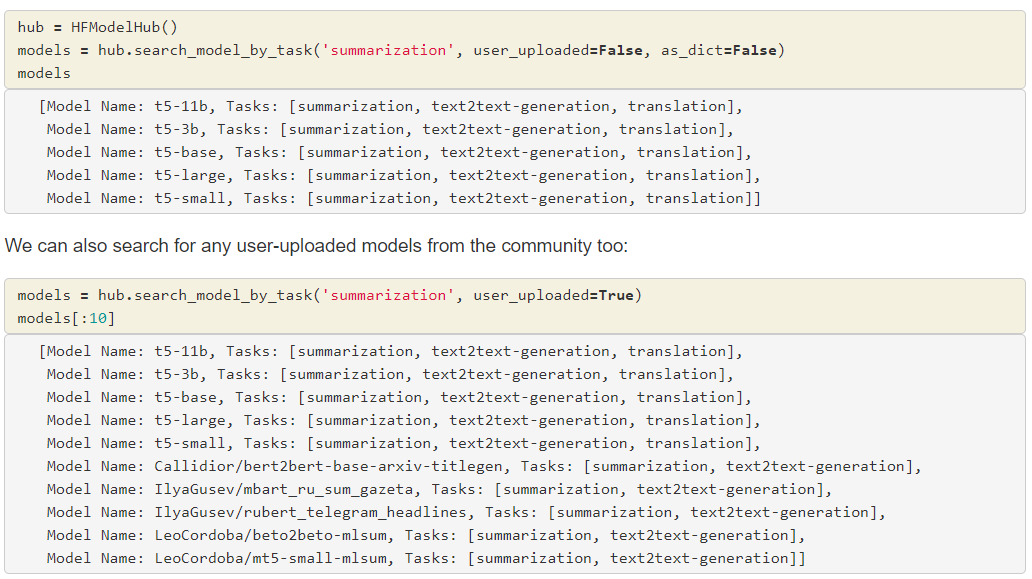

Next probably my favorite part of this package, the ModelHubs. Ever wanted to search all of @huggingface programmatically? I've made some improvements to their hub API to where you can search by model name, author, or even task, in just a few lines of code: 5/9

I've done the same for #flairNLP, where we can search for not only models in HuggingFace, but also any of the ones hosted by flair themselves:

Both of which let you search either by model name (which is the author part) or by task, named appropriately 7/9

Both of which let you search either by model name (which is the author part) or by task, named appropriately 7/9

Even if you don't use AdaptNLP for the actual inference, I do hope folks try out the ModelHub, as we've found it greatly eases the programmatic search API when trying to find models to use. 8/9

We also now officially support the latest versions of @PyTorch, Transformers, and FlairNLP.

That's my list of updates, and for me this amounts to my last few months of work over at @Novettasol :) I hope folks enjoy the package and update! 9/9

That's my list of updates, and for me this amounts to my last few months of work over at @Novettasol :) I hope folks enjoy the package and update! 9/9

• • •

Missing some Tweet in this thread? You can try to

force a refresh