I’ve had independent confirmation from multiple people that Apple is releasing a client-side tool for CSAM scanning tomorrow. This is a really bad idea.

These tools will allow Apple to scan your iPhone photos for photos that match a specific perceptual hash, and report them to Apple servers if too many appear.
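
To make the mechanics concrete, here is a minimal sketch of "match against a hash list, report past a threshold." This is not Apple's algorithm, which hasn't been published. It assumes a toy "average hash" as a stand-in perceptual hash, and the names `average_hash`, `count_matches`, and `should_report` are hypothetical.

```python
from typing import Iterable, List, Set

Image = List[List[int]]  # grayscale pixels, 0-255 (toy stand-in for a photo)


def average_hash(img: Image) -> int:
    """Toy perceptual hash: one bit per pixel, set if the pixel is above the mean."""
    pixels = [p for row in img for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def count_matches(photos: Iterable[Image], database: Set[int], max_distance: int = 0) -> int:
    """Count photos whose hash is within max_distance bits of any database hash."""
    return sum(
        1
        for img in photos
        if any(hamming(average_hash(img), h) <= max_distance for h in database)
    )


def should_report(photos: Iterable[Image], database: Set[int], threshold: int) -> bool:
    """Report only once the number of matching photos reaches the threshold."""
    return count_matches(photos, database) >= threshold


# Hypothetical data: one "flagged" image, a brightened copy of it, and an unrelated image.
flagged = [[10, 200], [200, 10]]
brightened_copy = [[20, 220], [210, 30]]
unrelated = [[200, 10], [10, 200]]

database = {average_hash(flagged)}
library = [brightened_copy, unrelated]
```

The point of a perceptual hash is that the brightened copy still matches even though its bytes differ, and the threshold means a single match alone doesn't trigger a report. Note that the device only ever sees the hash list, not the images behind it.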

As I understand it, this will initially be used to perform client-side scanning for cloud-stored photos. Eventually it could be a key ingredient in adding surveillance to encrypted messaging systems.

The ability to add scanning systems like this to E2E messaging systems has been a major “ask” by law enforcement the world over. Here’s an open letter signed by former AG William Barr and officials from other western governments. justice.gov/opa/pr/attorne…

This sort of tool can be a boon for finding child pornography on people’s phones. But imagine what it could do in the hands of an authoritarian government. google.com/amp/s/www.nyti…

The way Apple is doing this launch, they’re going to start with non-E2E photos that people have already shared with the cloud. So it doesn’t “hurt” anyone’s privacy.

But you have to ask why anyone would develop a system like this if scanning E2E photos wasn’t the goal.

But even if you believe Apple won’t allow these tools to be misused 🤞there’s still a lot to be concerned about. These systems rely on a database of “problematic media hashes” that you, as a consumer, can’t review.

These hashes are computed using a new and proprietary neural hashing algorithm that Apple has developed, and gotten NCMEC to agree to use.

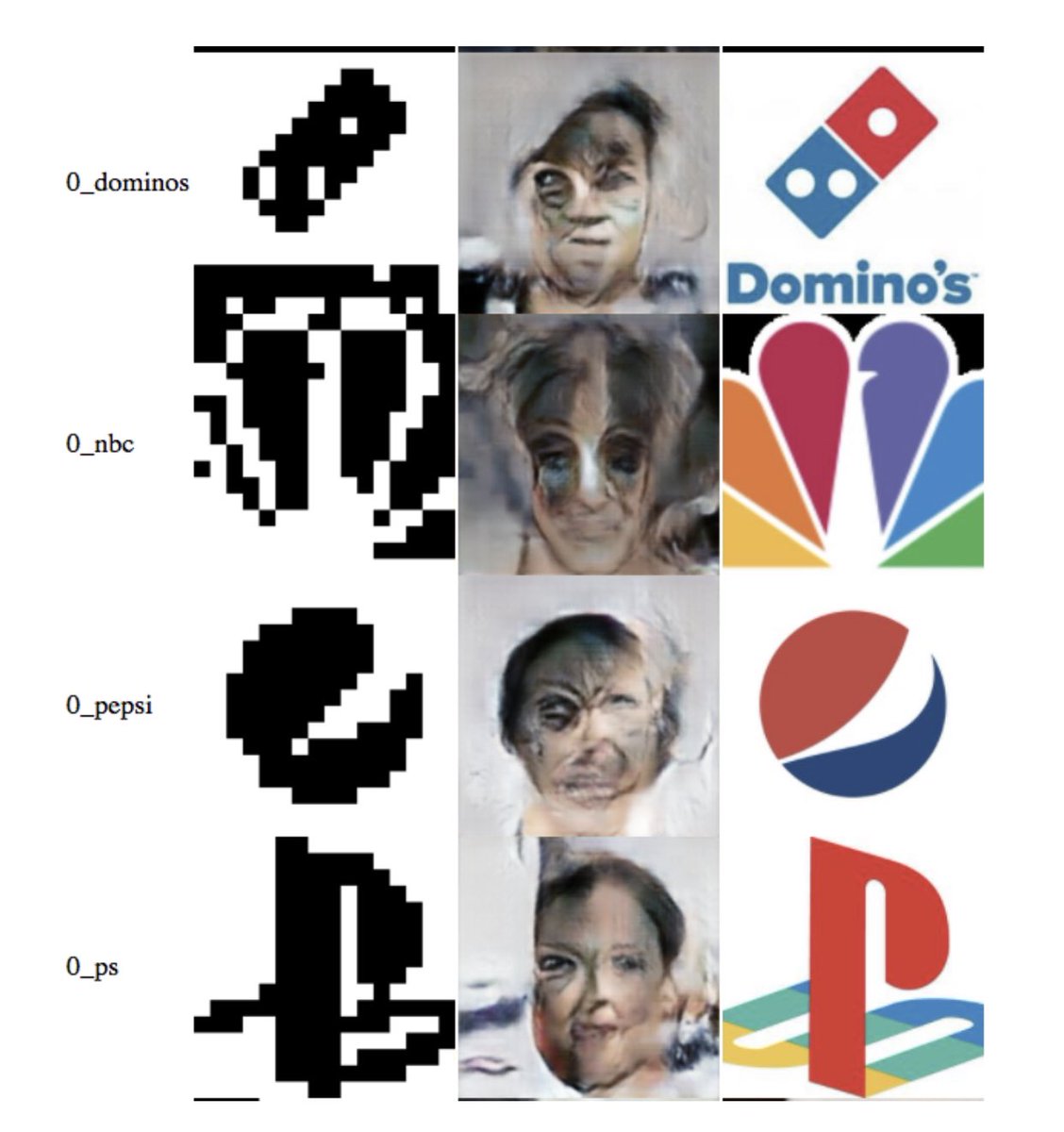

We don’t know much about this algorithm. What if someone can make collisions?

Imagine someone sends you a perfectly harmless political media file that you share with a friend. But that file shares a hash with some known child porn file?

These images are from an investigation using a much simpler hash function than the new one Apple’s developing. They show how machine learning can be used to find such collisions. towardsdatascience.com/black-box-atta…
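
For a sense of what a collision means here, a toy illustration: two very different "images" that hash to the same value. This assumes a simple average hash as a stand-in (again, not Apple's neural hash, and not the method from the linked investigation), and the function name and pixel values are made up for the example.

```python
from typing import List

Image = List[List[int]]  # grayscale pixels, 0-255


def average_hash(img: Image) -> int:
    """Toy perceptual hash: one bit per pixel, set if the pixel is above the mean."""
    pixels = [p for row in img for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


# A stark black-and-white checkerboard...
high_contrast = [[0, 255], [255, 0]]
# ...and a nearly flat gray square that a person would never confuse with it.
nearly_flat = [[100, 101], [101, 100]]

# Both reduce to the same bit pattern, so a hash list cannot tell them apart.
collision = average_hash(high_contrast) == average_hash(nearly_flat)
```

A real perceptual hash is far harder to collide by hand like this, which is why the worry is about attackers using machine learning to search for colliding inputs.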

The idea that Apple is a “privacy” company has bought them a lot of good press. But it’s important to remember that this is the same company that won’t encrypt your iCloud backups because the FBI put pressure on them. google.com/amp/s/mobile.r…
