Applying deep learning to pathology is quite challenging due to the sheer size of the slide images (gigapixels!).

A common approach is to divide images into smaller patches, for which deep learning features can be extracted & aggregated to provide a slide-level diagnosis (1/9)
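As a rough illustration of the patch-then-aggregate idea, here is a minimal sketch in plain Python. The function names, the toy image size, and the stand-in "features" are all hypothetical, and mean pooling is just one simple aggregation choice assumed here; real pipelines use a learned encoder per patch and often a learned aggregator.

```python
from statistics import fmean


def patch_grid(width, height, patch_size):
    """Top-left (x, y) corners of non-overlapping patches that fit fully inside the image."""
    return [
        (x, y)
        for y in range(0, height - patch_size + 1, patch_size)
        for x in range(0, width - patch_size + 1, patch_size)
    ]


def mean_pool(features):
    """Aggregate per-patch feature vectors into one slide-level vector by averaging."""
    return [fmean(column) for column in zip(*features)]


# Toy example: a 1024 x 768 "slide" cut into 256 x 256 patches.
corners = patch_grid(1024, 768, 256)

# Stand-in features: a hypothetical 2-D vector per patch.
# A real pipeline would run a CNN or ViT encoder on the patch pixels instead.
features = [[x / 1024, y / 768] for x, y in corners]

slide_vector = mean_pool(features)
print(len(corners), slide_vector)
```

A classifier would then take `slide_vector` as input to produce the slide-level prediction.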

Unfortunately, dividing into small patches limits the context to cell-level features and misses relevant features at other scales, such as larger-scale tissue organization. (2/9)

Additionally, it is difficult to model long-range dependencies with Transformers, because the very large number of patches makes the attention calculation computationally expensive. (3/9)
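To get a feel for the scale, here is a back-of-the-envelope sketch. The slide dimensions and patch size are illustrative assumptions, not figures from the thread, and the helper names are hypothetical. Standard self-attention compares every token with every other token, so the number of pairwise scores grows with the square of the patch count.

```python
def num_patches(width, height, patch_size):
    """Number of non-overlapping patches that fit fully inside the image."""
    return (width // patch_size) * (height // patch_size)


def attention_pairs(num_tokens):
    """Pairwise attention scores per head and per layer in standard self-attention."""
    return num_tokens * num_tokens


# Assumed sizes for illustration: a 100,000 x 100,000 pixel slide, 256 x 256 patches.
n = num_patches(100_000, 100_000, 256)
pairs = attention_pairs(n)

print(f"{n:,} patches -> {pairs:,} attention scores per head per layer")
```

Doubling the slide's side length quadruples the patch count and multiplies the attention scores by sixteen.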

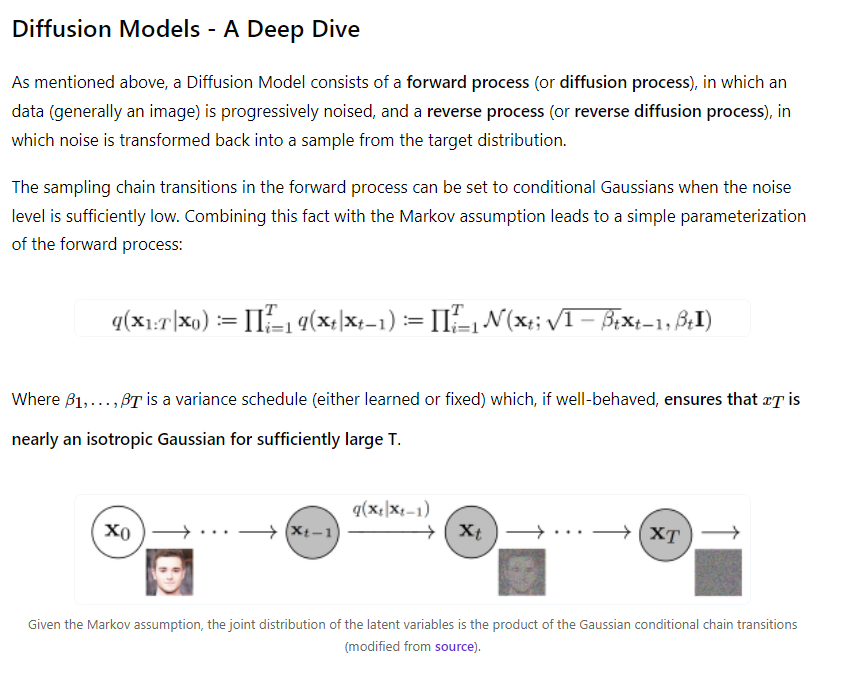

Recent work by @richardjchen et al. at #CVPR2022 introduced HIPT (Hierarchical Image Pyramid Transformer) to solve these challenges.

It consists of 3 separate ViTs applied at different scales. The output of each ViT is used as the input to the next. (4/9)
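The stacking idea can be sketched in a few lines of plain Python. This is not the HIPT implementation: `encode` is a hypothetical placeholder that averages its inputs where a real ViT would sit, and the group sizes are toy values chosen for illustration. What it shows is only the data flow, where each level's outputs become the next level's input tokens.

```python
from statistics import fmean


def encode(tokens):
    """Placeholder for a ViT: collapse a sequence of token vectors into one vector by averaging."""
    return [fmean(column) for column in zip(*tokens)]


def chunk(items, size):
    """Split a list into consecutive groups of `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def hierarchical_encode(small_tokens, tokens_per_patch, patches_per_region):
    # Level 1: groups of small tokens -> one embedding per patch.
    patch_embeddings = [encode(group) for group in chunk(small_tokens, tokens_per_patch)]
    # Level 2: groups of patch embeddings -> one embedding per region.
    region_embeddings = [encode(group) for group in chunk(patch_embeddings, patches_per_region)]
    # Level 3: all region embeddings -> one slide-level embedding.
    return encode(region_embeddings)


# Toy input: 32 two-dimensional tokens, grouped 4 per patch and 4 patches per region.
tokens = [[float(i), 1.0] for i in range(32)]
slide_embedding = hierarchical_encode(tokens, tokens_per_patch=4, patches_per_region=4)
print(slide_embedding)
```

Because each level only ever sees a short sequence, no single attention computation has to span every small token in the slide.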

Two of the ViTs are pretrained via self-supervised learning, specifically with the DINO framework. After pretraining the first ViT, its outputs can be used to pretrain the next. This is referred to as hierarchical pretraining. (5/9)

After the model is pretrained on thousands of slide images from various cancer types (the TCGA dataset), it can then be fine-tuned for specific tasks.

Compared to other models, HIPT had better performance (AUC score) for breast, lung, and kidney cancer classification (6/9)

Since these are Vision Transformers, they provide built-in interpretability: the attention heads can be visualized directly.

A pathologist was able to confirm based on these visualizations that the ViTs are indeed attending to medically-relevant features (7/9)

Overall, this was a very interesting paper and hopefully an important development for the field of computational pathology.

I also wonder what sorts of applications this architecture might have outside of pathology. (8/9)

Link to the paper → arxiv.org/abs/2206.02647

Link to the code → github.com/mahmoodlab/HIPT

Link to video →

Great work @richardjchen @kuanchen22 Yicong Li, @tiffanyytchen @AndrewTrister @rahulgk @ai4pathology! (9/9)
