A thread on the recent report published by @OpenAI: 🧵

Academic researchers often lack the introspection to see how the technology they help create can be abused through the word lists they suggest to AI developers, data scientists, and Trust and Safety teams at big tech companies in the United States.

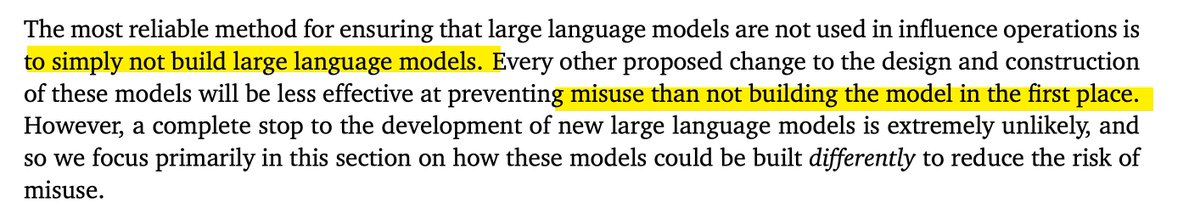

Direct attempt to influence policy through recommendation.

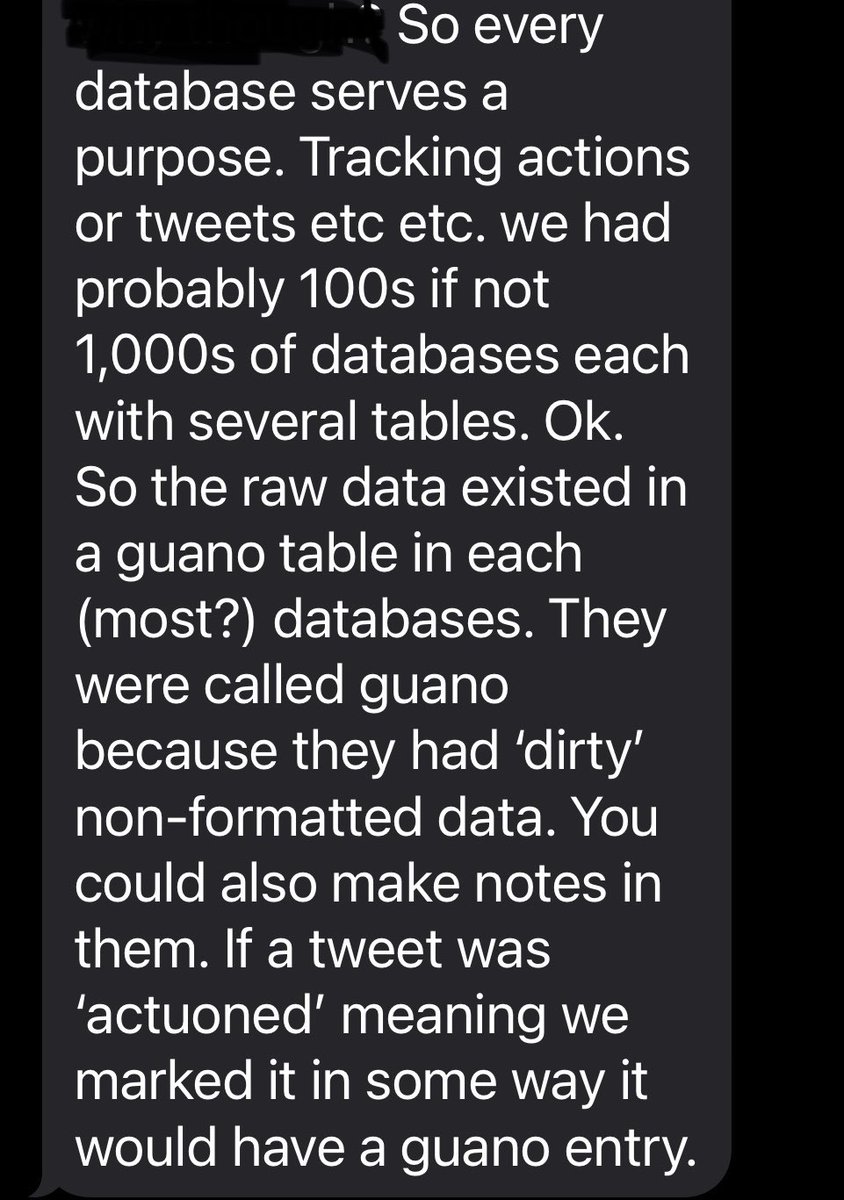

This is exactly what plays out at big tech companies.

Academic researchers suggest word lists to monitor for “misinfo.”

Trust and Safety & Data Scientists then follow the recs provided by the academic experts.

Aka: One option for the US is to monitor the term XX.

We find this term to be likely associated w/ misinfo.

Trust & Safety tells Data Scientists to add new misinfo word to param.

Data Science team adds word.

No one stops to question if the word was ever misinfo.