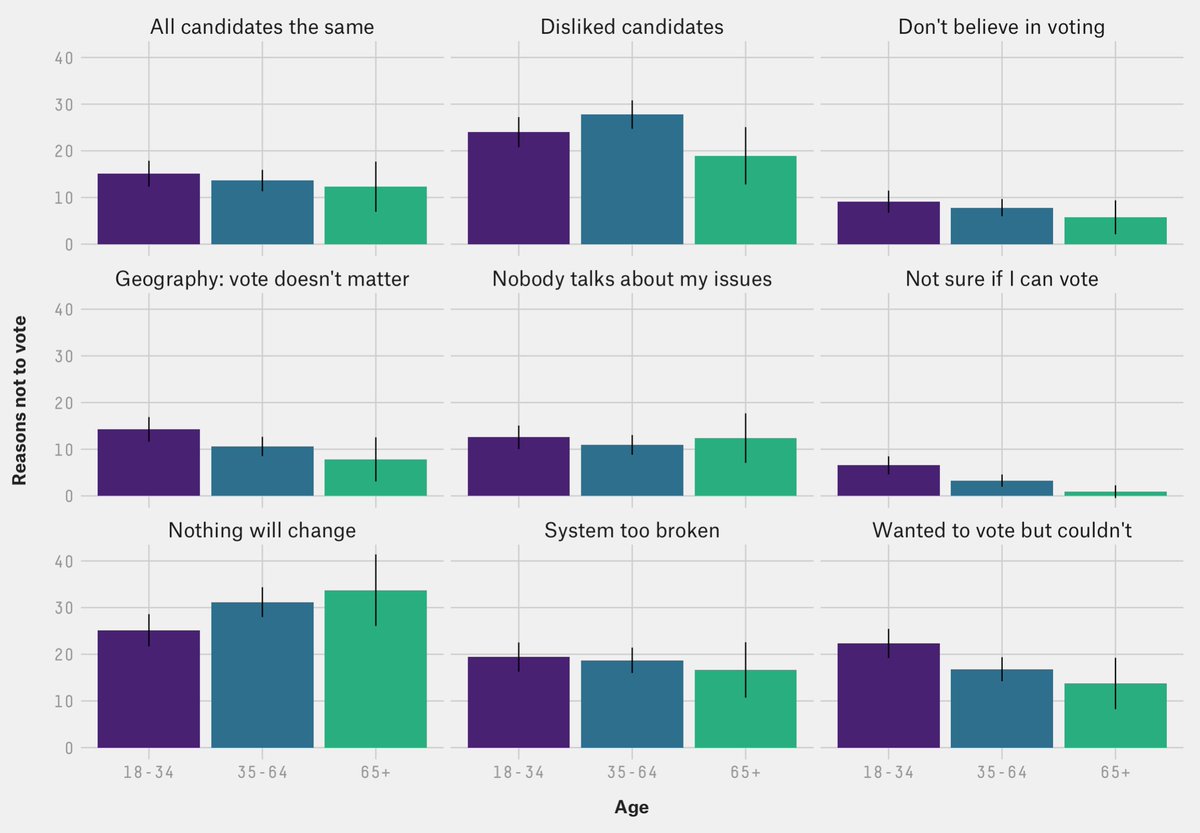

Why is turnout among young Americans so low? @ameliatd, @jazzmyth and I find that while people under 35 *are* more skeptical of the system, they're not apathetic. Instead, they're more likely to face structural barriers like not being able to get off work.

53eig.ht/31Tsh22

(+ bonus charts!) How do we know young people aren't more apathetic? Well, they're not significantly more likely to say they don't vote because the system is too broken, or because they don't believe in voting. But they *are* more likely to say they wanted to vote, but couldn't.

In fact, if anything, young people are *less* likely to say that they don't vote because nothing will change for people like them no matter who wins!

But young Americans are more likely than older Americans to say that changes to government are needed. And they feel less represented: many more say no one in elected office is like them.

And while all age groups think Republicans are more likely than Democrats to want people like them *not* to vote, that gap is particularly large among young people: 37% say Republicans don't want people like them to vote, compared with 17% who say the same of Democrats.

A fascinating stat that didn't make it into the article: young Americans aren't more enamored with army rule or autocracy than older ones, but they are a lot more positive about expert government.

And young people are much less likely than older ones to think that believing in God, displaying the flag, knowing the Pledge of Allegiance or supporting the military is important to being a good American. But they are more likely to think protesting is.
