💡The challenge: teach my iPhone to recognize sharks and fish in images without writing a single line of code.

Let's see how close we can get to #NoCode #mobile #ComputerVision in 2020. A how-to thread 👇 1/13

Step 1: Collecting images.

I went to the Omaha Zoo and spent a few hours wandering around the aquarium taking photos. 2/13

Step 2: Curating.

After looking through what I collected, I decided to train a model to detect the following critters:

🐠 fish

🦑 jellyfish

🐧 penguins

🦈 sharks

🐣 puffins

🐝☀️ stingrays

⭐️🐟 starfish

🧑 humans, and

🐢 turtles.

3/13

Step 3: Labeling.

I solicited help from a couple of friends and it only took us a few hours to draw boxes around the animals of interest in 638 images.

The final annotated dataset is available for download here: public.roboflow.com/object-detecti… 4/13

Step 4: Inspection.

I used Roboflow's Dataset Health Check to discover and fix a few problems:

• I didn't have enough examples of humans or turtles

• There were some typos in my labels

• And someone accidentally labeled some "fish" as "bannerfish".

5/13
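
For the curious: none of this needs code, but the kind of check a dataset health tool runs can be approximated in a few lines of Python. This is only a sketch, not Roboflow's actual implementation. The sample boxes, the `class_counts` and `suspicious_labels` helpers, and the "fewer than 2 boxes" threshold are all made up for illustration.

```python
from collections import Counter
from difflib import get_close_matches

# Hypothetical annotations: one (image, label) pair per drawn box.
boxes = [
    ("img1.jpg", "fish"), ("img1.jpg", "fish"), ("img2.jpg", "shark"),
    ("img2.jpg", "fish"), ("img3.jpg", "fsih"), ("img3.jpg", "bannerfish"),
    ("img4.jpg", "shark"), ("img4.jpg", "turtle"),
]

def class_counts(boxes):
    """Count how many boxes were drawn for each label."""
    return Counter(label for _, label in boxes)

def suspicious_labels(counts, expected):
    """Map every label outside the expected set to its closest expected label.

    Likely typos get a suggestion; labels with no close match map to None.
    """
    result = {}
    for label in counts:
        if label not in expected:
            match = get_close_matches(label, expected, n=1)
            result[label] = match[0] if match else None
    return result

expected = ["fish", "shark", "turtle"]  # assumed class list for this toy example
counts = class_counts(boxes)

# Classes with too few examples (the threshold of 2 is arbitrary here).
rare = [label for label in expected if counts[label] < 2]

# Labels that are not in the class list, with a suggested fix where one exists.
fixes = suspicious_labels(counts, expected)
```

Here `rare` flags the underrepresented class, and `fixes` surfaces both the typo and the unexpected "bannerfish" label so a human can decide what to do with them.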

Step 5: Image Processing.

I also used Roboflow to downsize my images from 12 megapixels to something more appropriate (416x416), and to enlarge the size of my training data by applying several random transformations to my images and annotations. 6/13

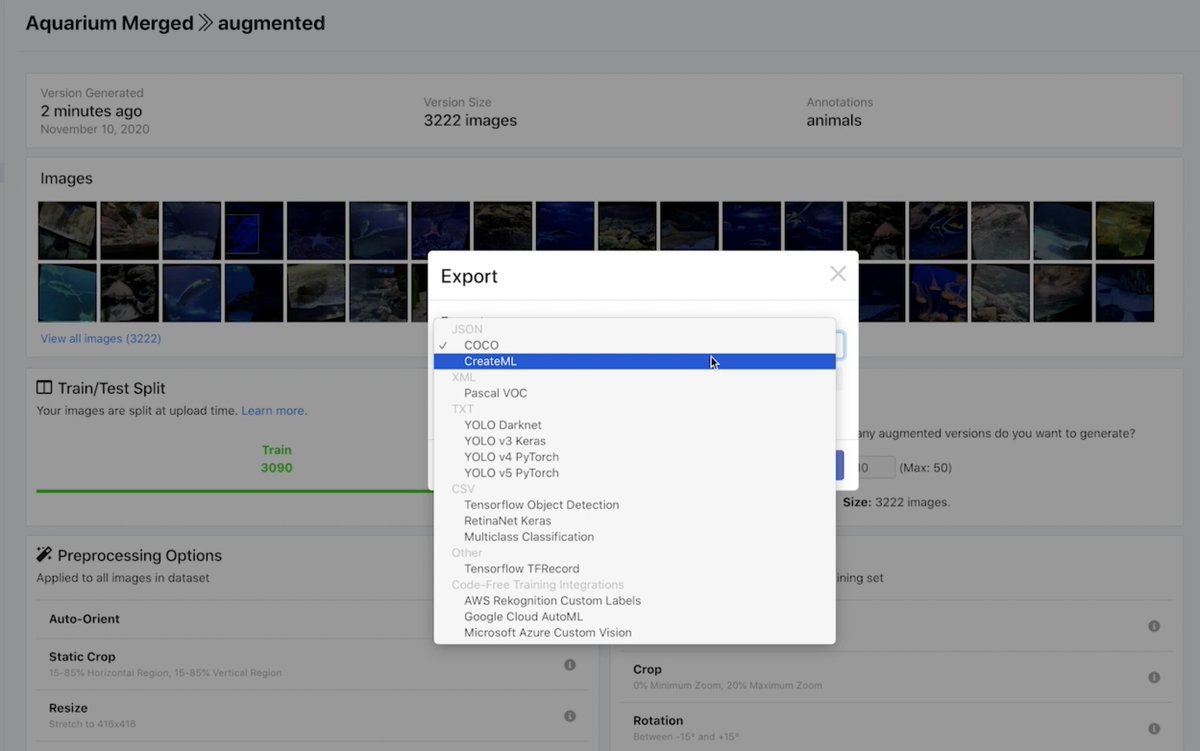
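
Under the hood, resizing and augmenting a detection dataset means the boxes have to move with the pixels. Here's a rough Python sketch of that bookkeeping, assuming center-based boxes (x, y, width, height) in pixels and a plain stretch to 416x416. The function names and the 4032x3024 source size are my own assumptions for illustration, not Roboflow's API.

```python
def resize_box(box, src_size, dst_size=(416, 416)):
    """Scale a center-based (x, y, w, h) box from src_size to dst_size.

    Assumes the image is stretched to fit, so x and y scale independently.
    """
    src_w, src_h = src_size
    dst_w, dst_h = dst_size
    x, y, w, h = box
    return (x * dst_w / src_w, y * dst_h / src_h,
            w * dst_w / src_w, h * dst_h / src_h)

def flip_box_horizontal(box, image_width):
    """Mirror a center-based box left to right; only the x center changes."""
    x, y, w, h = box
    return (image_width - x, y, w, h)

# A hypothetical box in a 12 MP photo (4032x3024 is an assumed resolution).
original = (1008.0, 756.0, 1008.0, 504.0)

# Step 1: downsize the box along with the image.
resized = resize_box(original, src_size=(4032, 3024))

# Step 2: one example augmentation, a horizontal flip of the resized image.
flipped = flip_box_horizontal(resized, image_width=416)
```

The same idea applies to other transformations: whatever you do to the image, you apply the matching geometry to every annotation.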

Step 6: Exporting.

A key part of being able to do this whole project with no code was downloading the dataset in the exact format I needed: Apple's CreateML JSON. 7/13
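
For reference, here's roughly what that format looks like. As far as I know, Create ML's object detection annotations are a JSON list with one entry per image, and each box is described by its center point plus width and height in pixels. The Python below is just a sketch to show the shape of the data: the filename and numbers are invented, and `to_createml` is a hypothetical helper, not part of any tool.

```python
import json

def to_createml(image_name, corner_boxes):
    """Build one Create ML style entry from corner-format boxes.

    corner_boxes holds (label, x_min, y_min, x_max, y_max) tuples in pixels.
    The output uses center x/y plus width/height, which is my understanding
    of what Create ML expects.
    """
    annotations = []
    for label, x_min, y_min, x_max, y_max in corner_boxes:
        annotations.append({
            "label": label,
            "coordinates": {
                "x": (x_min + x_max) / 2,
                "y": (y_min + y_max) / 2,
                "width": x_max - x_min,
                "height": y_max - y_min,
            },
        })
    return {"image": image_name, "annotations": annotations}

# One made-up image with a single shark box.
entry = to_createml("aquarium_001.jpg", [("shark", 100, 50, 300, 150)])

# The annotation file is a list of such entries, serialized as JSON.
createml_json = json.dumps([entry], indent=2)
```

In the no-code workflow the export step writes this file for you, so you never have to touch it by hand.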

Step 7: Importing into CreateML.

If you give it images and annotations in the right format, Apple's no-code training tool will spit out a custom machine learning model optimized for use with your iPhone's neural engine. 8/13

Step 8: Training a model!

It took about 6 hours for CreateML to go through my images 13,000 times. It learned bit by bit how to mimic the boxes we drew around the animals in my images. 9/13

Step 9: Checking the results.

CreateML will give you training metrics, but I find it much more useful to have a look at some example predictions on images the model has never seen before.

Certainly not perfect but not bad either! 10/13
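
If you do want a number to go with the eyeballing, a common way to score a predicted box against a hand-drawn one is intersection over union (IoU). Here's a minimal Python sketch, assuming corner-format boxes (x_min, y_min, x_max, y_max). The two boxes are invented, and this is the generic metric rather than anything pulled from CreateML's output.

```python
def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    # Corners of the overlapping rectangle, if there is one.
    ix_min = max(a[0], b[0])
    iy_min = max(a[1], b[1])
    ix_max = min(a[2], b[2])
    iy_max = min(a[3], b[3])

    # Clamp to zero so boxes that don't overlap get no intersection area.
    inter_w = max(0.0, ix_max - ix_min)
    inter_h = max(0.0, iy_max - iy_min)
    intersection = inter_w * inter_h

    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0

drawn = (0, 0, 100, 100)      # hypothetical hand-labeled box
predicted = (50, 0, 150, 100) # hypothetical model prediction, shifted right

score = iou(drawn, predicted)
```

A score of 1.0 means the prediction matches the drawn box exactly, and 0.0 means they don't overlap at all. The shifted box above lands at one third.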

Step 10: Packaging it up.

Luckily, Apple already did the heavy lifting for us and created a sample app that applies a CreateML-trained model to a live stream from your iPhone's camera.

All we have to do is replace their included model with our own. developer.apple.com/documentation/… 11/13

Step 11: Testing.

We're done! We trained a custom object detector from scratch on our own dataset and deployed it to our iPhone without writing a single line of code. 12/13

Want to try it for yourself?

Check out my no-code mobile object detection video on YouTube where I go through the full details and step-by-step instructions:

(Don't forget to like, subscribe, and ding the bell.) 13/13
