Greg Mills asks how people coordinate when they interact with each other.

#Protolang7

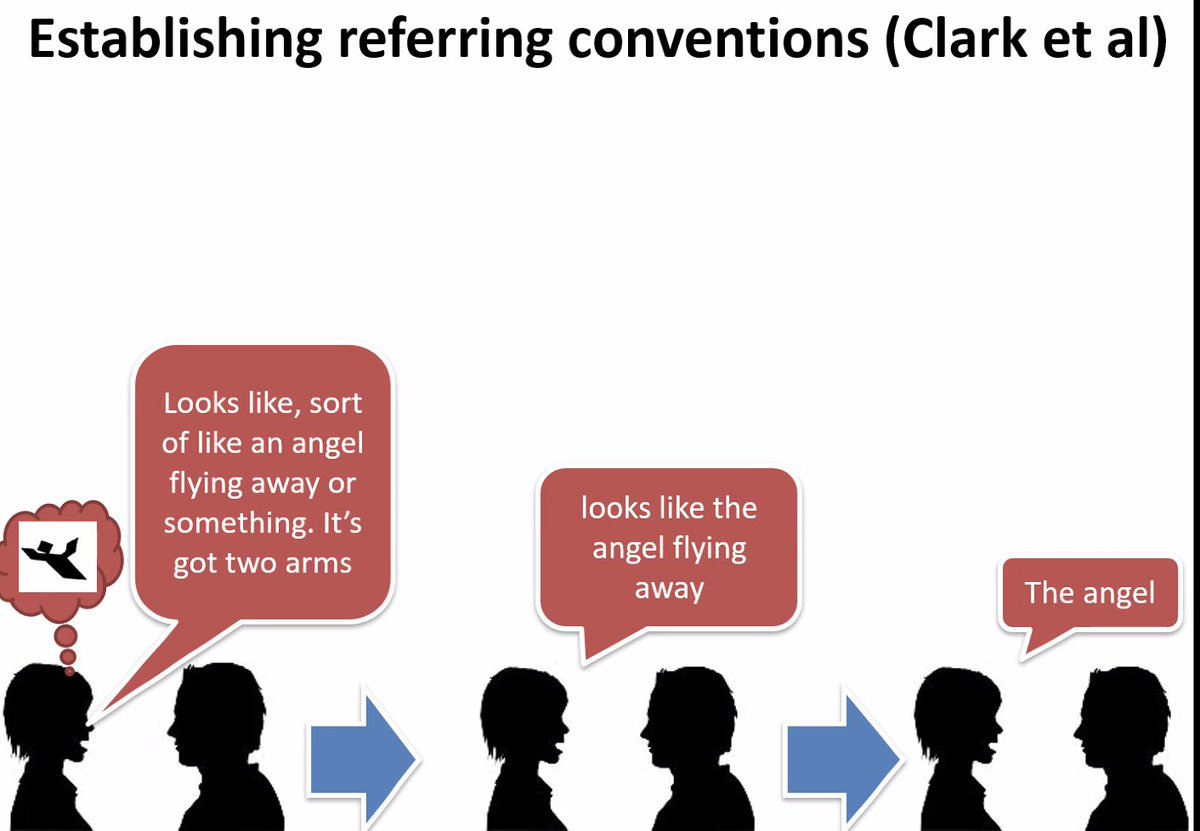

Usually we use reference games to study how conventions emerge to enable this. This usually leads to patterns and the emergence of conventions like new referring expressions (or signs in experimental semiotics).

BUT there are more fundamental coordination problems in dialogue that are actually very different from referential problems. He shows clips of people coordinating quite seamlessly on a street, and clips of botched high fives or tennis doubles, where coordination fails.

What fails is the timing, the turn-taking, signalling when to do what. We can use language to explicitly resolve this, using coordination expressions.

For example in hunting, actions need to be coordinated with signals like 'wait over there'. There are a large number of procedural expressions that are used in social coordination. X and Y here could be any task.

In psycholinguistics, however, this has hardly been studied, even though, e.g., in the tangram task 30% of speech content is procedural.

Procedural language is just as hard and ambiguous as referring expressions. Procedural conventions develop within the interaction, e.g., by routinising 'wait' to mean 'wait 5 seconds before doing x'.

Mills argues that procedural language is a blind spot in studying language in interactive settings, since most studies focus on referring expressions and are concerned with repetitions/entrainment of contributions.

So how does procedural coordination actually develop? And what happens when participants don't have language to coordinate? And which mechanisms are involved?

He presents a Guitar Hero-style task, where participants can't use language but have to coordinate, because only one person can see the instructions. They can communicate only through the buttons on the controller, and only some of the notes they can play are shared.

This creates many procedural coordination problems, like the 3rd one where they have to take turns pressing notes.

Participants do manage to develop their own language for solving this, where the procedural actions are the form of communication.

A similar version has been done with keyboards, with different conditions controlling what feedback participants could provide. Being able to signal only negative feedback is actually detrimental to coordination, and alignment was actually higher in unsuccessful dialogues.

What the experiments seem to suggest is that the solutions to procedural coordination problems are rapidly conventionalised, similar to referring expressions.

@hawkrobe perhaps interesting
